Uncertainty in the market forces manufacturing companies to increase the flexibility of their production. Industrial leaders have already implemented automated processes and connected high-tech equipment to perform live data analytics. However, the transactional data generated during production are often used only for short-term analysis to detect issues and are then deleted from enterprise systems because of high storage costs. This report explains our best practices for optimizing the digitalization of industrial production lines with Big Data technologies.

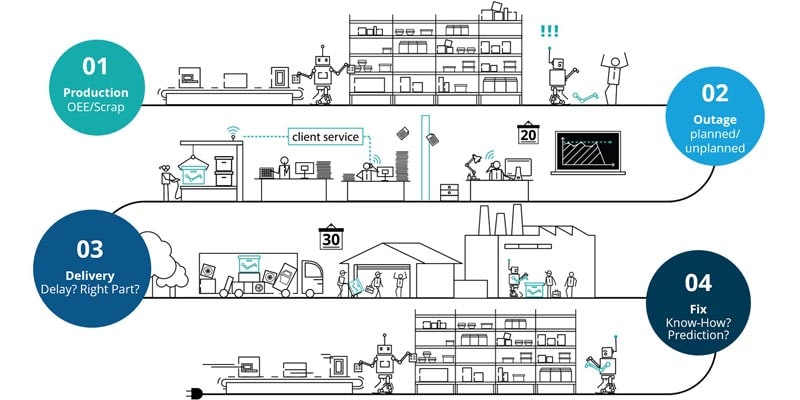

Current issues of industrial companies

Analysing data directly in the database of a running production line can cause production downtime and outages. In addition, many companies are installing more sensors to gain a comprehensive understanding of each production line. For example, sensors that detect temperature, air humidity and vibration strength are used to measure how environmental factors affect yield and product quality. Collecting ever more data with current enterprise systems not only leads to higher storage costs but also places a heavy burden on the computing power of those systems. As a result, no capacity is left for advanced analysis, and manufacturing data that could generate high-value information has to be discarded. To solve these problems, industrial companies need to adopt analysis and processing tools for the complete digitalization of industrial production lines.

What is a digital production line? And why do companies need it?

A digital production line integrates an organization’s business, design and operations to improve the production process.

On the one hand, it accelerates the production process by leveraging robots and detecting failures earlier; on the other hand, it makes the production design more flexible and thus creates capabilities for new product designs and geometries. To digitalize an existing automated production process, industrial companies need to go through the following steps:

1. Connect production logs or deploy sensors, RFID tags, GPS and robots in the factory

2. Build a digital backbone to connect each manufacturing part

3. Collect all manufacturing data, then enrich and analyze the data with Big Data technologies

4. Visualize the insights and KPIs on an intuitive role-based dashboard

According to our on-site experience with clients, the third and fourth steps are usually neglected even when automated and connected production lines already exist in the factory. Companies remain stuck in the earlier steps because they lack the know-how to build a Data Lake. From a technical perspective, deploying a Big Data cluster requires skills in programming, data structure management, machine learning, analytics and cloud computing.

Finding a team that is competent in all these areas is challenging. In addition, to realize the full value of a Data Lake, indices for production efficiency need to be implemented, for example OEE (Overall Equipment Effectiveness), Production Performance and Quality indices. Most corporations have not yet applied these indicators to their production lines. Experts with insight into both supply chains and business management are therefore required to design intuitive, role-based KPI dashboards.
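As a rough illustration of such an index, OEE is commonly computed as the product of availability, performance and quality. The sketch below follows that common definition; the `ShiftRecord` type, its field names and the sample figures are hypothetical and not taken from a specific client dashboard.

```python
from dataclasses import dataclass


@dataclass
class ShiftRecord:
    """Hypothetical per-shift figures; field names are illustrative assumptions."""
    planned_minutes: float      # planned production time
    downtime_minutes: float     # unplanned stops during the shift
    ideal_cycle_minutes: float  # ideal time needed to produce one unit
    total_units: int            # all units produced, good or rejected
    good_units: int             # units that passed the quality check


def oee(shift: ShiftRecord) -> dict:
    """Return availability, performance, quality and their product (OEE)."""
    run_minutes = shift.planned_minutes - shift.downtime_minutes
    if run_minutes <= 0 or shift.total_units <= 0:
        raise ValueError("shift has no run time or no output")
    availability = run_minutes / shift.planned_minutes
    performance = (shift.ideal_cycle_minutes * shift.total_units) / run_minutes
    quality = shift.good_units / shift.total_units
    return {
        "availability": availability,
        "performance": performance,
        "quality": quality,
        "oee": availability * performance * quality,
    }


if __name__ == "__main__":
    # Assumed sample shift: 8 hours planned, 1 hour of stops.
    print(oee(ShiftRecord(480, 60, 1.0, 378, 360)))
```

Keeping the three factors separate in the result lets a dashboard show which of them drags the overall figure down.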

Features of digital production lines

- Sensors/Gateways (smarter and more connected) – enable edge-computing, self-quality checks, offline mode and different data transfer methods

- Connectivity – connecting the factory floor from the sensor level to the cloud

- Big Data – an explosion in the amount and variety of data collected and processed at speed

- User interfaces – simplifying technology use (and increasing worker mobility)

- Robots – able to work “hand-in-hand” with employees

- Additive manufacturing – new product designs and geometries

Benefits of a digital production line

A good example is the transition from Preventive Maintenance to Predictive Maintenance, enabled by a digital production line. Both approaches aim to prevent unplanned downtime and the high costs of equipment failure. Preventive Maintenance is scheduled according to the average or expected downtime over the equipment lifecycle. Predictive Maintenance, in contrast, is based on the actual equipment condition and information from the whole production process. It combines information from both upstream and downstream in the supply chain and offers comprehensive insights into the usage of production lines. As a result, Predictive Maintenance promises cost savings through just-in-time maintenance actions and minimizes disruptions to system operations.
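The difference between the two approaches can be sketched in a few lines. This is a deliberately simplified illustration: the fixed service interval, the vibration limit and the linear trend extrapolation are assumptions chosen for the example, and a real predictive model would draw on far more process data.

```python
from typing import Sequence


def preventive_due(hours_since_service: float, interval_hours: float) -> bool:
    """Schedule-based rule: service is due once a fixed interval has elapsed."""
    return hours_since_service >= interval_hours


def predictive_due(readings: Sequence[float], limit: float, horizon: int) -> bool:
    """Condition-based rule: fit a least-squares line to recent sensor
    readings and check whether the projected value reaches `limit`
    within `horizon` future readings."""
    n = len(readings)
    if n < 2:
        return False  # not enough data to estimate a trend
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    numerator = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(readings))
    denominator = sum((x - mean_x) ** 2 for x in range(n))
    slope = numerator / denominator
    intercept = mean_y - slope * mean_x
    projected = intercept + slope * (n - 1 + horizon)
    return projected >= limit


if __name__ == "__main__":
    vibration = [2.0, 2.5, 3.0, 3.5]  # assumed vibration readings
    print(preventive_due(hours_since_service=300, interval_hours=500))
    print(predictive_due(vibration, limit=5.0, horizon=4))
```

In this example the schedule-based rule would wait for the fixed interval, while the condition-based rule reacts to the rising vibration trend.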

Why is a Data Lake indispensable?

A Data Lake is a repository that stores all structured and unstructured data at a large scale. It provides a platform to analyze and visualize data with different methods, such as machine learning tools, real-time analytics and reporting dashboards.

Although many enterprise system vendors now offer cloud storage and data analysis solutions, high storage costs and vendor lock-in effects are still the main reasons many of our customers choose an open-source Data Lake.

Compared to enterprise systems, a Data Lake has the following advantages:

- Reliable data storage with low cost and a flexible cost structure. Data in the cluster are automatically replicated and stored on multiple servers. When one of them fails, the cluster re-creates its data from the corresponding copies on other servers. This mechanism provides fault-tolerant and robust data storage. Furthermore, storing data in the cloud saves hardware investment and allows companies to pay only for the services they use. This leads to significantly lower costs compared to enterprise systems.

- Data analysis without disturbing the running production line. Because production data are extracted and analyzed in the Data Lake, the running production line is not affected. As a result, the extraction process not only avoids the risk of production downtime or outages but also creates a backup of the data in the production system.

- Guaranteed performance even with a large data volume. Advanced analytics of historical data needs high computation capacity, which is hard to achieve without Big Data processing models such as MapReduce. MapReduce processes the data in parallel on a group of servers. This allows computation power to be scaled horizontally by adding servers and significantly shortens the processing time of historical production data.

- Potential to achieve Predictive Analytics. Because of its benefits, Predictive Analytics is now regarded as the next evolutionary step for industrial maintenance. Imagine yourself as a production manager: reporting tools send you the results of your Predictive Analytics and alert you daily about the deterioration of your machines. In case of a sudden production failure, machine learning algorithms can generate an optimized plan for redistributing the workload onto other machines, which minimizes the damage until the spare part arrives. Without large amounts of historical data, Predictive Analytics cannot work efficiently, and Big Data technologies are currently the best solution for processing large data volumes.
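The map-reduce model mentioned in the list above can be sketched in plain Python. This is only a structural illustration under stated assumptions: the `(machine_id, temperature)` records are hypothetical, and a thread pool stands in for the servers of a real cluster, so it shows the shape of the computation rather than real cluster performance.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def map_partition(records):
    """Map step with local aggregation: for one partition, emit a partial
    (sum, count) of temperature readings per machine."""
    partial = defaultdict(lambda: [0.0, 0])
    for machine_id, temperature in records:
        partial[machine_id][0] += temperature
        partial[machine_id][1] += 1
    return dict(partial)


def reduce_partials(partials):
    """Reduce step: merge the partial sums and counts, then compute means."""
    totals = defaultdict(lambda: [0.0, 0])
    for partial in partials:
        for machine_id, (subtotal, count) in partial.items():
            totals[machine_id][0] += subtotal
            totals[machine_id][1] += count
    return {machine_id: s / c for machine_id, (s, c) in totals.items()}


def mean_temperature(partitions, workers=4):
    """Process each partition independently, then combine the results."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        partials = list(pool.map(map_partition, partitions))
    return reduce_partials(partials)


if __name__ == "__main__":
    # Assumed sample data, split into three partitions.
    partitions = [
        [("m1", 70.0), ("m2", 80.0)],
        [("m1", 72.0)],
        [("m2", 84.0), ("m2", 82.0)],
    ]
    print(mean_temperature(partitions))
```

Because each partition is processed independently, adding more workers (or, in a cluster, more servers) lets more partitions be handled at the same time.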

To sum up: sensors and robots form the physical part of a digital production line, while a Data Lake enables the production line to implement advanced analysis. The physical equipment and the information form a joint ecosystem. This ecosystem allows the factory to achieve higher efficiency without affecting normal production and frees companies from vendor lock-in, with the added benefit of low storage costs.

How we support the transformation: deploying the digital production line for our clients

The first step is to reinforce the physical deployment with sensors, RFID-Tags and actuators, such as robots. Different types of sensors help to collect data regarding transaction time, temperature, air humidity etc. and to save the data in enterprise systems.

The next step is to set up a cluster in the cloud and to analyze the production data.

For example, in the Deloitte Digital Factory we deployed a Cloudera cluster based on Amazon Web Services (AWS). As a cloud service provider, AWS offers a pay-per-use model that encourages companies to run more demo and test projects on a minimal budget. Cloudera significantly reduces the time needed to deploy a cluster of servers and provides a platform for various analysis tools. After deploying a cluster, companies are able to move historical and current production data from expensive enterprise systems into the cluster in the cloud. One way to do this is to use ETL (Extract, Transform, Load) software. In the Digital Factory we chose Talend as our ETL tool. Talend has more than 900 connectors; it allows users to extract data from databases and enterprise systems and to send the data into a Data Lake. Moreover, it provides users with a graphical interface for designing the data pipeline intuitively. The ETL process can be optimized by applying an appropriate CDC (Change Data Capture) mechanism. Change Data Capture is a set of software techniques that reads the database logs of insert, update and delete commands.

By applying the captured change records to the Data Lake, companies can keep the Data Lake up to date with minimal impact on the original systems. At this point the data are fully integrated in the cluster, but their value has not yet been extracted. To do this, data engineers and data scientists work together to clean, analyze and transform the unstructured sensor data into decision support information.
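The idea of replaying captured changes can be sketched as follows. The record layout (`op`, `key`, `row`) is a hypothetical format chosen for illustration; it is not the format produced by Talend or by any particular database log.

```python
def apply_changes(table: dict, changes: list) -> dict:
    """Replay ordered change records on a copy of a keyed table.

    Each change is a dict with an "op" ("insert", "update" or "delete"),
    a "key", and for inserts and updates a "row" with column values.
    """
    result = dict(table)  # leave the input table untouched
    for change in changes:
        op, key = change["op"], change["key"]
        if op == "insert":
            result[key] = dict(change["row"])
        elif op == "update":
            # Merge the changed columns into the existing row, if any.
            result[key] = {**result.get(key, {}), **change["row"]}
        elif op == "delete":
            result.pop(key, None)
        else:
            raise ValueError(f"unknown operation: {op!r}")
    return result


if __name__ == "__main__":
    # Assumed snapshot in the Data Lake and newly captured changes.
    lake_table = {1: {"status": "running", "temp": 70}}
    captured = [
        {"op": "insert", "key": 2, "row": {"status": "idle", "temp": 20}},
        {"op": "update", "key": 1, "row": {"temp": 75}},
        {"op": "delete", "key": 2},
    ]
    print(apply_changes(lake_table, captured))
```

Only the changed rows travel from the source system to the Data Lake, which is why this approach keeps the load on the original systems low.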

The last step is to derive intuitive insights from the data: the visual analytics group designs automated reports and KPI dashboards. The visualized information and insights are then sent back to the factory, so that managers and workers can detect product flaws and eliminate inefficiencies.

What our clients can expect from digital production lines

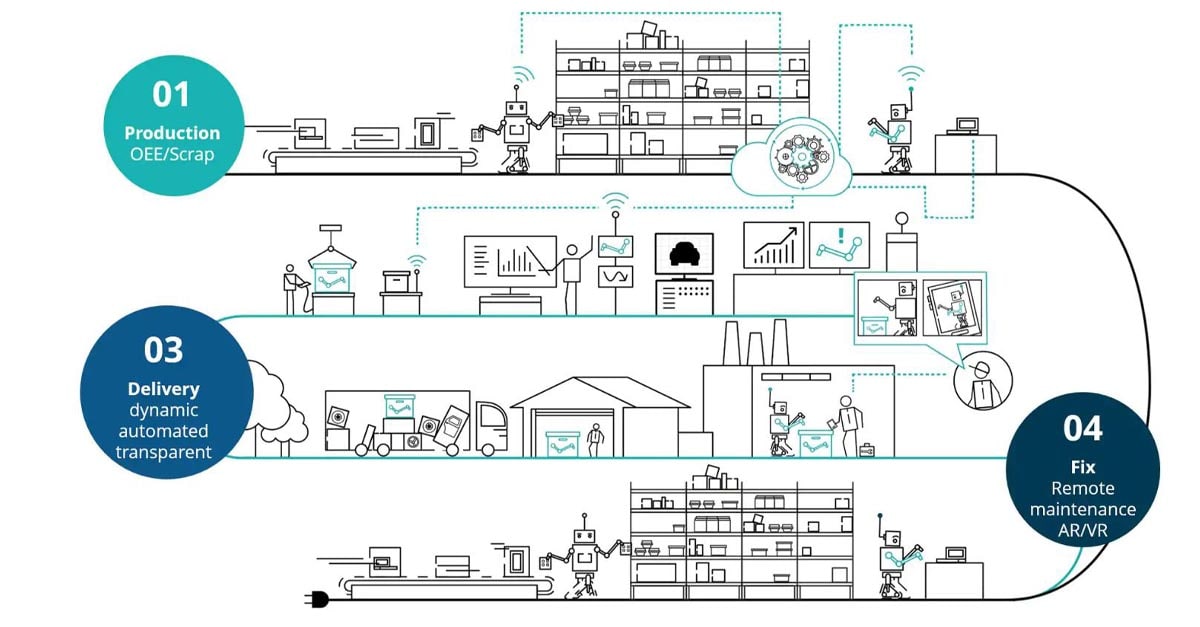

Digital production lines are the base of a smart factory. In our vision, a factory can only be smart if it and its components are:

- connected

- transparent

- agile

- predictive

Digital production lines allow this vision to become real: Visualization tools present operational statuses in dashboards and allow workers to quickly detect anomalies and track those issues precisely. KPI reports provide managers with a timely overview of the factories and thus accelerate decision-making.

Companies can now not only combine historical and current production data but also apply Predictive Maintenance, which significantly raises the level of automation in factories. Routine operations such as restocking and replenishment are done automatically with minimal human interaction. Predictive Maintenance also helps to identify supplier and quality issues in advance, so that factories can react faster and improve their production efficiency.

Information reduces the risk of re-planning production lines. Factory supervisors are now able to adapt the factory layout and equipment more flexibly to various product types and to determine the most efficient design with the help of machine learning models. As a result, adaptable scheduling and changeovers mitigate the impact of changing market trends and allow companies to deliver their best within a short time.

As a global leader in Analytics, Deloitte has more than 15,000 consultants with extensive analytics expertise and has successfully implemented more than 4,000 Analytics projects worldwide. Our broad industry expertise and partnerships underpin our ability to embed analytical decision-making. By developing strategies tailored to the specific requirements of your business, we help you turn everyday information in your company into actionable insights.

Deloitte Digital Factory

To ease the burden that the unexpected risks of digital transformation place on our clients and to show the untapped potential of a smart factory, Deloitte's Digital Factory delivers its own digital vision. It provides our clients with a tangible digital production line that demonstrates how the old and new worlds of supply chain and manufacturing operations combine.

The digitalization of industrial production lines has already been realized at the Digital Factory. It consists of four stations. Each of them integrates seamlessly with robots, sensors, RFID-Tags and other digital technologies such as smart glasses and 3D printers. All data generated during production are sent to a cluster of servers in the cloud and then cleaned and analyzed with trending Big Data tools.

Contact us

Andreas Staffen

Andreas Staffen is responsible for the IoT and IT Architecture (Smart Manufacturing) offering for Germany and has been shaping the digitalization of the supply chain since 2004. In this role he supports German, European and global companies in successfully implementing lean, integrated IT architectures for development and production. By putting the Industrie 4.0 concept into practice in the Deloitte Digital Factory, the effects on our clients' business models become tangible and their further design becomes easier to realize.

Florian Ploner

Florian Ploner is a Consulting Partner in Technology Strategy & Transformation. For more than 20 years he has been advising clients from industry and technology in Europe, America and Asia on global transformation programs. Florian is a proven expert in the areas of Global Delivery, Industry 4.0, Smart Factory and Go2Market. Florian leads a strategic global technology client and the Global Delivery Network for Consulting in Germany.