The last-mile problem: How data science and behavioral science can work together

Deloitte Review, Issue 16

27 January 2015

If we want to act on data to get fit or reduce heating bills, we need to understand not just the analytics of the data, but how we make decisions.

“Many programs and services are designed not for the brains of humans but of Vulcans. Thanks in large part to Kahneman and his many collaborators, pupils, and acolytes, this can and will change.” — Rory Sutherland, Ogilvy & Mather

Two overdue sciences

Near the end of Thinking, Fast and Slow, Daniel Kahneman wrote, “Whatever else it produces, an organization is a factory that produces judgments and decisions.”2 Judgments and decisions are at the core of two of the most significant intellectual trends of our time, and the one-word titles of their most successful popularizations have become their taglines. “Moneyball” is shorthand for applying data analytics to make more economically efficient decisions in business, health care, the public sector, and beyond. “Nudge” connotes the application of findings from psychology and behavioral economics to prompt people to make decisions that are consistent with their long-term goals.

Other than the connection with decisions, the two domains might seem to have little in common. After all, analytics is typically discussed in terms of computer technology, machine learning algorithms, and (of course) big data. Behavioral nudges, on the other hand, concern human psychology. What do they have in common?

Quite a bit, as it turns out. Business analytics and the science of behavioral nudges can each be viewed as different types of responses to the increasingly commonplace observation that people are predictably irrational. Predictive analytics is most often about providing tools that correct for mental biases, analogous to eyeglasses correcting for myopic vision. In the private sector, this enables analytically sophisticated competitors to grow profitably in the inefficient markets that exist thanks to abiding cultures of biased decision making in business. In government and health care, predictive models enable professionals to serve the public more economically and effectively.

The “behavioral insights” movement is based on a complementary idea: Rather than try to equip people to be more “rational,” we can look for opportunities to design their choice environments in ways that comport with, rather than confound, the actual psychology of decision making.3 For example, since people tend to dislike making changes, set the default option to be the one that people would choose if they had more time, information, and mental energy. (Save paper, for instance, by setting the office printer to default to double-sided printing. Similarly, retirement savings and organ donation programs achieve higher participation when people are enrolled by default and must opt out than when they must actively opt in.) Since people are influenced by what others are doing, make use of peer comparisons and “social proof” (for example, asking, “Did you know that you use more energy than 90 percent of your neighbors?”). Because people tend to ignore letters written in bureaucratese and fail to complete buggy computer forms, simplify the language and user interface. And since people tend to engage in “mental accounting,” allow people to maintain separate bank accounts for food money, holiday money, and so on.4

Richard Thaler and Cass Sunstein call this type of design thinking “choice architecture.” The idea is to design forms, programs, and policies that go with, rather than against, the grain of human psychology. Doing so does not restrict choices; rather, options are arranged and presented in ways that help people make day-to-day choices that are consistent with their long-term goals. In contrast with the hard incentives of classical economics, behavioral nudges are “soft” techniques for prompting desired behavior change.

Proponents of this approach, such as the Behavioural Insights Team and ideas42, argue that behavioral nudges should be part of policymakers’ toolkits.5 This article goes further and argues that the science of behavioral nudges should be part of the toolkit of mainstream predictive analytics as well. The story of a recent political campaign illustrates the idea.

Yes, they did

The 2012 US presidential campaign has been called “the first big data election.”6 Both the Romney and Obama campaigns employed sophisticated teams of data scientists charged with (among other things) building predictive models to optimize the efforts of volunteer campaign workers. The Obama campaign’s strategy, related in Sasha Issenberg’s book The Victory Lab, is instructive: The team’s data scientists built, and continually updated, models prioritizing voters in terms of their estimated likelihood of being persuaded to vote for Obama. The strategy was judicious: One might naively design a model to simply identify likely Obama voters. But doing so would waste resources and potentially annoy many supporters already intending to vote for Obama. At the opposite extreme, directing volunteers to the doors of hard-core Romney supporters would be counterproductive. The smart strategy was to identify those voters most likely to change their behavior if visited by a campaign worker.7
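The prioritization logic described above can be illustrated with a small sketch. It assumes a model has already produced, for each voter, an estimated probability of supporting the candidate with and without a visit; the field names and numbers are hypothetical and are not taken from the campaign's actual models. Ranking by the difference between the two estimates is one common way to express "most likely to change behavior if visited."

```python
from dataclasses import dataclass


@dataclass
class Voter:
    name: str
    p_support_if_visited: float      # hypothetical model estimate
    p_support_if_not_visited: float  # hypothetical model estimate


def persuadability(voter: Voter) -> float:
    """Estimated change in support attributable to a visit."""
    return voter.p_support_if_visited - voter.p_support_if_not_visited


def prioritize(voters, visits_available):
    """Return the voters most worth visiting, best first.

    Voters whose estimated response is zero or negative are skipped,
    even if volunteer capacity remains.
    """
    ranked = sorted(voters, key=persuadability, reverse=True)
    return [v for v in ranked[:visits_available] if persuadability(v) > 0]


voters = [
    Voter("committed supporter", 0.95, 0.94),  # already on board
    Voter("hard-core opponent", 0.02, 0.03),   # a visit may backfire
    Voter("undecided", 0.55, 0.35),
    Voter("leaning", 0.70, 0.60),
]

visit_list = prioritize(voters, visits_available=2)
print([v.name for v in visit_list])
```

Note that the committed supporter scores highest on raw support but near the bottom on persuadability, which is the distinction the paragraph draws between the naive model and the judicious one.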

So far this is a “Moneyball for political campaigns” story. A predictive model can weigh more factors—and do so more consistently, accurately, and economically—than the unaided judgment of overstretched campaign workers. Executing an analytics-based strategy enabled the campaign to derive considerably more benefit from its volunteers’ time.

But the story does not end here. The Obama campaign was distinctive in combining the use of predictive analytics with outreach tactics motivated by behavioral science. Consider three examples: First, campaign workers would ask voters to fill out and sign “commitment cards” adorned with a photograph of Barack Obama. This tactic was motivated by psychological research indicating that people are more likely to follow through on actions that they have committed to. Second, volunteers would also ask people to articulate a specific plan to vote, even down to the specific time of day they would go to the polls. This tactic reflected psychological research suggesting that forming even a simple plan increases the likelihood that people will follow through. Third, campaign workers invoked social norms, informing would-be voters of their neighbors’ intentions to vote.8

The Obama campaign’s combined use of predictive models and behavioral nudge tactics suggests a general way to enhance the power of business analytics applications in a variety of domains.9 It is an inescapable fact that no model will provide benefits unless appropriately acted upon. Regardless of application, the implementation must be successful in two distinct senses: First, the model must be converted into a functioning piece of software that gathers and combines data elements and produces a useful prediction or indication with suitably short turnaround time.10 Second, end users must be trained to understand, accept, and appropriately act upon the indication.

In many cases, determining the appropriate action is, at least in principle, relatively straightforward. For example, if an analysis singles out a highly talented yet underpaid baseball player, scout him. If an actuarial model indicates that a policyholder is a risky driver, set his or her rates accordingly. If an emergency room triage model indicates a high risk of heart attack, send the patient to intensive care. But in many other situations, exemplified by the challenge of getting out the vote, a predictive model can at best point the end user in the right direction. It cannot suggest how to prompt the desired behavior change.

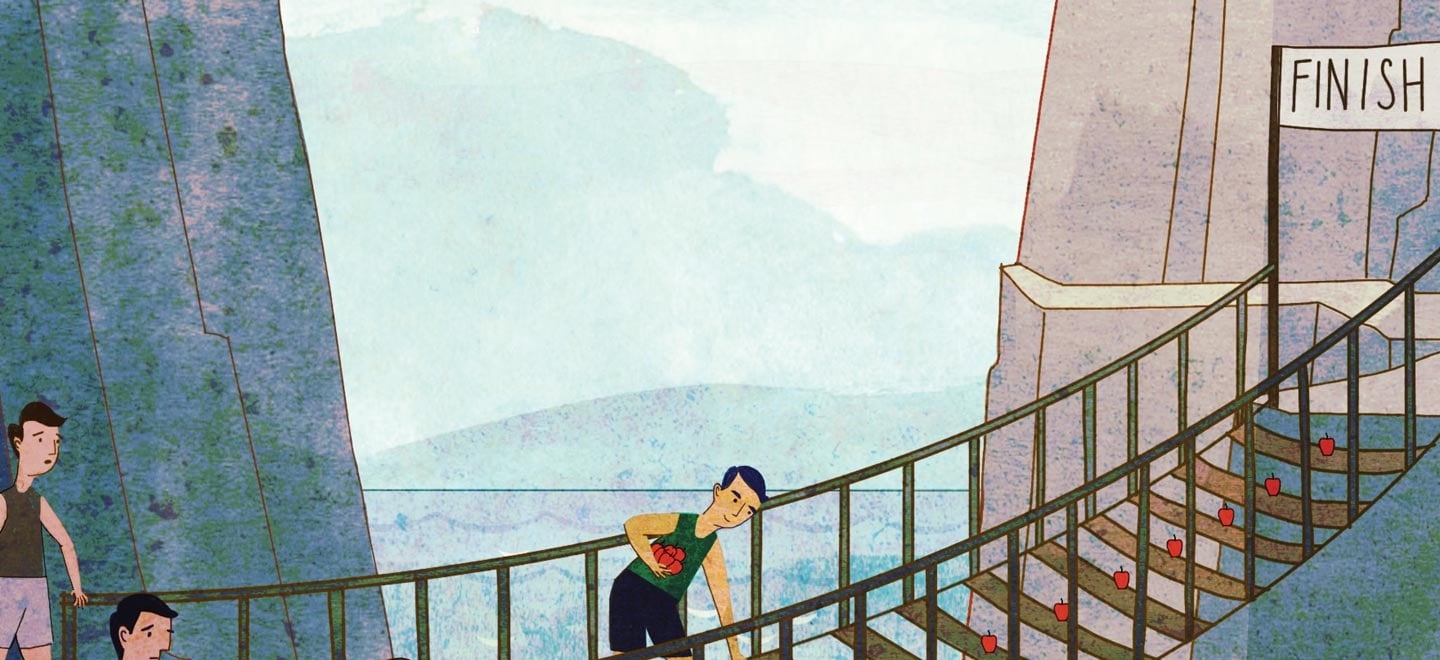

I call this challenge “the last-mile problem.” The suggestion is that just as data analytics brings scientific rigor to the process of estimating an unknown quantity or making a prediction, employing behavioral nudge tactics can bring scientific rigor to the (largely judgment-driven) process of deciding how to prompt behavior change in the individual identified by a model. When the ultimate goal is behavior change, predictive analytics and the science of behavioral nudges can serve as two parts of a greater, more effective whole.

Push the worst, nudge the rest

Once one starts thinking along these lines, other promising applications come to mind in a variety of domains, including public sector services, behavioral health, insurance, risk management, and fraud detection.

Supporting child support

Several US states either have implemented or intend to implement predictive models designed to help child support enforcement officers identify noncustodial parents at risk of falling behind on their child support payments.11 The goal is to enable child support officers to focus more on prevention and hopefully less on enforcement. The application is considered a success, but perhaps more could be done to achieve the ultimate goal of ensuring timely child support payments.12 The Obama campaign’s use of commitment cards suggests a similar approach here. For example, noncustodial parents identified as at-risk by a model could be encouraged to fill out commitment cards, perhaps adorned with their children’s photographs. In addition, behavioral nudge principles could be used to design financial coaching efforts.13

Taking the logic a step further, the model could also be used to identify more moderate risks, perhaps not in immediate need of live visits, who might benefit from outreach letters. Various nudge tactics could be used in the design of such letters. For example, the letters could address the parent by name, be written in colloquial and forthright language, and perhaps include details specific to the parent’s situation. Evidence from behavioral nudge field experiments in other applications even suggests that printing such letters on colored paper increases the likelihood that they will be read and acted upon. There is no way of knowing in advance which (if any) combination of tactics would prove effective.14 But randomized controlled trials (RCTs) could be used to field-test such letters on treatment and control groups.
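As a rough illustration of how such a field test might be read, the sketch below compares the on-time payment rate in a group that received a redesigned letter with the rate in a control group, using a standard two-proportion z-test. The counts are invented for the example, and the choice of test is an assumption; a real trial would need a pre-specified design and sample size.

```python
import math


def two_proportion_z(success_t, n_t, success_c, n_c):
    """Compare treatment and control success rates.

    Returns (difference in rates, z statistic, two-sided p-value),
    using the pooled-variance normal approximation.
    """
    p_t = success_t / n_t
    p_c = success_c / n_c
    pooled = (success_t + success_c) / (n_t + n_c)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_t + 1 / n_c))
    z = (p_t - p_c) / se
    # Two-sided tail probability of a standard normal beyond |z|.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return p_t - p_c, z, p_value


# Hypothetical counts: parents who paid on time out of those mailed.
lift, z, p_value = two_proportion_z(
    success_t=260, n_t=1000,  # redesigned letter
    success_c=220, n_c=1000,  # standard letter
)
print(f"lift={lift:.3f}, z={z:.2f}, p={p_value:.3f}")
```

The approximation assumes reasonably large groups and breaks down if the pooled rate is 0 or 1, in which case the standard error is zero.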

The general logic common to the child support and many similar applications is to use models to deploy one’s limited workforce to visit and hopefully ameliorate the highest-risk cases. Nudge tactics could help the case worker most effectively prompt the desired behavior change. Other nudge tactics could be investigated as a low-cost, low-touch way of prompting some medium- to high-risk cases to “self-cure.” The two complementary approaches refine the intuitive process case workers go through each day when deciding whom to contact and with what message. Essentially the same combined predictive model/behavioral nudge strategy could similarly be explored in workplace safety inspections, patient safety, child welfare outreach, and other environments.
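The "push the worst, nudge the rest" logic can be sketched as a simple triage rule. The example assumes each case already has a model risk score between 0 and 1; the capacity figure, the letter threshold, and the case labels are hypothetical choices for illustration only.

```python
def triage(risk_scores, visit_capacity, letter_threshold):
    """Assign each case an outreach action based on its risk score.

    The highest-scoring cases, up to the number of visits the workforce
    can make, get a live visit. Remaining cases at or above the letter
    threshold get a low-cost nudge letter. Everyone else gets no outreach.
    """
    ranked = sorted(risk_scores, key=risk_scores.get, reverse=True)
    plan = {}
    for rank, case_id in enumerate(ranked):
        if rank < visit_capacity:
            plan[case_id] = "visit"
        elif risk_scores[case_id] >= letter_threshold:
            plan[case_id] = "letter"
        else:
            plan[case_id] = "no outreach"
    return plan


scores = {"A": 0.91, "B": 0.34, "C": 0.62, "D": 0.78, "E": 0.12}
plan = triage(scores, visit_capacity=1, letter_threshold=0.5)
print(plan)
```

Tying visits to capacity rather than to a fixed score cutoff reflects the point that the workforce, not the model, is the binding constraint.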

Let’s keep ourselves honest

Similar ideas can motivate next-generation statistical fraud detection efforts. Fraud detection is among the most difficult data analytics applications because (among other reasons) it is often the case that not all instances of fraud have been flagged as such in historical databases. Furthermore, fraud itself can be an inherently ambiguous concept. For example, much automobile insurance fraud takes the form of opportunistic embellishment or exaggeration rather than premeditated schemes. Such fraud is often referred to as “soft fraud.” Fraud “suspicion score” models inevitably produce a large proportion of ambiguous indications and false positives. Acting upon a fraud suspicion score can therefore be a subtler task than acting on, for example, child welfare or safety inspection predictive model indications.

Behavioral nudge tactics offer a “soft touch” approach that is well suited to the ambiguous nature of much fraud detection work. Judiciously worded letters could be crafted to achieve a variety of fraud mitigation effects. First, letters that include specific details about the claim and also remind the claimant of the company’s fraud detection policies could achieve a “sentinel effect” that helps ward off further exaggeration or embellishment. If appropriate, letters could inform individuals of a “lottery” approach to random fraud investigation. Such an approach is consistent with two well-established psychological facts: People are averse to loss, and they tend to exaggerate small probabilities, particularly when the size of the associated gain or loss is large and comes easily to mind.15

A second relevant finding of behavioral economics is that people’s actions are influenced not only by external (classically economic) punishments and rewards but by an internal reward system as well. For example, social norms exert material influences on our behaviors: People tend to use less energy and fewer hotel towels when informed that people like them tend to conserve. Physician report cards have been found to promote patient safety because they prompt physicians to compare their professional conduct to that of their peers and trigger such internal, noneconomic rewards as professional pride and the pleasure of helping others.16 Similarly, activating the internal rewards system of a potential (soft) fraudster might provide an effective way of acting upon ambiguous signals from a statistical fraud detection algorithm. Behavioral economic research suggests that a small amount of cheating flies beneath the radar of people’s internal reward mechanism. Dan Ariely calls this a “personal fudge factor.” In addition, people simply deceive themselves when committing mildly dishonest acts.17 Such nudge tactics as priming people to think about ethical codes of honor and contrasting their actual behavior with their (honest) self-images are noneconomic levers for promoting honest behavior.

Physical and financial health

Keeping health care utilization under control is a major goal of both predictive analytics and behavioral economics. In the United States alone, health expenditures topped $2.8 trillion in 2013, accounting for more than 17 percent of the country’s GDP.18 Furthermore, chronic diseases, exacerbated by unhealthy lifestyles, account for a startling proportion of this spending.19 All of this makes health spending an ideal domain for applying predictive analytics: The issue is one of utmost urgency; unhealthy behaviors can be predicted using behavioral and lifestyle data; and models are capable of singling out small fractions of individuals accounting for a disproportionate share of utilization. For example, in his New Yorker article “The Hot Spotters,” Atul Gawande described a health utilization study in which the highest health utilizers, 5 percent of the total, accounted for approximately 60 percent of the total spending of the population.20
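The kind of concentration described above is straightforward to measure once per-person spending is available. The sketch below computes the share of total spending attributable to the highest-cost fraction of a population; the cost figures are made up to produce a similar pattern and are not data from the study Gawande describes.

```python
def top_share(costs, top_fraction):
    """Share of total cost incurred by the highest-cost fraction of people."""
    ordered = sorted(costs, reverse=True)
    k = max(1, round(len(ordered) * top_fraction))  # at least one person
    return sum(ordered[:k]) / sum(ordered)


# Hypothetical population of 100: five very high utilizers, 95 typical ones.
annual_costs = [60_000] * 5 + [2_000] * 95

share = top_share(annual_costs, top_fraction=0.05)
print(f"Top 5% of members account for {share:.0%} of spending")
```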

Of course, identifying current—and future—high utilizers is crucial, but it’s not the ultimate goal. Models can point us toward those who eat poorly, don’t exercise enough, or are unlikely to stick to their medical treatments, but they do not instruct us about which interventions prompt the needed behavior change. Once again, behavioral economics is the natural framework to scientifically attack the last-mile problem of going from predictive model indication to the desired action.

In contrast with the building inspection and fraud detection examples, it is unlikely that purely economic incentives are sufficient to change the behavior of the very worst risks. In this context, the worst risks are those with multiple chronic diseases. A promising behavioral strategy, described in Gawande’s article, is assigning health coaches to high-utilizing patients who need personalized help to manage their health.

Such coaches are selected based more on attitudes and cultural affinities with the patient than on medical training. Indeed, this suggests a further data science application: Use the sort of analytical approaches employed by online matchmaking services to hire and match would-be health coaches to patients based on personality and cultural characteristics. Gawande recounts the story of a diabetic, obese woman who had suffered three heart attacks. After working with a health coach, she managed to leave her wheelchair and began attending yoga classes. Asked why she would listen to her health coach and not her husband, the patient replied, “Because she talks like my mother.”21 In behavioral economics, “messenger effects” refer to the tendency to be influenced by a message’s source, not just its content.

Closely analogous ideas can be pursued in such arenas as financial health and back-to-work programs. For example, a recent pilot study of a “financial health check” program reported a 21 percent higher savings rate compared with a control group. The health check was a one-hour coaching session designed to address such behavioral bottlenecks as forgetfulness, burdensome paperwork, lack of self-control, and letting short-term pleasures trump long-term goals.22 Predictive models (similar to credit-scoring models) could be built to identify in advance those who most need such services. As with the child support case study, this would enable prevention as well as remediation. But behavioral science is needed to identify the most effective ways to provide the coaching.

In 3D: Data meets digital meets design

The discussion so far has focused on behavioral nudge tactics as a way of bridging the gap between predictive model indications and the appropriate actions. But nudge tactics are more commonly viewed in the context of a more everyday last-mile problem: the gap between people’s long-term intentions and their everyday actions. An overarching theme of behavioral economics is that people’s short-term actions are often at odds with their own long-term goals. We suffer inertia and information overload when presented with too many choices; we regularly put off until “tomorrow” following up on important diet, exercise, and financial resolutions; and we repeatedly fool ourselves into believing that it’s okay to indulge in something risky “just this once.”23

Just as behavioral science can help overcome the last-mile problem of predictive analytics, perhaps data science can assist with the last-mile problem of behavioral economics: In certain contexts, useful nudges can take the form of digitally delivered, analytically constructed “data products.”

Consider that much of what we call “big data” is in fact behavioral data. These are the “digital breadcrumbs” that we leave behind as we go about our daily activities in an increasingly digitally mediated world.24 They are captured by our computers, televisions, e-readers, smartphones, self-tracking devices, and the data-capturing “black boxes” installed in cars for insurance rating. If we refocus our attention from data capture to data delivery, we can envision “data products,” delivered via apps on digital devices, designed to help us follow through on our intentions. Behavioral economics supplies some of the design thinking needed for such innovations. The following examples illustrate this idea.

Driving behavior change

Viewed through a traditional lens, the telematics black-box devices increasingly used by insurance companies are the ultimate actuarial segmentation machine. They can capture fine-grained details about how much or abruptly we drive, accelerate, change lanes, turn corners, and so on.

But in our digitally mediated world of big data, it is possible to consider new business models and product offerings. After all, if the data are useful in helping an insurance company understand a driver’s risk, they could also be used to help the driver to better understand—and control—his or her own risk. Telematics data could inform periodic “report cards” that contain both judiciously selected statistics and contextualized composite risk scores informing people of their performance. Such reports could help risky drivers better understand (and hopefully improve) their behavior, help beginner drivers learn and improve, and help older drivers safely remain behind the wheel longer.

Doing so would be a way of countering the “overconfidence bias” that famously clouds drivers’ judgment. Most drivers rate themselves as better than average, though a moment’s reflection indicates this couldn’t possibly be true.25 Peer effects could be another effective nudge tactic. For example, being informed that one’s driving is riskier than a large majority of one’s peers would likely prompt safer driving.
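A peer comparison of this kind could be computed in a few lines. The sketch assumes each driver has a composite risk score in which higher means riskier; the scoring scale, the peer group, and the message wording are all hypothetical.

```python
def percent_of_peers_safer(driver_score, peer_scores):
    """Percentage of peers whose risk score is lower (safer) than the driver's."""
    safer = sum(1 for score in peer_scores if score < driver_score)
    return 100 * safer / len(peer_scores)


# Hypothetical composite risk scores for ten peers (higher = riskier).
peers = [12, 25, 31, 40, 47, 55, 63, 70, 82, 90]

percent_riskier_than = percent_of_peers_safer(75, peers)
print(f"Your driving was riskier than {percent_riskier_than:.0f}% of your peers.")
```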

It is worth noting that employing nudge tactics does not preclude using classical economic incentives to promote safer driving. For example, the UK insurer ingenie uses black-box data to calculate risk scores, which in turn are reported via mobile apps back to drivers so that they can better understand and improve their driving behavior. In addition, the driver’s insurance premium is periodically recalculated to reflect the driver’s improved (or worsened) performance.26

Resisting the siren song

Self-tracking devices are the health and wellness equivalent of telematics black boxes. Here again, it is plausible that appropriately contextualized periodic feedback reports (“you are in the bottom quartile of your peer group for burning calories”) could nudge people for the better.

Perhaps we can take the behavioral design thinking a step further. In behavioral economics, “present bias” refers to short-term desires preventing us from achieving long-term goals. Figuratively speaking, our “present selves” manifest different preferences than our “future selves” do. An example from classical mythology is recounted in Homer’s Odyssey: Before visiting the Sirens’ island, Odysseus instructed his crew to tie him to the mast. Odysseus knew that in the heat of the moment, his present self would give in to the Sirens’ tempting song.27

Behavioral economics offers a somewhat less dramatic device: the commitment contract. For example, suppose Jim wants to visit the swimming pool at least 15 times over the coming month but also knows that on any given day he will give in to short-term laziness and put it off until “tomorrow.” At the beginning of the month, Jim can sign a contract committing him to donate, say, a thousand dollars to charity—or even to a political organization he loathes—in the event that he fails to keep his commitment to his future self.
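Jim's contract is simple enough to express directly. The sketch below assumes pool visits arrive as a list of dates (for instance, from a self-tracking device) and counts at most one visit per day; the class name, fields, and the particular month are illustrative assumptions.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class CommitmentContract:
    goal_visits: int    # visits promised during the contract period
    start: date
    end: date
    stake_dollars: int  # forfeited if the goal is missed

    def amount_owed(self, visit_dates):
        """Return the forfeit due once the contract period has ended."""
        # Count distinct days inside the period, so two visits on the
        # same day count once and visits outside the window are ignored.
        days_visited = {d for d in visit_dates if self.start <= d <= self.end}
        return 0 if len(days_visited) >= self.goal_visits else self.stake_dollars


contract = CommitmentContract(
    goal_visits=15,
    start=date(2015, 6, 1),
    end=date(2015, 6, 30),
    stake_dollars=1000,
)

kept = [date(2015, 6, d) for d in range(1, 16)]    # 15 distinct days
missed = [date(2015, 6, d) for d in range(1, 15)]  # only 14 days

print(contract.amount_owed(kept), contract.amount_owed(missed))
```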

Commitment contracts digitally implemented on mobile devices offer an intriguing application for self-tracking devices that goes beyond real-time monitoring or feedback reports. Data from such devices could automatically flow into apps preprogrammed with commitment contracts that reflect diet, exercise, or savings goals. Oscar Wilde famously said that “the only way to get rid of temptation is to yield to it.” Perhaps. But Wilde wrote long before behavioral economics.28

Washing our hands of safety noncompliance

I yield to the temptation to give one final example of data-fueled, digitally implemented, and behaviorally designed innovation. A striking finding of evidence-based medicine is that nearly 100,000 people die each year in the United States alone from preventable hospital infections. A large number of lives could therefore be saved by prompting health care workers to wash their hands for the prescribed length of time. Medical checklists of the sort popularized in Atul Gawande’s The Checklist Manifesto are one behavioral strategy for improving health care workers’ compliance with protocol.29 An innovative digital soap dispenser suggests the possibilities offered by complementary, data-enabled strategies.

The DebMed Group Monitoring System is an electronic soap dispenser equipped with a computer chip that records how often the members of different hospital wards wash their hands.30 Each ward’s actual hand washing is compared with statistical estimates of appropriate hand-washing that are based on World Health Organization guidelines as well as data specific to the ward. Periodic feedback messages report not specific individuals’ compliance but the compliance of the entire group. This design element exemplifies what MIT Media Lab’s Sandy Pentland calls “social physics”: Social influences help shape individual behavior.31 In such cases, few individuals will want to be the one who ruins the performance of their team. Administered with the appropriate behavioral design thinking, an ounce of data can be worth a pound of cure.
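The group-level comparison described above might look something like the following. This is only a sketch of the general idea, not the vendor's method: it assumes dispenser counts and expected hand-washing counts are already available per ward, and the ward names and figures are invented.

```python
def ward_feedback(observed_counts, expected_counts):
    """Compliance per ward as a whole-number percentage of expected events.

    Only ward-level totals are used, so no individual is singled out.
    """
    report = {}
    for ward, expected in expected_counts.items():
        observed = observed_counts.get(ward, 0)
        report[ward] = round(100 * observed / expected)
    return report


# Hypothetical weekly totals.
observed = {"ICU": 420, "Pediatrics": 310}
expected = {"ICU": 600, "Pediatrics": 350}

feedback = ward_feedback(observed, expected)
for ward, pct in feedback.items():
    print(f"{ward}: {pct}% of expected hand-washing events")
```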

Act now

In predictive analytics applications, the last-mile problem of prompting behavior change tends to be left to the professional judgment of the model’s end user (child support enforcement officer, safety inspector, fraud investigator, and so on). On the other hand, behavioral nudge applications are often one-size-fits-all affairs applied to entire populations rather than analytically identified sub-segments. It is reasonable to anticipate better results when the two approaches are treated as complementary and applied in tandem. Behavioral science principles should be part of the data scientist’s toolkit, and vice versa.

Behavioral considerations are also not far from the surface of discussions of “big data.” As we have noted, much of big data is, after all, behavioral data continually gathered by digital devices as we go about our daily activities. Such data are controversial for reasons not limited to a basic concern for privacy. Behavioral data generated in one context can be repurposed for use in other contexts to infer preferences, attitudes, and psychological traits with accuracy that many find unsettling at best, Orwellian at worst.

In addition, many object to the idea of using psychology to nudge people’s behavior on the grounds that it is manipulative or a form of social engineering. These concerns are crucial and not to be swept under the carpet. At the same time, it is possible to view both behavioral data and behavioral nudge science as tools that can be used in either socially useful or socially useless ways. Hopefully the examples discussed here illustrate the former sort of applications. There is no unique bright line separating the usefully personalized from the creepily personal, or the inspired use of social physics ideas from hubristic social engineering. Principles of social responsibility are therefore best considered not merely topics for public and regulatory debate but inherent to the process of planning and doing both data science and behavioral science.

Behavioral design thinking suggests one path to “doing well by doing good” in the era of big data and cloud computing.32 The idea is for data-driven decision making to be more of a two-way street. Traditionally, large companies and governments have gathered data about individuals in order to more effectively target market and actuarially segment, treat, or investigate them, as their business models demand. In a world of high-velocity data, cloud computing, and digital devices, it is increasingly practical to “give back” by offering data products that enable individuals to better understand their own preferences, risk profiles, health needs, financial status, and so on. The enlightened use of choice architecture principles in the design of such products will result in devices to help our present selves make the choices and take the actions that our future selves will be happy with.