Success at scale: A guide to scaling public sector innovation

25 May 2018

Scaling is the key to any innovation. This is particularly true—and more difficult—in the public sector. Absent market indicators, governments can improve a project’s odds of success with strategies that use data analysis to drive scaling success.

The challenge of scaling

Scaling innovation is one of the greatest challenges faced by any organization. – Startup Genome1

Scaling can be even more challenging in the public sector, and government institutions that successfully test a new internal process or program often struggle to replicate it across the entire enterprise. The lack of traditional market indicators to drive decision-making tends to make it difficult for innovators in the public sector to assess whether a new solution would work and whether it is worth investing in a large-scale implementation.

However, scaling innovations successfully is possible, even in the public sector. Governments can improve their odds of success by taking a strategic, data-driven approach that accounts for the greatest scaling risk factors. We examined dozens of public sector organizations that have scaled innovations and used what we found to develop a playbook that helps public sector organizations overcome the challenges of scaling new products, services, or processes.

This playbook revolves around a three-step process for scaling innovations in government:

- Design the pilot;

- Execute and evaluate the pilot; and

- Scale the innovation.

Sounds easy, right? In reality, how you approach each of these steps can determine whether an innovation succeeds or fails at scale. The playbook is designed to identify and address some of the thorniest challenges encountered during the scaling process, and it offers strategies to help public officials ensure that good ideas remain effective at scale.

Step one: Design the pilot

Nearly 70 percent of startups die not due to a flawed idea but from a failure to successfully scale their efforts. A good idea may show success as a proof of concept, but that doesn’t mean it will work everywhere. – Startup Genome2

We’ve all seen it before—an innovative approach shows promise in a pilot location and generates funding for expanding the effort. But once the approach is tried in multiple locations, the results are disappointing.

What went wrong? In many cases, failure at scale can be traced to problems related to how the pilot was set up. Designing an effective pilot generally entails much more than merely choosing a location to try out a new idea. Ideally, the designers of a successful pilot should:

- Create an innovation profile;

- Select pilot and control groups;

- Establish measures of success; and

- Develop a deployment plan.

Create an innovation profile

When designing a pilot, one of the first steps should be a diagnostic that identifies important factors associated with the innovation and the populations to which it can be deployed. We call this analysis an innovation profile.

The innovation profile helps to identify elements of an innovation that may impact the adoption and usage of a new solution. It includes details of the solution, as well as information about the target adopters of that solution. Implementation teams can draw on innovation profiles to inform the selection of pilot and control sites. Figure 1 includes some of the key data elements that should be included in developing an innovation profile.

A recently published report from the Innovation Unit and the Health Foundation of the United Kingdom entitled Against the odds: Successfully scaling innovation in the NHS highlights the importance of understanding both the innovation and its targeted users in eventually scaling a given solution: “The ‘adopters’ of innovation need greater recognition and support. The current system primarily rewards innovators, but those taking up innovations often need time, space and resources to implement and adapt an innovation in their own setting.”3

The process of crafting an innovation profile can be far from simple. In 2009, the Obama administration set out to end homelessness among veterans, in an initiative called Opening Doors.4 Decades of sociological studies had already explored the complex problem from several perspectives, attributing it variously to inadequate health care, mental trauma, addiction, and shortcomings in job placement, housing policy, and benefits distribution. Various interventions had shown disparate results. Organizers for Opening Doors needed to draw on these earlier studies and lessons to design a pilot around effective interventions and the target population.

For Opening Doors, the ideal adopter was easy to identify: homeless veterans. However, this information alone would not have provided the insight to make a solution successful. By mapping the ecosystem dynamics, end-user requirements, and adopter needs of this group, Opening Doors came to better understand the variety of challenges and systems that homeless veterans dealt with. They also came to realize that their interventions should begin with a single priority: housing. Once homeless people had a place to sleep, they found it easier to kick addictions, secure jobs, treat health problems, pay debts, re-establish relationships, and maintain the daily stability that facilitates a healthy life. Of all the reinforcing stages in a difficult cycle, one mattered most: housing. By building a profile of homeless veterans, Opening Doors was able to better understand who they were designing for and what type of solution would have the greatest impact.

Creating an innovation profile can also be useful for understanding where and how end users would acquire the innovation, and how it could impact their day-to-day life. For example, when India’s National Innovation Council was spreading telehealth at a village level, they learned to resist making presentations about technology to their audience. Instead, they emphasized how their intervention could spare pregnant women a long, dangerous hike to the nearest hospital.5 Putting women at the center of their design helped gain buy-in and shape what the innovation would require when deployed, ultimately making scaling more effective.

Select pilot and control groups

The innovation profile lays a foundation for narrowing the potential adopters of the innovation, and aids in the selection of a group with which to pilot the solution. Specifically, the innovation profile helps select a site or population for the pilot based on: (1) where the innovation would have a measurable impact on the identified pain point; (2) infrastructure and process considerations; and (3) the broader ecosystem.

Consider a hypothetical innovation: a mobile application designed to connect food-insecure individuals to nonprofit resources in inner cities. Information found in the innovation profile would help narrow potential cities to those with a large enough population of food-insecure individuals. It would also help identify which of these populations has access to smartphones. It would further narrow the pilot group to those cities where nonprofit institutions provide a large enough resource base. Finally, it would help determine how to publicize the application.

Finally, the team would select a pilot site. They’d double-check for factors which could confound results, such as a long history of innovations aimed at food insecurity, or other interventions running in the same location.

Piloting pitfalls

When scaling fails, it is often because of how organizations select pilot locations or populations. Common scaling challenges associated with pilot selection include:

- The pilot site is selected based on interest and the ability of local staff to make the pilot successful. If you test an idea at your “best” site, what do you really learn? Really good people can make a mediocre idea successful. A pilot site should be “representative”—serving a typical population with an ordinary staff.

- The site may be piloting multiple initiatives. If a site is running multiple pilot initiatives, confounding variables may impact the outcomes of a given pilot, making it difficult to determine which variable to credit for results.

- The pilot site was not evaluated for fit and need for the specific innovation. A particular site may lack the infrastructure and environment to determine whether the innovation is successful. For example, the served population may lack a key resource (as when a mobile app is tested with a technologically illiterate population), which could inhibit an evaluation of whether the pilot worked.

- The pilot lacks a control location. Many pilots are implemented without a similar site or population serving as a control. The pilot is introduced, and the success is determined based on a before/after comparison. Without a control to test against, the pilot’s “impact” could fail to account for important environmental, political, and trend-driven factors that skew results.

The team would then select a control site from the pool of randomized candidates that possess similar characteristics to the pilot site.
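One way to make this step concrete is to rank candidate control sites by how closely they resemble the pilot site. The sketch below is a minimal illustration, not a prescribed method: the site names, the three characteristics (population, share of food-insecure residents, share with smartphone access), and every figure are invented for the hypothetical food-insecurity app, and nearest-neighbor matching on standardized values is an assumed technique that the playbook itself does not specify.

```python
from math import sqrt
from statistics import mean, pstdev

# Hypothetical sites: (population, share food-insecure, share with smartphones).
# All names and figures are invented for illustration.
SITES = {
    "pilot_city": (520_000, 0.18, 0.81),
    "city_a": (480_000, 0.17, 0.79),
    "city_b": (1_900_000, 0.12, 0.90),
    "city_c": (510_000, 0.25, 0.60),
    "city_d": (300_000, 0.19, 0.83),
}


def standardize(sites):
    """Rescale each characteristic to mean 0 and standard deviation 1,
    so that population does not dominate the comparison."""
    columns = list(zip(*sites.values()))
    means = [mean(col) for col in columns]
    spreads = [pstdev(col) or 1.0 for col in columns]  # guard against zero spread
    return {
        name: tuple((v - m) / s for v, m, s in zip(values, means, spreads))
        for name, values in sites.items()
    }


def rank_controls(sites, pilot):
    """Return the non-pilot sites ordered from most to least similar to the pilot."""
    scaled = standardize(sites)

    def distance(name):
        return sqrt(sum((a - b) ** 2 for a, b in zip(scaled[name], scaled[pilot])))

    return sorted((name for name in sites if name != pilot), key=distance)


shortlist = rank_controls(SITES, "pilot_city")
print(shortlist)
```

With these made-up figures, city_a and city_d come out as the closest matches. A team could then draw the control site at random from such a shortlist, after screening out sites with the confounding factors described above.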

Establish measures of success

Before rolling out a pilot, it is important to establish clear metrics to help answer three questions related to the innovation that will be piloted and scaled: (1) is the innovation having the desired impact; (2) is the innovation fully developed; and (3) how will we know when the innovation is ready to be deployed to a new site or population.

Impact measures: Impact measures should define whether an innovation is having the desired impact. Impact measures are often directly tied to the organization’s mission, are often time-bound, and should be used to support the development of success criteria for a given solution. By comparing the realized impact against the baseline measures for success, impact measures can be key in making go/no-go decisions related to investing in scaling an innovation more broadly.

San Diego County introduced software to automate the immense paperwork required to verify benefits eligibility. The automation “reduced the time it takes to approve a SNAP application from 60 days to less than a week.”6 That clear impact measure, time to approve an application, helps the county recognize that the endeavor succeeded.

Taking it a step further: the automated software reduced wait times, but how large would the cost savings need to be to justify scaling the software to other jurisdictions? Success criteria can help determine whether the initiative should continue, based on the ratio of cost to impact at scale. For San Diego, scaling was a no-brainer because of the reduction in wait times and the minimal additional cost of rolling out the software across the whole office. Additional considerations would likely apply if the State of California, for example, were looking to adopt similar software and a similar model.

Technical measures: Technical measures study and reveal whether the innovation functions as intended. Verifying technical measures, for example, could include testing whether a self-driving bus could avoid collisions, or using A/B testing on new driver registration forms. They help answer the question, “Is the solution fully developed, or in need of further iteration?”

One software tool designed to flag potential benefits fraud was turning up too many false positives. Using the accuracy of flagged claims as a technical measure, the agency employed machine learning to improve the software’s accuracy until 95 percent of flagged claims revealed fraud.7

Implementation measures: Implementation measures can help determine whether a solution is fully rolled out at a given site. They are typically among the most useful measures during scaling, as they indicate when a deployment team can depart from one group of adopters and begin introducing the innovation to another population or site.

An implementation measure could be “80 percent of nonprofits providing services to the food-insecure population are accessible via the mobile application,” or “30 percent of the food-insecure population has downloaded the application.”

Impact measures define the impact an innovation is intended to have.

Technical measures define whether a specific solution needs further iteration.

Implementation measures define whether an innovation has been fully deployed at a given site or with a given population.
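To show how the three kinds of measures might be tracked side by side, here is a minimal sketch for the hypothetical food-insecurity app. The `Measure` class, the `summarize` helper, and all observed values are assumptions made for illustration. The 80 percent and 30 percent implementation targets come from the example above, while the impact and technical targets are invented.

```python
from dataclasses import dataclass


@dataclass
class Measure:
    kind: str                      # "impact", "technical", or "implementation"
    name: str
    target: float
    observed: float
    higher_is_better: bool = True  # False for measures such as waiting time

    def met(self) -> bool:
        """True when the observed value reaches the target."""
        if self.higher_is_better:
            return self.observed >= self.target
        return self.observed <= self.target


def summarize(measures):
    """Report, for each kind of measure, whether all of its targets are met."""
    status = {}
    for m in measures:
        status[m.kind] = status.get(m.kind, True) and m.met()
    return status


# Hypothetical pilot readings; only the 0.80 and 0.30 targets come from the text.
measures = [
    Measure("impact", "days to connect a user with a food resource", 7, 5,
            higher_is_better=False),
    Measure("technical", "share of searches returning a valid listing", 0.95, 0.97),
    Measure("implementation", "share of nonprofits accessible via the app", 0.80, 0.84),
    Measure("implementation", "share of food-insecure population with the app", 0.30, 0.22),
]

status = summarize(measures)
print(status)
```

In this invented reading, the impact and technical targets are met but the implementation target for downloads is not, which would suggest the deployment team is not yet ready to move on to the next site.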

Develop a deployment plan

Collectively, the innovation profile, pilot and control groups, and measures of success would feed into the creation of a deployment plan. The deployment plan articulates the timing, required resources, and roles and responsibilities for personnel at each stage of the pilot.

Plan your approach: Identify the who, what, when, where, why, how, and how much elements of conducting the pilot with the selected pilot group. Begin by laying out your objectives and timeline, and assign desired outputs to different locations and time segments.

Budget for your pilot: Identify your total budget and estimate the total costs of testing the pilot in different locations. Consider how to allocate the budget based on desired results, factoring in the possible need for variability in testing sites and target groups.

Build a data collection plan: Consider the kinds of questions that the data you are collecting would allow you to answer. Statistics are powerful, but stories from the ground related to the implementation may ultimately help you determine what made the pilot succeed or fail. Consider utilizing mixed method approaches to your data collection. Do not overlook the importance of sound data collection instruments. Test your interview guides, survey questions, and other instruments before utilizing them to evaluate the pilot.

Develop communications materials: The deployment schedule should include all materials to publicize the pilot to relevant stakeholders. Appeal to the stakeholder needs identified in the innovation profile. If your stakeholders seem hungry, point out that you’re offering food. If stakeholders’ concern is ensuring participation in class at school, explain that students are more focused and attentive when they have eaten, and stress the importance of making sure they’ve had lunch.

Step two: Execute and evaluate the pilot

Too many innovators and entrepreneurs think if they just find the right idea, everything else comes easy. They got it the wrong way around. 1) The idea is easy. 2) The search and iteration for a value proposition and business model is hard. 3) Execution and scaling is where winners are decided. – Alexander Osterwalder, developer of the Business Model Canvas8

All the prework associated with developing an innovation profile, selecting a pilot group, establishing measures, and developing a deployment plan can prepare an organization to roll out and evaluate the new solution as efficiently as possible.

Deploy and evaluate the pilot

Execute on the deployment plan and launch the pilot to its initial site(s). As the pilot is deployed, monitor it. Collect data. Document lessons learned. Check progress against your goals. If the plan worked, figure out why. Look for quantitative and qualitative factors that drove success.

If it was successful, what factors can be repeated in other sites? Were specific features unique to that location? Document any relevant factors. Update the deployment plan to include these factors before the pilot expands to additional sites. Similarly, document and assess the costs and resources that were invested in deploying the pilot. How much would this cost to scale? The technical, implementation, and impact measures identified during deployment planning can be of particular importance to determine whether the pilot is correctly deployed, meets the needs of end users, and is successful in achieving mission impact.

All of this data could be valuable in ultimately making decisions around scaling.
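When a control site is in place, one common way to use it in the evaluation is a difference-in-differences comparison: subtract the change at the control site from the change at the pilot site, so that trends affecting both sites are not credited to the innovation. The playbook does not name this technique, so treat the sketch below as an assumed approach. The outcome (monthly food-bank visits per 1,000 food-insecure residents) and all four figures are invented.

```python
def difference_in_differences(pilot_before, pilot_after, control_before, control_after):
    """Estimate the pilot's effect as the pilot site's change minus the
    control site's change over the same period.

    Assumes the two sites would have followed similar trends without the pilot.
    """
    pilot_change = pilot_after - pilot_before
    control_change = control_after - control_before
    return pilot_change - control_change


# Hypothetical outcome: monthly food-bank visits per 1,000 food-insecure residents.
pilot_before, pilot_after = 40, 58
control_before, control_after = 41, 47

naive_effect = pilot_after - pilot_before  # before/after comparison only
estimated_effect = difference_in_differences(
    pilot_before, pilot_after, control_before, control_after
)

print(naive_effect, estimated_effect)  # prints "18 12"
```

In this made-up case, a simple before/after comparison would credit the app with 18 additional visits, while the control site suggests that 6 of those would likely have occurred anyway.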

Document the key steps, dependencies, and success factors

One of the most important elements of piloting an innovation effort is documenting the key steps in an innovation’s successful implementation. However, many organizations fail to document these steps, and as a result, must “start over” each time they attempt to expand to a new site or population.

San Francisco’s Start-up in Residence (STIR) program, which incubates small teams of tech entrepreneurs to update civic capabilities, didn’t make this mistake. It previously spread to the cities of West Sacramento, San Leandro, and Oakland, and in 2018 is scaling to more than 11 cities nationwide.9

“With a program that has a lot of big parts, one of the biggest challenges is when you try to bring someone on, or get them up to speed,” says STIR’s Daniel Palmer. “So we build out documentation around what the program looks like.”10 STIR also keeps standard legal agreements and purchasing documents. New programs can quickly adopt these rather than start them from scratch.

Step three: Scale the innovation

Innovation refers to an idea, embodied in a technology, product, or process, which is new and creates value. To be impactful, innovations must also be scalable, not merely one-off novelties. – Economic Council and Office of Science and Technology Policy11

Now it’s time to scale the innovation. If the pilot worked, incorporate your observations into the rollout plan for the next site. Rather than attempting to go from pilot to full-scale immediately, consider expanding the solution incrementally, strategically moving from one adopter group to the next based on the data you are collecting and following a clearly defined plan.

Scaling is incremental

The United Kingdom’s NHS partnered with Babylon Health to use chatbots to provide basic medical advice. Citizens describe their symptoms and are advised whether they should see a doctor. After initial testing, NHS expanded the chatbots to five carefully selected pilot programs in London boroughs, rather than merely making the chatbots available to the public at large.

To give an innovation the greatest chance of scaling successfully, consider the following questions: (1) When and where (or with whom) am I going to scale the innovation? (2) How am I going to introduce the innovation to a new group? (3) What infrastructure or platforms can support scaling across all sites and populations? (4) Who are going to be my scaling champions? and (5) How can I modify the innovation or my scaling approach to meet the needs of a specific adopter group? The following steps can help you address each of these questions in a consistent, strategic manner.

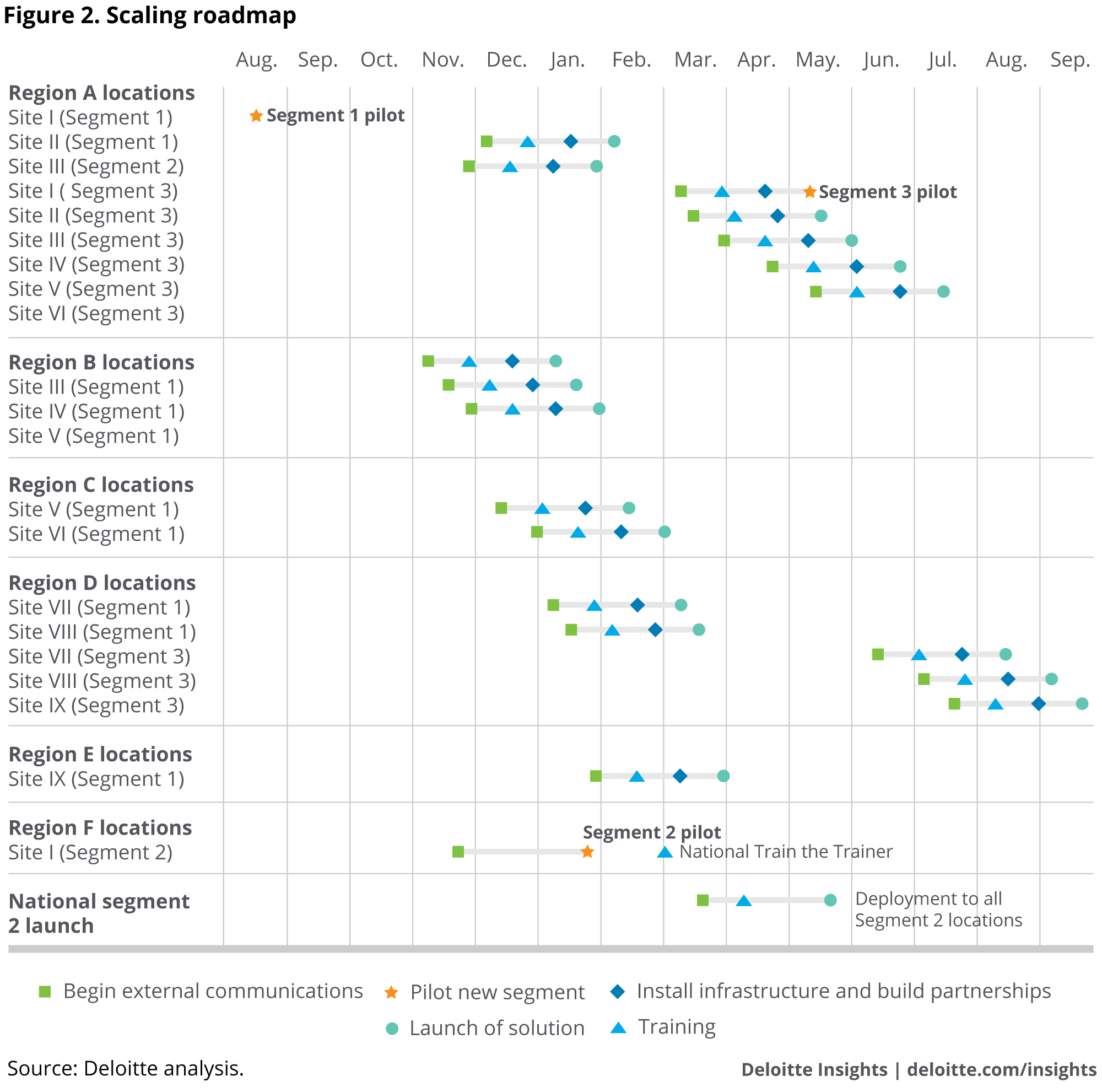

DEVELOP A SCALING ROADMAP

The scaling roadmap details where, when, and in what order to expand the solution (see figure 2). The roadmap should lay out every single location or population the innovation will target, and detail everything down to the cost of peanut butter sandwiches.

Identify the desired rate of scaling: Check your budget. If you’ve got the time or the money, you can determine how far, and for how long, you intend to expand. If your pilot can be trusted, you should be able to predict your cost and return on investment at each stage.

The importance of funding and timing in scaling can be seen in the example of Kutsuplus. In 2012, Helsinki, Finland launched a transportation plan that included an on-demand bus service that picked up users where they requested and then optimized routes, two years before Uber Pool rolled out a similar strategy. But Kutsuplus ran into trouble at an intermediate scale, where cost-effectiveness dipped: the program was successful enough to justify investment in more buses, but too cash-strapped to expand into a service that could reach many thousands more people and capitalize on economies of scale. The service ended shortly thereafter, illustrating the effects of scaling at possibly the wrong pace.12
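A rough stage-by-stage projection can help reveal this kind of funding gap before it arrives. The sketch below is a simplified illustration under stated assumptions: the `project_rollout` function, the cost structure (a fixed cost per stage plus a cost per new site), and every figure are hypothetical, and each site's benefit is counted only once.

```python
def project_rollout(new_sites_per_stage, cost_per_site, fixed_cost_per_stage,
                    benefit_per_site, budget):
    """Project cumulative cost and benefit for each stage of a rollout.

    All amounts share one unit (for example, thousands of dollars).
    """
    rows = []
    total_cost = 0
    total_benefit = 0
    for stage, new_sites in enumerate(new_sites_per_stage, start=1):
        total_cost += fixed_cost_per_stage + new_sites * cost_per_site
        total_benefit += new_sites * benefit_per_site
        rows.append({
            "stage": stage,
            "cumulative_cost": total_cost,
            "cumulative_benefit": total_benefit,
            "pays_off": total_benefit >= total_cost,
            "within_budget": total_cost <= budget,
        })
    return rows


# Hypothetical plan: 1 site, then 3 more, then 6 more, against a budget of 800.
plan = project_rollout([1, 3, 6], cost_per_site=100, fixed_cost_per_stage=50,
                       benefit_per_site=150, budget=800)
for row in plan:
    print(row)
```

In this invented plan, the third stage would pay off but pushes cumulative cost to 1,150 against a budget of 800. That is the kind of gap a roadmap should surface early, so that the pace of scaling or the funding can be adjusted.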

Use data to decide where to scale next: Ideally, the scaling roadmap is driven by data. The sites/populations most likely to successfully adopt an innovation are often those most similar to the ones with which the innovation has already shown results. Data can show which sites share your pilot’s characteristics. Consider segmenting potential adopter groups and selecting specific segments to scale the solution to. Consider using regression analysis to help plan where to scale next based on your findings from the evaluation stage. For example, San Francisco’s STIR studied the successful components of their programs in the Bay Area, and when it was time to scale nationwide, selected sites based on those qualities.
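As an illustration of the regression idea, the sketch below fits a straight line relating one site characteristic to an observed result at sites where the innovation has already run, then uses the line to rank candidate sites. It is a minimal example under stated assumptions: the characteristic (share of residents with smartphones), the outcome (adoption rate), the site names, and all figures are invented. A real analysis would likely draw on more sites and several characteristics.

```python
from statistics import mean


def fit_line(xs, ys):
    """Ordinary least squares for one predictor; returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical results from sites already reached:
# share of residents with smartphones and observed adoption rate.
smartphone_share = [0.60, 0.70, 0.80, 0.90]
adoption_rate = [0.10, 0.16, 0.19, 0.27]

slope, intercept = fit_line(smartphone_share, adoption_rate)

# Hypothetical candidate sites for the next wave.
candidates = {"city_e": 0.85, "city_f": 0.65, "city_g": 0.75}
predicted = {name: intercept + slope * x for name, x in candidates.items()}
next_sites = sorted(predicted, key=predicted.get, reverse=True)

print(next_sites)
```

With these made-up numbers, city_e has the highest predicted adoption and would be the first candidate for the next wave.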

Develop a timeline: With these steps complete, it should be possible to detail exactly where and when to implement each intervention.

DEVELOP A SCALING CHECKLIST

Once you have identified where and when to scale a solution, it is important to document how you are going to introduce the innovation to each new group. A scaling checklist details the essential steps to deploy the innovation at a given site or with a given population. Generally, the items detailed in such a scaling checklist can be divided across three broad phases: (1) inform, (2) deploy, and (3) sustain.

Inform: Scaling means letting go of control of your creation. So whatever innovation you’ve raised, the new people responsible for it need support. Your scaling checklist should detail each step necessary to inform new groups about the innovation and equip them with the tools to champion it themselves.

Document a clear set of strategies to inform potential champions of what they need to know to deploy your innovation. “Inform” is all about creating a community capable of driving the innovation forward. For example, STIR describes its efforts to expand as “really about finding a couple of champions within each city who can be fully up to speed.” Documentation helps. STIR scales using a point person who works in each municipality. With the burden shared, STIR does not need to add a new administrator for every new site.

Deploy: Support your new champions in deploying the innovation to new groups. Your scaling checklist should identify each step necessary to provide them with what they’ll need to deploy the innovation successfully. Many of these items should have been documented when you were piloting the innovation initially. Those you have selected to champion your innovation with new groups should never feel that success is completely up to them.

Consider the case of Challenge.gov, which aimed to encourage the use of prize challenges across the Federal government. A good challenge can be tricky. Some require challengers to submit their work publicly, sharing their progress with competitors. Others require challengers to give up intellectual property. Challenge design is crucial to ensure the challenge encourages the desired result. “It takes a lot of time and thoughtfulness,” says Jenn Gustetic, who has spent her career expanding the public sector’s innovation potential at NASA, in the White House, and as a Harvard Kennedy School Digital HKS fellow.13 Because setting up good prizes can be so tricky, Challenge.gov has established an extensive support system to help walk people through the process. Challenge.gov takes about 10 calls a week talking prospective sponsors through challenge ideas. They have even launched a mentorship program.

Support/sustain: How will you go about monitoring the innovation as it is introduced to each new group, evaluate its success, and identify areas for further improvement? Action items related to these activities would go in the “support/sustain” portion of your scaling checklist. Specific action items to consider include those related to:

- Knowledge capture: Networks need a way to learn from experience. There’s no sense repeating a mistake once per site. So identify a set of steps you will take to track lessons learned and strategies used. Then share them. As part of its commitment to transparency, the US federal government began creating “open innovation toolkits.” These toolkits “document best practices, case studies, and relevant policy and law and provide step-by-step instructions for creating open-innovation programs.”14 Anyone attempting to open up government to innovation now has a handbook.

- Knowledge sharing: Measuring success shouldn’t stop once the data has proved that an innovation works. A record of statistics can help administrators improve. Sites should be able to share lessons and compare performance. “This is where our 700-plus case studies of prizes and challenges are hugely important to pull from,” says Gustetic. When she was trying to persuade new agencies to adopt prizes and challenges, she could point to results that are relevant to their program areas. “As I'm sitting and trying to persuade them, I can pull 20 or 30 different examples.” She’ll say, “None of them are 100 percent analogous to their mission, but if you pool these design features, you could do something really interesting for your program.”15

The implementation measures from the pilot design can tell you when the work is finished at a given site. Your checklist should also include all the tools, templates, and information needed to execute each step detailed within. As attention and resources are reallocated to a new site, the toolkit should include any communications materials, processes, data-collection templates, or other items needed.

Together, the creation of a scaling roadmap and checklist allows an organization to repeat the same message to adopters in a consistent manner, reducing confusion and expediting adoption. In discussing their book, Scaling Up Excellence: Getting to More Without Settling for Less, authors Hayagreeva Rao and Robert Sutton cite how John Lilly, the former CEO of Mozilla, emphasizes the importance of standardizing messaging in scaling, making sure he said the same thing over and over so that people wouldn’t get conflicting messages. “If you’ve all got the same poetry in your head, these mantras like ‘the customer is boss,’ they can actually drive a bunch of decisions. It can let everybody be on the same page and know where they’re heading.”16

DEVELOP SHARED INFRASTRUCTURE AND COMMON PLATFORMS

While the scaling checklist should detail every action needed to deploy the innovation to a new group of adopters, the implementation of platforms, policies, and infrastructure can serve as a foundation for scaling across all future adopter groups. Rather than repeating steps each time the innovation is introduced to a new group of users, what steps can be taken to consolidate these efforts to save time and money?

Establish legal and policy frameworks: Avoiding duplication can speed up replication. The city of San Francisco has created boilerplate procurement contracts for Software as a Service (SaaS). It sounds small, but most government agencies acquire cloud software with the same general procedures used to acquire a tank. With standard legal documents, every new iteration of STIR has a tested means of establishing the legal relationship between a startup team and government entity.

Setting up legal frameworks has similarly helped government organizations use prizes more effectively. Gustetic describes the movement’s infancy: “Early innovators figured out how to implement prizes under … previously passed laws that could be interpreted (on legal review) as applying to prizes.”17 In 2010, she writes, an OMB policy memo summarized those existing legal authorities so innovators didn’t need to start from scratch. By the end of the year, Congress passed a bill explicitly authorizing the new approach.18

Establish shared infrastructure and platforms: Some innovations require all users to access the same, or similar, information on an ongoing basis. Creating a single shared platform, system, or infrastructure to support activities across all adopters of your innovation can both reduce costs and increase the consistency of the user experience.

In 2002, the No Child Left Behind (NCLB) Act actively encouraged the adoption and funding of “scientifically based research” educational programs. However, many practitioners had difficulty identifying which programs had evidence of effectiveness and what to make of the wide range of available data and evaluation quality. With a limited time period for implementation and lacking the training to evaluate research quality, practitioners didn’t know how to source evidence-based programs as NCLB instructed. Without a robust and valid source of data to determine what worked and under what conditions, the Department of Education struggled to get the effort off the ground, and the initial programs receiving funding were not always ready for scaling.

Eventually, agency staff and appropriators identified this challenge and worked to address it. As a part of the Education Sciences Reform Act, the Department of Education’s Institute of Education Sciences created the What Works Clearinghouse (WWC). An initiative of the National Center for Education Evaluation and Regional Assistance, the WWC has a mission to “be a central and trusted source of scientific evidence for what works in education.” WWC is now one of the longest-running federal clearinghouses and has served as a model for other searchable evidence databases like those for Teen Pregnancy Prevention and Opportunity Youth programs.19

GROW THE NETWORK

With the scaling roadmap, checklist, and shared infrastructure in place, now it’s time to focus on growing the network of supporters and implementers.

Nurture a community of practice: Innovations are implemented by real people. They typically thrive with support, enthusiasm, and intellectual stimulation. Connect people who are excited about change. Communities can be formal and informal, from a committee to a listserv. The federal government’s community of practice for citizen science (known as CSS) began with stray participants staying late to chat at a monthly roundtable. Years later, it has congressional recognition.20

Seek mentors: Most experts are excited to make a positive change in their field. Effective innovations often occur at an intersection of disciplines, so experts from various fields should be willing to contribute perspective. These should include experts in the target community, and experts in scaling itself. Seek their input. That help can be invaluable.

Recruit champions: Not all public sector experiments have a financial incentive to spread. So for a project to develop its own momentum, the innovation may need partners and cheerleaders in other organizations. Outside partners may have perspectives that help revise the project. Partners within a target group can implement an innovation with an insider’s deftness. These people understand their community, and already have relationships and trust.

ALLOW ROOM FOR PERSONALIZATION

A good intervention will have to adapt to personalities, the vagaries of target populations, and the cultures of partner organizations. Decide which aspects must maintain rigid uniformity. Let other aspects evolve. Trust your partners and employees to make each program their own.

Outreach to homeless veterans was difficult. So was convincing more than 440 mayors and community leaders to commit to ending homelessness in their communities—with help from scads of federal agencies and NGOs. The Opening Doors initiative convened these major players, and explained the approach validated by the previous testing: housing first. It established shared metrics for measuring success. Lastly, the federal government provided billions of dollars in funding, including grants to communities that participated in the push to end homelessness—provided they collected and shared a standard set of progress metrics. However, the approach also left individual NGOs and communities the room to control the specifics of implementation. The housing first policy would scale. The housing each community provided would adjust to that community’s needs.

Conclusion

In recent years, the push for a “moneyball” approach to government and evidence-driven policy seems to have reshaped the public sector landscape. Different types of programs now receive funding based in part on their potential for scaling. As more organizations pivot toward data-driven decision-making and funding, they will likely need a structured approach for piloting and scaling innovations (as envisioned in figure 3). While expansion can be daunting, with the right structure and “secret sauce,” successful public pilot programs can find effective ways to scale.