No data? No problem. has been saved

Article

No data? No problem.

Introducing Siamese networks for one-shot learning

7 min read

Leo Liu is a manager in the Data Analytics Delivery team at Deloitte. Leo is responsible for delivering large-scale AI projects for clients from proof of concept to productionisation. When solving problems with machine learning you will often need lots of data, known as ‘big data’, to train your models. This is because they need substantial historical information to identify predictive patterns. However, sometimes there is not enough data, and your models need to infer from the data you have available. For example, most face recognition applications you need to recognise the person from a single image of that person’s face. This is what is known as one-shot learning.

What is one-shot learning?

A one-shot learning model learns a similarity function where the input is two images , and the output is the degree of difference between the two images. If the input is of the same object then the model’s output, known as degree of difference, will be small. If the input images are of different objects, the model’s output will be large. In a facial verification process, for each pairwise comparison , if the model’s output is smaller than a certain threshold, the two input images are classified as ‘the same’. Conversely, the input images will be considered as different persons.

Let’s say there are four employees in your organization. One day someone shows up in the office and your face verification system needs to recognize whether this person is an employee in your organization despite having seen only one photo from each employee. A one-shot learning model can learn from just one example to recognize the person again.

Figure 1. Facial verification process example

A one-shot learning model is essentially learning a similarity function, where the input are two images and output is the degree of difference between the two images. If the input is of the same person, the model’s output, known as degree of difference, will be small; whereas if the input images are from very different persons, the model’s output will be large. In the facial verification process, for each pairwise comparison, if the model’s output is smaller than a certain threshold, the two input images are classified as ‘the same’; conversely, the input images will be considered as different persons.

This allows you to solve the one-shot learning problem so long as you can teach the function that tells whether the input pair are the same or different.

What is a Siamese network?

A Siamese network is an example of a one-shot learning model. Its architecture consists of two identical convolutional neural networks that process the input image pair and produce the encodings of the original images as output. The pair of encodings is compared by computing their similarity, which is defined as the norm of the difference between the encodings.

A Siamese network is an example of a one-shot learning model. Its architecture consists of two identical convolutional neural networks that process the input image pair and produce the encodings of the original images as output. The pair of encodings is compared by computing their similarity, which is defined as the norm of the difference between the encodings. The objective of the learning is that if the two images are the same, the degree of difference of image encodings is small. Conversely, the difference should be large. During training process, backpropagation is used to ensure the learning objective is satisfied.

Figure 2. Siamese network architecture

How do you train a Siamese network?

To ensure that a Siamese network gives the required encodings of the input images is to define and apply gradient descent on the triplet loss function.

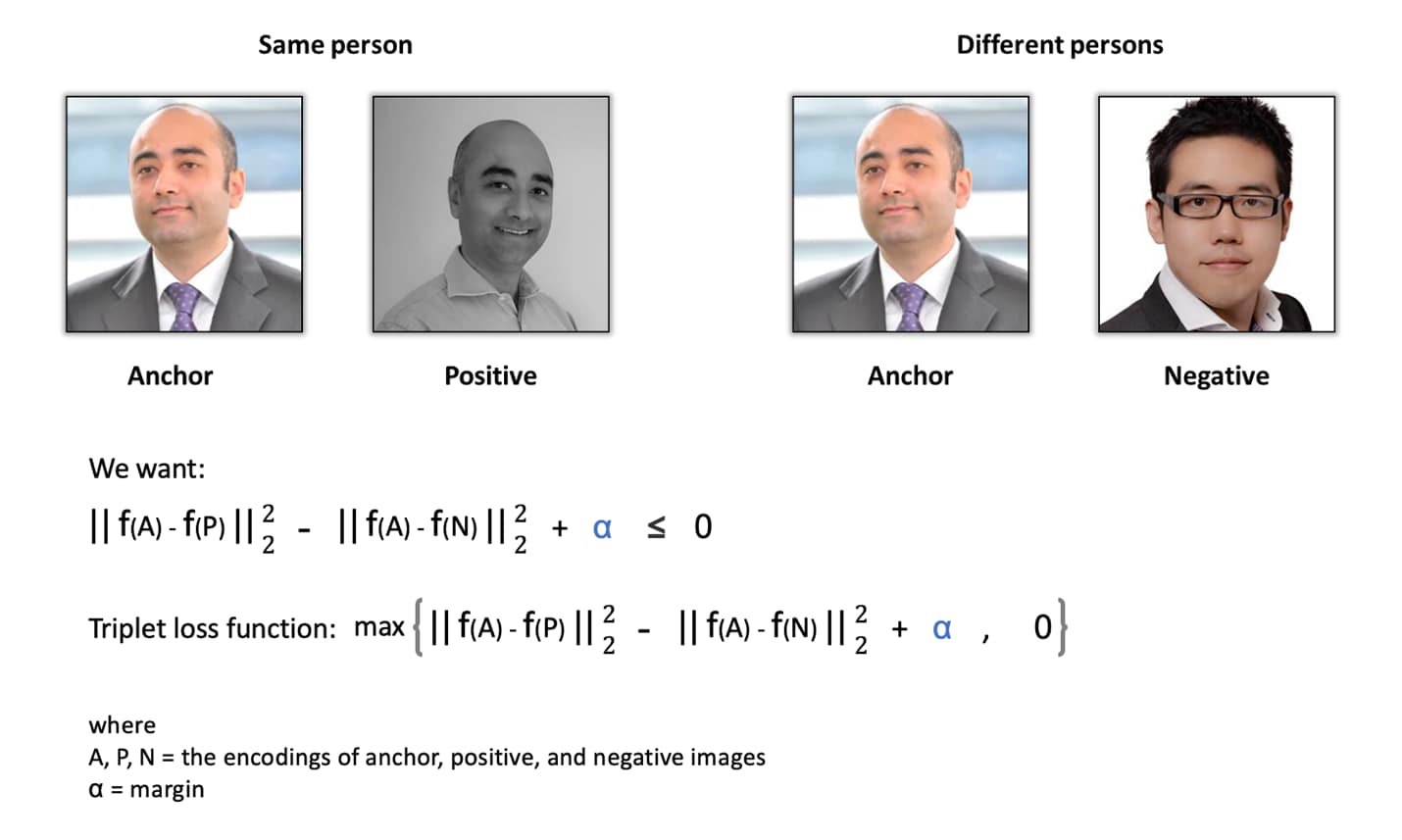

The learning objective of the model is to compare an anchor image with one positive image from the same person and one negative image from a different person, by minimising the degree of difference between the anchor and the positive image while maximising that between the anchor and the negative image . This learning objective function is known as the triplet loss function – always looking at three images (anchor, positive, and negative) during model training.

Figure 3. Siamese network learning objective

A small margin α is added to the equation to make sure the neural networks don’t always produce zeros as output for all the image encodings so that the triplet loss function can be satisfied without the learning functions parameter, i.e. outputting zeroes for all image encodings.. This is also to ensure that the difference between anchor image and positive image is much larger than that between anchor image and negative image.

To train this network for a face recognition system, it is required to have multiple images for the same person. Ideally, we want to choose the triplets that are ‘hard’ to train on, i.e. the difference between anchor and positive image is similar to that between anchor and negative image, to increase the computational efficiency of the learning algorithm. Conversely, if images are chosen randomly, it is very easy for the neural networks to satisfy the objective function, therefore gradient descent is not able to learn much.

Once the network is trained and tuned, it can be applied to the one-shot learning system, where for the face recognition system, a single image of a person is enough for the system.

Using Siamese networks for facial recognition and signature verification

Siamese network architecture can be used in a variety of ways. Facial recognition or verification systems built on a Siamese network are widely applied in today’s digital economy. For example, employees at Baidu’s headquarter in Beijing have the option of registering their faces, which they can use to get through security checkpoints and even pay for things like lunch or items at vending machines1.

Another popular use is for automated signature verification. Once a model is built, banks only need one example of a hand-written signature in order to verify a customer’s identity.

Siamese network architecture is also applied in medicine. Siamese deep neural networks have been developed and combined with an aggregation strategy to provide a viable strategy for the automated detection in MRI images of spinal metastasis, the most common malignant disease in the spine2.

Using Siamese networks in semantic similarity analysis

In natural language processing (NLP), a Siamese network can be used to compare the semantic similarity for pairing textual documents. There is a significant amount of academic research using Siamese networks to investigate semantic similarity between sequences of texts.

One potential application of a Siamese network in semantic similarity analysis is building an investment strategy based on comparing live US Federal Open Market Committee (FOMC) meeting statements with the most immediate prior statement. During times of low market volatility, the FOMC is likely to use the same or very similar statements to the prior meeting when commenting on current and future US economic outlook. Slight variations in the words chosen by FOMC is likely to result in an immediate market reaction. These market reactions usually correct themselves as the market spends more time digesting FOMC’s statements. A trading model can be built using a Siamese network architecture to capture the true semantic difference between the current statement and the baseline, i.e. the prior statement, by betting on the side of true economic reality.

One-shot learning and beyond

One-shot learning models such as Siamese networks can help address the issue of not having lots of training data or ‘big data’. It allows individuals and organisations to build applications to solve real-world problems. It is exciting to see more and more hypothetical and real use cases are being realised by artificial intelligence (AI) practitioners and applied in all sectors to advance our digital economy.

There are many more challenges ahead for AI practitioners to solve. In the long term, models will be more like programmes that have capabilities far beyond the continuous geometric transformations of the input data we currently work with. Also, models will blend algorithmic modules to provide formal reasoning, search, abstraction, intuition, and pattern recognition capabilities. At the current pace of development, in the foreseeable future, artificial general intelligence (AGI) will emerge and become engrained in every aspect of our life.

Learning is a lifelong journey, especially in the field of AI, where there are far more unknowns than certitudes. So please keep learning, questioning, and researching. Don’t stop. Despite the progress we have made so far, most fundamental questions in AI remain unanswered. Don’t wait around, join the community, be active, and contribute. Our AI future is for you, for me, and for us to build.

References

Images are from pexels.com, a free stock photos and videos website