Financial crime

Running faster just to stay in place

In focus

- Many firms’ capabilities to combat financial crime continue to fall short of expectations. Fixing these issues requires significant organisational change to eliminate silos, to change resourcing models, and to leverage new technologies, in economic conditions where firms will be under pressure to control costs.

- While the EU’s new financial crime supervisor, the Anti-Money Laundering Authority (AMLA), will not be up and running this year, standards will be raised across the bloc in anticipation of its incorporation, and industry should treat 2023 as a “transition year” to the new regime.

- In the UK the Financial Conduct Authority (FCA) will maintain its intense supervision of financial crime, with the continued use of Dear CEO letters as a prompt for firms to act, the ever-present threat of broad and invasive skilled persons reviews where problems persist, and the likelihood of enforcement increasing as FCA patience wears thin.

Following several years of intense supervisory scrutiny, and the industry’s continuing difficulty in fully meeting expectations, many firms’ financial crime operating models appear not to be fit for purpose. Financial crime teams rallied and worked overtime to keep up with the imposition of sanctions following Russia’s invasion of Ukraine, but resource pressures remain and relevant skills are in short supply due to competition for staff.

Given the sheer volume of alerts generated by transaction monitoring systems, the inherent limitations of legacy systems and data, continued supervisory investigations, strengthened baseline regulatory expectations, and the ever-changing external environment (the cost-of-living crisis, for instance, implies an uptick in attempted fraud in 2023), it is no wonder that some firms feel they are having to run ever faster just to keep up. Efforts to address these issues in 2023 will be further challenged by the inevitable pressure to control costs that will accompany the deteriorating economic environment.

Financial crime operating model reform

The status quo is not sustainable. Some recurring problems are basic: on-boarding processes are not effective for particular clients; controls are not always properly documented; processes tend to remain “tick box” exercises rather than identifying risks; and transaction monitoring systems generate overwhelming numbers of false positives.

But more fundamentally the way in which financial crime risks are currently managed does not provide the foundations for sustainable improvement towards more effective systems that are better able to prevent financial crime.

Internal structures are not efficient, with responsibilities overlapping across multiple teams. Different elements of oversight can be siloed, with change in one area (e.g. fraud or sanctions) not being pulled through to others (e.g. anti-money laundering). Fixing this requires top-down organisational change, but also changes in how financial crime officers work on the ground.

Backlogs will not come down without extra resource, whether hired externally or trained internally, but the Russia sanctions episode demonstrates that resourcing models also need to be able to flex to accommodate external “shocks” that otherwise draw significant resource away from business-as-usual processes.

Financial crime operating model reform must also incorporate technology strategy. Supervisors expect firms to be using technologies such as artificial intelligence (AI) and machine learning (ML), particularly given that supervisors are themselves using them, enabling them to explore significant volumes of data with analytics to target follow-up requests more accurately.

A central use case for new technology is to reduce the volumes of false positives in transaction monitoring systems to free up expert resource for higher value-added work. For firms not already moving in this direction, the starting point should be a review of existing systems to identify whether they enable new approaches; if they do not, firms should consider moving to tools which can. But the adoption of a new tool (and a potential change of vendor) is not a quick fix: a large institution could expect this process to take two years or more. Nor should these technologies be treated as “plug and play” – they depend on data quality, training, and proper governance, and should be subject to model risk management.

Preparing for the EU AMLA

On the regulatory side, countries across the EU will be gearing up to implement the new AML supervisory framework, the central pillar of which will be the new AMLA. Details of the new framework, including AMLA’s location once it is incorporated, should be finalised around the middle of 2023.

AMLA will not be operational until 2024 but, as was the case in the run-up to the formation of the European Central Bank’s Single Supervisory Mechanism, the impact of the new authority will be felt before its formal arrival as authorities across the continent anticipate higher standards and changes in home/host authority relationships. The Bank of Italy, for instance, has already set up a new AML unit that it has said will enable it to engage more effectively with the new regime, and regulatory fact-finding initiatives are likely to follow.1

Industry should therefore treat 2023 as a transition period in which to prepare itself for AMLA: issues that might be seen as tolerable weaknesses today could become “problems” under the new regime. Furthermore, firms should not underestimate the potential for additional standardisation to change workloads significantly if AMLA converges on tougher baseline expectations or higher frequencies for certain processes than some firms may be used to. Other jurisdictions may observe progress with the AMLA and contemplate setting up similar structures.

FCA areas of focus

In the UK, the FCA has made clear that firms should be working through the financial crime implications of the cost-of-living crisis,2 and the FCA will likely follow up on the issue in 2023.

The “Dear CEO” letter has become a favoured mechanism through which the FCA communicates expectations, with letters typically followed by supervisory visits 18 months to two years down the line. The October 2021 letter on trade finance is a case in point: firms should be prepared for the possibility of supervisory follow-ups in 2023.

The FCA expects firms to demonstrate understanding of specific high-risk lines of business, yet some firms find that they cannot articulate their risk exposures or controls for these areas.

Dear CEO letters should be treated as guides to future action, providing a basis from which to extrapolate to other high-risk areas of business. Digital assets is a particularly pertinent example, in need of scrutiny now to forestall what otherwise feels like an inevitable build-up of problems. The cost of falling short of expectations can include being subject to increasingly expensive, invasive and resource-intensive Section 166 Skilled Persons reports, while the likelihood of enforcement also increases over time as FCA patience with slow rates of remediation and transformation wears thin.

Authorised Push Payment (APP) scams

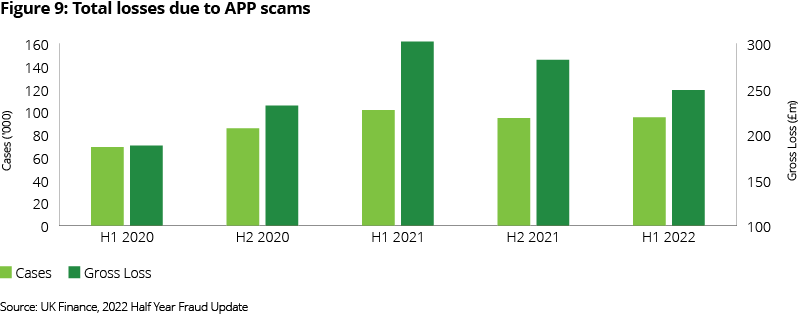

In the UK payments sector, the Payment Systems Regulator’s (PSR) proposed new policy of (near) mandatory reimbursement for APP scams, which continue to be a significant challenge for the industry, will likely be finalised around mid-year, with an expected implementation deadline during 2024 (subject to progress on the Financial Services and Markets Bill (FSMB), which will give the PSR the regulatory powers to do so). The new PSR reimbursement policy will sit alongside a wider set of measures, including the continued widespread rollout of Confirmation of Payee and the proposed publication of APP scam protection performance for the UK’s largest Payment Service Providers (PSPs) from summer 2023.

With the shift to a split liability model in which both senders and receivers of fraudulent payments are on the hook, and the proposed dispute resolution mechanism allowing for the costs of reimbursement to be re-allocated between firms “to better reflect the steps each PSP took to prevent the scam”,3 there are strong incentives for firms to invest in more sophisticated oversight of both outgoing and incoming payments.

But where large banks may have substantial data from which to build client profiles to distinguish between genuine and fraudulent activities, smaller PSPs may find themselves less equipped to make such distinctions within their relatively newer customer bases. Ensuring the availability of critical data points (such as fraud claim histories and device information), and selecting the right fraud detection vendor (for instance, those with consortium capabilities and access to broader intelligence such as suspect IP addresses), are crucial.

Rapid commercial growth has outstripped the capacity of some smaller PSPs to scale their risk management and controls. But regulators are unlikely to be sympathetic on this front, and there is no real substitute for using some of the financial capacity generated by business growth to improve fraud controls, even if that comes at the cost of greater friction for client payments.

Figure 1: Total losses due to APP scams4

Source: UK Finance, 2022 Half Year Fraud Update

Data quality challenges

Lastly, data quality remains a critical challenge, particularly at larger firms that have grown through acquisition. The difficulties of sharing data, an area in which the regulatory framework does not always facilitate the best outcomes, further complicate know-your-client and customer due diligence processes.

Regulatory efforts to address some of these data challenges will progress in the UK in 2023 through the Economic Crime Bill, including through reforms to boost the reliability of Companies House data. The UK is also moving towards a regime in which aspects of data protection laws can be disapplied where there are legitimate financial crime concerns, paving the way for greater cooperation between firms regarding problem clients, and the further development of data-related public-private partnerships.

It remains to be seen whether the EU’s efforts to promote convergence in financial crime supervision will facilitate similar levels of data sharing, or if the bloc aligns on a baseline that is more restrictive.

Actions for firms

All firms: invest in new capabilities

- Continued investment in financial crime capabilities is an absolute necessity, but firms should be looking at fundamental overhauls of existing ways of working by removing silos to join up AML, fraud and sanctions activities, developing better medium-term technology strategies, and changing resourcing models.

- Review financial crime technology strategies, beginning with an assessment of existing tools and capabilities. Where these do not enable the deployment of more advanced solutions capable of reducing workloads, firms should move to new tools, even where this entails a change of vendor relationships.

UK firms

- Expect the FCA to return to issues raised in recent Dear CEO letters in 2023. Firms should treat existing letters as indicators of future supervisory focus by extrapolating FCA commentary to other high-risk areas of business that will likely be the subject of future investigations.

EU firms

- Treat 2023 as a transition period in which to prepare for the arrival of AMLA, recognising that standards will likely rise across the bloc in anticipation of this.

Financial Markets Regulatory Outlook 2023

Endnotes

1 Speech by Ignazio Visco, Governor of the Bank of Italy, July 2022

2 Speech by Sarah Pritchard, FCA Executive Director, Markets, September 2022

3 PSR, Consultation paper on Authorised push payment (APP) scams: Requiring reimbursement, September 2022

4 UK Finance, Half Year Fraud Update 2022, October 2022