Inside the Internet of Things (IoT): A primer on the technologies building the IoT

22 August 2015

Explore the inner workings of the Internet of Things in this deep dive into some of the technologies that make it possible.

The Information Value Loop

If you’ve ever seen the “check engine” light come on in your car and had the requisite repairs done in a timely way, you’ve benefited from an early-stage manifestation of what today is known as the Internet of Things (IoT). Something about your car’s operation—an action—triggered a sensor,1 which communicated the data to a monitoring device. The significance of these data was determined based on aggregated information and prior analysis. The light came on, which in turn triggered a trip to the garage and necessary repairs.

In 1991 Mark Weiser, then of Xerox PARC, saw beyond these simple applications. Extrapolating trends in technology, he described “ubiquitous computing,” a world in which objects of all kinds could sense, communicate, analyze, and act or react to people and other machines autonomously, in a manner no more intrusive or noteworthy than how we currently turn on a light or open a tap.

Receive IoT insights

Subscribe

Explore the

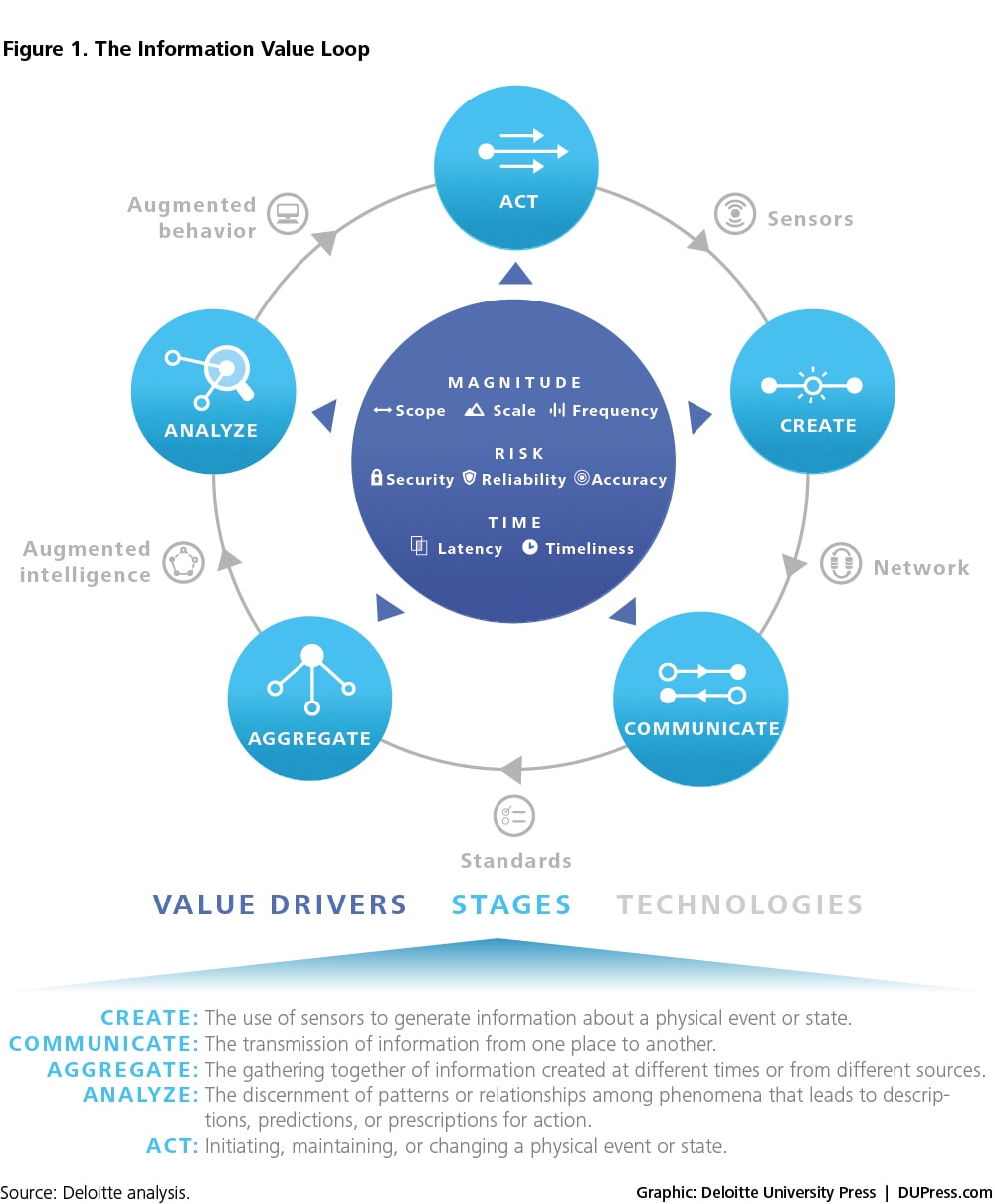

Internet of Things collection

One way of capturing the process implicit in Weiser’s model is as an Information Value Loop with discrete but connected stages. An action in the world allows us to create information about that action, which is then communicated and aggregated across time and space, allowing us to analyze those data in the service of modifying future acts.

Although this process is generic, it is perhaps increasingly relevant, for the future Weiser imagined is more and more upon us—not thanks to any one technological advance or even breakthrough but, rather, due to a confluence of improvements to a suite of technologies that collectively have reached levels of performance that enable complete systems relevant to a human-sized world.

As illustrated in figure 2 below, each stage of the value loop is connected to the subsequent stage by a specific set of technologies, defined below.

The business implications of the IoT are explored in an ongoing series of Deloitte reports. These articles examine the IoT’s impact on strategy, customer value, analytics, security, and a wide variety of specific applications. Yet just as a good chef should have some understanding of how the stove works, managers hoping to embed IoT-enabled capabilities in their strategies are well served to gain a general understanding of the technologies themselves.

To that end, this document serves as a technical primer on some of the technologies that currently drive the IoT. Its structure follows that of the technologies that connect the stages of the Information Value Loop: sensors, networks, standards, augmented intelligence, and augmented behavior. Each section in the report provides an overview of the respective technology—including factors that drive adoption as well as challenges that the technology must overcome to achieve widespread adoption. We also present an end-to-end IoT technology architecture that guides the development and deployment of Internet of Things systems. Our intent, in this primer, is not to describe every conceivable aspect of the IoT or its enabling technologies but, rather, to provide managers an easy reference as they explore IoT solutions and plan potential implementations. Our hope is that this report will help demystify the underlying technologies that comprise the IoT value chain and explain how these technologies collectively relate to a larger strategic framework.

Sensors

An overview

Most “things,” from automobiles to Zambonis, the human body included, have long operated “dark,” with their location, position, and functional state unknown or even unknowable. The strategic significance of the IoT is born of the ever-advancing ability to break that constraint, and to create information, without human observation, in all manner of circumstances that were previously invisible. What allows us to create information from action is the use of sensors, a generic term intended to capture the concept of a sensing system comprising sensors, microcontrollers, modem chips, power sources, and other related devices.

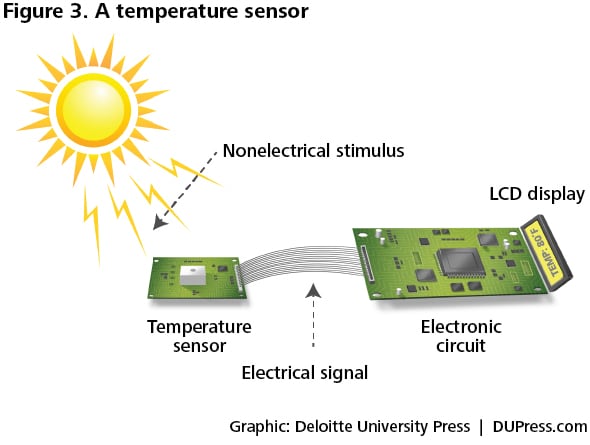

A sensor converts a non-electrical input into an electrical signal that can be sent to an electronic circuit. The Institute of Electrical and Electronics Engineers (IEEE) provides a formal definition:

An electronic device that produces electrical, optical, or digital data derived from a physical condition or event. Data produced from sensors is then electronically transformed, by another device, into information (output) that is useful in decision making done by “intelligent” devices or individuals (people).7

The technological complement to a sensor is an actuator, a device that converts an electrical signal into action, often by converting the signal to nonelectrical energy, such as motion. A simple example of an actuator is an electric motor that converts electrical energy into mechanical energy. Sensors and actuators belong to the broader category of transducers: A sensor converts energy of different forms into electrical energy; a transducer is a device that converts one form of energy (electrical or not) into another (electrical or not). For example, a loudspeaker is a transducer because it converts an electrical signal into a magnetic field and, subsequently, into acoustic waves.

Different sensors capture different types of information. Accelerometers measure linear acceleration, detecting whether an object is moving and in which direction,8 while gyroscopes measure rotation (angular velocity) about multiple axes, which helps track an object’s orientation. By combining multiple sensors, each serving a different purpose, it is possible to build complex value loops that exploit many different types of information. For example:

- Canary: A home security system that comes with a combination of temperature, motion, light, and humidity sensors. Computer vision algorithms analyze patterns in behaviors of people and pets, while machine learning algorithms improve the accuracy of security alerts over time.9

- Thingsee: A do-it-yourself IoT device that individuals can use to combine sensors such as accelerometers, gyroscopes, and magnetometers with other sensors that measure temperature, humidity, pressure, and light in order to collect personally interesting data.10
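The Canary and Thingsee descriptions do not say how their sensor readings are combined. As a generic illustration of why combining sensors helps, the sketch below uses a complementary filter, one common technique (not necessarily the one either product uses) for fusing a gyroscope with an accelerometer: the gyroscope tracks quick rotations but drifts as its errors accumulate, while the accelerometer’s view of gravity is noisy but does not drift. The sample data and the 0.98 weighting are hypothetical.

```python
import math

def accel_tilt_deg(ax: float, az: float) -> float:
    """Tilt angle (degrees) inferred from how gravity splits across two accelerometer axes."""
    return math.degrees(math.atan2(ax, az))

def complementary_filter(samples, dt: float, alpha: float = 0.98) -> list[float]:
    """Fuse gyroscope rate with accelerometer tilt.

    samples: sequence of (gyro_rate_deg_per_s, ax, az) tuples.
    The gyro term follows fast motion but drifts; the accelerometer term
    is noisy but drift-free. alpha sets how much weight the gyro gets.
    """
    angle = accel_tilt_deg(samples[0][1], samples[0][2])  # start from gravity
    estimates = []
    for gyro_rate, ax, az in samples:
        gyro_angle = angle + gyro_rate * dt               # integrate angular rate
        angle = alpha * gyro_angle + (1 - alpha) * accel_tilt_deg(ax, az)
        estimates.append(angle)
    return estimates

# Hypothetical data: device held still at 30 degrees, sampled at 100 Hz for 10 s,
# with a gyroscope that wrongly reports a constant 0.5 deg/s.
tilt = math.radians(30)
samples = [(0.5, math.sin(tilt), math.cos(tilt))] * 1000
est = complementary_filter(samples, dt=0.01)
print(est[-1])  # settles near 30 degrees; integrating the gyro alone would drift to about 35
```

The accelerometer term continually pulls the estimate back toward the true tilt, so the gyroscope’s bias leaves only a small, bounded offset instead of an ever-growing error.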

Types of sensors

Sensors are often categorized by where the energy they sense comes from: active versus passive. Active sensors emit energy of their own and then sense the environment’s response to that energy. Radio Detection and Ranging (RADAR) is an example of active sensing: A RADAR unit emits an electromagnetic signal that bounces off a physical object and is “sensed” by the RADAR system. Passive sensors simply receive energy (in whatever form) produced outside the sensing device. A standard camera contains a passive sensor: it receives signals in the form of light and records them on a storage device.

Passive sensors require less energy, but active sensors can be used in a wider range of environmental conditions. For example, RADAR provides day and night imaging capacity undeterred by clouds and vegetation, while cameras require light provided by an external source.11
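The time-of-flight principle behind active sensing such as RADAR reduces to one line of arithmetic: the signal travels to the object and back, so the distance is half the round-trip path. A minimal sketch, assuming an idealized single echo in free space (the function name is illustrative):

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # exact, by definition of the metre

def radar_range_m(round_trip_s: float) -> float:
    """Distance to a target from the echo's round-trip time.

    The pulse travels out and back, so the one-way distance is half the path.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2

print(radar_range_m(1e-6))  # about 149.9 m for a 1-microsecond echo
```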

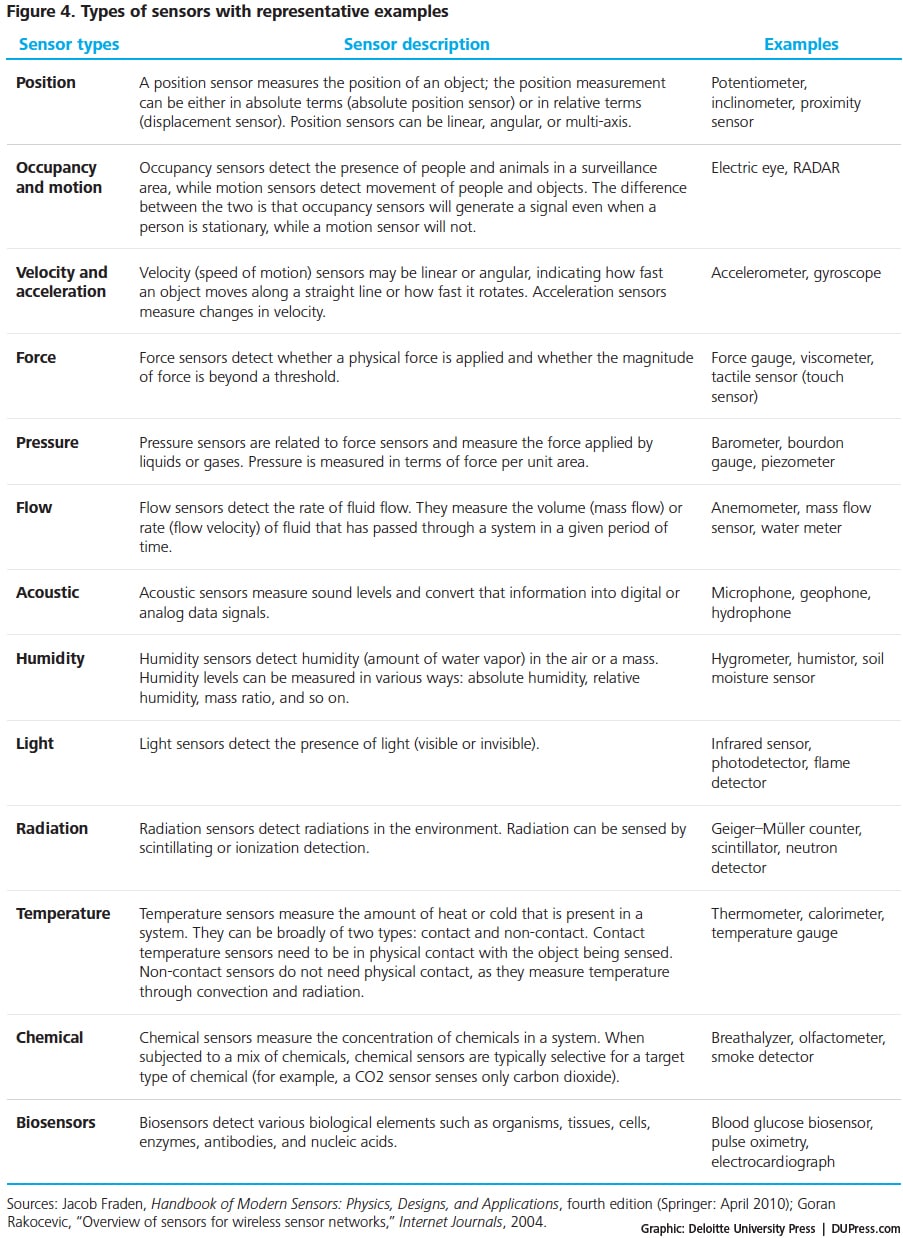

Figure 4 provides an illustrative list of 13 types of sensors based on the functions they perform; they could be active or passive per the description above.

Of course, the choice of a specific sensor is primarily a function of the signal to be measured (for example, position versus motion sensors). There are, however, several generic factors that determine the suitability of a sensor for a specific application. These include, but are not limited to, the following:12

- Accuracy: A measure of how closely a sensor’s reported value matches the true value of the signal. For example, when the water content is 52 percent, a sensor that reports 52.1 percent is more accurate than one that reports 51.5 percent.

- Repeatability: A sensor’s performance in consistently reporting the same response when subjected to the same input under constant environmental conditions.

- Range: The band of input signals within which a sensor can perform accurately. Input signals beyond the range lead to inaccurate output signals and potential damage to sensors.

- Noise: The fluctuations in the output signal resulting from the sensor or the external environment.

- Resolution: The smallest incremental change in the input signal that the sensor requires to sense and report a change in the output signal.

- Selectivity: The sensor’s ability to selectively sense and report a signal. An example of selectivity is an oxygen sensor’s ability to sense only the O2 component despite the presence of other gases.

Any of these factors can impact the reliability of the data received and therefore the value of the data itself.
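Several of the factors above can be quantified from test readings. The sketch below is a simplified illustration, not a calibration procedure: accuracy as absolute error against a known true value (using the water-content example), repeatability as the standard deviation of repeated readings, and resolution as the step size of an ideal analog-to-digital converter, which is only one of several limits on real resolution. The repeated readings and the 10-bit, 0 to 100 percent converter are hypothetical.

```python
import statistics

def accuracy_error(reading: float, true_value: float) -> float:
    """Accuracy: absolute distance between a reading and the true value."""
    return abs(reading - true_value)

def repeatability(readings: list[float]) -> float:
    """Repeatability: spread (sample standard deviation) of repeated readings of one input."""
    return statistics.stdev(readings)

def adc_resolution(full_scale: float, bits: int) -> float:
    """Resolution of an ideal N-bit converter: smallest distinguishable input step."""
    return full_scale / 2 ** bits

# Water content is truly 52 percent.
print(accuracy_error(52.1, 52.0), accuracy_error(51.5, 52.0))  # roughly 0.1 vs 0.5
# Hypothetical repeated readings of the same 52 percent sample.
print(repeatability([52.1, 52.0, 52.2, 52.1]))
# Hypothetical 10-bit converter spanning 0 to 100 percent.
print(adc_resolution(100.0, 10))  # 0.09765625
```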

Factors driving adoption within the IoT

There are three primary factors driving the deployment of sensor technology: price, capability, and size. As sensors get less expensive, “smarter,” and smaller, they can be used in a wider range of applications and can generate a wider range of data at a lower cost.13

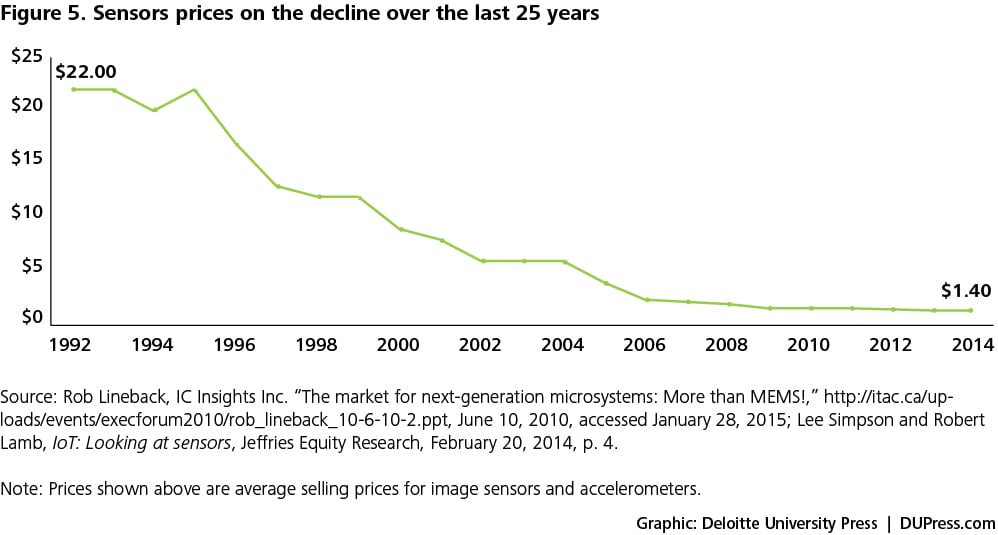

- Cheaper sensors: The price of sensors has consistently fallen over the past several years as shown in figure 5, and these price declines are expected to continue into the future.14 For example, the average cost of an accelerometer now stands at 40 cents, compared to $2 in 2006.15 Sensors vary widely in price, but many are now cheap enough to support broad business applications.

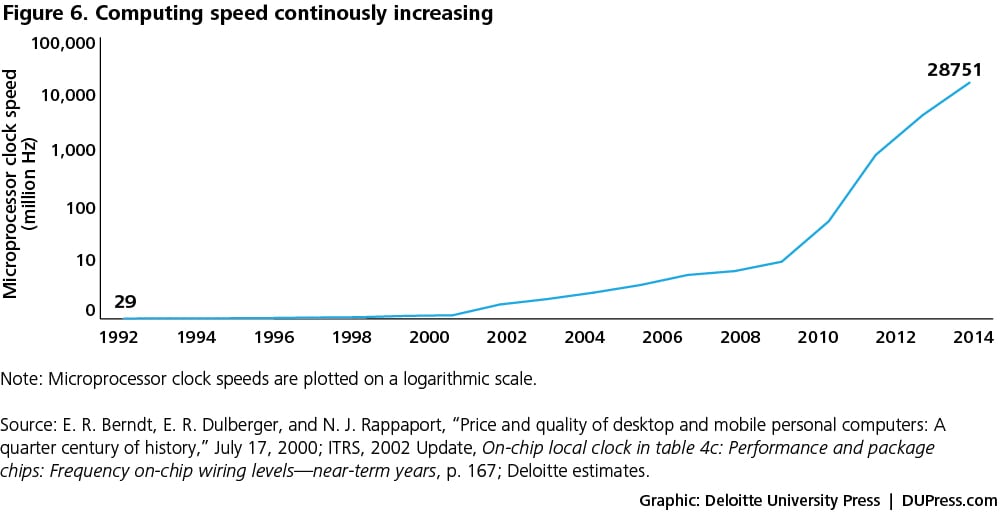

- Smarter sensors: As discussed earlier, a sensor does not function by itself—it is a part of a larger system that comprises microprocessors, modem chips, power sources, and other related devices. Over the last two decades, microprocessors’ computational power has improved, doubling every three years (see figure 6).

- Smaller sensors: There has been a rapid growth in the use of smaller sensors that can be embedded in smartphones and wearables. Micro-electro-mechanical systems (MEMS) sensors—small devices that combine digital electronics and mechanical components—have the potential to drive wider IoT applications.16 The average number of sensors on a smartphone has increased from three (accelerometer, proximity, ambient light) in 2007 to at least ten (including advanced sensors such as fingerprint- and gesture-based sensors) in 2014. Similarly, biosensors that can be worn and even ingested present new opportunities for the health care industry.
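The figures in this list translate into rough rates of change. The sketch below assumes the 40-cent accelerometer price applies to 2015 (the article’s date; the text says only “now”) and treats the three-year doubling of computational power as exact over a 20-year span; both are simplifications.

```python
def total_decline(old: float, new: float) -> float:
    """Fractional drop from the old price to the new one."""
    return 1 - new / old

def annual_rate(old: float, new: float, years: float) -> float:
    """Compound annual rate of change (negative means a decline)."""
    return (new / old) ** (1 / years) - 1

def doubling_factor(years: float, doubling_period: float) -> float:
    """Growth multiple after `years` if capability doubles every `doubling_period` years."""
    return 2 ** (years / doubling_period)

# Accelerometer: $2 in 2006 to $0.40, assumed here to be in 2015.
print(f"{total_decline(2.0, 0.40):.0%} total, {annual_rate(2.0, 0.40, 9):.1%} per year")
# prints: 80% total, -16.4% per year

# Computational power doubling every three years over two decades.
print(f"about {doubling_factor(20, 3):.0f}x")  # prints: about 102x
```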

Challenges and potential solutions

Even in cases where sensors are sufficiently small, smart, and inexpensive, challenges remain. Among them are power consumption, data security, and interoperability.

- Power consumption: Sensors are powered either through in-line connections or batteries.17 In-line power sources are constant but may be impractical or expensive in many instances. Batteries may represent a convenient alternative, but battery life, charging, and replacement, especially in remote areas, may represent significant issues.18 There are two dimensions to power:

  - Efficiency: Thanks to advanced silicon technologies, some sensors can now stay live on batteries for over 10 years, thus reducing battery replacement cost and effort.19 However, improved efficiency is counterbalanced by the power needed for increased numbers of sensors. Hence, systems’ overall power consumption often does not decrease or may, in fact, increase; this is an underlying challenge, as both energy and financial resources are finite.

  - Source: While sensors often depend on batteries, harvesting energy from alternative sources such as sunlight may offer an alternative or, at a minimum, keep sensors running while their batteries are being changed.20 However, currently available energy harvesters are expensive, and companies are hesitant to make that investment (installation plus maintenance costs) given the unreliability of the alternative power supply.21

- Security of sensors: Executives considering IoT deployments often cite security as a key concern.22 Tackling the problem at the source may be a logical approach. Complex cryptographic algorithms might ensure data integrity, though sensors’ relatively low processing power, the low memory available to them, and concerns about power consumption may all limit the ability to provide this security. Companies need to be mindful of the constraints involved as they plan their IoT deployments.23

- Interoperability: Most of the sensor systems currently in operation are proprietary and are designed for specific applications. This leads to interoperability issues in heterogeneous sensor systems related to communication, exchange, storage and security of data, and scalability. Communication protocols are required to facilitate communication between heterogeneous sensor systems. Due to various limitations such as low processing power, memory capacity, and power availability at the sensor level, lightweight communication protocols are preferable.24 Constrained Application Protocol (CoAP) is an open protocol, standardized by the Internet Engineering Task Force (IETF), that transfers data packets in a format that is lighter than that of other protocols such as Hypertext Transfer Protocol (HTTP), a protocol familiar to many, as it appears in most web addresses. While CoAP is well suited for energy-constrained sensor systems, it does not come with built-in security features, and additional protocols are needed to secure communications between sensor systems.25
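To make “lighter than HTTP” concrete, the sketch below hand-encodes a CoAP GET request following the message layout in RFC 7252 (a 4-byte fixed header, an optional token, then delta-encoded options) and compares its size with a minimal HTTP/1.1 request for the same resource. The resource path /sensors/temp and the host example.com are hypothetical, the encoder supports only what this example needs, and a real deployment would use an existing CoAP library rather than hand-built bytes.

```python
import struct

CON, NON, ACK, RST = 0, 1, 2, 3   # CoAP message types
GET = 0x01                        # code 0.01
URI_PATH = 11                     # option number for Uri-Path

def _nibble(value: int) -> tuple[int, bytes]:
    """Encode an option delta or length as a 4-bit nibble plus extended bytes."""
    if value < 13:
        return value, b""
    if value < 269:
        return 13, bytes([value - 13])
    return 14, (value - 269).to_bytes(2, "big")

def encode_coap(code, message_id, options, msg_type=CON, token=b"", payload=b""):
    """Build a CoAP message; `options` is a list of (option_number, bytes) pairs."""
    if len(token) > 8:
        raise ValueError("token must be at most 8 bytes")
    # Version 1 in the top two bits, then type, then token length.
    first = (1 << 6) | (msg_type << 4) | len(token)
    out = bytearray(struct.pack("!BBH", first, code, message_id) + token)
    prev = 0
    for number, value in sorted(options, key=lambda o: o[0]):  # stable sort keeps path order
        delta_nib, delta_ext = _nibble(number - prev)
        len_nib, len_ext = _nibble(len(value))
        out.append((delta_nib << 4) | len_nib)
        out += delta_ext + len_ext + value
        prev = number
    if payload:
        out += b"\xff" + payload  # payload marker precedes the payload
    return bytes(out)

path = [(URI_PATH, seg.encode()) for seg in "sensors/temp".split("/")]
coap = encode_coap(GET, 0x1234, path)
http = b"GET /sensors/temp HTTP/1.1\r\nHost: example.com\r\n\r\n"
print(len(coap), len(http))  # 17 49
```

Most of CoAP’s saving comes from binary, delta-encoded fields in place of text headers; CoAP also typically runs over UDP rather than TCP, which this size comparison does not capture.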

Networks

An overview

Information that sensors create rarely attains its maximum value at the time and place of creation. The signals from sensors often must be communicated to other locations for aggregation and analysis. This typically involves transmitting data over a network.

Sensors and other devices are connected to networks using various networking devices such as hubs, gateways, routers, network bridges, and switches, depending on the application. For example, laptops, tablets, mobile phones, and other devices are often connected to a network, such as Wi-Fi, using a networking device (in this case, a Wi-Fi router).

The first step in the process of transferring data from one machine to another via a network is to uniquely identify each of the machines. The IoT requires a unique name for each of the “things” on the network. Network protocols are a set of rules that define how computers identify each other. Broadly, network protocols can be proprietary or open. Proprietary network protocols allow identification and authorization of machines with specific hardware and software, making customization easier and allowing manufacturers to differentiate their offerings. Open protocols allow interoperability across heterogeneous devices, thus improving scalability.26

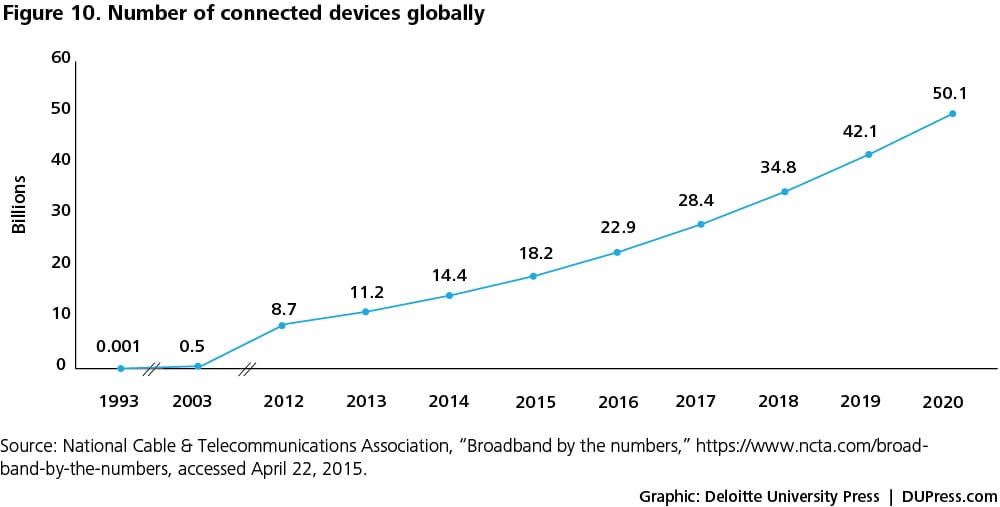

Internet Protocol (IP) is an open protocol that provides unique addresses to Internet-connected devices; currently, there are two versions of IP: IP version 4 (IPv4) and IP version 6 (IPv6). IP was used to address computers before it began to be used to address other devices. IPv4 uses 32-bit addresses, for a capacity of about 4.3 billion (2^32), and about 4 billion of them have already been used. IPv6 uses 128-bit addresses and offers far greater scalability, with approximately 3.4 × 10^38 unique addresses. Since the number of devices connected to the Internet is estimated to be 26 billion as of 2015 and projected to grow to 50 billion or more by 2020, the adoption of IPv6 has served as a key enabler of the IoT.
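The size of each address space follows directly from its address length: 32 bits for IPv4 and 128 bits for IPv6. Python’s standard `ipaddress` module can confirm this by counting the addresses in each protocol’s entire range. Note that these totals include reserved and private ranges, so the number of usable public addresses is somewhat smaller.

```python
import ipaddress

ipv4_total = ipaddress.IPv4Network("0.0.0.0/0").num_addresses  # 2**32
ipv6_total = ipaddress.IPv6Network("::/0").num_addresses       # 2**128

print(f"IPv4: {ipv4_total:,} addresses (about {ipv4_total / 1e9:.1f} billion)")
print(f"IPv6: {ipv6_total:.2e} addresses")

projected_devices = 50_000_000_000  # 2020 projection cited in the text
# True: IPv4 alone cannot give every projected device its own address.
print(projected_devices > ipv4_total)
```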

Enabling network technologies

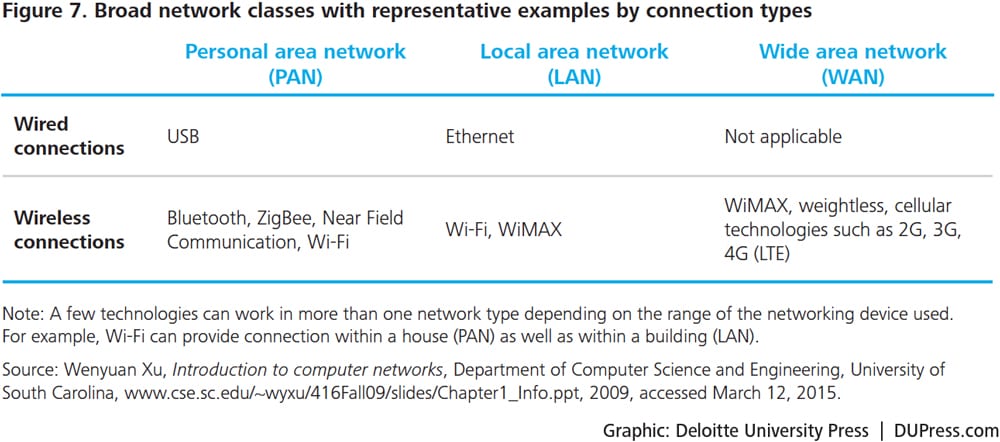

Network technologies are classified broadly as wired or wireless. As users and devices move continuously, wireless networks offer the convenience of almost continuous connectivity, while wired connections remain useful for routes that require greater reliability, security, and data volume.27

The choice of a network technology depends largely on the geographical range to be covered. When data have to be transferred over short distances (for example, inside a room), devices can use wireless personal area network (PAN) technologies such as Bluetooth and ZigBee as well as wired connections through technologies such as Universal Serial Bus (USB). When data have to be transferred over a relatively larger area such as an office, devices could use local area network (LAN) technologies. Examples of wired LAN technologies include Ethernet and fiber optics. Wireless LAN networks include technologies such as Wi-Fi. When data are to be transferred over a wider area beyond buildings and cities, a wide area network (WAN) is set up by connecting a number of local area networks through routers. The Internet is an example of a WAN.

Data transfer rates and energy requirements are two key considerations when selecting a network technology for a given application. Technologies such as 4G (LTE, LTE-A) and, once it arrives, 5G are favorable for IoT applications, given their high data transfer rates. Technologies such as Bluetooth Low Energy and Low Power Wi-Fi are well suited for energy-constrained devices.

Below, we discuss select wireless network technologies that could be used for IoT applications. For each of the following technologies, we discuss bandwidth rates, recent advances, and limitations. The technologies discussed below are representative, and the choice of an appropriate technology depends on the application at hand and the features of that technology.

Bluetooth and Bluetooth Low Energy

Introduced in 1999, Bluetooth is a wireless technology known for its ability to transfer data over short distances in personal area networks.28 Bluetooth Low Energy (BLTE) is a recent addition to the Bluetooth family and consumes about half the power of a Bluetooth Classic device, the original version of Bluetooth.29 The energy efficiency of BLTE is attributable to the shorter scanning time needed for BLTE devices to detect other devices: 0.6 to 1.2 milliseconds (ms) compared to 22.5 ms for Bluetooth Classic.30 In addition, efficient data transfer in the transmitting and receiving states gives BLTE higher energy efficiency than Bluetooth Classic. Higher energy efficiency comes at the cost of lower data rates: BLTE supports 260 kilobits per second (Kbps), while Bluetooth Classic supports up to 2.1 megabits per second (Mbps).31

Existing penetration, coupled with low device costs, positions BLTE as a technology well suited for IoT applications. However, interoperability is the persistent bottleneck here as well: BLTE is compatible with only the relatively newer dual-mode Bluetooth devices (called dual mode because they support BLTE as well as Bluetooth Classic), not the legacy Bluetooth Classic devices.32

Wi-Fi and Low Power Wi-Fi

Ethernet, a wired LAN technology, has been in use since the 1970s; Wi-Fi is a more recent wireless technology that is widely popular and known for its high data transfer rates in personal and local area networks.

Typically, Wi-Fi devices keep latency, or delays in the transmission of data, low by remaining active even when no data are being transmitted. Such Wi-Fi connections are often set up with a dedicated power line or batteries that need to be charged after a couple of hours of use. Higher-cost, lower-power Wi-Fi devices “sleep” when not transmitting data and need just 10 milliseconds to “wake up” when called upon.33 Low Power Wi-Fi with batteries can be used for remote sensing and control applications.

Worldwide Interoperability for Microwave Access (WiMAX) and WiMAX 2

Introduced in 2001, WiMAX was developed by the European Telecommunications Standards Institute (ETSI) in cooperation with IEEE. WiMAX 2 is the latest technology in the WiMAX family. WiMAX 2 offers a maximum data rate of 1 Gbps, compared with 100 Mbps for WiMAX.34

In addition to higher data speeds, WiMAX 2 has better backward compatibility than WiMAX: WiMAX 2 network operators can provide seamless service by using 3G or 2G networks when required. By way of comparison, Long Term Evolution (LTE) and LTE-A, described below, also allow backward compatibility.

Long Term Evolution (LTE) and LTE-Advanced

Long Term Evolution, a wireless wide-area network technology, was developed by members of the 3rd Generation Partnership Project (3GPP) in 2008. This technology offers data speeds of up to 300 Mbps.35

LTE-Advanced (LTE-A) is a recent addition to the LTE technology that offers still-higher data rates of 1 Gbps, compared with 300 Mbps for LTE.36 There is debate among industry practitioners on whether LTE is truly a 4G technology: Many consider LTE a pre-4G technology and LTE-A a true 4G technology.37 Given its high bandwidth and low latency, LTE is touted as one of the more promising technologies for IoT applications; however, the underlying network infrastructure remains under development, as described in the challenges below.

Weightless

Weightless is a wireless open-standard WAN technology introduced in early 2014. Weightless uses dynamic spectrum allocation to transfer data over unused bandwidth originally intended for TV broadcast; signals in this band can travel longer distances and penetrate walls.38

Weightless can provide data rates between 2.5 Kbps and 16 Mbps in a wireless range of up to five kilometers, with batteries lasting up to 10 years.39 Weightless devices remain in standby mode, waking up every 15 minutes and staying active for 100 milliseconds to sync up and act on any messages; this introduces some latency.40 Given these characteristics, Weightless connections appear to be better suited for delivering short messages in widespread machine-to-machine communications.
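The Weightless wake-up schedule illustrates why duty cycling stretches battery life: a radio that is awake for 100 ms every 15 minutes is active only about 0.01 percent of the time, so the average current is dominated by the sleep current. A back-of-the-envelope sketch follows; the battery capacity and current draws are hypothetical placeholders, not published Weightless figures, and real devices also lose charge to self-discharge and retransmissions.

```python
def duty_cycle(active_s: float, period_s: float) -> float:
    """Fraction of time the radio is awake."""
    return active_s / period_s

def battery_life_years(capacity_mah, active_ma, sleep_ma, active_s, period_s):
    """Estimate life of a device that wakes for active_s once every period_s.

    Ignores battery self-discharge, temperature effects, and transmit retries.
    """
    d = duty_cycle(active_s, period_s)
    avg_ma = d * active_ma + (1 - d) * sleep_ma  # time-weighted average current
    return capacity_mah / avg_ma / (24 * 365)     # hours -> years

# Wake every 15 minutes for 100 ms. The 2,400 mAh battery, 20 mA active
# current, and 0.02 mA sleep current are hypothetical values.
d = duty_cycle(0.1, 15 * 60)
life = battery_life_years(2400, 20, 0.02, 0.1, 15 * 60)
always_on_days = 2400 / 20 / 24  # the same battery with the radio never sleeping
print(f"duty cycle {d:.6f}, about {life:.1f} years vs {always_on_days:.0f} days always on")
```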

Factors driving adoption within the IoT

Networks are able to transfer data at higher speeds, at lower costs, and with lower energy requirements than ever before. Also, with the introduction of IPv6, the number of connected devices is rising rapidly. As a result, we are seeing an increasingly diverse composition of connected devices, from laptops and smartphones to home appliances, vehicles, traffic signals, and wind turbines. Such diversity in the nature of connected devices is driving a wider-scale adoption of an extensive range of network technologies.

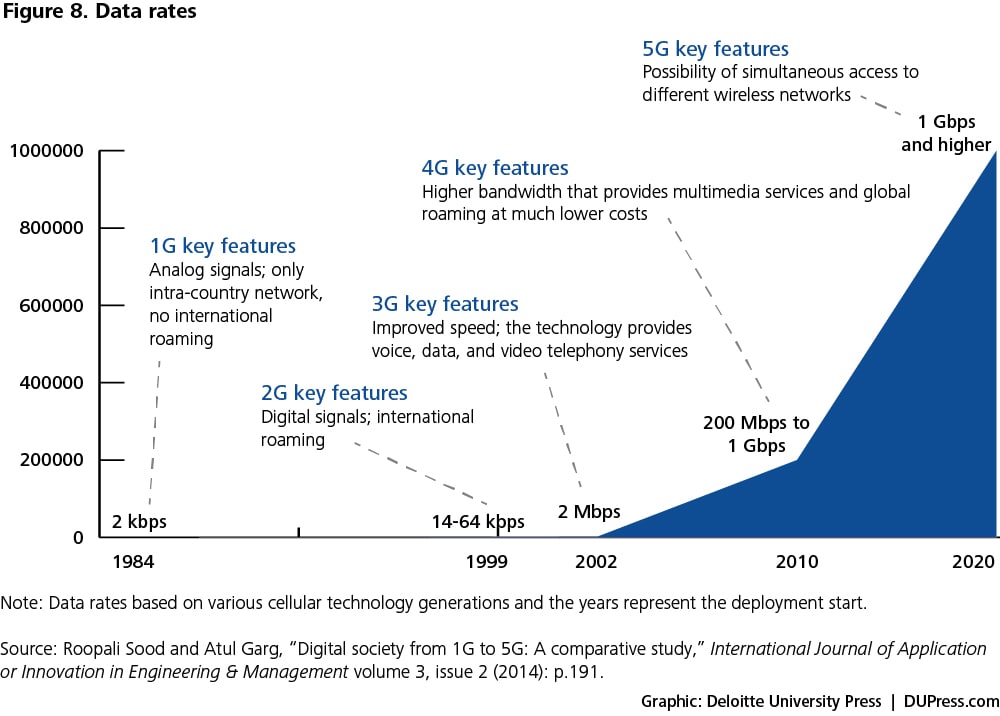

- Data rates: In the last 30 years, data rates have increased from 2 Kbps to 1 Gbps, facilitating seamless transfer of heavy data files (see figure 8 for various cellular-technology generations). The transition from the first cellular generation to the second changed the way communication messages were sent—from analog signals to digital signals. The transition from the second to the third generation marked a leap in capability, enabling users to share multimedia content over high-speed connections.

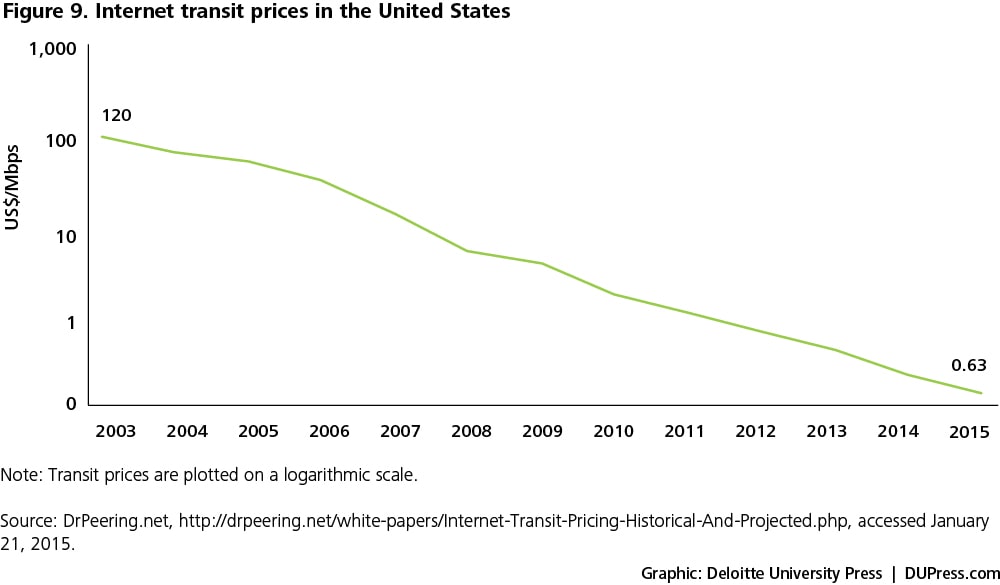

- Internet transit prices: The Internet transit price is the price charged by an Internet service provider (ISP) to transfer data from one point in the network to another. Since no single ISP can cover the worldwide network, the ISPs rely on each other to transfer data using network interconnections through gateways.41 Internet transit prices have come down in recent years due to technology developments such as the global increase in submarine cabling, the rising use of wavelength division multiplexing by ISPs, the transition to higher-capacity bandwidth connections, and increased competition between ISPs.42 In 2003, the price of 1 Mbps of transit capacity in the United States was $120; as of 2015, it has come down to 63 cents (see figure 9).

- Power efficiency: Availability of power-efficient networks is critical given the increase in the number of connected devices. Bluetooth Low Energy has a power consumption of 0.153 μW/bit (0.153 microwatts consumed in transferring 1 bit of data; 1 byte = 8 bits), about 50 percent lower than that of Bluetooth Classic.43

- IPv6 adoption: Given IPv6’s massive identification space, new devices are typically IPv6-based, while companies are transitioning existing devices from IPv4 to IPv6. In 2015, according to Cisco, the number of IPv6-capable websites increased by 33 percent over the prior year.44 By 2018, 50 percent of all fixed and mobile device connections are expected to be IPv6-based, compared to 16 percent in 2013.45
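The data-rate and transit-price figures above can be made concrete with simple arithmetic. The sketch below computes idealized transfer times for a hypothetical 1 MB file at several of the rates mentioned in this section, ignoring protocol overhead and latency, and the ratio between the 2003 and 2015 transit prices.

```python
def transfer_seconds(size_bytes: int, rate_bps: float) -> float:
    """Idealized transfer time: ignores protocol overhead, latency, and retransmissions."""
    return size_bytes * 8 / rate_bps

payload = 1_000_000  # a hypothetical 1 MB file
rates = {
    "2 Kbps (30 years ago)": 2e3,
    "260 Kbps (BLTE)": 260e3,
    "2.1 Mbps (Bluetooth Classic)": 2.1e6,
    "1 Gbps (LTE-A, WiMAX 2)": 1e9,
}
for label, bps in rates.items():
    print(f"{label}: {transfer_seconds(payload, bps):,.3f} s")

# Transit price per Mbps fell from $120 (2003) to $0.63 (2015).
print(f"transit is about {120 / 0.63:.0f} times cheaper")
```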

Challenges and potential solutions

Even though network technologies have improved in terms of higher data rates and lower costs, there are challenges associated with interconnections, penetration, security, and power consumption.

- Interconnections: Metcalfe’s Law states that “the value of a network is proportional to the square of the number of compatibly communicating devices.” Connecting devices to the Internet alone creates limited value; companies can create greater value by connecting devices to the network and to each other. Different network technologies require gateways to connect with each other, which adds cost and complexity and can make security management more difficult.

- Network penetration: There is limited penetration of high-bandwidth technologies such as LTE and LTE-A, while 5G technology has yet to arrive.46 Currently, LTE accounts for only 5 percent of the world’s total mobile connections. LTE penetration as a percentage of connections is 69 percent in South Korea, 46 percent in Japan, and 40 percent in the United States, but its penetration in the developing world stands at just 2 percent.47 In emerging markets, network operators are treading the slow-and-steady path to LTE infrastructure, given the accompanying high costs and their focus on fully reaping the returns on the investments in 3G technology that they made in the last three to five years.

- Security: With a growing number of sensor systems being connected to the network, there is an increasing need for effective authentication and access control. The Internet Protocol Security (IPSec) suite provides a certain level of secured IP connection between devices; however, there are outstanding risks associated with the security of one or more devices being compromised and the impact of such breaches on connected devices.48 Maintaining data integrity while remaining energy efficient stands as an enduring challenge.

- Power: Devices connected to a network consume power, and providing a continuous power source is a pressing concern for the IoT. Depending on the application, a combination of techniques such as power-aware routing and sleep-scheduling protocols can help improve power management in networks. Power-aware routing protocols determine the routing decision based on the most energy-efficient route for transmitting data packets; sleep-scheduling protocols define how devices can “sleep” and remain inactive for better energy efficiency without impacting the output.
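Power-aware routing, as described above, can be sketched as a shortest-path search in which each link’s weight is the energy needed to send a packet across it. The sketch below uses Dijkstra’s algorithm for that purpose; the network, node names, and energy costs are hypothetical, and actual power-aware routing protocols often weigh additional factors, such as each node’s remaining battery, that are not modeled here.

```python
import heapq

def min_energy_route(links: dict, source: str, target: str):
    """Dijkstra's shortest path where each link's weight is its energy cost.

    links maps node -> {neighbor: energy_cost}. Returns (total_cost, path),
    or (inf, []) if the target is unreachable.
    """
    queue = [(0.0, source, [source])]
    settled = {}
    while queue:
        cost, node, path = heapq.heappop(queue)
        if node == target:
            return cost, path
        if node in settled and settled[node] <= cost:
            continue  # already reached this node more cheaply
        settled[node] = cost
        for neighbor, energy in links.get(node, {}).items():
            heapq.heappush(queue, (cost + energy, neighbor, path + [neighbor]))
    return float("inf"), []

# Hypothetical sensor S reaching gateway G: one long, costly hop versus
# several short relay hops (e.g., millijoules per packet).
links = {
    "S": {"G": 9.0, "A": 2.0},
    "A": {"B": 2.0, "G": 6.0},
    "B": {"G": 2.0},
}
print(min_energy_route(links, "S", "G"))  # (6.0, ['S', 'A', 'B', 'G'])
```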

Standards

An overview

The third stage in the Information Value Loop—aggregate—refers to a variety of activities including data handling, processing, and storage. Data collected by sensors in different locations are aggregated so that meaningful conclusions can be drawn. Aggregation increases the value of data by increasing, for example, the scale, scope, and frequency of data available for analysis. Aggregation is achieved through the use of various standards depending on the IoT application at hand. According to the International Organization for Standardization (ISO), “a standard is a document that provides requirements, specifications, guidelines or characteristics that can be used consistently to ensure that materials, products, processes and services are fit for their purpose.”49

Two broad types of standards relevant for the aggregation process are technology standards (including network protocols, communication protocols, and data-aggregation standards) and regulatory standards (related to security and privacy of data, among other issues).

We discuss technology standards in the “Enabling technology standards” discussion later in this section. Regulatory standards, the second type, will play an important role in shaping the IoT landscape. There is a need for clear regulations related to the collection, handling, ownership, use, and sale of data. Within the context of expanding IoT applications, it is worthwhile to consider the US Federal Trade Commission’s privacy and security recommendations, known as the Fair Information Practice Principles (FIPPs) and described below.50

- Choice and notice: The principle of choice and notice states that entities that collect data should give users the option to choose what they reveal and notify users when their personal information is being recorded. This may not be required for IoT applications that aggregate information not linked to any specific individual.

- Purpose specification and use limitation: This principle states that entities collecting data must clearly state the purpose to the authority that permits the collection of those data. The use of data must be limited to the purpose specified, although this might hinder creative uses of collected data sets in various IoT applications.

- Data minimization: The principle of data minimization suggests that a company should collect only the data required for a specific purpose and delete those data after the intended use. This necessarily restricts the scope of analysis that can result from slicing and dicing the IoT data.

- Security and accountability: This principle states that entities that collect and store data are accountable and must deploy security systems to avoid any unauthorized access, modification, deletion, or use of the data.

It is unclear, as of now, who will design, develop, and implement any regulatory standards specifically tailored to IoT applications. There is discussion about the appropriateness of existing guidelines and whether they are adequate for evolving IoT applications. For example, the US Health Insurance Portability and Accountability Act (HIPAA) governs the protection of medical information collected by doctors, hospitals, and insurance companies.51 However, the act does not extend to information collected through personal wearable devices.52

Enabling technology standards

We just discussed the issues related to regulatory standards. Technology standards, the first type, comprise three elements: network protocols, communication protocols, and data-aggregation standards. Network protocols define how machines identify each other, while communication protocols provide a set of rules or a common language for devices to communicate. Once the devices are “talking” to each other and sharing data, aggregation standards help to aggregate and process the data so that those data become usable.

- Network protocols: Network protocols refer to a set of rules by which machines identify and authorize each other. The existence of multiple network protocols creates interoperability issues. In recent years, companies in the IoT value chain have begun working together to help align multiple network protocols. One example is the AllJoyn standard, established by Qualcomm in late 2013, which allows devices to discover, connect, and communicate directly with other AllJoyn-enabled products connected via different technologies such as Wi-Fi, Ethernet, and possibly Bluetooth and ZigBee.53

- Communication protocols: Once devices are connected to a network and they identify each other, communication protocols (a set of rules) provide a common language for devices to communicate. Various communication protocols are used for device-to-device communication; broadly, they vary in the format in which data packets are transferred. There are ongoing efforts to identify protocols better suited to IoT applications. Toward that end, we earlier discussed the advantages and limitations of the Constrained Application Protocol, a communication protocol lighter than other popular protocols such as HTTP.
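To make the difference in message format concrete, the sketch below builds a CoAP request by hand, following the layout in the CoAP specification (RFC 7252): a 4-byte fixed header, an optional token, then delta-encoded options. It is a minimal illustration, not a CoAP library: it builds only confirmable GET requests without a payload, and the `/temperature` resource and `sensor.local` host are hypothetical.

```python
import struct

# Constants from RFC 7252 (CoAP).
COAP_VERSION = 1
TYPE_CONFIRMABLE = 0
CODE_GET = 0x01          # code 0.01 (GET)
OPTION_URI_PATH = 11     # Uri-Path option number


def _nibble(value: int) -> tuple[int, bytes]:
    """Encode an option delta or length as a 4-bit nibble plus extension bytes."""
    if value < 13:
        return value, b""
    if value < 269:
        return 13, bytes([value - 13])
    if value < 65805:
        return 14, struct.pack("!H", value - 269)
    raise ValueError("option delta/length too large")


def coap_get(path: str, message_id: int, token: bytes = b"") -> bytes:
    """Build a confirmable CoAP GET request for `path` (no payload)."""
    if len(token) > 8:
        raise ValueError("CoAP tokens are at most 8 bytes")
    # First byte: 2-bit version, 2-bit message type, 4-bit token length.
    first = (COAP_VERSION << 6) | (TYPE_CONFIRMABLE << 4) | len(token)
    message = bytearray(struct.pack("!BBH", first, CODE_GET, message_id))
    message += token
    previous_option = 0
    for segment in path.strip("/").split("/"):
        value = segment.encode("utf-8")
        # Options store the *difference* from the previous option number.
        delta, delta_ext = _nibble(OPTION_URI_PATH - previous_option)
        length, length_ext = _nibble(len(value))
        message.append((delta << 4) | length)
        message += delta_ext + length_ext + value
        previous_option = OPTION_URI_PATH
    return bytes(message)


coap = coap_get("/temperature", message_id=0x1234)
http = b"GET /temperature HTTP/1.1\r\nHost: sensor.local\r\n\r\n"
print(len(coap), len(http))  # 16 49
```

For this one request, the binary CoAP message is roughly a third the size of a minimal text HTTP request; actual savings depend on headers, payloads, and transport.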

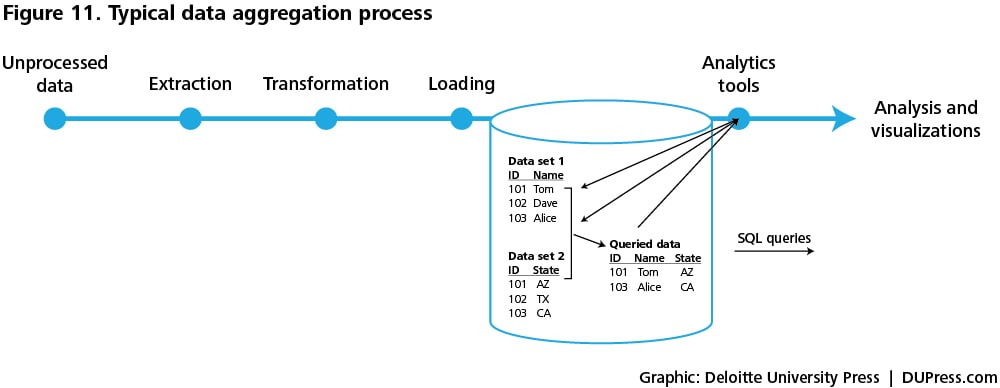

- Data aggregation standards: Data collected from multiple devices come in different formats and at different sampling rates (that is, the frequency at which data are collected). One set of data-aggregation tools, known as Extraction, Transformation, Loading (ETL) tools, aggregates, processes, and stores data in a format that can be used for analytics applications (see figure 11).54 Extraction refers to acquiring data from multiple sources in multiple formats and then validating them to ensure that only data meeting specified criteria are included. Transformation includes activities such as splitting, merging, sorting, and converting the data into a desired format; for example, names can be split into first and last names, while address fields can be merged into a single city-and-state format. Loading refers to the process of loading the data into a database that can be used for analytics applications.

Traditional ETL tools aggregate and store the data in relational databases, in which data are organized by establishing relationships based on a unique identifier. It is easy to enter, store, and query structured data in relational databases using structured query language (SQL). The American National Standards Institute standardized SQL as the querying language for relational databases in 1986.55 SQL provides users a medium to communicate with databases and perform tasks such as data modification and retrieval. As the standard, SQL aids aggregation not just in centralized databases (all data stored in a single location) but also in distributed databases (data stored on several computers with concurrent data modifications).
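The extract, transform, and load steps described above, followed by SQL querying of a relational store, can be sketched with Python’s built-in `csv` and `sqlite3` modules. The two data sources, the validation rule, and the table and column names are all hypothetical; a production ETL tool would add scheduling, error handling, and far larger volumes.

```python
import csv
import io
import sqlite3

# Hypothetical source 1: a device registry exported as CSV.
REGISTRY_CSV = """device_id,owner,city,state
d1,Ada Lovelace,Austin,TX
d2,Alan Turing,Boston,MA
"""

# Hypothetical source 2: sensor readings arriving as dictionaries (e.g., parsed JSON).
READINGS = [
    {"device": "d1", "temp_c": 21.5},
    {"device": "d1", "temp_c": 22.5},
    {"device": "d2", "temp_c": 19.0},
    {"device": "d2", "temp_c": None},   # fails validation: missing value
    {"device": "d9", "temp_c": 30.0},   # fails validation: unknown device
]


def extract():
    """Read both sources and keep only readings that pass validation."""
    registry = list(csv.DictReader(io.StringIO(REGISTRY_CSV)))
    known = {row["device_id"] for row in registry}
    readings = [r for r in READINGS
                if r["temp_c"] is not None and r["device"] in known]
    return registry, readings


def transform(registry):
    """Split owner names and merge address fields into one location field."""
    rows = []
    for row in registry:
        first, last = row["owner"].split(" ", 1)
        location = f"{row['city']}, {row['state']}"
        rows.append((row["device_id"], first, last, location))
    return rows


def load(conn, devices, readings):
    """Store the cleaned data in two related tables."""
    conn.execute("CREATE TABLE devices (device_id TEXT PRIMARY KEY, "
                 "first_name TEXT, last_name TEXT, location TEXT)")
    conn.execute("CREATE TABLE readings (device_id TEXT REFERENCES devices, "
                 "temp_c REAL)")
    conn.executemany("INSERT INTO devices VALUES (?, ?, ?, ?)", devices)
    conn.executemany("INSERT INTO readings VALUES (?, ?)",
                     [(r["device"], r["temp_c"]) for r in readings])


conn = sqlite3.connect(":memory:")
registry, readings = extract()
load(conn, transform(registry), readings)

# SQL relates the two tables through the unique identifier device_id.
query = """
SELECT d.location, COUNT(*) AS n, AVG(r.temp_c) AS avg_temp
FROM readings AS r JOIN devices AS d ON d.device_id = r.device_id
GROUP BY d.location ORDER BY d.location
"""
for row in conn.execute(query):
    print(row)
# ('Austin, TX', 2, 22.0)
# ('Boston, MA', 1, 19.0)
```

The `JOIN ... ON d.device_id = r.device_id` clause is where the unique identifier does its work: it relates each reading to its device record so that the aggregate can be reported by location.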

With recent advances in the easy and cost-effective availability of large volumes of data, there is a question about the adequacy of traditional ETL tools, which can typically handle data in terabytes (1 terabyte = 10^12 bytes). Big-data ETL tools developed in recent years can handle a much higher volume of data, such as petabytes (1 petabyte = 1,000 terabytes, or 10^15 bytes). In addition to handling large volumes, big-data tools are also considered better suited to handle the variety of incoming data, structured as well as unstructured. Structured data are typically stored in tabular form, as in spreadsheets, while unstructured data are collected in the form of images, videos, web pages, emails, blog entries, documents, etc.

Apache Hadoop is a big-data tool that is especially useful for unstructured data. Developed by the Apache Software Foundation and based on the Java programming language, Hadoop is an open-source tool for processing large data sets. Hadoop enables parallel processing of large data across clusters of computers wherein each computer offers local aggregation and storage.56 Hadoop comprises two major components: MapReduce and the Hadoop Distributed File System (HDFS). While MapReduce enables aggregation and parallel processing of large data sets, HDFS is a file-based storage system that is often grouped with “Not only SQL” (NoSQL) databases. Compared to relational databases, NoSQL databases represent a wider variety of databases that can store unstructured data. Data processed and stored on Hadoop systems can be queried through Hadoop application programming interfaces (APIs) that offer an easy user interface to query the data stored on HDFS for analytics applications.
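The MapReduce model itself can be imitated in a few lines of plain Python. This is a single-process sketch, not Hadoop’s (Java) API: threads stand in for cluster nodes, the records and the per-device maximum are hypothetical choices, and the `shuffle` function plays the grouping role the framework performs between the map and reduce phases.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from itertools import chain

# Hypothetical raw records ("device_id,temperature"), split into partitions
# as if each partition were stored on a different node of a cluster.
PARTITIONS = [
    ["d1,21.5", "d2,19.0", "d1,23.0"],
    ["d2,20.5", "d3,18.0"],
    ["d1,22.0", "d3,18.5"],
]


def map_phase(partition):
    """Parse each record in one partition into a (key, value) pair."""
    pairs = []
    for record in partition:
        device, temperature = record.split(",")
        pairs.append((device, float(temperature)))
    return pairs


def shuffle(mapped_partitions):
    """Group all emitted values by key, as the framework does between phases."""
    groups = defaultdict(list)
    for key, value in chain.from_iterable(mapped_partitions):
        groups[key].append(value)
    return groups


def reduce_phase(item):
    """Aggregate the values for one key; here, the maximum temperature."""
    key, values = item
    return key, max(values)


with ThreadPoolExecutor(max_workers=3) as pool:
    mapped = list(pool.map(map_phase, PARTITIONS))          # map in parallel
    grouped = shuffle(mapped)                                # group by key
    result = dict(pool.map(reduce_phase, grouped.items()))  # reduce in parallel

print(result)  # {'d1': 23.0, 'd2': 20.5, 'd3': 18.5}
```

Because each map call touches only its own partition and each reduce call touches only one key, both phases can be spread across machines; that independence is what lets this style of processing scale out.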

Depending on the type of data and processing, different tools could be used. While MapReduce relies on parallel processing, Spark, another big-data tool, uses both parallel processing and in-memory processing.57 Among storage options, HDFS is a file-based system that stores batch data such as quarterly and yearly company financial data, while HBase and Cassandra are event-based storage databases that are useful for storing streaming (or real-time) data such as stock-performance data.58 We discussed select big-data tools above; other tools exist with a range of benefits and limitations, and the choice of a tool depends on the application at hand.

Factors driving adoption within the IoT

At present, the IoT landscape is in a nascent stage, and existing technology standards serve specific solutions and stakeholder requirements. There are many efforts under way to develop standards that can be adopted more widely. Primarily, we find two types of developments: vendors (across the IoT value chain) coming to an agreement, and standards bodies (for example, IEEE or ETSI) working to develop a standard that vendors follow. Time will tell which of these two options will prevail. Ultimately, it might be difficult to have one universal standard, or “one ring to rule them all,” at the network or communication protocol level or at the data-aggregation level.

In terms of network and communication protocols, a few large players have a meaningful opportunity to drive the standards that IoT players will follow for years to come. As an example, Qualcomm, with other companies such as Sony, Bosch, and Cisco, has developed the AllSeen Alliance, which provides the AllJoyn platform described earlier.59 Along similar lines, through the Open Interconnect Consortium, Intel launched the open-source IoTivity platform, which facilitates device-to-device connectivity.60 IoTivity offers its members a free license to the code, while AllSeen does not. However, AllSeen-compliant devices are already available, while devices compliant with IoTivity are expected to be available by the second half of 2015.61 The two platforms are comparable but not interoperable, much like iOS and Android.62

Concurrently, various standards bodies are also working to develop standards (for network and communication protocols) that apply within their geographical boundaries and could extend well beyond them to facilitate worldwide IoT communications. As an example, ETSI, which focuses primarily on Europe, is working to develop an end-to-end architecture called the oneM2M platform that could be used worldwide.63 IEEE, another standards body, is making progress with the IEEE P2413 working group and is coordinating with standards bodies such as ETSI and ISO to develop a global standard by 2016.64

In terms of data aggregation, relational databases and SQL are considered to be the standards for storing and querying structured data. However, we do not yet have a widely used standard for handling unstructured data, even though various big-data tools are available. We discuss this challenge below.

Challenges and potential solutions

For effective aggregation and use of the data for analysis, there is a need for technical standards to handle unstructured data and for legal and regulatory standards to maintain data integrity. There are skills gaps in leveraging the newer big-data tools, while security remains a major concern, given that all the data are aggregated and processed at this stage of the Information Value Loop.

- Standard for handling unstructured data: Structured data are stored in relational databases and queried through SQL. Unstructured data are stored in different types of NoSQL databases without a standard querying approach. Hence, new databases created from unstructured data cannot be handled and used by the legacy database-management systems that companies typically use, thus restricting their adoption.65

- Security and privacy issues: There is a need for clear guidelines on the retention, use, and security of the data as well as metadata, the data that describe other data. As discussed earlier, there is a trade-off between the level of security and the memory and bandwidth requirements.

- Regulatory standards for data markets: Data brokers are companies that sell data collected from various sources. Even though data appear to be the currency of the IoT, there is a lack of transparency about who gets access to data and how those data are used to develop products or services and sold to advertisers and third parties. A very small fraction of the data collected online is sold online, while a larger share is sold through offline transactions between providers and users.66 As highlighted by the Federal Trade Commission, there is an increased need for regulation of data brokers.67

- Technical skills to leverage newer aggregation tools: Companies that are keen on leveraging big-data tools often face a shortage of talent to plan, execute, and maintain systems.68 The number of engineers being trained to use newer tools such as Spark and MapReduce is rising, but it remains far smaller than the number of engineers trained in traditional languages such as SQL.69

Augmented intelligence

An overview

Extracting insight from data requires analysis, the fourth stage in the Information Value Loop. Analysis is driven by cognitive technologies and the accompanying models that facilitate the use of cognitive technologies.70 We refer to these enablers collectively as “augmented intelligence” to capture the idea that systems can automate intelligence—a concept that for us includes notions of volition and purpose—in a way that excludes human agency but nevertheless can be supplemented and enhanced.

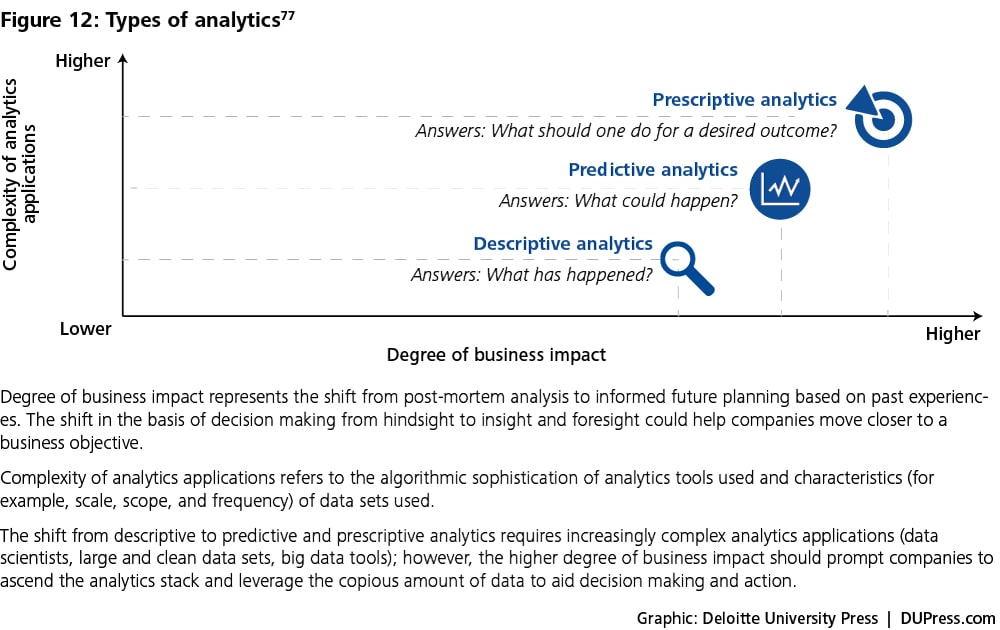

Enabling augmented-intelligence technologies

In the context of the value loop, analysis is useful only to the extent that it informs action. “Analytics typically involves sifting through mountains of what are often confusing and conflicting data—in search of nuggets of insight that may inform better decisions.”71 As figure 12 illustrates, there are three different ways in which analytics can inform action.72

At the lowest level, descriptive analytics tools augment our intelligence by allowing us to work effectively with much larger or more complex data sets than we could otherwise easily handle. Various data visualization tools such as Tableau and SAS Visual Analytics make large data sets more amenable to human comprehension and enable users to identify insights that would otherwise be lost in the huge heap of data.
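As a small illustration of descriptive analytics, the sketch below condenses hypothetical smart-meter readings into summary statistics with Python’s `statistics` module. Visualization tools such as those named above go much further, but the principle is the same: reduce many raw values to a few that a person can take in at a glance.

```python
import statistics

# Hypothetical hourly energy readings (kWh) from three smart meters.
READINGS = {
    "meter-a": [1.2, 1.4, 1.1, 1.3, 5.8, 1.2],
    "meter-b": [0.9, 1.0, 0.8, 1.1, 0.9, 1.0],
    "meter-c": [2.1, 2.3, 2.2, 2.0, 2.4, 2.2],
}


def describe(values):
    """Condense one series into a handful of descriptive statistics."""
    return {
        "count": len(values),
        "mean": round(statistics.mean(values), 2),
        "median": round(statistics.median(values), 2),
        "stdev": round(statistics.stdev(values), 2),
        "max": max(values),
    }


for meter, values in READINGS.items():
    print(meter, describe(values))
```

For `meter-a`, the mean (2.0 kWh) sits well above the median (1.25 kWh), a quick signal that a single spike is distorting the average.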

Predictive analytics is the beginning of keener insight into what might be happening or could happen, given historical trends. Predictive analytics exploits the large quantity and increasing variety of data to build useful models that can correlate seemingly unrelated variables.73 Predictive models are expected to produce more accurate results through machine learning, which refers to computer systems’ ability to improve their performance through exposure to data without the need to follow explicitly programmed instructions. For instance, when presented with a database of credit-card transactions, a machine-learning system can discern patterns that are predictive of fraud. The more transaction data the system processes, the better its predictions should become.74 Unfortunately, in many practical applications, even seemingly strong correlations are unreliable guides to effective action. Consequently, predictive analytics in itself still relies on human beings to determine what sorts of interventions are likeliest to work.75
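The fraud example can be sketched as a model that learns its decision rule from labelled data rather than from hand-written instructions. Below, logistic regression trained by stochastic gradient descent stands in for “machine learning”; it is one simple technique among many, not the method of any particular fraud system, and the transactions and features are made up.

```python
import math
import random

# Hypothetical labelled transactions:
# (amount in thousands of dollars, 1 if made abroad else 0) -> 1 if fraudulent.
TRANSACTIONS = [
    ((0.05, 0), 0), ((0.12, 0), 0), ((0.30, 1), 0), ((0.08, 0), 0),
    ((0.50, 0), 0), ((3.00, 1), 1), ((4.00, 1), 1), ((3.50, 0), 1),
]


def predict(weights, bias, features):
    """Estimated probability that a transaction is fraudulent."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))


def train(examples, epochs=2000, learning_rate=0.1, seed=0):
    """Fit a logistic-regression model with stochastic gradient descent."""
    rng = random.Random(seed)
    examples = list(examples)
    weights, bias = [0.0] * len(examples[0][0]), 0.0
    for _ in range(epochs):
        rng.shuffle(examples)
        for features, label in examples:
            # (prediction - label) is the log-loss gradient with respect to z.
            error = predict(weights, bias, features) - label
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


weights, bias = train(TRANSACTIONS)
print(round(predict(weights, bias, (3.5, 1)), 3))   # large purchase abroad
print(round(predict(weights, bias, (0.10, 0)), 3))  # small domestic purchase
```

Retraining on a larger, more varied set of labelled transactions is the mechanism behind the expectation that predictions improve with more data; the caveat about correlations applies here too, since the model learns only associations present in its training set.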

Finally, prescriptive analytics takes on the challenge of creating more nearly causal models.76 Prescriptive analytics includes optimization techniques that are based on large data sets, business rules (information on constraints), and mathematical models. Prescriptive algorithms can continuously include new data and improve prescriptive accuracy in decision optimizations. Since prescriptive models provide recommendations on the best course of action, the element of human participation becomes more important; the focus shifts from a purely analytics exercise to behavior change management. We discuss this more in the “Augmented behavior” section.
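A prescriptive step can be sketched as an optimization that combines a model’s output with business rules. In this hypothetical example, failure probabilities (as a predictive model might supply them) feed an expected-cost calculation, and a technician-hours budget acts as the constraint; the code recommends which machines to service. Exhaustive search is adequate for four machines; larger problems are usually handed to linear or integer-programming solvers.

```python
from itertools import combinations

# Hypothetical inputs: failure probabilities from a predictive model, the cost
# of an unplanned failure, and the technician hours each service visit needs.
MACHINES = {
    # name: (failure_probability, failure_cost, service_hours)
    "pump-1": (0.40, 10_000, 3),
    "pump-2": (0.10, 12_000, 2),
    "press-1": (0.25, 30_000, 4),
    "fan-1": (0.60, 2_000, 1),
}
HOURS_AVAILABLE = 7  # business rule (constraint): one technician shift


def expected_savings(name):
    """Expected failure cost avoided, assuming service removes the risk."""
    probability, cost, _ = MACHINES[name]
    return probability * cost


def best_plan(hours_available):
    """Exhaustively search every subset of machines for the best feasible plan."""
    best, best_value = (), 0.0
    names = list(MACHINES)
    for size in range(1, len(names) + 1):
        for plan in combinations(names, size):
            hours = sum(MACHINES[name][2] for name in plan)
            value = sum(expected_savings(name) for name in plan)
            if hours <= hours_available and value > best_value:
                best, best_value = plan, value
    return best, best_value


plan, value = best_plan(HOURS_AVAILABLE)
print(plan, round(value))  # ('pump-1', 'press-1') 11500
```

Feeding updated failure probabilities into `best_plan` as new data arrive is one simple way to picture prescriptive models that “continuously include new data.”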

With advances in cognitive technologies’ ability to process varied forms of information, vision and voice have also become usable. Below, we discuss select cognitive technologies that are experiencing increasing adoption and can be deployed for predictive and prescriptive analytics.78

- Computer vision refers to computers’ ability to identify objects, scenes, and activities in images. Computer vision technology uses sequences of image-processing operations and other techniques to decompose the task of analyzing images into manageable pieces. Certain techniques, for example, allow for detecting the edges and textures of objects in an image. Classification models may be used to determine whether the features identified in an image are likely to represent a kind of object already known to the system.79 Computer vision applications are often used in medical imaging to improve diagnosis, prediction, and treatment of diseases.80

- Natural-language processing refers to computers’ ability to work with text the way humans do, extracting meaning from text or even generating text that is readable. Natural-language processing, like computer vision, comprises multiple techniques that may be used together to achieve its goals. Language models, a natural-language processing technique, are used to predict the probability distribution of language expressions—the likelihood that a given string of characters or words is a valid part of a language, for instance. Feature selection may be used to identify the elements of a piece of text that may distinguish one kind of text from another—for example, a spam email versus a legitimate one.81

- Speech recognition focuses on accurately transcribing human speech. The technology must handle various inherent challenges such as diverse accents, background noise, homophones (for example, “principle” versus “principal”), and speed of speaking. Speech-recognition systems use some of the same techniques as natural-language processing systems, as well as others such as acoustic models that describe sounds and the probability of their occurring in a given sequence in a given language.82 Applications of speech-recognition technologies include medical dictation, hands-free writing, voice control of computer systems, and telephone customer-service applications. Domino’s Pizza, for instance, recently introduced a mobile app that allows customers to use natural speech to place orders.83
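The language-model idea, and its use in resolving homophones during speech recognition, can be sketched with a bigram model: it estimates how likely each word is given the previous one, here with add-one (Laplace) smoothing so unseen pairs keep a small probability. The corpus is a tiny hypothetical one, and the sketch assumes an acoustic model has already narrowed the choice to two words that sound alike, leaving the language model to decide.

```python
import math
from collections import Counter

# Tiny hypothetical training corpus; real language models learn from far more text.
CORPUS = [
    "the school principal met the teachers",
    "the principal spoke to the students",
    "privacy is a guiding principle",
    "the principle of data minimization applies",
    "the principal approved the budget",
]


def train_bigrams(sentences):
    """Count word pairs, with <s> and </s> marking sentence boundaries."""
    unigrams, bigrams = Counter(), Counter()
    for sentence in sentences:
        words = ["<s>"] + sentence.split() + ["</s>"]
        unigrams.update(words[:-1])                 # words that start a pair
        bigrams.update(zip(words[:-1], words[1:]))
    return unigrams, bigrams


UNIGRAMS, BIGRAMS = train_bigrams(CORPUS)
VOCAB_SIZE = len(set(UNIGRAMS) | {"</s>"})


def log_probability(sentence):
    """Log probability of a word sequence under the add-one-smoothed model."""
    words = ["<s>"] + sentence.split() + ["</s>"]
    total = 0.0
    for previous, word in zip(words[:-1], words[1:]):
        total += math.log((BIGRAMS[(previous, word)] + 1)
                          / (UNIGRAMS[previous] + VOCAB_SIZE))
    return total


def pick_homophone(template, candidates):
    """Return the candidate word that makes the sentence most probable."""
    return max(candidates, key=lambda word: log_probability(template.format(word)))


print(pick_homophone("the {} approved the plan", ["principal", "principle"]))  # principal
print(pick_homophone("a guiding {}", ["principal", "principle"]))              # principle
```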

Factors driving adoption within the IoT

Availability of big data—coupled with growth in advanced analytics tools, proprietary as well as open-source—is driving augmented intelligence. Typical intelligence applications are based on batch processing of data; however, the need for timely insights and prompt action is driving a growing adoption of real-time data analysis tools.

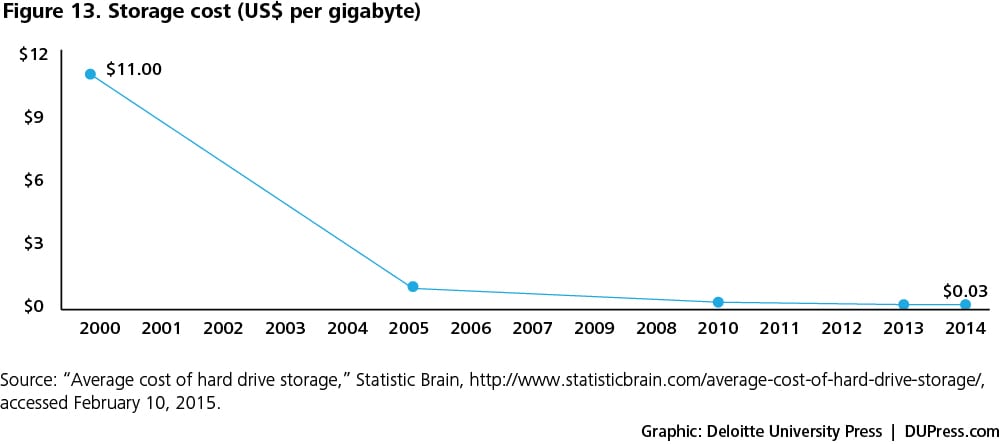

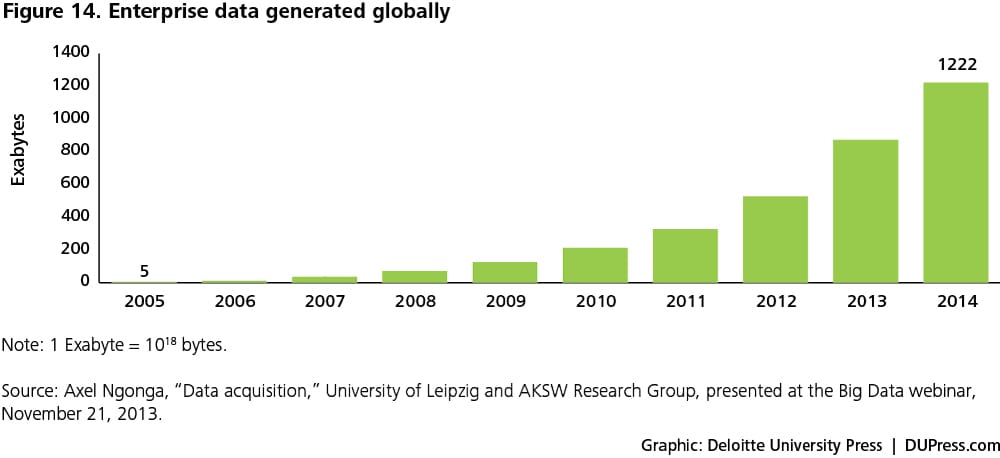

- Availability of big data: Artificial intelligence models can be improved with large data sets, which are more readily available than ever before thanks to lower storage costs. Figures 13 and 14 show the recent decline in storage costs alongside the growth in enterprise data over the last decade.

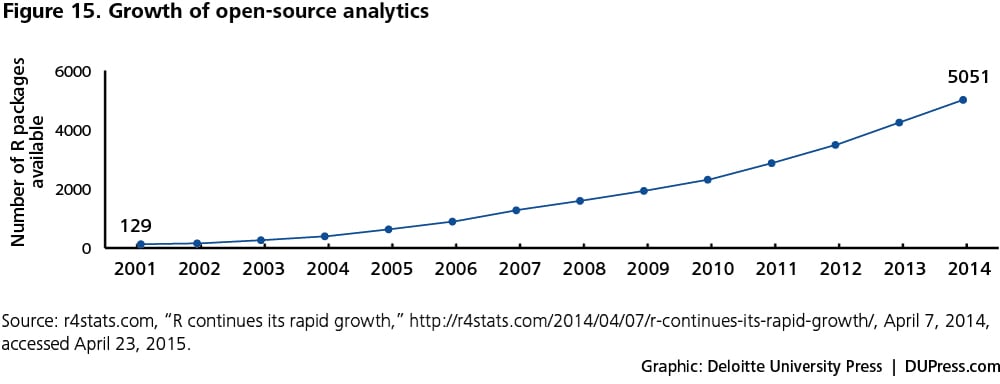

- Growth in crowdsourcing and open-source analytics software: Cloud-based crowdsourcing services are leading to new algorithms and improvements in existing ones at an unprecedented rate. Data scientists across the globe are working to improve the breadth and depth of analytics tools. As an example, the number of R packages (a package includes R functions, data sets, and underlying code in a usable format) has increased 40-fold since 2001 (see figure 15).84

- Real-time data processing and analysis: Analytics tools such as complex event processing (CEP) enable processing and analysis of data on a real-time or near-real-time basis, driving timely decision making and action.87 An event is any activity, such as stock trades, sales orders, social media posts, and website clicks, that leads to the creation and potential use of data. CEP tools monitor, process, and analyze streams of data coming from multiple sources to identify movements in data that point to abnormal events or patterns, so that any required action can be taken as quickly as possible. CEP tools can be proprietary or open-source; Apache Spark, discussed earlier, is an example of a big-data tool that offers CEP functionality.

  CEP is relevant for the IoT in its ability to recognize patterns in massive data sets with low latency. A CEP tool identifies patterns by using a variety of techniques such as filtering, aggregation, and correlation to trigger automated action or flag the need for human intervention.86 CEP tools achieve low latency, sometimes less than a second, by using techniques such as continuous querying, in-memory processing, and parallel processing.87 Continuous querying works on the principle of incremental processing. For example, once the system has calculated the average of a million observations, the moment the next data point comes in, the system simply updates the value instead of rescanning the entire data set to recalculate the average; this saves computational time and resources. In-memory processing, the practice of storing data in random access memory (RAM) in lieu of hard disks, enables faster data processing. Lastly, parallel processing, the processing of data across clusters of computers, is achieved by partitioning data either by source or by type.

  In the banking industry, CEP plays a key role in cross-selling services. A CEP tool can continuously monitor and analyze a customer’s transactions with the bank through various channels. The tool can be used to monitor any large withdrawals through the ATM or check payments. Further, the tool could correlate the transactions with any accompanying bank-branch transactions such as an address change (potentially indicating a house purchase) and present a cross-selling opportunity, say home insurance, before a competitor draws the customer away.88 Such cross-selling recommendations can then be automatically pushed onto web-based banking systems and concurrently used by bankers, tellers, and call-center representatives.
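The continuous-querying and cross-selling examples above can be sketched together. `RunningMean` updates an average in constant time per event, as described, and `CrossSellDetector` applies a hypothetical correlation rule over a short sliding window of events. The class names, the five-times-average threshold, and the three-event window are illustrative assumptions, not the behavior of any particular CEP product.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class RunningMean:
    """Continuous query: update the mean per event instead of rescanning history."""
    count: int = 0
    mean: float = 0.0

    def update(self, value: float) -> float:
        self.count += 1
        self.mean += (value - self.mean) / self.count  # incremental update
        return self.mean


class CrossSellDetector:
    """Hypothetical rule: a withdrawal far above the customer's running average,
    followed within `window` events by an address change, raises an alert."""

    def __init__(self, factor: float = 5.0, window: int = 3):
        self.factor = factor
        self.withdrawals = RunningMean()
        self.recent = deque(maxlen=window)  # sliding window of recent events

    def on_event(self, kind: str, amount: float = 0.0):
        alert = None
        if kind == "withdrawal":
            large = (self.withdrawals.count > 0
                     and amount > self.factor * self.withdrawals.mean)
            self.withdrawals.update(amount)
            self.recent.append("large_withdrawal" if large else "withdrawal")
        elif kind == "address_change":
            if "large_withdrawal" in self.recent:  # correlate the two events
                alert = "offer home insurance"
            self.recent.append("address_change")
        return alert


detector = CrossSellDetector()
events = [("withdrawal", 100), ("withdrawal", 120), ("withdrawal", 80),
          ("withdrawal", 5000), ("address_change", 0)]
print([detector.on_event(kind, amount) for kind, amount in events])
# [None, None, None, None, 'offer home insurance']
```

Keeping only a running count, a running mean, and a bounded window is what lets a rule like this run in memory with low latency, however long the customer’s history grows.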

Challenges and potential solutions

Limitations of augmented intelligence result from the quality of data, human inability to develop a foolproof model, and legacy systems’ limited ability to handle unstructured and real-time data. Even if both the data and model are shipshape, there could be challenges in human implementation of the recommended action; in the next section, on augmented behavior, we discuss the challenges related to human behavior.

- Inaccurate analysis due to flaws in the data and/or model: A lack of data or the presence of outliers may lead to false positives or false negatives, thus exposing various algorithmic limitations. Also, if the decision rules are not all correctly laid out, the algorithm could draw incorrect conclusions. For example, a social networking site recently featured the demise of a subscriber’s daughter on an automatically generated dashboard. The model was not programmed to recognize and exclude negative events, hence the faux pas.89

- Legacy systems’ ability to analyze unstructured data: Legacy systems are well suited to handle structured data; unfortunately, most IoT/business interactions generate unstructured data.90 Unstructured data are growing at twice the rate of structured data and already account for 90 percent of all enterprise data.91 While traditional relational-database systems will continue to be relevant for structured-data management and analysis, a steady influx of IoT-driven applications will require analytics systems that can handle unstructured data without compromising the scope of data.

- Legacy systems’ ability to manage real-time data: Traditional analytics software generally works on batch-oriented processing, wherein all the data are loaded in a batch and then analyzed.92 This approach does not deliver the low latency required for near-real-time analysis applications. Predictive applications could be designed to use a combination of batch processing and real-time processing to draw meaningful conclusions.93 Timeliness is a challenge in real-time analytics: what data can be considered truly real-time?94 Ideally, data are valid the second they are generated; however, because of practical issues related to latency, the meaning of “real time” varies from application to application.

Augmented behavior

An overview

In its simplest sense, the concept of “augmented behavior” is the “doing” of some action that is the result of all the preceding stages of the value loop—from sensing to analysis of data. Augmented behavior, the last phase in the loop, restarts the loop because action leads to creation of data, when configured to do so.

There is a thin line between augmented intelligence and augmented behavior. For our purpose, augmented intelligence drives informed action, while augmented behavior is an observable action in the real world.

As a practical matter, augmented behavior finds expression in at least three ways:

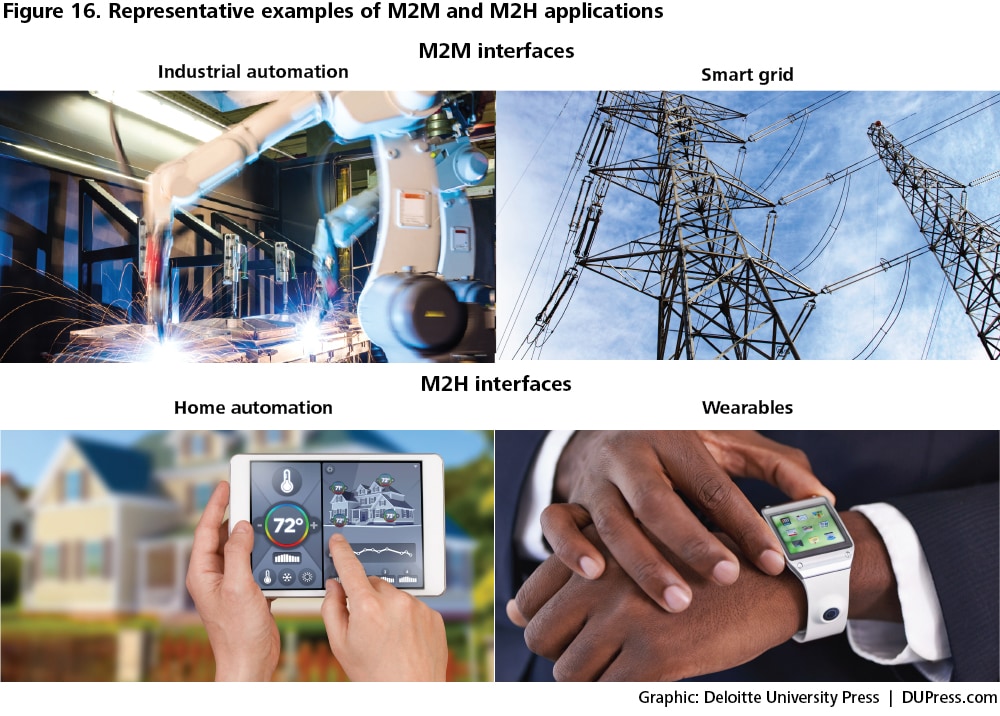

- Machine-to-machine (M2M) interfaces: M2M interfaces refer to the set of technologies that enable machines to communicate with each other and drive action. In common vernacular, M2M is often used interchangeably with the IoT.95 For our purposes, though, the IoT is a broader concept that includes machine-to-machine and machine-to-human (M2H) interfaces, as well as support systems that facilitate the management of information in a way that creates value.96

- Machine-to-human interfaces: We discuss M2H interfaces in the context of individual users; business users of M2H interfaces are discussed in the next element, organizational entities. Based on the data collected and algorithmic calculations, machines have the potential to convey suggested actions to individuals, who then exercise their discretion to take or not take the recommended action. With human interaction, the IoT discussion shifts in a slightly different direction, toward the behavioral sciences, which are distinct from the data science that encompasses the preceding four stages focused on creating, communicating, aggregating, and analyzing the data to derive meaningful insights.97

- Organizational entities: Organizations include individuals and machines and thus involve the benefits as well as the challenges of both M2M and M2H interfaces. Managing augmented behavior in organizational entities requires changes in people’s behaviors and organizational processes. Business managers could focus on process redesign based on how information creates value in different ways.98

Enabling augmented behavior technologies

The enabling technologies for both M2M and M2H interfaces prompt a consideration of the evolution in the role of machines—from simple automation that involves repetitive tasks requiring strength and speed in structured environments to sophisticated applications that require situational awareness and complex decision making in unstructured environments. The shift toward sophisticated automation requires machines to evolve in two ways: improvements in the machine’s cognitive abilities (for example, decision making and judgment) discussed in the previous section and the machine’s execution or actuation abilities (for example, higher precision along with strength and speed). With respect to robots specifically, we present below an overview of how machines have ascended this evolutionary path:

1940–1970

In the late 1940s, a few non-programmable robots were developed; these robots could not be reprogrammed to adjust to changing situations and, as such, merely served as mechanical arms for heavy, repetitive tasks in manufacturing industries.99 In 1954, George Devol developed one of the first programmable robots,100 and in the early 1960s, an increasing number of companies started using programmable robots for industrial automation applications such as warehouse management and machining.101

1970–2000

This period witnessed key developments related to the evolution of adaptive robots.102 As the name suggests, adaptive robots embedded with sensors and sophisticated actuation systems can adapt to a changing environment and can perform tasks with higher precision and complexity compared to earlier robots.103 During this period, robotic machines that could adapt to varying situations were used to identify objects and autonomously take action in applications such as space vehicles, unmanned aerial vehicles, and submarines.104

2000–2010

The development of an open-source robot operating system (developed by the Open Source Robotics Foundation) in 2006 was an important driver enabling the development and testing of various robotic technologies.105 As robots’ intelligence and precision of execution improved, they increasingly started working with human beings on critical tasks such as medical surgeries. Following the US Defense Advanced Research Projects Agency’s 2004 competition for developing autonomous military vehicles, many automakers made headway into military and civilian autonomous vehicles.106 Even though the underlying technology is available, legal and social challenges related to the use of autonomous vehicles are yet to be resolved.

2010 onward

With the availability of big data, cloud-based memory and computing, new cognitive technologies, and machine learning, robots in general seem to be getting better at decision making and are gradually approaching autonomy in many actions. We are witnessing the development of machines that have anthropomorphic features and possess human-like skills such as visual perception and speech recognition.107 Machines are automating intelligence work such as writing news articles and doing legal research—tasks that could be done only by humans earlier.108

Factors driving adoption within the IoT

Improved functionality at lower prices is driving higher penetration of industrial robots and increasing the adoption of surgical robots, personal-service robots, and so on. For situations where a user needs to take the action, machines are increasingly being developed with basic behavioral-science principles in mind. This allows machines to influence human behaviors in effective ways.

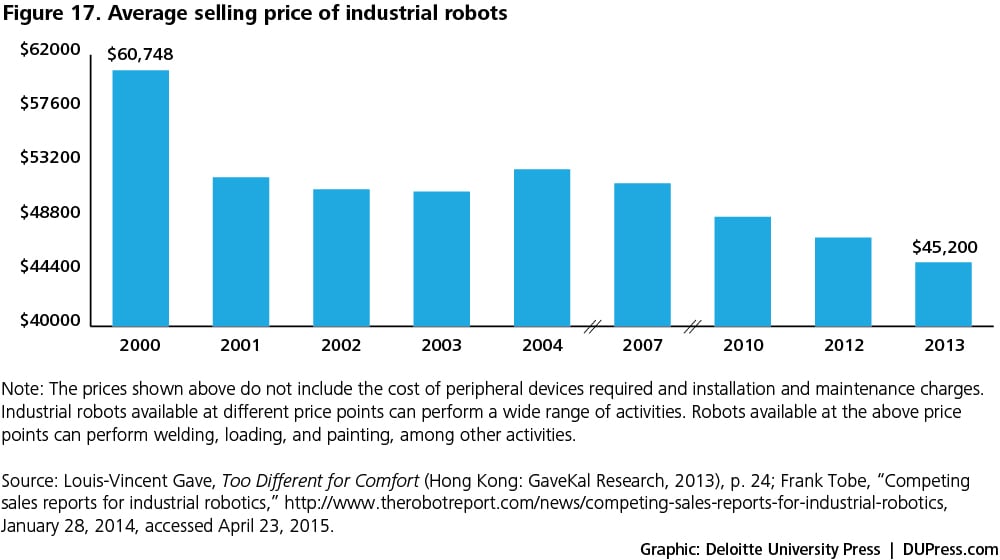

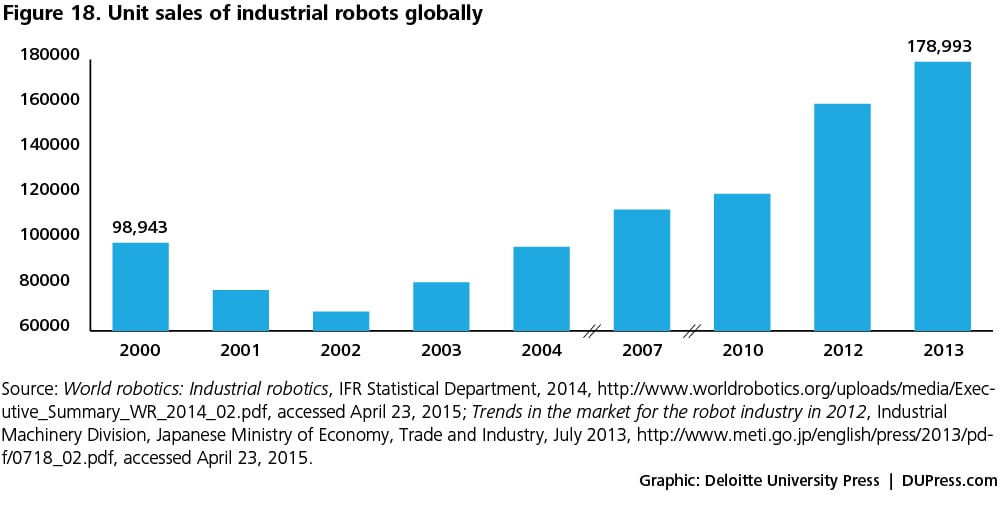

- Lower machine prices: The decreasing prices of underlying technologies—such as sensors, network connections, data processing and computing tools, and cloud-based storage—are leading to lower prices of robots. Figures 17 and 18 show a decline in the average selling price of industrial robots alongside increasing unit sales.

- Improved machine functionality: As discussed earlier, there is a thin line between augmented intelligence and augmented behavior. An underlying driver for the shift in the use of robots from mundane to sophisticated tasks is the development of elaborate algorithms that focus on the quality of fine-grained decision making in live environments, and not simply a binary “yes/no” decision.

  Traditionally, robots made force-fitted decisions based on programmed algorithms, irrespective of the situation and the availability of information.109 However, recent advances in robotic control architecture prompt the machine to ask for more information before making a decision if the information available is insufficient.110 For example, if a robot offers a pill to a patient and the patient refuses, then instead of repeating the same instruction, the robot could analyze the patient’s behavior patterns based on his personal data stored in the cloud and try to deduce the reason for the refusal. The robot may also deduce from the environment that the patient has a fever and inform the doctor. One of the many techniques under development enables users to train robots by “rewarding” them when they have made the right decision, telling them so and asking them to continue doing the same: a kind of positive reinforcement.111

- Machines “influencing” human actions through behavioral-science rationale: Literature suggests that creating a new human behavior is challenging. Creating a new human behavior that endures is even more challenging. Nudge techniques, which are attempts to influence people’s behaviors, involve the design of choices that prompt them to move from “intention” to “action.”112 For example, placing fruit at eye level is a nudge technique, while banning junk food is not, according to Richard Thaler and Cass Sunstein.113 Choice designs could be built by consciously choosing the options that should be presented to an individual and the manner in which the options are presented. For example, menus sometimes start with expensive items followed by relatively less expensive ones. This makes the customer feel that she is making a judicious choice by ordering any of the latter items, since her reference price is much higher. Furthermore, a health-conscious restaurateur could offer the more healthful food items at relatively lower prices and, in so doing, “nudge” the customer to order them.

  In an analogous fashion, IoT devices can “nudge” human behaviors by establishing a feedback loop. A school in California was trying to find a solution to speeding drivers who were undeterred even by police ticketing. The school authorities experimented with a creative signboard that compared two data points: “your speed” (the speed of a passing car measured by a radar sensor) against the “speed limit” of 25 miles per hour. Even though the radar signboard offered drivers no new information, since the dashboard display readily provides driver speed, the signboards “nudged” them into reducing their speed by an average of 10 percent, bringing their speed within the permissible limit or sometimes even lower.115 In a similar way, David Rose helped develop an IoT-connected pill bottle equipped with “GlowCaps” that “nudge” the patient with a flash of light at the predetermined time to take a pill.116

  In addition to influencing the choices of individuals on a stand-alone basis, IoT devices can also drive adoption by “using” social or peer pressure to achieve a desired result. For example, when a fitness device suggests to an individual that it’s time for a workout, the recommendation may go unheard. However, if the device shows how the user is doing vis-à-vis his peers (lagging or outperforming), the user is potentially more likely to act.117 The manufacturer of an electronic soap dispenser fitted its product with a computer chip that records the frequency with which health care professionals in different hospital wards wash their hands; it then compares these results with World Health Organization standards and conveys the comparisons back to the wards at a group level. Such a process of aggregation and comparison effectively makes personal hygiene a team sport: Each worker, in turn, is effectively “nudged” into a greater awareness of his hygienic habits, as he knows that he is a part of a team effort.118
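The pill-refusal scenario and reward-based training described above can be sketched as a tiny learning policy. The value update moves an estimate toward the reward a user gives (a rule borrowed from simple reinforcement learning), and the policy asks for more information whenever no action is clearly preferred. The situation and action names and the confidence-margin threshold are hypothetical; this is not the control architecture cited in the text.

```python
from collections import defaultdict


class FeedbackTrainedPolicy:
    """Toy policy that learns action preferences from user rewards and asks
    for more information when it is not confident enough to act."""

    def __init__(self, actions, learning_rate=0.5, confidence_margin=0.3):
        if len(actions) < 2:
            raise ValueError("need at least two actions to compare")
        self.actions = list(actions)
        self.learning_rate = learning_rate
        self.confidence_margin = confidence_margin
        # value[(situation, action)] estimates how good an action is there.
        self.value = defaultdict(float)

    def decide(self, situation):
        """Act only if the best action clearly beats the runner-up."""
        ranked = sorted(self.actions,
                        key=lambda a: self.value[(situation, a)], reverse=True)
        best, runner_up = ranked[0], ranked[1]
        gap = self.value[(situation, best)] - self.value[(situation, runner_up)]
        if gap < self.confidence_margin:
            return "ask_for_more_information"
        return best

    def reward(self, situation, action, reward):
        """Move the value estimate toward the reward the user gave."""
        key = (situation, action)
        self.value[key] += self.learning_rate * (reward - self.value[key])


policy = FeedbackTrainedPolicy(["repeat_instruction", "notify_doctor"])
situation = "patient_refuses_pill_and_has_fever"
print(policy.decide(situation))                       # ask_for_more_information
policy.reward(situation, "notify_doctor", 1.0)        # caregiver approves
policy.reward(situation, "repeat_instruction", -1.0)  # caregiver disapproves
print(policy.decide(situation))                       # notify_doctor
```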

Many other examples show how the IoT can influence human behavior to achieve normative outcomes. The larger point, though, is that the IoT may augment human behavior as much as it augments mechanical behavior, and the interplay between the IoT and human choice is likely to evolve and become more prominent in the years ahead.

Challenges and potential solutions

Machines’ judgment in unstructured situations, and the security of the information that informs that judgment, both pose challenges. Interoperability is an additional issue when heterogeneous machines must work in tandem in an M2M setup. Beyond machine behavior, managing human behavior in M2H interfaces and in organizations presents its own challenges.

- Machines’ actions in unpredictable situations: Machines are typically considered more reliable than human beings in structured environments that can be simulated in programming models; in the real world, however, most situations we encounter are unstructured.119 In such cases, machines cannot be relied upon completely, and control should fall back to human beings.

- Information security and privacy: Connected machines carry a looming risk of security compromise, and the data they collect raise privacy concerns. One example involves an appliance manufacturer whose televisions include voice-recognition systems. The voice-recognition feature collects not only voice commands but also any other audio it can sense.120 This raises concerns about how the collected audio will be used, in ways ranging from benign to personally invasive. A benign use could be, for example, personalizing television advertising; a personally invasive use might be the unauthorized sale of such information.

- Machine interoperability: The performance of M2M interfaces is affected by interoperability challenges arising from heterogeneous brands, hardware, software, and network connections. As discussed earlier, standards need to converge.

Getting machines to perform as desired in a particular context is a matter of getting the technology right, and that technology is still evolving. Perfecting human behavior is another matter entirely.

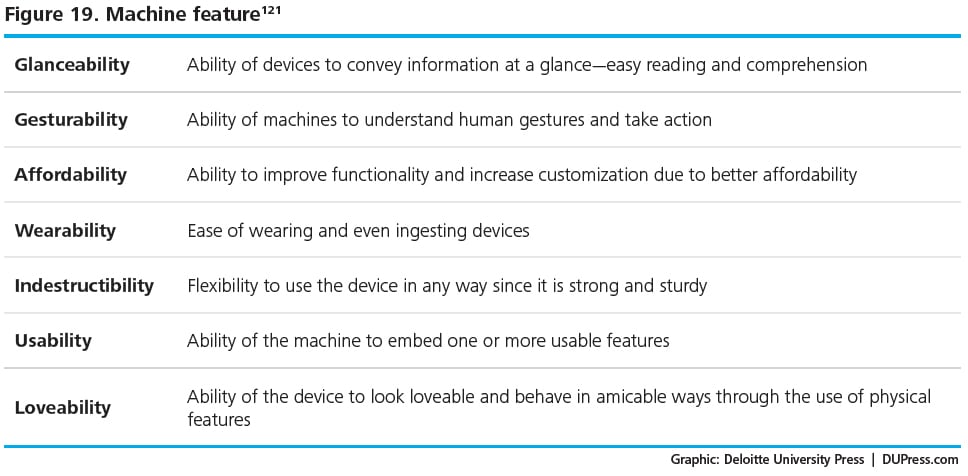

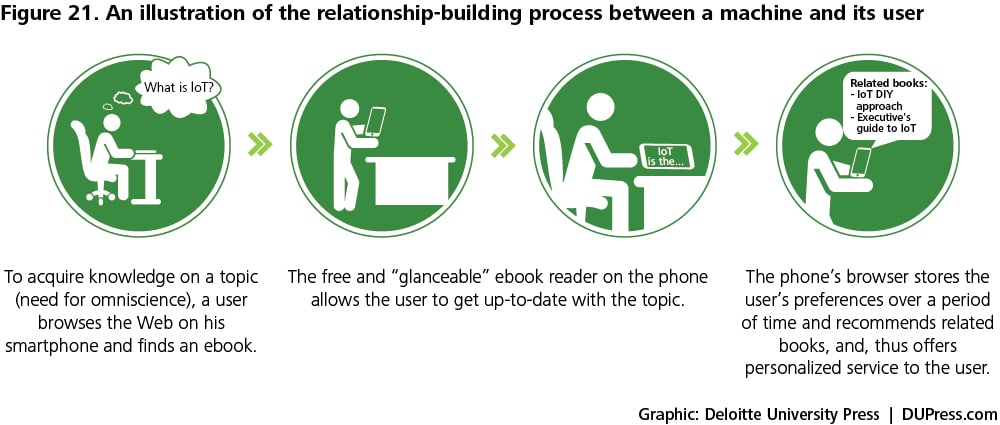

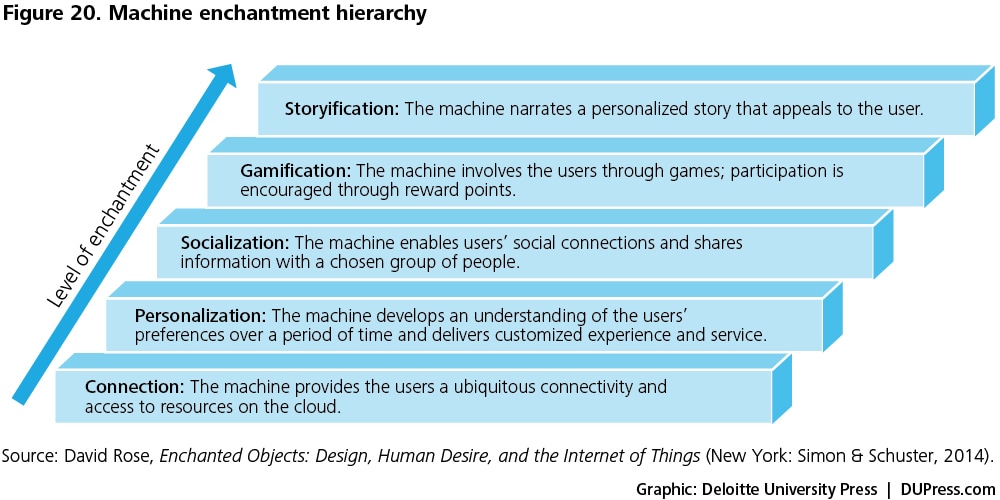

- Mean-reverting human behaviors: One of the main challenges in M2H interfaces is that, although users own smart devices, they follow the suggested course of action only minimally, and the devices eventually end up as “shelfware.”122

David Rose states that machines cater to human drives, and he cites six of them: omniscience (the need to know all), telepathy (human-to-human connections), safekeeping (protection from harm), immortality (longer life), teleportation (hassle-free travel), and expression (the desire to create and express). Machines serve these drives through one or more of their features (see figure 19), and these features also determine a machine’s position in the enchantment hierarchy (see figure 20). How enduring the association between a machine and its user is depends on the machine’s position in that hierarchy, which runs from the most basic level, “connection,” all the way up to “storyification.”123

- Organizational inertia against technology-driven decision making: Decision makers have decades of experience running successful businesses in which they relied primarily on professional judgment, and they may resist newer developments such as predictive and prescriptive analytics.124 Executives can be skeptical of the accuracy and efficacy of conclusions drawn from statistical analyses, since they view augmented intelligence tools as “black boxes” that produce outcomes by means they do not understand.125

To manage these augmented behavior changes, organizational decision makers could set aside their biases and give the new technologies a fair chance to contribute to their decision-making process. At the same time, data scientists and developers could focus on two objectives: continuously improving statistical tools and algorithms to bring the machine’s decision-making ability closer to reality, and making results easier for business users to understand through means such as easy-to-use visualization tools. As successful use cases accumulate over time, augmented behavior has the potential to grow.
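The fallback to human control described under the first challenge, and the call for results that business users can comprehend, can be combined in one small sketch. It assumes a hypothetical linear model whose per-feature contributions can be shown to a decision maker, and it treats the model’s own uncertainty (a probability inside a configurable band) as a crude stand-in for an unfamiliar situation in which control should pass to a person. The feature names, weights, and band limits are illustrative assumptions, not a method prescribed by the report.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Recommendation:
    action: str                      # "act", "hold", or "defer_to_human"
    probability: float               # estimated probability that acting is the right call
    contributions: dict[str, float]  # per-feature contribution to the score, for display


def logistic(score: float) -> float:
    """Map a score to (0, 1) without overflowing for large negative scores."""
    if score >= 0:
        return 1.0 / (1.0 + math.exp(-score))
    e = math.exp(score)
    return e / (1.0 + e)


def recommend(
    features: dict[str, float],
    weights: dict[str, float],
    bias: float = 0.0,
    confident_band: tuple[float, float] = (0.2, 0.8),
) -> Recommendation:
    # A linear score is easy to explain: each feature contributes weight * value.
    contributions = {name: weights[name] * value for name, value in features.items()}
    probability = logistic(bias + sum(contributions.values()))

    low, high = confident_band
    if probability >= high:
        action = "act"
    elif probability <= low:
        action = "hold"
    else:
        # The model is unsure, so the decision falls back to a person.
        action = "defer_to_human"
    return Recommendation(action, probability, contributions)


weights = {"vibration": 2.0, "temperature": 1.0}  # hypothetical model weights
rec = recommend({"vibration": 1.5, "temperature": 1.0}, weights)
print(rec.action, round(rec.probability, 3), rec.contributions)
```

A probability near 0.5 is only one signal of an unstructured situation; a production system would likely also check whether inputs fall outside the range the model was built for.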

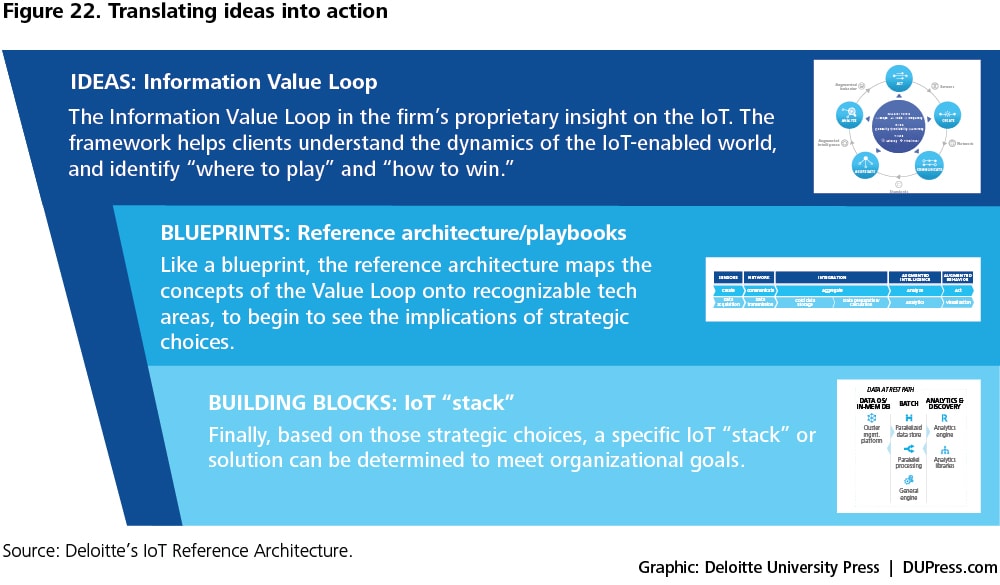

The IoT technology architecture

The Information Value Loop can serve as the cornerstone of an organization’s approach to IoT solution development for potential use cases. To transform ideas and concepts discussed earlier in the report into the concrete building blocks of a solution, we posit an end-to-end IoT technology architecture to guide IoT solution development. This architecture links strategy decisions to implementation activities. It can serve as a playbook for establishing the vision for an IoT solution and for converting that vision into tangible reality. The Information Value Loop informs and is present in each phase of this development, whereby ideas are made progressively more specific, and tactical decisions remain consistent with the overall strategic goals. The process of turning ideas into IoT solutions is shown in figure 22.

Our architecture for guiding the development and deployment of IoT systems consists of the following views:

- Business. This view defines the vision for an IoT system and covers aspects such as return on investment, value proposition, customer satisfaction, and maintenance costs.

- Functional. The linchpin of the reference architecture, this view spans modules that cater to high-level information flow through the system. It contains the functional layers for data creation, processing, and presentation.

- Usage. This view shows how the reference model realizes key capabilities desired in a usage scenario. It may include the detailed use case description, user journey, and requirements.

- Implementation. A technical representation of usage scenario deployment, this view incorporates the technologies and system components required to implement the functions prescribed by the usage and functional viewpoints.

- Specifications. Finally, this view captures the complete IoT stack to be deployed. It includes detailed technical specifications for the build-out of the solution and translates these blueprints into the individual parts needed to design, build, and implement the components and interconnections shown in the functional and implementation views.

Each of the views is detailed below.

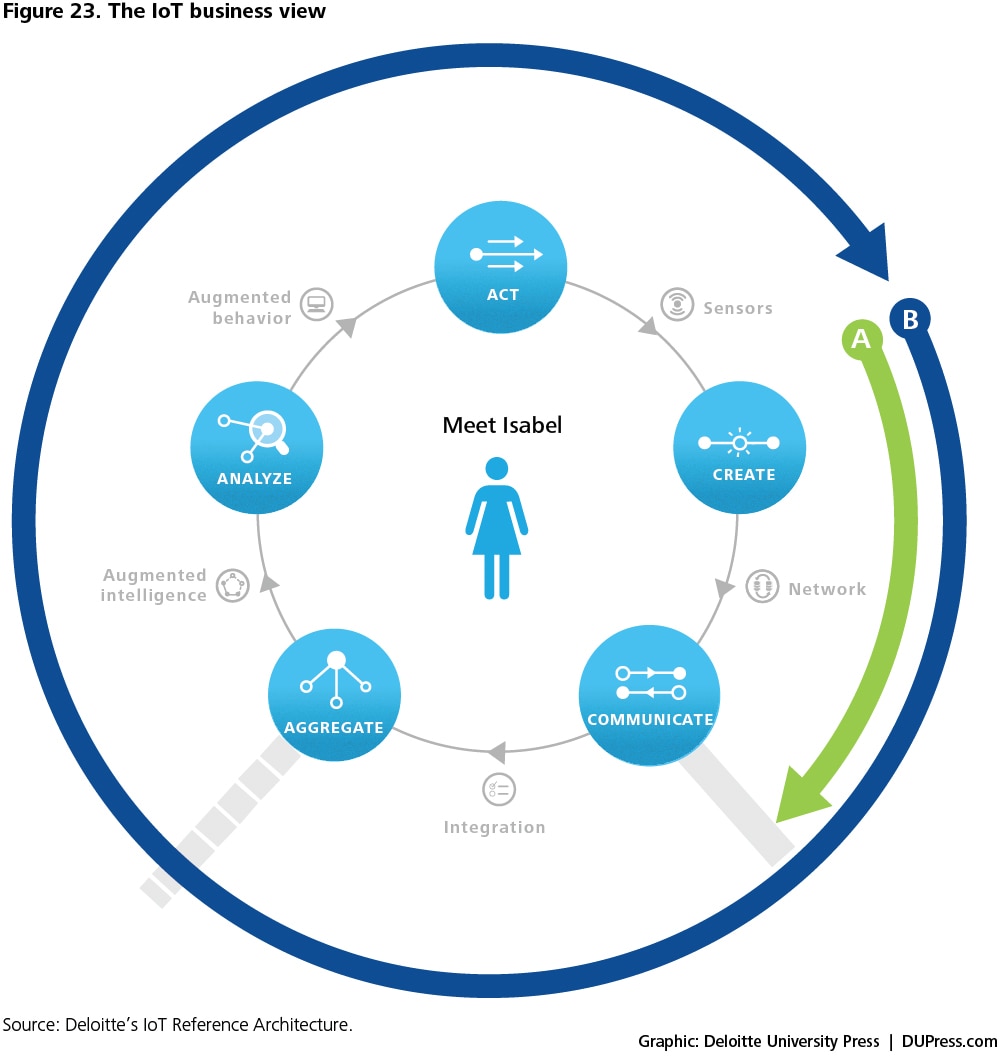

In the business view, the Information Value Loop stages are used to examine the flow of information that guides strategic decisions for the use case at hand. These decisions, in turn, help define the overall IoT strategy. Figure 23 shows an example of how value can be realized using the IoT in health monitoring.

Prior to the IoT, the patient could wear a heart monitor, but the monitor’s data would usually be communicated to the external world using written records that had to be carried each time. This represented a blockage at the "communicate" step (see arrow "A").

With the introduction of the IoT, data can now be communicated between a patient and the physician using network connections. However, there is still a bottleneck associated with the ability of the smart systems to interface with existing electronic health record (EHR) systems in order to aggregate data. Alleviating this bottleneck is key to IoT applications in the health care industry.

In the “Meet Isabel” scenario of figure 23, the bottleneck associated with data aggregation and use can be addressed by the “integration” layer, in which standards for sensor management, data transfer, storage, and aggregation come together. Earlier in this report, we discussed standards as they relate to specific technologies that fall under the integration layer, which is described further in the functional view.
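The aggregation bottleneck in the Isabel scenario is, at bottom, a mapping problem: readings from different devices must be translated into one record format that an EHR system can accept. The sketch below assumes HL7 FHIR, a widely used health-data interchange standard, purely for illustration (the report does not name a specific standard) and builds only a small subset of a FHIR Observation. The two device payload formats and the patient identifier are hypothetical.

```python
from __future__ import annotations

from datetime import datetime, timezone

# LOINC code 8867-4 identifies a heart-rate measurement.
HEART_RATE = {"system": "http://loinc.org", "code": "8867-4", "display": "Heart rate"}


def to_observation(patient_id: str, bpm: float, taken_at: datetime) -> dict:
    """Build a minimal record shaped like an HL7 FHIR Observation (subset of fields only)."""
    return {
        "resourceType": "Observation",
        "status": "final",
        "code": {"coding": [HEART_RATE]},
        "subject": {"reference": f"Patient/{patient_id}"},
        "effectiveDateTime": taken_at.isoformat(),
        "valueQuantity": {
            "value": bpm,
            "unit": "beats/minute",
            "system": "http://unitsofmeasure.org",
            "code": "/min",
        },
    }


def normalize(raw: dict) -> dict:
    """Map two hypothetical device payload formats onto one common record."""
    if "hr" in raw:  # hypothetical vendor A: {"hr": 72, "pid": "isabel", "ts": <epoch seconds>}
        taken = datetime.fromtimestamp(raw["ts"], tz=timezone.utc)
        return to_observation(raw["pid"], float(raw["hr"]), taken)
    if "heartRate" in raw:  # hypothetical vendor B: nested bpm plus an ISO 8601 time string
        taken = datetime.fromisoformat(raw["time"])
        return to_observation(raw["patient"], float(raw["heartRate"]["bpm"]), taken)
    raise ValueError("unrecognized device payload")


def mean_bpm(observations: list[dict], patient_id: str) -> float | None:
    """Aggregate step: average heart rate for one patient across all devices."""
    ref = f"Patient/{patient_id}"
    values = [o["valueQuantity"]["value"] for o in observations if o["subject"]["reference"] == ref]
    return sum(values) / len(values) if values else None
```

Once every device feeds the same record shape, aggregation such as `mean_bpm` no longer needs to know which vendor produced a reading.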

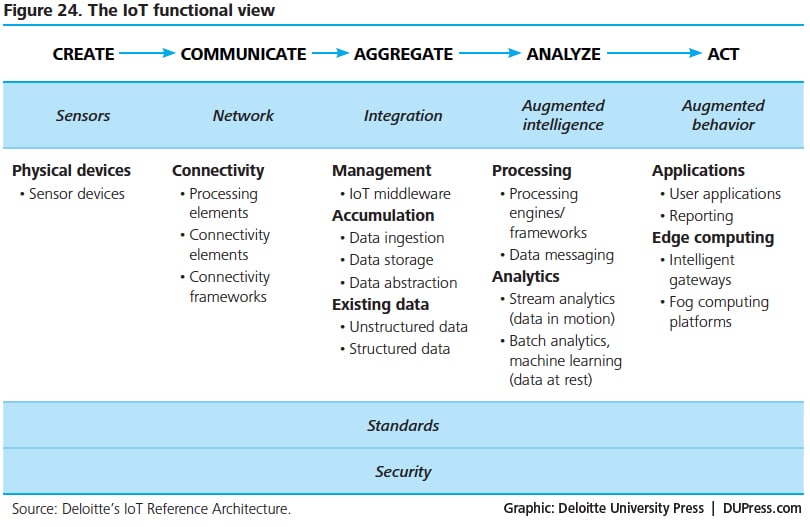

The functional view categorizes the components of an IoT system across the five value loop stages and five functional layers—sensors, network, integration, augmented intelligence, and augmented behavior. It serves as a guide to the functional considerations and technology choices of an IoT solution (see figure 24).

As discussed earlier in the report, sensors create the data that are sent downstream to subsequent layers of the architecture. Network is the connectivity layer that communicates data from the sensors and connects them to the Internet. The integration layer manages the sensor and network elements, and aggregates data from various sources for analysis. The augmented intelligence layer processes data into actionable insights. Finally, augmented behavior encapsulates the actions or changes in human or machine behavior resulting from these insights. The augmented behavior layer includes an edge computing sub-layer defined by local analysis (near the source of data) and action without the need for human intervention. Aligned with these layers and the value loop stages are standards for sensor management and data management and use, as well as security considerations including end-point protection, network security, intrusion prevention, and privacy and data protection.
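The flow through the five functional layers can be sketched as a chain of small functions, one per layer, with the edge sub-layer as a local rule that acts without a round trip to the rest of the system. This is a toy model under stated assumptions: the room-temperature scenario, the JSON serialization standing in for the network, and the thresholds are all hypothetical, and the value loop stage named in each docstring is an approximate mapping.

```python
from __future__ import annotations

import json
from collections import defaultdict
from statistics import mean


def sensor_layer(device_id: str, readings: list[float]) -> list[dict]:
    """Create: turn physical readings (here, hypothetical room temperatures) into data."""
    return [{"device": device_id, "value": v} for v in readings]


def network_layer(messages: list[dict]) -> list[str]:
    """Communicate: serialize each message as it would be sent over a network."""
    return [json.dumps(m) for m in messages]


def integration_layer(payloads: list[str]) -> dict[str, list[float]]:
    """Aggregate: decode payloads from many devices and group them by source."""
    by_device: dict[str, list[float]] = defaultdict(list)
    for payload in payloads:
        message = json.loads(payload)
        by_device[message["device"]].append(message["value"])
    return dict(by_device)


def intelligence_layer(by_device: dict[str, list[float]], limit: float) -> dict[str, bool]:
    """Analyze: flag devices whose average reading exceeds a limit."""
    return {device: mean(values) > limit for device, values in by_device.items()}


def behavior_layer(insights: dict[str, bool]) -> list[str]:
    """Act: translate insights into actions for people or machines."""
    return [f"open vent in {device}" for device, hot in sorted(insights.items()) if hot]


def edge_rule(reading: float, hard_limit: float) -> str | None:
    """Edge sub-layer: decide locally, next to the sensor, with no round trip or human."""
    return "cut heater power locally" if reading >= hard_limit else None


payloads = network_layer(sensor_layer("room-1", [26.0, 27.0]) + sensor_layer("room-2", [20.0, 21.0]))
actions = behavior_layer(intelligence_layer(integration_layer(payloads), limit=25.0))
print(actions)
```

The edge rule deliberately sees only a single reading: it trades the broader context of the aggregated view for an immediate response.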

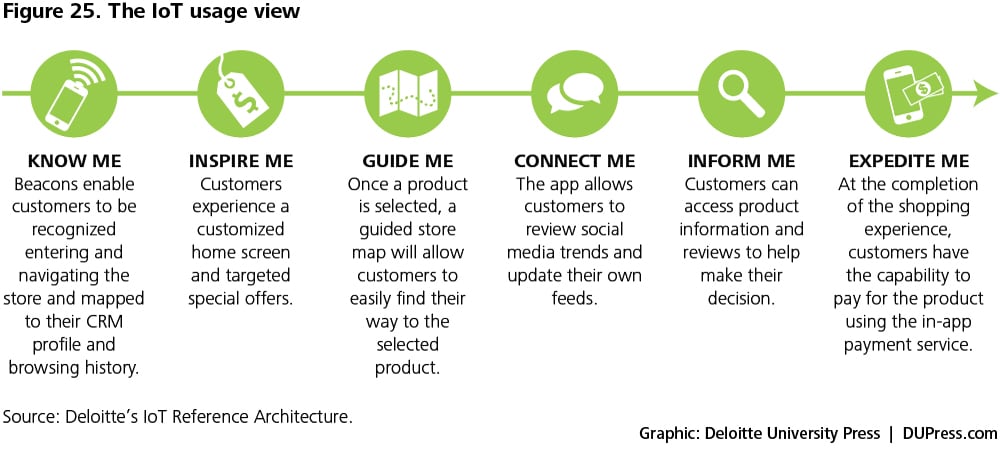

The usage view sets up the technical solution by describing the user’s journey through every step of the use case being implemented. This view includes the key actors, which may be users, machines, or both, and the activities involved. It also describes the use case in terms of user needs and system capabilities. Figure 25 illustrates a typical IoT use case in a brick-and-mortar store.

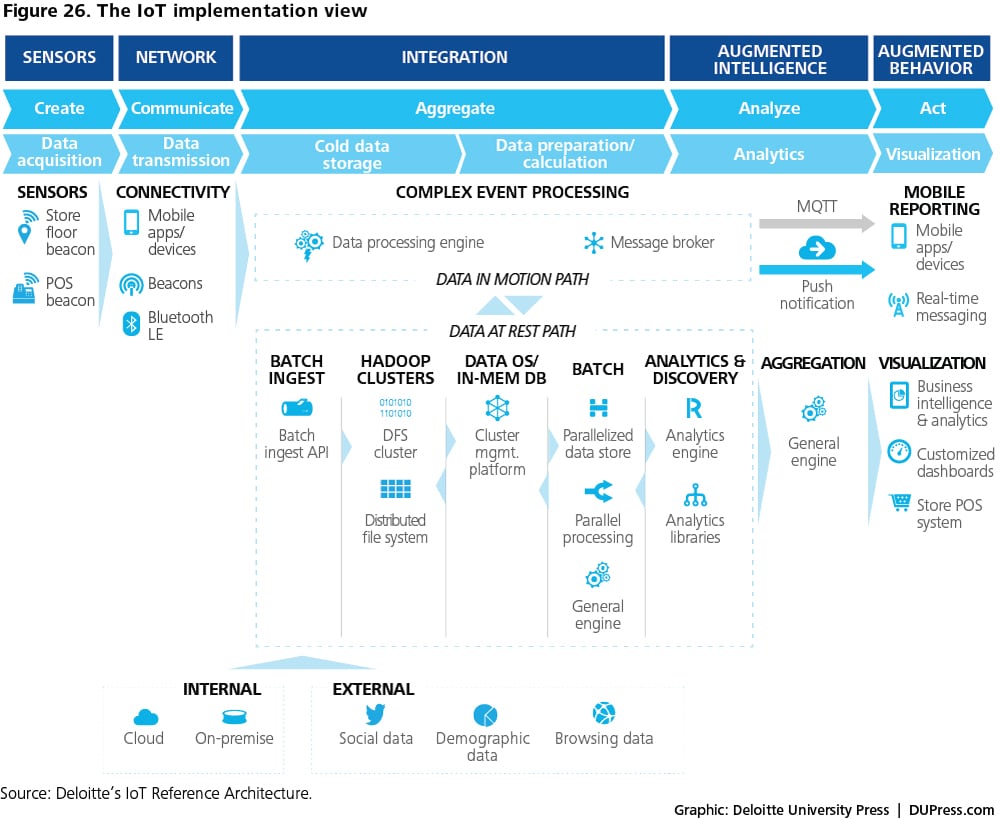

The implementation view delves deeper into specific technology choices and the vendor solutions that are used to deploy those choices. It leverages the high-level component view from the functional architecture to frame the specific system implementation.

Our IoT reference architecture describes parameters and benchmark criteria that can be used to identify the best mix of product solutions for an IoT implementation across different layers. Figure 26 shows a representative implementation view for the retail use case example described earlier.
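Identifying “the best mix of product solutions” against benchmark criteria is often done with a weighted scoring matrix. The sketch below shows that technique under assumed criteria, weights, and 1-to-5 ratings; the option names are placeholders, and nothing here reflects the actual parameters in Deloitte’s reference architecture.

```python
from __future__ import annotations


def weighted_score(ratings: dict[str, float], weights: dict[str, float]) -> float:
    """Weighted average of an option's ratings on each benchmark criterion."""
    total = sum(weights.values())
    return sum(weights[c] * ratings[c] for c in weights) / total


def best_mix(
    candidates: dict[str, dict[str, dict[str, float]]],
    weights: dict[str, float],
) -> dict[str, str]:
    """For each architecture layer, pick the option with the highest weighted score."""
    mix = {}
    for layer, options in candidates.items():
        mix[layer] = max(options, key=lambda name: weighted_score(options[name], weights))
    return mix


weights = {"cost": 1.0, "security": 2.0, "interoperability": 2.0}  # hypothetical priorities
candidates = {  # hypothetical options rated 1-5 on each criterion
    "network": {
        "option-1": {"cost": 5, "security": 2, "interoperability": 3},
        "option-2": {"cost": 3, "security": 4, "interoperability": 4},
    },
    "integration": {
        "option-3": {"cost": 4, "security": 4, "interoperability": 2},
        "option-4": {"cost": 2, "security": 3, "interoperability": 5},
    },
}
print(best_mix(candidates, weights))
```

Scoring each layer independently ignores cross-layer compatibility (for example, whether a chosen gateway supports the chosen platform’s protocols); that is the kind of detail the specifications view pins down.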

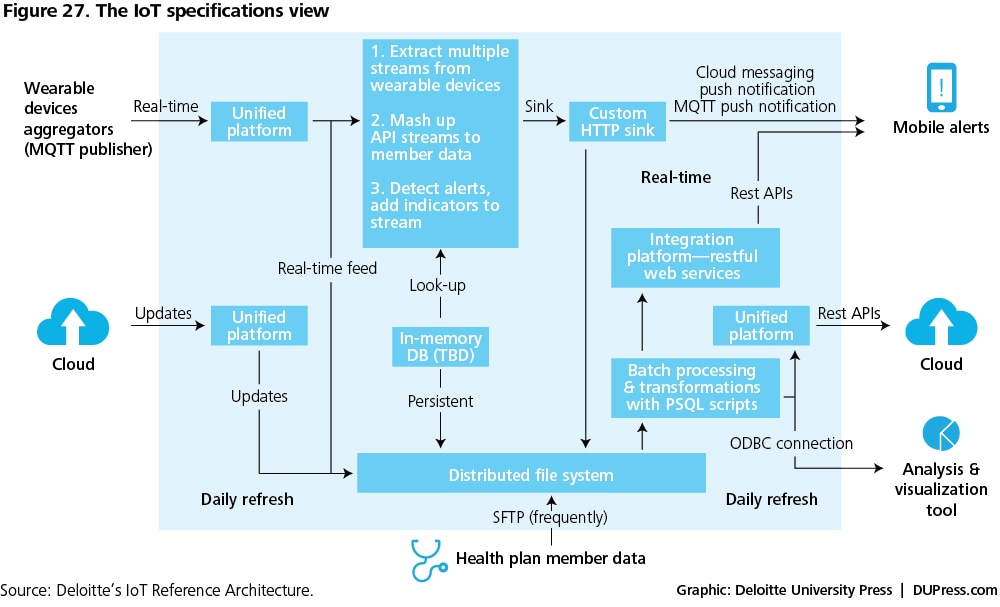

The specifications view captures the final translation of the viewpoints described above, as part of the IoT Reference Architecture, into a ground-level deployment. It crystallizes the functional requirements and specific technology choices identified earlier into detailed specifications that describe how all the selected components must be linked to work together. A sample specifications view is shown in figure 27.
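A specifications view ultimately states which components exist and how each pair is linked. One way to make that checkable is to record both as data and validate them, as in the sketch below. The component names, part identifiers, and protocols are hypothetical examples loosely themed on the retail use case, not the contents of figure 27.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Component:
    name: str
    layer: str  # one of the functional layers, e.g. "sensors" or "network"
    part: str   # hypothetical product or model identifier


@dataclass(frozen=True)
class Link:
    source: str
    target: str
    protocol: str  # how the two components talk to each other


def validate(components: list[Component], links: list[Link]) -> list[str]:
    """Return problems that would keep the specified components from working together."""
    problems = []
    names = {c.name for c in components}
    for link in links:
        for end in (link.source, link.target):
            if end not in names:
                problems.append(
                    f"link {link.source}->{link.target} references undefined component {end}"
                )
    connected = {end for link in links for end in (link.source, link.target)}
    for c in components:
        if c.name not in connected:
            problems.append(f"component {c.name} is not linked to anything")
    return problems


components = [
    Component("shelf-beacon", "sensors", "beacon-model-x"),
    Component("store-gateway", "network", "gateway-model-y"),
    Component("iot-platform", "integration", "platform-z"),
]
links = [
    Link("shelf-beacon", "store-gateway", "BLE"),
    Link("store-gateway", "iot-platform", "MQTT"),
]
print(validate(components, links))
```

An empty list means every link points at a defined component and no component is left unconnected; it says nothing about whether the chosen parts actually support the listed protocols.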

Together, the viewpoints that make up the Deloitte IoT Reference Architecture form an end-to-end blueprint for realizing an IoT system, from strategy through implementation.

Closing thoughts

The Internet of Things is an ecosystem of ever-increasing complexity, and its vocabulary is constantly changing. As we stated at the outset, our intent in presenting this primer is not to answer every question a reader may have about the IoT; no single resource could do that for anything as elaborate as the IoT. Rather, our objective was to provide a useful top-down reference to assist readers as they explore IoT-driven solutions. Readers should come away with a better understanding of what the IoT is and of its constituent parts within a strategic framework.

At this relatively nascent stage, the IoT ecosystem is fragmented and disorganized. Over time, it should become more streamlined and organized as its individual pieces are knit together. Because the IoT will play an increasingly important role in how we live and run our businesses, Deloitte is undertaking an IoT-focused eminence campaign. This primer will serve as a foundational resource for the campaign, which will include thoughtware examining the IoT from both industry- and issue-specific perspectives.

Deloitte’s Internet of Things practice enables organizations to identify where the IoT can potentially create value in their industry and to develop strategies to capture that value, using the IoT for operational benefit.

To learn more about Deloitte’s IoT practice, visit http://www2.deloitte.com/us/en/pages/technology-media-and-telecommunications/topics/the-internet-of-things.html.

Read more of our research and thought leadership on the IoT at http://dupress.com/collection/internet-of-things/.

Glossary

Actuator: a device that complements a sensor in a sensing system. An actuator converts an electrical signal into action, often by converting the signal to non-electrical energy, such as motion. A simple example of an actuator is an electric motor that converts electric energy into mechanical energy.

Analytics: the systematic analysis of often-confusing and conflicting data in search of insight that may inform better decisions.

Application programming interfaces (APIs): a set of software commands, functions, and protocols that programmers can use to develop software that runs on a certain operating system or website. APIs make it easier for programmers to develop software, and they can help give users a consistent experience across software built on the same API.

Artificial intelligence: the theory and development of computer systems able to perform tasks that normally require human intelligence. The field of artificial intelligence has produced a number of cognitive technologies, such as computer vision, natural-language processing, and speech recognition.

Batch processing: the execution of a series of computer programs without the need for human intervention. Traditional analytics software generally relies on batch-oriented processing, in which data are aggregated in batches and then processed. This approach, however, does not deliver the low latency required for near-real-time analysis applications.
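The latency trade-off in this definition can be seen in a few lines: a batch computation produces nothing until the whole batch is in, whereas an incremental (streaming) computation has an up-to-date answer after every reading. The streaming class is an illustrative contrast added here, not part of the glossary definition, and the readings are made-up values.

```python
from __future__ import annotations

from statistics import mean


def batch_average(readings: list[float]) -> float:
    """Batch: wait until the whole batch has been collected, then process it in one pass."""
    return mean(readings)


class RunningAverage:
    """Streaming contrast: the result is updated as each reading arrives."""

    def __init__(self) -> None:
        self.count = 0
        self.mean = 0.0

    def update(self, value: float) -> float:
        self.count += 1
        self.mean += (value - self.mean) / self.count  # incremental mean, no stored history
        return self.mean


readings = [10.0, 20.0, 30.0, 40.0]
stream = RunningAverage()
partials = [stream.update(r) for r in readings]  # an answer is available after every reading
print(batch_average(readings), partials)
```

Both approaches reach the same final value; the difference is that the streaming version has a usable answer at every step.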