The transaction monitoring framework

An intelligence-led approach to transaction monitoring

Transaction monitoring is a critical component of the AML/CFT framework. Subject Persons (“SPs”) require robust transaction monitoring processes to detect suspicious transactions executed through their products and services; however, this is one of the more complex, time-consuming and costly processes within the AML/CFT framework, especially if done poorly. This is in part due to the interconnectedness of transaction monitoring processes with several other processes within the wider AML/CFT framework, particularly the risk assessment, KYC and due diligence activities, and in part due to their dependencies on data, systems, tools, and human resources.

In this article, we explore how the challenges associated with developing and maintaining transaction monitoring processes could be managed intelligently, with the objective of deriving better outcomes, not only in terms of effectiveness and efficiency from an AML/CFT risk management perspective, but also in terms of how this process could bring value to the organisation by contributing to the achievement of its strategic goals. We share our point of view on what we believe to be the key components of a robust transaction monitoring framework, the common pitfalls we see in our market, and potential solutions.

Challenges in the existing transaction monitoring landscape

Legacy technology and systems

Over time, SPs have implemented different systems and processes with the introduction of new products and/or services, and operational changes. This often results in disparate sources of data being collected and stored across the organisation, which in turn leads to inconsistent and poor-quality data. Older generation transaction monitoring systems have functional limitations that do not allow the flexibility to design dynamic rule sets and may not leverage emerging technologies that can significantly enhance anomaly detection capabilities. Processing can be complex and cumbersome due to archaic data modelling and storage methodologies and technologies.

Poor data quality

Data quality is a cornerstone of an effective transaction monitoring system. SPs must rely on the accuracy and completeness of data, without which potentially illicit transactions can go undetected. An example of poor data quality is the incorrect tagging of customers to different business segments, e.g., retail customers being tagged as SME customers and vice versa. Poor data quality significantly restricts the ability to design and configure rules that directly address relevant typologies and may also lead to an inability to rely on the automated alert generation process. This results in a significant increase in operational effort and cost due to the manual activity required to give integrity to the results generated by the system.

False positives

Due to the poor quality of data being fed into transaction monitoring systems, coupled with the limitations of dynamic monitoring, SPs are increasingly faced with the generation of large volumes of alerts. SPs engage large teams of resources to review and analyse alerts, which in turn increases the operational risk and cost of compliance, with little to no effect on the primary objective, i.e., the fight against financial crime. Even with a large pool of people reviewing alerts, most (if not all) SPs still have significant alert backlogs, which again defeats the purpose of transaction monitoring. What good is there in identifying illicit activity weeks (if not months) after it has taken place?

Regulatory consequences

In an attempt to keep pace with the number of alerts being generated and to clear alert backlogs, many SPs have been compromising on the quality of investigations completed prior to closing an alert. Often, alerts are closed without the necessary justification and/or without any supporting documentation to evidence the conclusion. In other instances, SPs have sought to file defensive SARs/STRs. This is not looked at favourably by regulators, as the focus should be on fighting financial crime, rather than just-in-case approaches to maintain compliance.

A transaction monitoring framework model

The transaction monitoring process is both critical and complex. Managing it effectively requires a structured and iterative process of continuous development and fine-tuning. A transaction monitoring framework that provides the structure, methodology and governance for this process allows organisations to get the most value out of transaction monitoring by maximising risk management outcomes at the most efficient cost.

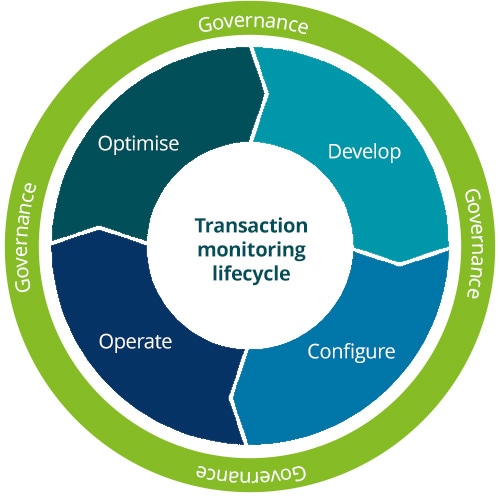

Our transaction monitoring framework model, as illustrated in Figure 1, is an iterative model based on four key stages within the transaction monitoring lifecycle: Develop, Configure, Operate and Optimise; wrapped by a layer of governance that together form the framework model.

Develop

Periodic typology assessments must be the first step of every transaction monitoring framework, as they go to the very core of what any AML/CFT framework seeks to achieve: understanding how products and services can be exploited by bad actors so that this can be prevented. This involves researching the latest ML/FT trends from reputable sources (FATF, Wolfsberg, FinCEN, etc.) that are relevant to the organisation’s products, services and customers.

Client segmentation is a key component of a Risk-Based Approach (RBA). At its simplest, an RBA means identifying the areas that present the greatest financial crime risk and allocating sufficient resources to mitigate them accordingly. By harnessing dynamic data-driven insights, optimised transaction monitoring systems can better consider the transactional (and other) behaviour of clients and account for this behaviour, allowing SPs to cluster customers and set more precise transaction monitoring parameters and thresholds for those with similar behavioural patterns.
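
As a minimal sketch of segment-specific thresholds, the Python below derives a per-segment amount threshold from historical transactions. The sample data, the segment labels, the function name `segment_thresholds` and the choice of the 95th percentile are all illustrative assumptions, and segments are taken as given rather than derived by clustering.

```python
from collections import defaultdict
from statistics import quantiles

# Hypothetical sample data: (customer segment, transaction amount).
transactions = [
    ("retail", 120.0), ("retail", 80.0), ("retail", 95.0),
    ("retail", 2500.0), ("retail", 60.0),
    ("sme", 4000.0), ("sme", 5200.0), ("sme", 4800.0),
    ("sme", 30000.0), ("sme", 4500.0),
]


def segment_thresholds(rows, percentile=95):
    """Return an amount threshold per segment at the given percentile."""
    by_segment = defaultdict(list)
    for segment, amount in rows:
        by_segment[segment].append(amount)

    thresholds = {}
    for segment, amounts in by_segment.items():
        # quantiles(n=100) yields 99 cut points; index p - 1 is the p-th percentile.
        cuts = quantiles(amounts, n=100, method="inclusive")
        thresholds[segment] = cuts[percentile - 1]
    return thresholds


thresholds = segment_thresholds(transactions)
for segment, value in sorted(thresholds.items()):
    print(f"{segment}: flag amounts above {value:,.2f}")
```

A single organisation-wide threshold would either flood analysts with SME alerts or miss unusual retail activity; calibrating per segment is one simple way to reflect the behavioural differences described above.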

Data is the lifeblood of any transaction monitoring system, and therefore defining the data points required for the functioning of the rules is fundamental. Identifying data points is followed by an exercise of determining data gaps and data quality issues, and of feeding such data points to the transaction monitoring system. Decision-making around data gap and quality remediation, specifically around the timing, approach, and feasibility of closing such gaps, is key and must be looked at from a broader risk management context. Crucially, any rules impacted by data issues will likely stop at this stage in the lifecycle until such gaps are closed. The resultant risk exposure should be either mitigated through alternative means or accepted by those charged with governance.
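
The gap analysis described above can be sketched as follows. This is an illustration under stated assumptions: the field names, rule names, sample records and the `tolerance` parameter are hypothetical, and a real exercise would also test accuracy, not just completeness.

```python
# Hypothetical mapping of rules to the data points they depend on.
rule_fields = {
    "R1_large_cash": ["customer_id", "amount"],
    "R2_segment_velocity": ["segment", "amount"],
    "R3_high_risk_country": ["counterparty_country"],
}

# Hypothetical sample of records fed to the monitoring system.
records = [
    {"customer_id": "C1", "segment": "retail", "amount": 120.0, "counterparty_country": "MT"},
    {"customer_id": "C2", "segment": "", "amount": 900.0, "counterparty_country": None},
    {"customer_id": "C3", "segment": "sme", "amount": 4000.0, "counterparty_country": "DE"},
]


def count_gaps(records, fields):
    """Count records where each field is missing, None or an empty string."""
    gaps = {name: 0 for name in fields}
    for record in records:
        for name in fields:
            if record.get(name) in (None, ""):
                gaps[name] += 1
    return gaps


def blocked_rules(rule_fields, gaps, total, tolerance=0.0):
    """List rules relying on a field whose gap rate exceeds the tolerance."""
    if total == 0:
        return sorted(rule_fields)  # no data at all: every rule is blocked
    blocked = []
    for rule, fields in rule_fields.items():
        # A field that was never profiled is treated as fully missing.
        if any(gaps.get(name, total) / total > tolerance for name in fields):
            blocked.append(rule)
    return sorted(blocked)


all_fields = sorted({name for fields in rule_fields.values() for name in fields})
gaps = count_gaps(records, all_fields)
print(gaps)
print(blocked_rules(rule_fields, gaps, total=len(records)))
```

Rules reported as blocked are the ones whose risk exposure would need to be mitigated by other means or formally accepted until the underlying data is remediated.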

Rules design must be primarily focused on the targeted detection of specific typologies identified during the assessment phase. In addition to targeting typologies, rule design requires that due consideration be given to other factors such as time horizon, relevant segments, thresholds, and the interplay with other rules. Additionally, the functional capabilities of one’s transaction monitoring system and the accessibility of data points are overarching dependencies. Robust documentation of rules within a “rules inventory” provides transaction monitoring system implementers with clear instructions for configuration, a point of reference for ongoing review and ensures the formalisation of a key document for governance related matters.
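
One possible shape for an entry in such a rules inventory is sketched below. The class names, fields and the `amend_threshold` helper are hypothetical choices made for illustration; an SP's actual inventory may live in a document, a spreadsheet or the monitoring system itself.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RuleChange:
    """One logged modification, with its reasoning and approval."""
    changed_on: date
    description: str
    reason: str
    approved_by: str


@dataclass
class RuleRecord:
    """A single rule as documented in the inventory (illustrative fields)."""
    rule_id: str
    typology: str            # the typology the rule targets
    segments: list[str]      # customer segments in scope
    threshold: float
    lookback_days: int       # time horizon of the rule
    data_points: list[str]   # data the rule depends on
    change_log: list[RuleChange] = field(default_factory=list)

    def amend_threshold(self, new_threshold, reason, approved_by, changed_on=None):
        """Change the threshold and record why, when and who approved it."""
        self.change_log.append(
            RuleChange(
                changed_on=changed_on or date.today(),
                description=f"threshold {self.threshold} -> {new_threshold}",
                reason=reason,
                approved_by=approved_by,
            )
        )
        self.threshold = new_threshold


rule = RuleRecord(
    rule_id="R1",
    typology="Structuring of cash deposits",
    segments=["retail"],
    threshold=10000.0,
    lookback_days=7,
    data_points=["customer_id", "amount", "booked_at"],
)
rule.amend_threshold(8000.0, reason="Back-test showed missed cases", approved_by="MLRO")
print(rule.threshold, len(rule.change_log))
```

Keeping the change log on the rule itself means the rationale for every threshold change travels with the rule, which is the kind of audit trail regulators and new management teams typically ask for.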

Common pitfalls

- Adoption of “out-of-the-box rules”.

- Rules do not provide coverage required to mitigate key typologies.

- Inadequate thresholds.

- Weak data governance.

- No documentation of rules design.

Configure

The implementation of rules is a key task which, if done incorrectly, will have a considerable operational impact. Depending on the complexity of the overall IT architecture, implementation typically requires the involvement of multiple parties, such as the transaction monitoring system vendors or administrators, data warehouse administrators, IT developers and the project owners. Careful planning and coordination amongst all involved is critical to ensure that rules configured are reading the correct data and are operating as intended.

Functional testing of rules in a test environment prior to moving to production provides assurance that the rules as configured work as intended.

Back-testing new or amended rules through simulations on historical transaction data allows SPs to understand how rules are expected to behave prior to going into production. This is not only important to sense-check outcomes, but also provides insight on the impact of new or modified rules on the workload of transaction monitoring teams, thereby allowing for resource planning and change management.
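
A back-test of this kind can be sketched in a few lines. The rule below (a customer's daily total meeting or exceeding a threshold), the sample history and the figure of 30 minutes of analyst time per alert are assumptions chosen purely for illustration.

```python
from collections import defaultdict
from datetime import date

# Hypothetical historical transactions: (customer, booking date, amount).
history = [
    ("C1", date(2024, 1, 2), 4000.0),
    ("C1", date(2024, 1, 2), 7000.0),
    ("C2", date(2024, 1, 2), 9500.0),
    ("C2", date(2024, 1, 3), 600.0),
    ("C3", date(2024, 1, 3), 15000.0),
    ("C1", date(2024, 1, 4), 3000.0),
]


def backtest_daily_total(history, threshold):
    """Return the (customer, day) pairs the rule would have alerted on."""
    totals = defaultdict(float)
    for customer, day, amount in history:
        totals[(customer, day)] += amount
    return sorted(key for key, total in totals.items() if total >= threshold)


def estimated_hours(alert_count, minutes_per_alert=30):
    """Rough analyst workload implied by a number of alerts."""
    return alert_count * minutes_per_alert / 60


for label, threshold in (("current", 10000.0), ("proposed", 5000.0)):
    alerts = backtest_daily_total(history, threshold)
    print(label, threshold, len(alerts), estimated_hours(len(alerts)))
```

Comparing the alert counts for the current and proposed thresholds gives an early view of the change in workload, which can then feed resource planning before the rule goes live.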

Common pitfalls

- Delayed involvement of IT and system administrators.

- Failure to carry out sufficient testing of rules prior to go-live.

- No visibility on expected impact on the alert clearing workload.

Operate

New rules or changes to existing transaction monitoring rules invariably impact the Business As Usual (“BAU”) teams responsible for the analysis of alerts as well as those who support their clearance. Change management ensures that all impacted parties are aware of expected changes through ongoing communication and the provision of training, as required. Any expected changes in workload may have a bearing on resource management, which is key to ensuring that backlogs are not created and staff morale is not adversely impacted.

Alert case management is another key pillar of the transaction monitoring framework. Case management incorporates the workflows associated with: the capture and allocation of alerts to analysts; the process of reviewing, investigating, clearing, and escalating alerts; and quality control on the alerts managed. Effective case management workflows provide the functionality to assign alerts based on workload, to monitor the performance of analysts and enable quality control. Where investigations require reference to multiple systems, the use of data analytics and robotics can have a significant positive impact on the quality, efficiency and speed of the alert clearing process.

Common pitfalls

- Alert clearing teams are not prepared/trained for new or modified rules.

- Alert case management is not integrated into the TM system, and can be manual and/or heavily reliant on spreadsheets that are prone to error and inefficiency.

- More time is spent on manual data gathering and documentation than on the analysis of the alert.
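
The workload-based allocation of alerts mentioned under case management can be illustrated with a simple "least-loaded analyst first" assignment. The analyst names, alert identifiers and the `assign_alerts` function are hypothetical, and real case management tools typically also weigh skills, alert priority and conflicts of interest.

```python
import heapq


def assign_alerts(alert_ids, open_cases):
    """Assign each alert to the analyst who currently has the fewest open cases.

    open_cases maps analyst name -> number of cases already open.
    Ties are broken alphabetically by analyst name.
    """
    if not open_cases:
        raise ValueError("at least one analyst is required")
    heap = [(count, analyst) for analyst, count in open_cases.items()]
    heapq.heapify(heap)

    assignment = {}
    for alert_id in alert_ids:
        count, analyst = heapq.heappop(heap)              # least-loaded analyst
        assignment[alert_id] = analyst
        heapq.heappush(heap, (count + 1, analyst))        # their load grows by one
    return assignment


open_cases = {"ana": 2, "ben": 0, "cleo": 1}
print(assign_alerts(["A1", "A2", "A3", "A4"], open_cases))
```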

Optimise

Analysing alert data can provide insights into the effectiveness of existing transaction monitoring rules and provide a basis for recommendations on further optimisation of such rules. This analysis can involve a multi-step process:

A. Assess the number of alerts to determine the frequency with which a particular rule is flagged.

B. Conduct bucket analysis to sort the frequency with which a rule is flagged across various parameters e.g., by transaction value, account type, client risk rating, etc.

C. Determine scope for fine-tuning the rules based on the analysis conducted, using various metrics such as alert-to-SAR conversion rate, volume of false positives, etc.
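
Steps A to C can be sketched on a small set of closed alerts. The alert records, rule identifiers and outcome labels below are made-up sample data, and the function names are illustrative.

```python
from collections import Counter

# Hypothetical closed alerts with the rule that fired, the client risk
# rating and the investigation outcome.
alerts = [
    {"rule": "R1", "risk": "high", "outcome": "sar"},
    {"rule": "R1", "risk": "low", "outcome": "false_positive"},
    {"rule": "R1", "risk": "low", "outcome": "false_positive"},
    {"rule": "R1", "risk": "medium", "outcome": "false_positive"},
    {"rule": "R2", "risk": "high", "outcome": "sar"},
    {"rule": "R2", "risk": "high", "outcome": "sar"},
    {"rule": "R2", "risk": "low", "outcome": "false_positive"},
]


def rule_metrics(alerts):
    """Steps A and C: alert volume and alert-to-SAR conversion rate per rule."""
    totals = Counter(a["rule"] for a in alerts)
    sars = Counter(a["rule"] for a in alerts if a["outcome"] == "sar")
    return {
        rule: {"alerts": n, "sar_rate": sars[rule] / n}
        for rule, n in totals.items()
    }


def bucket_counts(alerts, rule, key):
    """Step B: break one rule's alerts down by a chosen parameter."""
    return Counter(a[key] for a in alerts if a["rule"] == rule)


print(rule_metrics(alerts))
print(bucket_counts(alerts, "R1", "risk"))
```

In this sample, a rule with a low conversion rate whose alerts cluster in low-risk buckets would be a natural candidate for threshold or segment tuning.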

Leveraging analytics can also help SPs to proactively identify risks and opportunities across a range of preventative financial crime use cases, helping to reduce operational workloads in case management. SPs may also be able to implement more targeted transaction thresholds by leveraging the historical information gathered through data analysis.

Simulation-led data analysis can provide insights into threshold tuning for optimisation of transaction monitoring rules and allow the SP to identify the effects of changing thresholds without having to implement them first. Experimentation in a sandbox environment can help the SP improve the flexibility and adaptability of rules for transaction monitoring, providing a testing area to build new rules or improve existing ones.
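
A threshold simulation of this kind might look like the sketch below, which replays labelled historical cases against several candidate thresholds. The cases, the candidate values and the `sweep_thresholds` function are assumptions for illustration only.

```python
# Hypothetical historical cases: (transaction amount, led to a SAR?).
cases = [
    (1200.0, False), (2500.0, False), (4800.0, False), (5200.0, True),
    (7500.0, False), (9900.0, True), (15000.0, True),
]


def sweep_thresholds(cases, candidates):
    """For each candidate threshold, report alert volume and SARs caught or missed."""
    total_sars = sum(1 for _, is_sar in cases if is_sar)
    results = []
    for threshold in candidates:
        flagged = [is_sar for amount, is_sar in cases if amount >= threshold]
        caught = sum(flagged)  # True counts as 1
        results.append({
            "threshold": threshold,
            "alerts": len(flagged),
            "sars_caught": caught,
            "sars_missed": total_sars - caught,
        })
    return results


for row in sweep_thresholds(cases, [1000.0, 5000.0, 10000.0]):
    print(row)
```

Laying the results side by side makes the trade-off explicit: a threshold can be raised as long as alert volume falls without previously reported activity being missed.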

Common pitfalls

- SPs view the setting of TM rules as a one-off exercise.

- Failure to optimise periodically results in redundant rules, unmitigated risks and high cost of compliance.

- Own transaction history is not used to identify new patterns and outliers.

Governance

Governance principles apply to every phase within the transaction monitoring lifecycle. The transaction monitoring framework ought to be formalised within a policy or methodology document. This should define framework objectives and vision, process ownership, escalation and approval protocols, quality control expectations, and performance metric targets, amongst others.

The maintenance of the rules inventory referred to in the “Develop” phase provides a go-to artefact for understanding the purpose and scope of the rules, the risks that they seek to mitigate, and a log of modifications over time with their respective reasoning and approvals.

Common pitfalls

- Over time, SPs lose track of the reasons why certain rules were set and configured.

- Organisations are unable to provide the rationale for historical changes in rules, such as thresholds, to regulators and other stakeholders.

- New management teams reinvent the wheel on rules rather than optimise.

Introducing new technologies into the transaction monitoring process

To achieve transaction monitoring optimisation, SPs can consider the introduction of new technologies such as Artificial Intelligence. There is a growing consensus that adopting technological innovations including robotics, cognitive automation, machine learning, data analytics and AI can significantly enhance compliance processes, including transaction monitoring. In tandem, regulators have also displayed an increasing openness to SPs implementing such techniques; for example, in April 2023, the FIAU issued a guidance note that encouraged the consideration of innovative technologies in transaction monitoring. SPs are designing AI tools to improve the identification of suspicious transactions and to refine the screening of Politically Exposed Persons (PEPs), sanctioned individuals and organisations. Until recently, SPs had heavily relied on rules-based systems for their transaction monitoring, which presented several limitations. However, SPs are now turning to machine learning to benefit from significant improvements in reducing the volume of false positives, increasing efficiency, and lowering the associated cost of compliance.

Among the variety of different machine learning uses, the transaction monitoring process presents a significant opportunity for application due to the solution’s ability to make judgments and to identify behavioural patterns.

Risk rating suspicious behaviour, alert classification and noise reduction

Machine learning algorithms can reduce false positive alerts by detecting suspicious behaviour and risk-classifying alerts as being higher or lower risk following an RBA. Advanced machine learning techniques allow resources to focus on high-risk activity by automating the detection of alerts that are likely to require investigation and auto-closing alerts that are non-suspicious.

Anomaly detection and identification of transactional patterns

Machine learning techniques can identify patterns, data anomalies and relationships amongst suspicious entities that could have gone unnoticed under a rules-based approach.
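
To make the idea of anomaly detection concrete, the sketch below flags amounts that deviate strongly from a customer's own history. It deliberately uses a simple robust statistic (the modified z-score based on the median absolute deviation) as a stand-in, not a machine learning model; the sample amounts and the cutoff of 3.5 are assumptions.

```python
from statistics import median


def robust_outliers(amounts, cutoff=3.5):
    """Return amounts whose modified z-score exceeds the cutoff.

    The score uses the median and the median absolute deviation (MAD),
    so a single extreme value does not distort the baseline.
    """
    centre = median(amounts)
    mad = median(abs(x - centre) for x in amounts)
    if mad == 0:
        return []  # no spread in the history: nothing can be scored
    # 0.6745 rescales the MAD so the score is comparable to a z-score
    # for normally distributed data.
    return [x for x in amounts if 0.6745 * abs(x - centre) / mad > cutoff]


# Hypothetical transaction history for one customer.
customer_history = [100.0, 110.0, 95.0, 105.0, 98.0, 102.0, 5000.0]
print(robust_outliers(customer_history))
```

Unlike a fixed-amount rule, this kind of check adapts to each customer's normal behaviour; trained models extend the same principle to many features and to relationships between entities.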

Transaction data analytics may also provide invaluable insight about customer behaviour that may be used to identify upselling opportunities, thereby contributing to the achievement of the SP’s commercial goals.

To conclude, the implementation of an iterative framework provides SPs with a structured approach to the continuous optimisation of their transaction monitoring activities. This translates into a significant improvement in the SP’s ability to identify suspicious activity whilst getting far better value from the cost of compliance.