Quantum computers: The next supercomputers, but not the next laptops

TMT Predictions 2019

12 December 2018

When quantum computers come, they will be big, immovable, expensive, and good only for certain types of problems. So why is it still imperative to pay attention to quantum computing now?

Deloitte Global is making not one, but five predictions about quantum computers (QCs) for 2019 and beyond:

- Quantum computers will not replace classical computers for decades, if ever. It is expected that 2019 or 2020 will see the first proven example of “quantum supremacy,” sometimes known as “quantum superiority”: a case where a quantum computer performs a task that no classical (traditional transistor-based digital) computer can complete in a practical amount of time or with a practical amount of resources. While this will be an important milestone, the term “supremacy” may mislead. There will be certain useful and important computational problems that QCs solve better, but that does not mean that QCs will be superior for all, most, or even 10 percent of the world’s computing tasks.

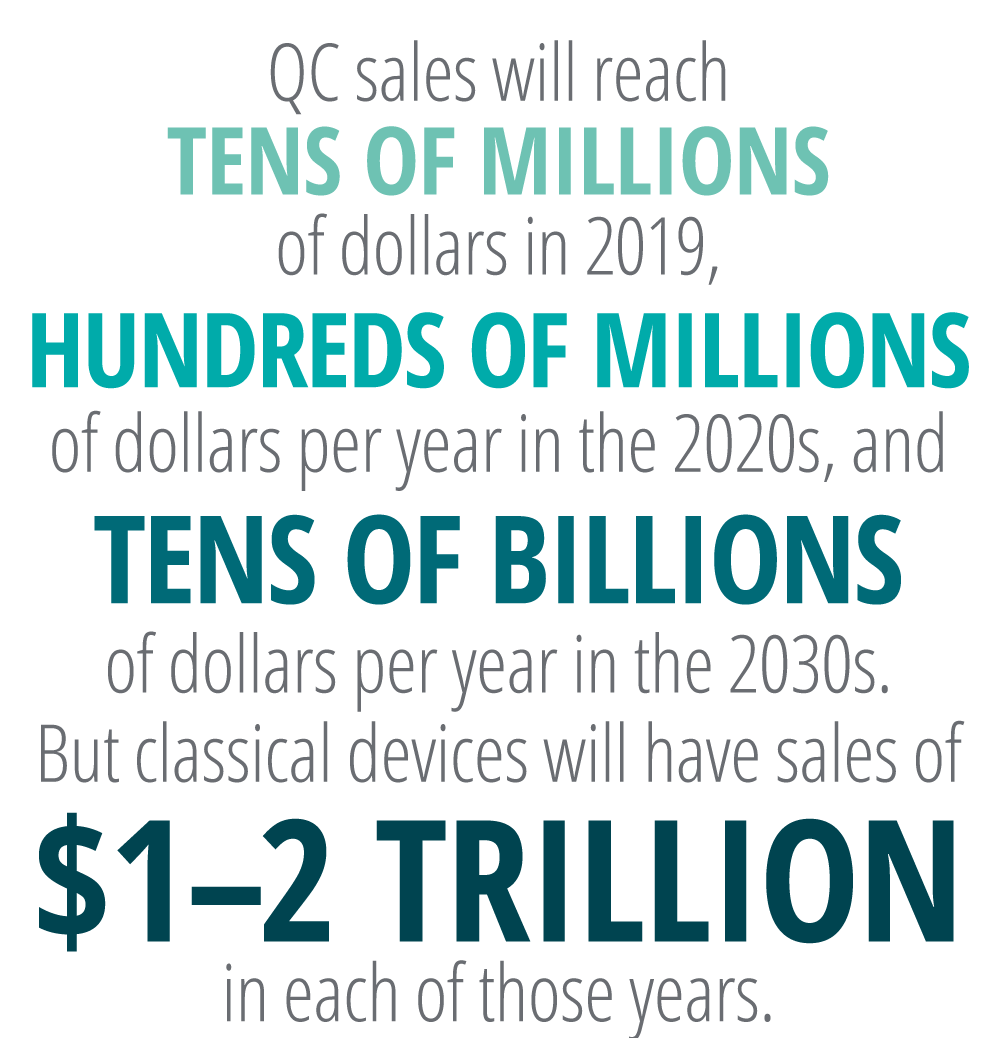

- The quantum computer market of the future will be about the size of today’s supercomputer market—around US$50 billion. In contrast, the market for classical computing devices (ranging from consumer smartphones up to enterprise supercomputers) is expected to be worth over US$1 trillion in 2019.1 Even in 2030, none of the billions of smartphones, computers, tablets, and lower-level enterprise computing devices in use will be quantum-powered, although they may sometimes or even often use quantum computing via the cloud.

- The first commercial general-purpose quantum computers will appear in the 2030s at earliest. The 2020s will likely be a time of progress in quantum computing, but the 2030s are the most likely decade for the larger market to develop.2 (It is worth noting that some serious scientists believe that a general QC will never be built,3 although this is a minority opinion.)

- The Noisy Intermediate Scale Quantum (NISQ) computing market, which uses what could be considered early-stage QCs, will be worth hundreds of millions of dollars per year in the 2020s. These so-called “NISQ” computers have “noisy” qubits and are less reliable than the more powerful and flexible QCs that will eventually be built, but their enhanced computing power is still useful, and they are likely to be commercially valuable. The full range of industries that will be able to take advantage of NISQ computing is unclear, but organizations in the biological and chemical sciences are almost certain to find it useful.

- The quantum-safe security industry is also likely to be worth hundreds of millions of dollars per year in the 2020s. One area in which large QCs will almost certainly deliver an exponential speedup is security and cryptography. A technique called Shor’s algorithm is known (when executed by a sufficiently large quantum computer) to be able to break many current public key cryptosystems,4 such as RSA and ECC. Enterprises and governments should start protecting against the threat of powerful QCs today, not when such machines arrive,5 since by then it will be too late.

A theoretical milestone with little pragmatic impact

QCs seem to have been hovering just over the horizon for years, if not decades. For those who are getting tired of waiting, the discipline is likely to celebrate an important milestone in the next couple of years: the achievement of “quantum supremacy.” When that happens, what will change?

The pragmatic answer is: not much at first. Although quantum supremacy will mark a conceptual turning point, the reality is that QCs will still be, at least in the near term, difficult to build, awkward to house, and challenging to program—and therefore not ready for the commercial market any time soon. However, progress in this domain is ongoing (albeit in fits and starts), and quantum computing holds a great deal of promise (both scientific and economic) for the future. To be able to sort through the hype that will undoubtedly surround quantum supremacy, it’s useful to understand some of the fundamentals behind quantum computing more thoroughly.

What’s a quantum computer made of?

QCs are measured by their number of quantum bits, or qubits, which are the equivalent of a transistor in a classical computer. Today’s QCs contain only physical qubits—embodied as two-state quantum systems such as a pair of trapped ions—which rapidly decay and are prone to error. It takes an estimated 1,000 physical qubits to make a single logical qubit—that is, a qubit that is fault-tolerant and error-corrected—and this goal is currently still far out of reach. A universal or general QC (which is what is needed to be able to solve a much larger and wider set of problems), in turn, will require hundreds of logical qubits, and therefore hundreds of thousands of physical qubits.

As of 2018, QCs with 20 physical qubits6 and 19 physical qubits7 exist whose performance specifications are known and published. Devices with 50, 72, and even 128 physical qubits have also been publicly announced, but none of their specs have been published, so their levels of control and error are not known. It is believed that quantum supremacy will be achieved with a machine that has 60 or more physical qubits,8 but progress is slow, since adding physical qubits becomes harder as their number increases. Nonetheless, by 2020, a QC of more than 60 physical qubits will almost certainly have been developed and its specs published, and it is likely that the first proof of quantum supremacy will have been achieved.

A 200-logical-qubit machine, which is about the minimum size that is expected to be a commercially useful general-purpose QC (and which would be composed of 200,000 physical qubits, three to four orders of magnitude more than the devices of 2018), is almost certainly much more than five years away, and possibly more than ten. But when it arrives, such devices will be large, nonportable, and expensive (costing millions of dollars); they will require experts to program and run; and they will be superior to classical computers for only a specific, limited set of hard computation problems. Because of this, whether it happens in 2025 (unlikely) or 2045 (more probable), the global market for general-purpose QC hardware (as distinct from the software and services it enables) is likely to be around US$50 billion per year. This is about the same size as the contemporary supercomputer market (which is also made up of large, nonportable million-dollar devices that are only suited to solving certain hard problems), which was worth about US$32 billion in 2017 and is expected to grow to US$45 billion by 2022.9
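The sizing arithmetic in the two paragraphs above can be checked with a short Python sketch. The constants are the article's own estimates (1,000 physical qubits per logical qubit, 200 logical qubits); the function names are illustrative only. The size of the gap depends on which 2018 device is taken as the baseline.

```python
import math

# Figures quoted in the article (estimates, not measured values).
PHYSICAL_PER_LOGICAL = 1_000   # estimated physical qubits per logical qubit
LOGICAL_QUBITS_NEEDED = 200    # rough minimum for a useful general-purpose QC


def physical_qubits_required(logical_qubits: int,
                             overhead: int = PHYSICAL_PER_LOGICAL) -> int:
    """Physical qubits needed for a given number of logical qubits."""
    return logical_qubits * overhead


def orders_of_magnitude_gap(target: int, current: int) -> float:
    """Base-10 logarithm of the ratio between target and current sizes."""
    return math.log10(target / current)


target = physical_qubits_required(LOGICAL_QUBITS_NEEDED)
print(f"Target: {target:,} physical qubits")

# 2018 device sizes mentioned in the article: 20 qubits with published specs,
# 128 qubits announced without published specs.
for label, size in [("20-qubit device", 20), ("128-qubit device", 128)]:
    gap = orders_of_magnitude_gap(target, size)
    print(f"Gap versus {label}: about {gap:.1f} orders of magnitude")
```

Changing `PHYSICAL_PER_LOGICAL` shows how sensitive the target is to the error-correction overhead, which the article itself describes as an estimate.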

Quantum computing is cool. Really cool!

QCs require controlling and maintaining the quantum behavior of their qubits. Because temperature is often an obstacle to achieving this stability, many physical implementations of QCs are done at extremely low temperatures.

Absolute zero, the temperature at which the thermal motion of atoms is at its minimum, is -273.15° on the Celsius scale (-459.67° on the Fahrenheit scale; 0 on the Kelvin scale). Nitrogen turns liquid at 77K, and helium liquefies at about 4K. As of 2018, the most common physical implementations (devices from Google, Intel, IBM, and D-Wave) rely on temperatures well below 4K, usually around 0.015K (15 millikelvin), although some operate at even lower, microkelvin levels. Such machines and their associated cooling systems, by necessity, weigh thousands of kilograms, are the size of a small car, cost millions of dollars, and use many kilowatts of power. This is not just true today; any QC that requires millikelvin temperatures will continue to be roughly that large, expensive, and energy-consuming even in the 2030s.
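For readers more used to Celsius or Fahrenheit, the temperatures quoted above can be converted with the standard formulas. The following is a minimal Python sketch; the function names are illustrative.

```python
def kelvin_to_celsius(kelvin: float) -> float:
    """Convert a temperature from kelvin to degrees Celsius."""
    return kelvin - 273.15


def kelvin_to_fahrenheit(kelvin: float) -> float:
    """Convert a temperature from kelvin to degrees Fahrenheit."""
    return kelvin * 9 / 5 - 459.67


# Temperatures mentioned in the text above.
temperatures = [
    ("absolute zero", 0.0),
    ("liquid nitrogen", 77.0),
    ("liquid helium (approx.)", 4.0),
    ("typical QC operating point", 0.015),
]

for label, kelvin in temperatures:
    celsius = kelvin_to_celsius(kelvin)
    fahrenheit = kelvin_to_fahrenheit(kelvin)
    print(f"{label}: {kelvin} K = {celsius:.3f} °C = {fahrenheit:.3f} °F")
```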

There are, however, proposed physical implementations that require “merely” the very cold temperatures that can be achieved with liquid nitrogen. These machines would be cheaper and smaller, albeit still larger and more expensive than almost all classical computers. There are also hopes for room-temperature QC technologies, but none of these have yet been demonstrated to work at more than one or two physical qubits.

Failing those room-temperature solutions, it becomes clear that we are not going to have quantum computing on our smartphones, except through the cloud!

Quantum computing is important today

Although the QC market will take years to arrive, will not replace classical computers, and will be worth US$50 billion rather than trillions of dollars in the 2030s, this is still a lot more than what is essentially zero today. Indeed, QC will be one of the largest “new” technology revenue opportunities to emerge over the next decade. In fields where quantum supremacy has been achieved, whole industries will be transformed.

Further, it’s not just quantum computing itself that is important, but also the innovations that quantum computing is prompting in traditional computing. The prospect of QCs is galvanizing the classical computing industry, with many advances occurring in the use of classical computers to simulate quantum techniques.10 These advances will be useful long before large commercial QCs are available.

Quantum-safe security was important yesterday

One frightening aspect of QC development is the certainty—not merely the potential—that QCs will be used to crack previously undecipherable codes and breach previously unhackable systems. This will likely only happen when commercial QCs hit the market (probably in the 2030s,11 although some academics even give it a one-in-six chance of happening by 202612), but the time to start planning for it is now. Confidential data, over-the-air software updates, identity management systems, long-lived connected devices, and anything else with long-term security obligations must be made quantum safe before large QCs are finally developed. Indeed, there are several industries where the time to start quantum-proofing has already passed. Organizations in the automotive, military and defense, power and utilities, health care, and financial services sectors are today deploying long-lived systems that are not quantum-safe, exposing them to significant liability and financial overhead in the future. And that’s not the worst that could happen. From a national security perspective, malicious adversaries could store classically encrypted information today to decrypt in the future using a QC, in a gambit known as a “harvest-and-decrypt” attack.

Definitions and glossary of quantum computing terms

Classical computer: The traditional form of binary digital electronic computing device, almost always running on silicon semiconductor transistor and integrated-circuit hardware.

Quantum computer: A computer that uses quantum-mechanical phenomena, such as superposition and entanglement, to perform its calculations. Various (more than 10) contending physical implementations are being tried, many of which require ultra-low temperatures. It is not clear at this time which physical implementation will triumph. Quantum computers are not better than classical computers at everything; they can offer spectacular speedups for certain tasks, but do no better at others, or could even be worse.

Superposition and entanglement: This is the secret sauce of how quantum computers do what they do … but it is not necessary to understand these terms to understand quantum computing’s likely market size and timing of commercial availability. For those who wish to know more about superposition and entanglement, many online articles explain them.

Quantum supremacy or superiority: Both terms are used more or less interchangeably to denote the point at which a quantum computer will be able to perform a certain task that no classical computer can execute in a practical amount of time or using a practical amount of resources. It is critical to note that just because a QC has demonstrated supremacy for one problem does not mean that it is superior for all other—or even any other—problems. There are different degrees by which a QC can provide speeds greater than a classical computer (see the below entries on quadratic and exponential speedups).

Quantum advantage: Although quantum supremacy is an important theoretical milestone, it is possible that it will be for a computing problem that is of no or little practical importance. Therefore, many believe that quantum advantage will be the more important breakthrough: when a quantum computer can perform a certain useful task that no classical computer can solve in a practical amount of time or using a practical amount of resources.

Quadratic speedup: There are certain computational problems, such as searching an unordered list,13 where a QC would outperform a classical computer by a quadratic amount: If it takes a classical computer N steps to run the process, the QC can do it in √N. (Grover’s algorithm is the most famous example of a technique that QCs could use to show a quadratic speedup.) For example, if a given calculation would take 365 days on a classical computer, it would take only 19.1 days on a QC. However, there are few real-world computing tasks done today that take a year, so it is more realistic to say that, if a computation takes eight hours on a classical machine, then it could be done in less than three hours on a QC. That may be a big enough speedup to justify using a quantum computer that costs millions of dollars and requires specialists to run and program it … or it might not. The business case for using a QC when the speedup is only quadratic is not always compelling.
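The figures in the quadratic speedup entry can be reproduced with a few lines of Python. This is only a sketch of the arithmetic: it takes the square root of each figure as stated. Strictly, the square root applies to the number of steps N rather than to a duration, so the day and hour figures are illustrative.

```python
import math


def quadratic_speedup(classical_steps: float) -> float:
    """Steps needed with a quadratic (Grover-style) speedup: sqrt(N)."""
    return math.sqrt(classical_steps)


# A search over one million unordered items: N steps classically,
# about sqrt(N) steps with a quadratic speedup.
print(f"1,000,000 steps -> {quadratic_speedup(1_000_000):,.0f} steps")

# The article's examples, using each figure in the unit in which it is given.
print(f"365 days -> {quadratic_speedup(365):.1f} days")
print(f"8 hours  -> {quadratic_speedup(8):.2f} hours")
```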

Exponential speedup: The real case for QCs comes when they provide an exponential speedup, as is the case for certain problems such as breaking public key encryption or simulating chemical and biological systems. If a classical machine would take nine billion years to crack a public key by brute force, a quadratic speedup that reduces the calculation time to 3 billion years is not useful. But an exponential speedup that cracks the code in minutes, or even seconds, is potentially transformative. However, it is unclear just how often QCs will be able to offer exponential speedups. Only a small number of problems are known today where QCs would offer exponential speedups, but optimists note that more will become known over time.

Physical qubit: Any two-level quantum-mechanical system can be used as a qubit. These systems include, but are not limited to, photons, electrons, atomic nuclei, atoms, ions, quantum dots, and superconducting electronic circuits. As of 2018, most large QCs use either superconducting qubits or trapped ion technologies.

Logical qubit: A logical qubit uses multiple unreliable physical qubits to produce one reliable logical qubit that is both fault-tolerant and error-corrected. As of 2018, no one has built a logical qubit. The assumption is that a large number of physical qubits will be required to make a logical qubit; the current consensus is that hundreds or even thousands of physical qubits will be required. Large numbers of logical qubits are necessary for a universal or general QC that can solve a wide range of problems.

Noisy Intermediate Scale Quantum (NISQ) computing: Although a device composed of hundreds of logical qubits is the ultimate goal, devices with large numbers of physical qubits are likely to have some commercially interesting uses. These could be thought of as single-task QCs or simulators. According to a leading quantum researcher, Dr. John Preskill, “The 100-qubit quantum computer will not change the world right away—[but] we should regard it as a significant step toward the more powerful quantum technologies of the future.”14

Quantum simulation: The subjects of certain problems, such as chemical processes, molecular dynamics, and the electronic properties of materials, are actually quantum systems. Classical computers are notoriously ill-suited to performing simulations of quantum systems, and they must rely on crude approximations. Since QCs are also governed by the rules of quantum mechanics, they are well-suited to performing efficient simulations of other quantum systems.

Simulation of quantum computers by classical computers: Everything that can be done by today’s early-stage QCs can also be done about as quickly on a classical machine simulating quantum computing. Many researchers believe that, at some specific number of physical qubits, a classical machine will be unable to match the QC device; that point would mark the achievement of quantum supremacy. The twist, however, is that the technology behind the classical simulation of quantum devices is advancing more or less as quickly as the number of physical qubits in QCs is growing. In 2017, when the state of the art was a 20-physical-qubit machine, it was thought that a classical machine could match a 42-physical-qubit QC (but not a 48-physical-qubit QC) for a particular problem. In 2018, as machines with more physical qubits were in development, a mathematical advance showed that a classical computer could now match the 48-physical-qubit device via simulation. So, at least for that specific problem, the “supremacy bar” has been raised, and supremacy will likely not be achieved without a 60-physical-qubit QC. But the supremacy bar cannot be raised indefinitely: For a classical computer merely to store the full mathematical representation of a modestly sized (100-qubit) QC would require about 2^100 (roughly 10^30) complex numbers, far more than any existing storage system can hold.
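To see why the storage requirement grows so quickly, the sketch below estimates the memory needed to hold a full state vector of n qubits, which has 2^n complex amplitudes. It assumes 16 bytes per amplitude (two 64-bit floating-point numbers), which is an assumption of this example rather than a figure from the article. It also covers only the brute-force state-vector approach; other simulation techniques can need less memory for particular problems.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number as two 64-bit floats


def state_vector_bytes(num_qubits: int) -> int:
    """Bytes needed to store all 2**n amplitudes of an n-qubit state."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


def human_readable(num_bytes: int) -> str:
    """Format a byte count using binary (1024-based) units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB", "ZiB", "YiB"]
    value = float(num_bytes)
    for unit in units:
        # Stop at the first unit that fits, or at the largest unit available.
        if value < 1024 or unit == units[-1]:
            return f"{value:,.1f} {unit}"
        value /= 1024


# Qubit counts mentioned in the entry above.
for n in (20, 42, 48, 60, 100):
    print(f"{n:>3} qubits: {human_readable(state_vector_bytes(n))}")
```

Each additional qubit doubles the requirement, so even large improvements in classical hardware shift the reachable qubit count by only a few qubits.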

Bottom line

Organizations and governments can take steps now to help capitalize upon—and protect themselves in—a quantum-computing world:

Create a long-range quantum-safe cybersecurity plan. It is definitely not too early to begin planning to fortify cyber defenses against a quantum future. The National Institute of Standards and Technology (NIST, part of the US Department of Commerce) recently assessed the threat of quantum computers and advised organizations to develop “crypto agility”—that is, the ability to swiftly switch out cryptographic algorithms for newer, more secure ones as they are released or approved by NIST.15 Organizations should pay attention to these developments and have roadmaps in place to follow through on those recommendations.16
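One way to picture “crypto agility” in software is a design in which every protected message records which algorithm was used, and the algorithm for new messages is chosen in a single place. The Python sketch below illustrates that idea only. The registry, the `Envelope` type, and the function names are hypothetical, and the HMAC variants are stand-ins chosen because they ship with Python's standard library; they are symmetric message-authentication codes, not public-key or quantum-safe algorithms.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import Callable, Dict, Optional

# Hypothetical registry: algorithm ID -> function(key, message) -> tag.
# HMAC is a stand-in here, NOT a public-key or post-quantum scheme.
Signer = Callable[[bytes, bytes], bytes]

ALGORITHMS: Dict[str, Signer] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha512": lambda key, msg: hmac.new(key, msg, hashlib.sha512).digest(),
}

# Single configuration point: changing this value migrates new messages.
DEFAULT_ALGORITHM = "hmac-sha256"


@dataclass(frozen=True)
class Envelope:
    """A message together with the ID of the algorithm that protects it."""
    algorithm: str
    message: bytes
    tag: bytes


def protect(key: bytes, message: bytes,
            algorithm: Optional[str] = None) -> Envelope:
    """Protect a message with the named (or default) algorithm."""
    chosen = algorithm or DEFAULT_ALGORITHM
    return Envelope(chosen, message, ALGORITHMS[chosen](key, message))


def verify(key: bytes, envelope: Envelope) -> bool:
    """Verify an envelope using whichever algorithm it declares."""
    signer = ALGORITHMS.get(envelope.algorithm)
    if signer is None:
        return False  # unknown or retired algorithm
    expected = signer(key, envelope.message)
    return hmac.compare_digest(expected, envelope.tag)


old = protect(b"secret", b"firmware v1")                 # default algorithm
new = protect(b"secret", b"firmware v2", "hmac-sha512")  # migrated algorithm
print(verify(b"secret", old), verify(b"secret", new))
```

Because callers never name an algorithm directly, adding a newly approved algorithm means registering it and changing the default, while old messages remain verifiable until their algorithm is retired from the registry.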

For companies working at the atomic level, think about NISQ. Single-task quantum devices of 50–100 physical qubits, though unsuited to most tasks, can be useful for modeling atomic behavior, and they will become available in the relatively near term. Companies in chemistry and biology will almost certainly benefit. Many companies in these fields are already investing in classical high-performance computing (HPC) resources;17 adding a NISQ initiative just makes sense.

For companies working at the regular-size level, also think about NISQ. More fields than chemistry and biology can use NISQ computers. In the financial sector, for instance, it is believed that these intermediate QCs can perform portfolio optimization,18 while other possible financial applications include trading strategy development, portfolio performance prediction, asset pricing, and risk analysis.19 The transportation industry is also looking at QCs: Some car companies are testing them for traffic modeling, machine learning algorithms, and better batteries.20 The logistics industry sees potential in QCs for route planning, flight scheduling, and solving the traveling salesman problem (a famously difficult task for classical computers).21 And, not unlike HPCs, NISQ computers are likely to find a place in both government and academia: for weather modeling22 and nuclear physics,23 to name just two examples.

Update high-performance computing architectures.24 Enterprises in industries that have already invested in HPCs, such as aerospace and defense, oil and gas, life sciences, manufacturing, and financial services, should familiarize themselves with the impact that quantum computing may have on the architecture of HPC systems. Hybrid architectures that link conventional HPC systems with quantum computers may become common. One company, for instance, has described an HPC–quantum hybrid for the simulation and design of a water distribution system; it uses quantum annealing, a restricted version of quantum computation, to narrow down the set of design choices that need to be simulated on the conventional system, with the potential to significantly reduce total computation time.25

Reimagine analytic workloads. Many companies regularly run large-scale computations for risk management, forecasting, planning, and optimization. Quantum computing could do more than just accelerate these computations—it could enable organizations to rethink how they operate, and to tackle entirely new challenges. Executives should ask themselves, “What would happen if we could do these computations a million times faster?” The answer could lead to new insights about operations and strategy.

As observed earlier, companies may even be able to reap some benefits from quantum computing before the machines themselves are commercially available. Quantum computing researchers have discovered improved ways of solving problems using conventional computers. Some researchers are seeking to bring “quantum thinking” to classical problems.26 A startup that offers quantum-inspired computing technology for machine intelligence claims to be seeing increases in computational speed using this approach.27

Explore academic R&D partnerships. Companies may find it worth allocating R&D dollars to collaborations with academic research institutions working in this area, as Commonwealth Bank of Australia is doing.28 An academic research partnership could be an effective way for an organization to get an early start on building knowledge and exploring the applications of quantum computing. Research institutions currently active in quantum computing include the University of Southern California, Delft University of Technology, University of Waterloo, University of New South Wales, University of Maryland, and Yale Quantum Institute.

Most CIOs will not be submitting budgets with line items for quantum computing in the next two years. But that doesn’t mean leaders should ignore this field. Because it is advancing rapidly, and because its impact is likely to be large, business and technology strategists should keep an eye on quantum computing starting now. Large-scale investments will not make sense for most companies for some time. But investments in internal training, R&D partnerships, and strategic planning for a quantum world may pay dividends.
