Industrialized analytics: Data is the new oil. Where are the refineries?

25 February 2016

To realize data’s full potential takes more than talent, platforms, and processes. It requires creating a structure for data assets and their use in analytics that can enable repeatable results and scale.

Data is a foundational component of digital transformation. Yet, few organizations have invested in the dedicated talent, platforms, and processes needed to turn information into insights. To realize data’s full potential, some businesses are adopting new governance approaches, multitiered data usage and management models, and innovative delivery methods to enable repeatable results and scale. Indeed, they are treating data analysis as a strategic discipline and investing in industrial-grade analytics.

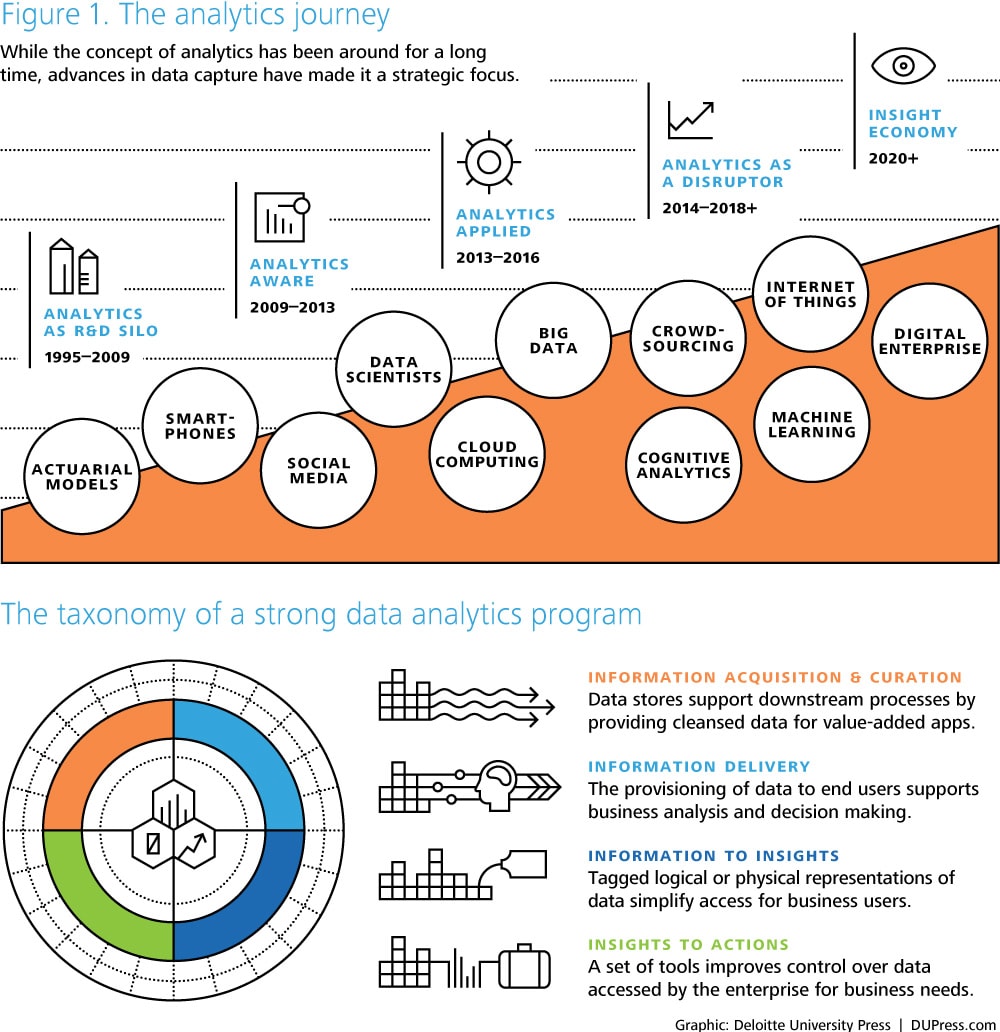

Over the past 10 years, data has risen from an operational byproduct to a strategic boardroom concern. Harnessing analytics has led to new approaches to customer engagement;1 the ability to amplify employee skills and intelligence;2 new products, services, and offerings; and even opportunities to explore new business models. In these times of talent scarcity, data scientists continue to be particularly prized, even more today than in 2012, when Harvard Business Review declared data scientist the “sexiest job of the 21st century.”3

Analytics now dominates IT agendas and spend. In Deloitte’s 2015 global CIO survey, which polled 1,200 IT executives, respondents identified analytics as both a top investment priority and the IT investment that would deliver the greatest business impact. In a similar survey of a broader executive audience, 59 percent of participants either included data and analytics among the top five issues or considered it the single most important way to achieve a competitive advantage.4 Advances in distributed data architecture, in-memory processing, machine learning, visualization, natural language processing, and cognitive analytics have unleashed powerful tools that can answer questions and identify valuable patterns and insights that would have seemed unimaginable only a few years ago. Perhaps Ann Winblad, senior partner at technology venture capital firm Hummer-Winblad, said it best: “Data is the new oil.”5

Against this backdrop, it seems almost illogical that few companies are currently making the investments needed to harness data and analytics at scale. Where we should be seeing systemic capabilities, sustained programs, and focused innovation efforts, we see instead one-off studies, toe-in-the-water projects, and exploratory investments. While they may serve as starting points, such circumscribed efforts will likely not help companies effectively confront daunting challenges around master data, stewardship, and governance.

It’s time to take a new approach to data, one in which CIOs and business leaders deploy the talent, data usage and management models, and infrastructure required to enable repeatable results and scale. By “industrializing” analytics in this way, companies can finally lay the foundation for an insight-driven organization6 that has the vision, underlying technology capabilities, and operating scale necessary to take advantage of data’s full potential.

Thinking boldly

Beyond enabling scale and predictability of outcome, industrializing analytics can also help companies develop a greater understanding of data’s possibilities and how to achieve them. Many data efforts are descriptive and diagnostic in nature, focusing primarily on what happened and why things happened the way they did. These are important points, but they only tell part of the story. Predictive analytics broadens the approach by answering the next obvious question—“what is going to happen?” Likewise, prescriptive analytics takes it one step further by helping decision makers answer the ultimate strategic question: “What should I do?” Business executives have been trained to limit their expectations to the “descriptive” analytics realm. Education and engagement may help inspire these leaders to think more boldly about data and their potential. It could lead them to embrace the advanced visualization tools and cognitive techniques they’ll need to identify useful patterns, relationships, and insights within data. Most importantly, it could help them identify how those insights can be used to drive impact and real business outcomes.
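The difference between these tiers can be illustrated with a minimal sketch. The sales figures, the linear-trend forecast, and the 10 percent safety margin below are all illustrative assumptions, not anything drawn from the companies discussed here; diagnostic analytics ("why did it happen?") is omitted for brevity.

```python
from statistics import mean

# Hypothetical monthly unit sales; the numbers are illustrative only.
monthly_sales = [100, 110, 120, 130, 140, 150]


def describe(sales):
    """Descriptive: summarize what happened."""
    return {"total": sum(sales), "average": mean(sales)}


def predict_next(sales):
    """Predictive: extend a least-squares linear trend one period ahead."""
    n = len(sales)
    xs = list(range(n))
    x_bar, y_bar = mean(xs), mean(sales)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, sales)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    intercept = y_bar - slope * x_bar
    return intercept + slope * n


def prescribe_stock(forecast, safety_margin=0.1):
    """Prescriptive: turn the forecast into a recommended action (units to stock).

    The safety margin is an assumed business rule, not a standard value.
    """
    return round(forecast * (1 + safety_margin))


summary = describe(monthly_sales)
forecast = predict_next(monthly_sales)
recommended_stock = prescribe_stock(forecast)
```

Each function answers a different question from the same data: the first reports on the past, the second estimates the next period, and the third converts that estimate into a decision. Real prescriptive models would weigh costs and constraints rather than apply a fixed margin.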

Any effort to industrialize analytics should set forth the data agenda and delineate its strategic aspirations. This agenda can help define a company’s vision for data usage and governance and anchor efforts around key performance indicators that matter to the business. Moreover, it can help line up stakeholder support by clearly describing how an overarching analytics strategy might benefit individual groups within the enterprise. Demos and proofs of concepts that illuminate how analytics, dashboards, and advanced data analysis techniques can help drive strategy and communicate intent can be especially powerful here.

The times they are a-changin’

In addition to thinking more boldly about analytics’ and data’s potential, companies should rethink their current approaches for deploying and managing analytics. Many data efforts follow a well-worn path: Identify what you want to know. Build a data model. Put the data into a data warehouse. Cleanse, normalize, and then, finally, analyze the data. This approach has long been sufficient to generate passive historical reports from batch-driven feeds of company-owned, structured, transactional data.

Today, analyzing exploding volumes of unstructured data from across the value chain requires a different approach—and a different architecture. The way businesses should operate in today’s data-rich world is to acquire the data first, and then figure out what they may be able to do with it. Reordering the process in this way can lead to new approaches to data storage and acquisition, including distributed data solutions, unstructured data repositories, and data lakes. It also lends itself to applying advanced visualization, machine learning, and cognitive techniques to unearth hidden patterns and relationships. Or, in extreme cases, it makes it possible for decisions to be made and actions taken without human intervention—or even without human comprehension.7

This doesn’t mean that traditional “extract, transform, load” techniques are no longer valid. Data warehouses, business intelligence, and performance management tools still have a role to play. Only now, they can be more effective when complemented with new capabilities and managed with an eye toward creating repeatable enterprise platforms with high potential reuse.

Especially important are several long-standing “best practice” concepts that have become mandates. Master data management—establishing a common language for customers, products, and other essential domains as the foundation for analytics efforts—is more critical than ever. Likewise, because “anarchy doesn’t scale,”8 data governance controls that guide consistency and adoption of evolved platforms, operating models, and visions remain essential. Finally, data security and privacy considerations are becoming more important than ever as companies share and expose information, not just across organizational boundaries, but with customers and business partners as well.

Who, where, and how

The way a company organizes and executes around analytics is a big part of the industrialized analytics journey. The analytics function’s size, scale, and influence will evolve over time as the business view of analytics matures and the thirst for insight grows. Consider how the following operating models, tailored to accommodate industry, company, and individual executive dynamics, could support your analytics initiatives:

- Centralized: Analysts reside in one central group where they serve a variety of functions and business units and work on diverse projects.

- Consulting: Analysts work together in a central group, but are deployed against projects and initiatives that are funded and owned by business units.

- Center of excellence: A central entity coordinates the activities of analysts across units throughout the organization and builds a community to share knowledge and best practices.

- Functional: Analysts are located in functions like marketing and supply chain where most analytical activity occurs.

- Dispersed: Analysts are scattered across the organization in different functions and business units with little coordination.

Regardless of organizational structure, an explicit talent and development model is essential. Look to retool your existing workforce. Modern skill sets around R programming and machine learning routines require scripting and Java development expertise. Recruit advocates from the line of business to provide much-needed operational and macro insights, and train them to use tools for aggregating, exploring, and analyzing.

Challenge consultant and contractor relationships to redirect tactical spend into building a foundation for institutional, industrialized analytics capabilities. Likewise, partner with local universities to help shape their curricula, seeding long-term workforce development channels while potentially also immediately tapping into eager would-be experts through externships or one-off data “hackathons.”

Developing creative approaches to sourcing can help with short-term skill shortages. For example, both established and start-up vendors may be willing to provide talent as part of a partnership arrangement. This may include sharing proofs of concept, supporting business case and planning initiatives, or even dedicating talent to help jump-start an initiative. Also, look at crowdsourcing platforms to tap into experts or budding apprentices willing to dig into real problems on your roadmap. This approach can not only yield fresh perspectives and, potentially, breakthrough thinking, but can also help establish a reliable talent pipeline. Data science platform Kaggle alone has more than 400,000 data scientists in its network.9

The final piece of the puzzle involves deploying talent and new organizational models. Leading companies are adopting Six Sigma and agile principles to guide their analytics ambitions. Their goal is to identify, vet, and experiment rapidly with opportunities in a repeatable but nimble process designed to minimize work in progress and foster continuous improvement. This tactic helps create the organizational muscle memory needed to sustain industrialized analytics that can scale and evolve while driving predictable, repeatable results.

Lessons from the front lines

Anthem’s Rx for the customer experience

With an enrollment of 38.6 million members and growing, Anthem Inc. is one of the United States’ leading health insurers and the largest for-profit managed health care company in the Blue Cross and Blue Shield association.10

The company is currently exploring new ways of using analytics to transform how it interacts with these members and with health care providers across the country. “We want to leverage new sources of data to improve the customer experience and create better relationships with providers than we have in the past,” says Patrick McIntyre, Anthem’s senior vice president of health care analytics. “Our goal is to drive to a new business model that produces meaningful results.”

From the project’s earliest planning stages, Anthem understood that it must approach big data in the right way if it hoped to generate useful insights—after all, incomplete analysis adds no value. Therefore, beginning in 2013, the company worked methodically to cleanse its data, a task that continued for 18 months. During this time, project leaders also worked to develop an in-depth understanding of the business needs that would drive the transformation initiative, and to identify the technologies that could deliver the kind of insights the company needed and wanted.

Those 18 months were time well spent. In 2015, Anthem began piloting several differentiating analytics capabilities. Applied to medical, claim, customer service, and other master data, these tools are already delivering insights into members’ behaviors and attitudes. For example, predictive analytics capabilities drive consumer-focused analytics. Anthem is working to understand its members not as renewals and claims, but as individuals seeking personalized health care experiences. To this end, the company has built a number of insight markers to bring together claim and case-related information with other descriptors of behavior and attitudes, including signals from social and “quantified self” personal care management sources. This information helps Anthem support individualized wellness programs and develop more meaningful relationships with health care providers.

Meanwhile, Anthem is piloting a bidirectional exchange to guide consumers through the company’s call centers to the appropriate level of service. Data from the exchange and other call center functions have been used to build out predictive models around member dissatisfaction. Running in near-real time, these models, working in tandem with text mining and natural language processing applications, will make it possible for the company to identify members with high levels of dissatisfaction, and proactively reach out to those members to resolve their issues.

Another pilot project uses analytics to identify members with multiple health care providers who have contacted Anthem’s call center several times. These calls trigger an automated process in which medical data from providers is aggregated and made available to call center reps, who can use this comprehensive information to answer these members’ questions.

To maximize its analytics investments and to leverage big data more effectively, Anthem recently recruited talent from outside the health care industry, including data scientists with a background in retail. These analysts are helping the company think about consumers in different ways. “Having them ask the right questions about how consumers make health care choices—choices made traditionally by employers—has helped tremendously,” says McIntyre. “We are now creating a pattern-recognition and micro-segmentation model for use on our website that will help us understand the decision-making process that like-minded consumers use to make choices and decisions.”

McIntyre says Anthem’s analytics initiatives will continue to gain momentum in the coming months, and will likely hit full stride some time in 2017.11

Singing off the same sheet of data

In 2014, Eaton Corp., a global provider of power management solutions, began laying the groundwork for an industrial-scale initiative to extend analytics capabilities across the enterprise and reimagine the way the company utilizes transaction, sales, and customer data.

As Tom Black, Eaton’s vice president of enterprise information management and business intelligence, surveyed the potential risks and rewards of this undertaking, he realized the key to success lay in building and maintaining a master data set that could serve as a single source of truth for analytics tools enterprise-wide. “Without a solid master data plan, we would have ended up with a lot of disjointed hypotheses and conjectures,” he says. “In order to make analytics really sing, we needed an enterprise view of master data.”

Yet creating a master data set in a company this size—Eaton employs 97,000 workers and sells its products in 175 countries12—would likely be no small task. “Trying to aggregate 20 years of transaction data across hundreds of ERP systems is not easy,” Black says. “But once we got our hands around it, we realized we had something powerful.”

Powerful indeed. Eaton’s IT team has consolidated many data silos and access portals into centralized data hubs that provide access to current and historic customer and product data, forecasting, and deep-dive reporting and analysis. The hubs also maintain links to information that may be useful, but is not feasible or necessary to centrally aggregate, store, and maintain. This framework supports large strategic and operational analytics initiatives while at the same time powering smaller, more innovative efforts. For example, a major industrial organization could be an original equipment manufacturer providing parts to Eaton’s product development group while at the same time purchasing Eaton products for use in its own operations. Advanced analysis of master data can reconcile these views, which might help sales reps identify unexplored opportunities.
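The reconciliation idea in that example, where one organization appears as a supplier in one system and a customer in another, can be sketched in a few lines. This is only an illustration under stated assumptions: the system names, company names, and the crude name-normalization rule are hypothetical, and the source does not describe how Eaton actually matches records.

```python
import re
from collections import defaultdict

# Legal suffixes ignored when matching names; an assumed, incomplete list.
LEGAL_SUFFIXES = {"inc", "corp", "co", "ltd", "llc"}


def normalize_name(name):
    """Build a crude matching key: lowercase, strip punctuation and legal suffixes."""
    cleaned = re.sub(r"[^a-z0-9 ]", "", name.lower())
    tokens = [t for t in cleaned.split() if t not in LEGAL_SUFFIXES]
    return " ".join(tokens)


def reconcile(records):
    """Group (system, name, role) records under one master key per organization."""
    master = defaultdict(lambda: {"names": set(), "roles": set(), "systems": set()})
    for system, name, role in records:
        entry = master[normalize_name(name)]
        entry["names"].add(name)
        entry["roles"].add(role)
        entry["systems"].add(system)
    return dict(master)


# Hypothetical records from two separate ERP systems.
records = [
    ("erp_procurement", "Acme Industrial, Inc.", "supplier"),
    ("erp_sales", "ACME Industrial", "customer"),
    ("erp_sales", "Beta Tools Ltd", "customer"),
]

master = reconcile(records)

# Organizations that are both supplier and customer: candidate sales opportunities.
dual_role = sorted(k for k, v in master.items() if {"supplier", "customer"} <= v["roles"])
```

Production master data management typically relies on governed identifiers and fuzzy matching with human review rather than simple string normalization; the sketch only shows why a shared key makes the two views visible side by side.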

To maintain its mission-critical master data set and to improve data quality, Eaton has established a top-down data governance function in which business line owners, data leads, and data stewards oversee the vetting and validation of data. They also collaborate to facilitate data security and to provide needed tool sets (for example, visualization and QlikView). At the user level, technology solution engagement managers help individuals and teams get more out of Eaton’s analytics capabilities and coordinate their analytics initiatives with others across the company.

Eaton recognizes the need to balance centralized analytics efforts with empowering the organization. In this way, the company harnesses industrialized analytics not only for efficiencies and new ways of working, but to also help fuel new products, services, and offerings.13

Pumping analytics iron

Describing the variation in analytics capabilities across the industries in which he has worked, Adam Braff, Zurich Insurance Group’s global head of enterprise data, analytics, and architecture, invokes the image of the humble fiddler crab, a semi-terrestrial marine creature whose male version has one outsized claw. “Every industry has one mature, heavily muscled limb that informs the dominant way the organization uses analytics. In insurance, analytics has traditionally been about pricing, underwriting, and their underlying actuarial models,” he says.

Because his industry has this mature analytics arm, Braff sees opportunities to look to underdeveloped analytics spaces that include indirect channels, customer life-cycle valuation, and operations excellence, among other areas. In the latter, “The challenge is identifying outliers and taking action,” says Braff. “Once you put data together and prioritize use cases, that’s where you can find success.”

Zurich is on a journey to identify analytics opportunities and then put the data resources, systems, and skill sets in place to pursue them. The first step is a “listening tour”—engaging leaders from the business side to understand the challenges they face and to explore ways that analytics might remedy those challenges. A dedicated “offense” team will then translate those needs into rapid data and analytics sprints, starting small, proving the solutions’ value, and scaling soon after.

The “defense” side, meanwhile, focuses on foundational elements such as data quality. Today, Zurich has decentralized analytics organizations and data stores. By performing a comprehensive data maturity assessment, the company is developing a better understanding of the quality and potential usefulness of its data assets. Meanwhile, Zurich is assessing how effectively different groups use data to generate value. It will also increase data quality measurement and accountability across all data-intake channels so data used downstream is of consistently high quality.

A third major step involves developing the organization and talent needed to industrialize analytics. In this environment, IT workers will need three things: sufficient business sense to ask the right questions; a background in analytical techniques and statistical modeling to guide approaches and vet the validity of conclusions; and the technical knowledge to build solutions themselves, not simply dictate requirements for others to build. “Working with the business side to develop these solutions and taking their feedback is a great way to learn how to apply analytics to business problems,” says Braff. “That iterative process can really help people build their skills—and the company’s analytics muscles.”14

My take

Justin Kershaw Corporate vice president and CIO, Cargill, Inc.

For Cargill Inc., a leading global food processing and agricultural commodity vendor and the largest privately held company in the United States, industrializing analytics is not an exploratory project in big data usage. Rather, it is a broadly scoped, strategically grounded effort to continue transforming the way we collect, manage, and analyze data—and to use the insights we glean from this analysis to improve our business outcomes. At Cargill, we are striving to have a more numerically curious culture, to utilize analytics in more and more of our decisions, and to create different and better outcomes for customers.

Cargill’s commodity trading and risk management analytics capabilities have always been strong, but that strength had not spread throughout all company operations. As we continue to digitize more of our operations, the opportunity to achieve a higher level of analytical capability operationally is front and center. This is not only in our production but also in all our supporting functions. The IT team has been transformed over the last two years, and much of this change was made possible by outcomes and targets derived from analytics. Our performance is now driven more numerically. Cargill is creating a more fully integrated way of operating by transitioning from a “holding entity” structure—comprising 68 business units operating on individual platforms—to a new organizational structure (and mind-set) in which five business divisions operate in integrated groups. This is giving rise to different ways of operating and new strategies for driving productivity and performance—more numerically, and with more capability from analytics.

As CIO, one of my jobs is to put the tools and processes in place to help both the company and IT better understand how they perform, or in some cases could perform, with integrated systems. To generate the performance insights and outcomes we want, we took a fresh approach to data management and analysis, starting with IT.

Cargill’s IT operation can be described as a billion-dollar business within a $135 billion operation. We run IT like a business, tracking income, expenses, and return on assets. We strive to set an example for the entire organization of how this business group uses analytics to generate critical insights and improve performance. To this end, we boosted our analytics capabilities, created scorecards and key performance indicators, and deployed dashboards to put numbers to how we are delivering.

In using analytics to build a more numerically driven culture, it is important to remember that bad data yields bad insights. Improving data quality where the work actually happens requires that leaders drive a comprehensive cultural shift in the way employees think about and use data and how they take responsibility for data. Companies often address the challenge of data quality from the other end by spending tons of money to clean messy data after it gets in their systems. What if you didn’t have to do that? What if you could transform your own data culture into one that rewards quality, tidiness, and disciplined data management approaches? That’s where rules help; they can support an outcome that gets rewarded. You can hire data scientists to help you better understand your data, but lightening their load by cleaning the data before they begin working with it can make a big difference. Then you can double down on your efforts to create a culture that prizes individuals who are mathematically curious and more numerically driven, who frame and satisfy their questions with data rather than anecdotes, and who care about accuracy at the root, making sure they know where the data enters the systems and where the work is done.

When I talk to business executives about the acquisitions or investments they are considering, I often share this advice: Before moving forward, spend a little money to train the individuals operating your systems and share steps they can take to improve data quality. Reward data excellence at the root. In the end, the investment pays off: Better data quality leads to better planning, which, in turn, leads to better execution.

Cyber implications

As companies create the governance, data usage, and management models needed to industrialize their analytics capabilities, they should factor cyber security and data privacy considerations into every aspect of planning and execution. Keeping these issues top of mind every step of the way can help heighten a company’s awareness of potential risks to the business, technology infrastructure, and customers.

It may also help an organization remain focused on the entire threat picture, which includes both external and internal threats. Effective cyber vigilance is not just about building a moat to keep out the bad guys; in some cases there are bad guys already inside the moat who are acting deliberately to compromise security and avoid detection. Then there are legions of otherwise innocent players who carelessly click on an unidentified link and end up infecting their computers and your company’s entire system. Cyber analytics plays an important role on these and other fronts. Analytics can help organizations establish baselines within networks for “normal,” which then makes it possible to identify behavior that is anomalous.
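As a minimal sketch of what establishing a baseline for “normal” can mean in practice, the example below flags values that sit far from the historical mean. The login counts, the function names, and the three-standard-deviation threshold are assumptions chosen for illustration; real cyber analytics would draw on far richer signals and models.

```python
from statistics import mean, stdev


def build_baseline(history):
    """Summarize past observations as (mean, sample standard deviation)."""
    return mean(history), stdev(history)


def is_anomalous(value, baseline, threshold=3.0):
    """Flag a value whose distance from the mean exceeds `threshold` deviations.

    The threshold of 3.0 is an assumed default, not a recommended setting.
    """
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) / sigma > threshold


# Hypothetical hourly login counts for one account during normal operation.
logins_per_hour = [20, 22, 19, 21, 23, 20, 18, 22]
baseline = build_baseline(logins_per_hour)

typical_hour = is_anomalous(21, baseline)   # within the usual range
suspicious_hour = is_anomalous(95, baseline)  # far outside the usual range
```

The design choice worth noting is that the baseline is learned from the network's own history rather than fixed in advance, which is what allows the same check to apply to both external intrusions and unusual insider behavior.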

Until recently, companies conducted analysis on harmonized platforms of data. These existed in physical data repositories that would be built and maintained over time. In the new model, data no longer exists in these repositories, but in massive data lakes that offer virtualized views of both internal and external information.

The data lake model empowers organizations and their data scientists to conduct more in-depth analysis using considerably larger data sets. However, it may also give rise to several new cyber and privacy considerations:

- In a virtual environment, not all sources are created equal. Companies transitioning to a data lake model should determine how best to stratify trust, given that some sources live within the company’s firewall and can be considered safe, while external sources may not be. As part of this process, companies should also develop different approaches for handling data that have been willfully manipulated versus those which may just be generally unclean.

- Derived data come with unique risk, security, and privacy issues. For example, suppose that by mining customer transaction data generated over the prior three months, an online retailer determines that one of its customers has a medical condition often treated with an array of products it sells. The company then sends this customer coupons for the products it believes she might need. In the best-case scenario, the customer uses the coupons and appreciates the company’s targeted offerings. In the worst case, the company may have violated federal privacy regulations for medical data.

It is impossible to know what information analytics programs will ultimately infer. As such, companies need strong data governance policies to help manage negative risk to their brand and to their customers. These policies could set strict limits on the life cycle of data to help prevent inaccuracies in inferences gleaned from out-of-date information. They might also mandate analyzing derived data for potential risks and flagging any suspicious data to be reviewed. Just as organizations may need an industrialized analytics solution that is scalable and repeatable, they may also need an analytics program operating on an enterprise level that takes into account new approaches to governance, multitiered data usage and management models, and innovative delivery methods.
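The two policy ideas in this paragraph, limiting the life cycle of data and flagging risky derived data for review, could be expressed as simple rules. The sketch below is hypothetical throughout: the 90-day limit, the list of sensitive categories, and the record layout are assumptions for illustration, not regulatory guidance.

```python
from datetime import date, timedelta

# Assumed policy values; a real policy would come from governance and legal teams.
MAX_AGE = timedelta(days=90)
SENSITIVE_CATEGORIES = {"health", "financial"}


def is_within_life_cycle(collected_on, today, max_age=MAX_AGE):
    """Return True if the record is still young enough to feed inferences."""
    return today - collected_on <= max_age


def usable_records(records, today):
    """Keep only records that have not exceeded the life-cycle limit."""
    return [r for r in records if is_within_life_cycle(r["collected_on"], today)]


def review_status(derived_category):
    """Route derived data in a sensitive category to human review before use."""
    return "needs_review" if derived_category in SENSITIVE_CATEGORIES else "cleared"


# Hypothetical transaction records and a reference date.
today = date(2016, 2, 25)
records = [
    {"id": 1, "collected_on": date(2016, 1, 10)},
    {"id": 2, "collected_on": date(2015, 9, 1)},
]

fresh = usable_records(records, today)
health_inference = review_status("health")
apparel_inference = review_status("apparel")
```

Applying the age check before any inference runs keeps stale data out of the models, while the review step acts on what the models produce, so the two rules cover both ends of the analysis.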

Where do you start?

The expansive reach of data and analytics’ potential makes them a challenging domain. Ghosts abound in the machine—in legacy data and ops, as well as in some stakeholders’ dearly held convictions about the relative importance of data across the business. Perhaps even more challenging, the C-suite and other decision makers may lack confidence in IT’s ability to deliver crunchy, actionable business insights. But although the deck may seem stacked against success, the very act of attempting to industrialize analytics can help frame these issues, building support for streamlining delivery and tapping analytics’ full potential. To get the ball rolling, consider taking the following steps:

- Air grievances: Though it may be uncomfortable, it is important to get the “state of the state” out in the open before launching any transformation effort. Solicit constructive feedback from all quarters on how well existing analytics and data needs are being met and how these needs may change as business evolves. Address issues and perceptions, including those that are fact-based and those driven by misunderstandings and bias. Learn where inaccurate metrics or expectations are creating challenges and where redundant or outdated activities no longer add value. Likewise, examine instances in which overly simplistic models, overconfident analysts, lack of clarity on outcomes, or inaccurate assumptions have led to incorrect results. The emphasis should be on lighting candles versus cursing the darkness. Work to build the analytics agenda while setting a vivid baseline against which progress can be measured.

- Communicate clearly and purposefully: Don’t let analytics efforts get bogged down in a swamp of jargon, complicated tables, and overly indexed statistics. More often than not, the results with the greatest impact are those that are communicated clearly and concisely.

- Revitalize the data core: Many organizations have made long-standing investments in multiple areas across the industrialized analytics charter. Using those areas to industrialize analytics can accelerate the journey, but such an approach can also be risky. Legacy design choices can color your vision of the road ahead. Likewise, the technical debt and poor organizational constructs that accompany legacy systems can stifle progress and undermine project value.

- Educate and lead: The bigger and older the organization, the more difficult it is to drive a cultural change for analytic transformation. Don’t underestimate the need for education, communication, learning, and reinforcement, both within and beyond the direct analytics team. Moreover, becoming an insights-driven organization requires some level of buy-in across departments and hierarchies. To this end, senior leadership support is crucial, but no more so than clearly articulating the journey. Providing concrete details of what the transformation means at an individual level, along with examples of how early adoption can drive tangible results, can also help overcome wariness among staff. Finally, though it may not be necessary to have one executive explicitly “own” the initiative, some organizations have created “chief data officer” and “chief analytics officer” positions as a means to galvanize—and hold themselves accountable for—their analytics ambitions.

- Tailor your efforts: The results of industrialized analytics initiatives will vary by organization. With this in mind, project leaders should tailor their aspirations and roadmaps to reflect the specific DNA of their own organization—taking into account executive personalities and passions as much as operational and organizational dynamics.

- Rethink your talent strategies: Respect the scarcity of talent in the data science and analytics fields, but don’t let it be an excuse for lack of progress. Explore vendors, start-ups, service providers, academia, and the crowd to bolster a nontraditional sourcing strategy—especially as you formalize your analytics vision and overarching strategies.

- Blaze new trails: Optimizing current spend levels around the data and information space should definitely be a part of planning. But industrialized analytics should be about more than just cost containment and efficiency gains. Discovering new ways to harness data and activate insights could lead to breakthroughs across domains and functions.

Bottom line

“Data is the only resource we’re getting more of every day,” Christina Ho, deputy assistant secretary at the US Department of the Treasury, said recently.15 Indeed, this resource is growing in both volume and value. Properly managed, it can drive competitive advantage, separating organizations that treat analytics as a collection of good intentions from those that industrialize it by committing to disciplined, deliberate platforms, governance, and delivery models.

Deloitte Consulting LLP’s Technology Consulting practice is dedicated to helping our clients build tomorrow by solving today’s complex business problems involving strategy, procurement, design, delivery, and assurance of technology solutions. Our service areas include analytics and information management, delivery, cyber risk services, and technical strategy and architecture, as well as the spectrum of digital strategy, design, and development services offered by Deloitte Digital. Learn more about our Technology Consulting practice on www.deloitte.com.
