A milestone for AI regulation: European Parliament approves new legal framework for artificial intelligence

Artificial intelligence (AI) is on everyone's lips, not least due to the rise of generative AI tools. Its rapid development and increasing influence on all areas of life are undeniable. AI applications can be found in companies across almost all sectors. But while AI is constantly opening up new opportunities, it also poses the challenge of understanding and managing the risks associated with its use. The speed at which AI systems are being developed has outpaced legislation and created a regulatory gap that needs to be closed urgently. With the adoption of the long-debated proposal for an "Artificial Intelligence Act", the European Parliament paved the way on 14 June 2023 for the first harmonised regulatory framework for AI systems within the European Union.

What does the new AI Act regulate?

Scope of application and terminological standardisation

Finding a uniform definition of "artificial intelligence" was at the centre of the delicate discussion. Ultimately, at the beginning of 2023, the representatives of the political groups in the European Parliament were able to agree on a uniform definition and, at the same time, define the material scope of the AI Act. Art. 3 No. 1 of the AI Act defines an "artificial intelligence system (AI system)" as:

"machine-based system that is designed to operate with varying levels of autonomy and that can, for explicit or implicit objectives, generate outputs such as predictions, recommendations, or decisions that influence physical or virtual environments".

Compared with the first draft of the AI Act of 21 April 2021, the current definition in the amendment of 16 May 2023 is more restrictive, but it essentially corresponds to the definition used by the Organisation for Economic Co-operation and Development (OECD).

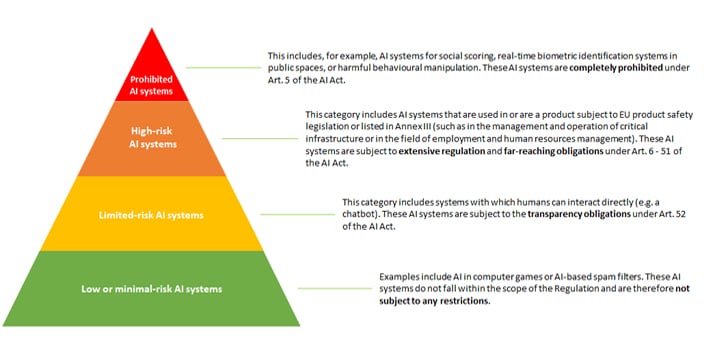

Risk-based regulation

The AI Act follows a risk-based approach in the form of a four-tier model. The classification is based on the risks posed by the AI system to the user and possible third parties. The higher the risks, the higher the regulatory requirements for the AI system.

The four risk classes are: unacceptable risk (prohibited AI systems), high risk, limited risk, and minimal risk.

Generative AI

Due to the great success of generative AI systems such as Midjourney, ChatGPT or Bard, an attempt is now being made to adequately cover generative AI systems in the regulation as well. In future, this type of AI system will be referred to as a "foundation model" (cf. Art. 3 No. 1c AI Act). The classification of an AI system as a "foundation model" will in itself result in certain restrictions, regardless of its classification by risk. Providers of such AI systems in particular will face considerable obligations, such as transparency and disclosure obligations (cf. in particular Art. 28b of the AI Act).

When does the AI Act come into force?

The AI Act was proposed by the European Commission and is to be adopted through the ordinary legislative procedure (Art. 294 TFEU). Now that both the Council of Ministers (the Council of the EU) and the European Parliament have adopted their positions, the final negotiations between the Commission, the Council and the Parliament will follow (the so-called "trilogue procedure").

Provided an agreement can be reached in the trilogue procedure later this year, the proposal could formally enter into force in mid-2024. Since the AI Act is a regulation, it would be directly applicable according to Art. 288 para. 2 sentence 2 TFEU. However, Art. 85 para. 2 AI Act provides for a transitional period of 24 months, so on this timeline the regulation would not apply until around mid-2026. Overall, there is still some time for all those affected to prepare for the impact the AI Act will have on them.

What can companies already do today?

Companies should start preparing for the impact of the AI Act today. This applies in particular when AI systems are to be used in essential business operations or when introducing them involves considerable investment costs. If it later transpires, for whatever reason, that an AI system is categorised as a high-risk or even prohibited AI system, its use might be permitted only to a limited extent, or not at all, from the date of application of the AI Act. Furthermore, additional costs for fulfilling the requirements of the AI Act could arise.

Even without the AI Act, companies should consider the legal implications of using an AI system. These include in particular:

Industrial property rights: Many AI systems require large amounts of training data, which are typically obtained from publicly accessible sources. It is not always guaranteed that this use of the training data is permissible. In addition, companies should bear in mind that, at least in Germany, no intellectual property rights can be obtained for the results produced by generative AI systems. Whether this matters depends on the intended use of those results.

Confidentiality: Insofar as AI systems require the user to provide information (e.g. by entering prompts), the protection of that data must be ensured by technical and organisational measures. Otherwise, the use of the AI systems must be prohibited (e.g. by an instruction to employees), at least to the extent needed to ensure that no confidential information or business secrets are processed by the AI.

Data protection: If personal data are processed by AI systems, the requirements of the GDPR and local member state laws must be complied with. This involves determining the specific roles of the parties involved under the GDPR and complying with the associated obligations (such as ensuring appropriate legal bases for all processing operations and agreeing on and implementing appropriate technical and organisational measures). It also includes carrying out data protection impact assessments or, when personal data are transferred to a third country, a transfer impact assessment. In addition, consideration should be given at an early stage to how the rights of the data subjects will be respected. Since the AI Act only contains isolated rules for data protection (cf. e.g. Art. 10 AI Act), the interplay of the GDPR and the AI Act will play an important role in the future, for example in the case of a "double commitment".

Terms of use: Before using AI systems, the underlying terms of use should be carefully checked, for example with regard to the details of the performance, liability, data protection documentation or confidentiality. This aspect is often forgotten, especially in the case of publicly accessible AI systems, where the company does not (yet) have to conclude a contract with the AI provider. As a result, companies use systems whose terms contain legally risky provisions and, in many cases, contradict the company's internal guidelines.

For a more in-depth legal analysis, Deloitte Legal will provide a new publication shortly.

Our Deloitte Legal experts are available to answer your questions. Feel free to contact us!

Published: June 2023

Press release of the European Parliament on the AI Act: MEPs ready to negotiate first-ever rules for safe and transparent AI
