
Telling a story with data: Communicating effectively with analytics

31 January 2013

Despite the respect it commands in concept, analytics can be difficult to explain and understand. As a result, analytical capabilities may not get used effectively as decision makers fall back on their intuition or experience. Yet there are approaches that can help quantitative analysts tell a story with data and tactics that can help decision makers develop beneficial relationships with analysts.

Analytics and data are transforming decision-making processes in leading organizations around the world. Yet analytics can be hard to explain and understand, and it is widely held that analytical people don’t communicate well with decision makers, and vice versa. As a result, analytical capabilities may not be used effectively, and decision makers may fall back on their intuition or experience. There are, however, a variety of approaches that can help quantitative analysts tell a story with data, and tactics that can help decision makers develop trusting and beneficial relationships with analysts.

There is much enthusiasm in organizations today about analytics and “big data.” However, unless decision makers understand analytics and its implications, they may not change their behavior and adopt analytical approaches when making decisions. Quantitative analysts who care whether their work is implemented, that is, whether it changes decisions and influences actions, care a lot about this issue and devote considerable time and effort to it. Analysts who don’t care about such things believe that the results “speak for themselves” and don’t worry much about communication. As a rule, such analysts are not effective, today or at any point in history.

Historical examples of communicating results, good and bad

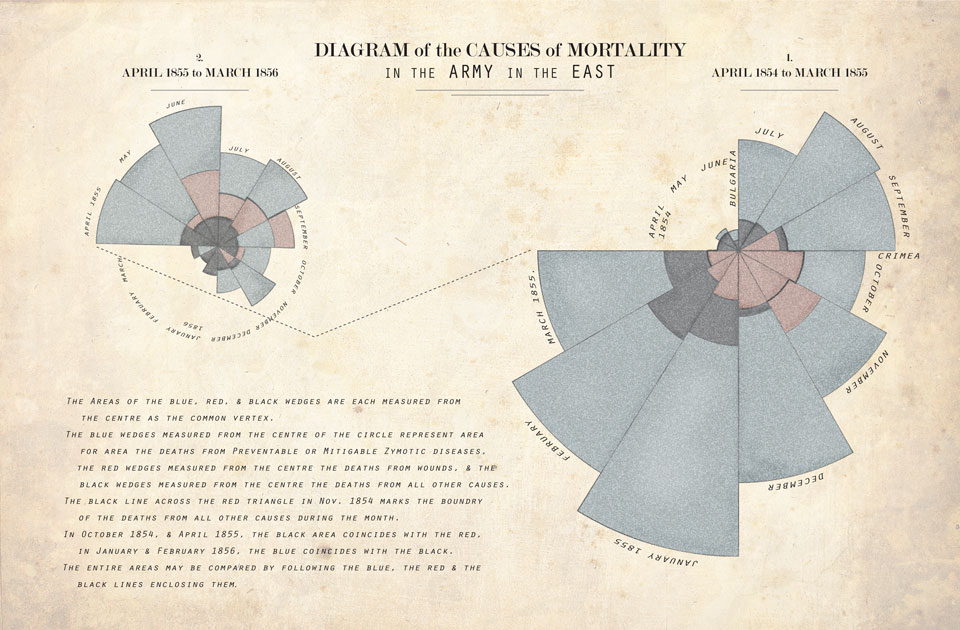

The effective presentation of quantitative results is a technique with a long history.1 Consider, for example, the work of Florence Nightingale. Nightingale is widely known as the founder of the profession of nursing and a reformer of hospital sanitation methods, but she was also a very early user of quantitative methods. When Nightingale and 38 volunteer nurses were sent in October 1854 to a British military hospital in Turkey during the Crimean War, she found terrible conditions in the makeshift hospital. Most of the deaths there were attributable to epidemic, endemic, and contagious diseases rather than to wounds inflicted in battle. In February 1855, the mortality rate of cases treated in the hospital was 43 percent.2 In addition to improving basic sanitation at the hospital, Nightingale believed that statistics could be used to solve the problem. She began keeping detailed daily records of admissions, wounds, diseases, treatment, and deaths.

Nightingale’s greatest innovation, however, was in the presentation of the results. Although she recognized the importance of proof based on numbers, she also understood that numeric tables were not universally interesting (even when they were much less common than they are today!) and that the average reader would avoid reading them and thereby miss the evidence. Because she wanted readers to receive her statistical message, she developed diagrams to dramatize the needless deaths caused by unsanitary conditions and the need for reform. Such diagrams are taken for granted now, but at the time they were a relatively novel way of presenting data.

Her innovative diagrams were a variant of the pie chart, now usually called polar area diagrams, in which each month is represented by a wedge. Nightingale printed them in several colors to show clearly how the mortality from each cause changed from month to month. The evidence of the numbers and the diagrams was clear and indisputable.
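In a polar area diagram, every wedge spans the same angle, and it is the wedge's area, rather than its radius, that is proportional to the value shown. Because a wedge with angle θ and radius r has area θr²/2, the radius has to grow with the square root of the count. The sketch below illustrates only that geometry; the `wedge_radii` helper is hypothetical and the monthly counts are made up, not Nightingale's data.

```python
import math


def wedge_radii(counts):
    """Radius of each wedge in a polar area ("rose") diagram.

    Every wedge spans the same angle theta = 2*pi / len(counts). A wedge's
    area is 0.5 * theta * r**2, so to make the AREA proportional to the
    count we solve for r = sqrt(2 * count / theta).
    """
    theta = 2 * math.pi / len(counts)
    return [math.sqrt(2 * c / theta) for c in counts]


# Hypothetical monthly death counts, for illustration only.
monthly_deaths = [100, 400, 1600]
radii = wedge_radii(monthly_deaths)

# Four times the deaths gives only twice the radius, because area grows with r**2.
print([round(r / radii[0], 2) for r in radii])  # [1.0, 2.0, 4.0]
```

Scaling the radius directly with the count would exaggerate the worst months, since their wedges would cover a disproportionately large area.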

Nightingale used these creative diagrams to present reports on the nature and magnitude of the conditions of medical care in military hospitals to members of Parliament, who would have been unlikely to read or understand the numeric tables alone. People were shocked to find that wounded soldiers were dying, rather than being cured, in the hospital. Eventually, death rates were sharply reduced, as shown in the data Nightingale systematically collected. When she returned to England in June of 1856 after the Crimean War ended, she found herself a celebrity, praised as a heroine. She was made a Fellow of the Royal Statistical Society in 1859 (the first woman to become a member) and an honorary member of the American Statistical Association in 1874.3

For a less impressive example of communicating results, and a reminder of how important the topic is, consider the work of Gregor Mendel.4 Mendel, the father of the concept of genetic inheritance, said a few months before his death in 1884 that “My scientific studies have afforded me great gratification; and I am convinced that it will not be long before the whole world acknowledges the results of my work.” Had he been better at communicating his results, his ideas might have been adopted much more quickly, perhaps even while he was still alive.

Mendel, a monk, studied the inheritance of certain traits in pea plants. Between 1856 and 1863, Mendel crossed and cataloged tens of thousands of plants in order to prove the laws of inheritance. He was able to demonstrate that the inheritance of genetic information from generation to generation follows particular laws, which were later named after him. The significance of Mendel’s work was not recognized until the turn of the 20th century; the independent rediscovery of these laws formed the foundation of the modern science of genetics.

If only Mendel’s communication of results had been as effective as his experiments. He published his results in an obscure Moravian scientific journal. Although the journal was distributed to more than 130 scientific institutions in Europe and overseas, his paper had little impact at the time and was cited only about three times over the next 35 years.5 The complex and detailed work Mendel produced was not understood even by influential people in his own field. Had Mendel been a professional scientist rather than a monk, he might have been able to promote his work more widely and perhaps publish it abroad. Mendel did make some attempt to contact scientists overseas by sending Darwin and others reprints of his work (the whereabouts of only a handful are now known). Darwin, it is said, didn’t even cut the pages to read Mendel’s work.

Mendel died not knowing how much his findings would change history. Although Mendel’s work was brilliant and unprecedented at the time, it took more than 30 years for the rest of the scientific community to catch up to it. If you don’t want your analytical results to be ignored for that long or longer, you should probably devote considerable attention to the way they are communicated.

Two recent examples

More recently, there have been some notable stories of organizations whose success with analytics rests not just on the quality of the analysis but also on the effort put into communication and adoption. In business, one need only look at the FICO credit score for an example of an effectively communicated and widely adopted analytical result. The FICO score, a three-digit number between 300 and 850 offered by the company of the same name, is a snapshot of a person’s financial standing at a particular point in time.6 When you apply for credit, whether for a credit card, a car loan, or a mortgage, lenders want to know what risk they’d take by lending money to you. Many lenders use FICO credit scores to determine your credit risk. Your FICO scores affect both how much lenders will offer you at any given time and on what terms (interest rate, etc.). The score is a successful example of converting analytics into action, since just about every lender in the United States, and a growing number elsewhere, makes use of it.

FICO scores have dramatically improved the efficiency of US credit markets by improving risk assessment. They give the lender a better picture of whether a loan will be repaid, based entirely on a consumer’s credit history. A growing number of companies that have nothing to do with the business of offering credit (such as automobile insurance companies, cellular phone companies, landlords, and affiliates of financial service firms) now use FICO scores to decide whether to do business with a consumer and to determine rate tiers for different grades of consumers.
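The article does not describe how FICO computes its score, and the actual model is proprietary. For readers curious how a predicted probability can become a bounded three-digit number and then a rate tier, here is a minimal sketch of a generic textbook "points to double the odds" scorecard scaling, clamped to the 300 to 850 range mentioned above. The anchor (600 points at 30:1 odds), the 20-point doubling step, the tier cutoffs, and the function names are all hypothetical.

```python
import math

# Hypothetical scaling parameters, NOT FICO's: a score of 600 corresponds to
# 30:1 odds of repayment, and every 20 points doubles those odds.
BASE_SCORE = 600
BASE_ODDS = 30.0
PDO = 20  # "points to double the odds"

FACTOR = PDO / math.log(2)
OFFSET = BASE_SCORE - FACTOR * math.log(BASE_ODDS)


def score_from_probability(p_repay):
    """Map a model's predicted probability of repayment to a score in 300..850."""
    if not 0.0 < p_repay < 1.0:
        raise ValueError("p_repay must be strictly between 0 and 1")
    odds = p_repay / (1.0 - p_repay)
    raw = OFFSET + FACTOR * math.log(odds)
    return int(round(min(850.0, max(300.0, raw))))


def rate_tier(score):
    """Hypothetical rate tiers a lender might attach to score bands."""
    if score >= 740:
        return "A"
    if score >= 670:
        return "B"
    if score >= 580:
        return "C"
    return "D"


score = score_from_probability(0.9)  # 9:1 odds of repayment
print(score, rate_tier(score))  # 565 D
```

The log-odds scale is one common design choice because equal score gaps then mean equal ratios of risk, which is easier to explain to a lender than raw probabilities.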

The FICO score grew out of the work of engineer Bill Fair and mathematician Earl Isaac, who founded FICO (then known as Fair, Isaac) in 1956.7 Each made an initial investment of $400, and they began working on a credit scoring model. In 1958, they wrote letters to the 50 biggest American credit grantors asking for an appointment to explain the idea of credit scoring. They got only one reply. Undeterred, they worked to demonstrate the idea and the model to potential customers, including banks, credit card providers and processors, insurance companies, retailers, and credit bureaus. They acquired consulting capabilities and software that could embed FICO scores into decision workflows. By the turn of the twenty-first century, they had expanded around the globe and had made scores visible to consumers, along with customized advice on how to improve them.

Not just commercial but even academic analytical research can have a major impact if communicated well. Some readers may have heard, for example, of an analytical model that predicts the likelihood that a married couple will stay together. Professor John Gottman, a psychologist at the University of Washington, collaborated on the research with Professor James Murray, an applied mathematician at Oxford University. Gottman supplied the hypotheses, the data (collected through videotaped and coded observations of many couples), and a long-term interest in what makes marriages successful. Murray supplied the expertise in nonlinear models. The resulting model, which assigns scores to a couple based on observed behaviors, is 94 percent accurate8 at predicting the future success of a marriage.
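Models of this kind are typically built from coupled difference equations: roughly, each partner's coded score at the next conversational turn depends on an uninfluenced baseline, that partner's own previous score (a kind of emotional inertia), and an "influence function" of the other partner's score. The sketch below only imitates that general structure, using a hypothetical two-slope influence function and made-up parameters (`a`, `b`, `r1`, `r2`, `pos_slope`, `neg_slope`); it is not the authors' fitted model. It also shows the kind of "what if" experiment such a model makes possible: the same couple simulated with and without reduced reactivity to negativity.

```python
def influence(x, pos_slope, neg_slope):
    """Hypothetical two-slope influence function: how strongly one partner's
    score moves the other's next score, with a separate slope for negativity."""
    return pos_slope * x if x >= 0 else neg_slope * x


def simulate(w, h, steps, a=-0.2, b=-0.1, r1=0.4, r2=0.5,
             pos_slope=0.3, neg_slope=0.5):
    """Alternate turns for `steps` rounds and return the final (w, h) scores.

    Partner W's next score depends on W's baseline (a), W's previous score
    weighted by inertia (r1), and partner H's last score; H's next score then
    depends on H's baseline (b), H's inertia (r2), and W's new score.
    All default values are made up for illustration.
    """
    for _ in range(steps):
        w = a + r1 * w + influence(h, pos_slope, neg_slope)
        h = b + r2 * h + influence(w, pos_slope, neg_slope)
    return w, h


# "What if" experiment: the same couple, with and without an intervention
# that makes each partner react less strongly to the other's negativity.
baseline = simulate(-1.0, -1.0, steps=200)
after_intervention = simulate(-1.0, -1.0, steps=200, neg_slope=0.2)
print(baseline)            # settles near (-3.0, -3.2)
print(after_intervention)  # settles near (-0.46, -0.38)
```

In this toy setup, weakening only the response to negativity moves the couple's steady state from deeply negative to mildly negative, which is the sort of result that could suggest where an intervention might focus.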

The model was published in a book by Gottman, Murray, and their colleagues, called The Mathematics of Marriage: Dynamic Nonlinear Models. This book was primarily intended for other academics. But unlike many academics, Gottman was also very interested in influencing the actual behavior of the people he studied. He has published a variety of books and articles on the research and has created (with his wife Julie) The Gottman Relationship Institute (www.gottman.com), which provides training sessions, videos on relationship improvement, and a variety of other communications vehicles for both couples and therapists. The model also allows researchers to simulate couples’ reactions under various circumstances. Thus, modeling can lead to “what if” thought experiments that can be used to help design new scientifically based intervention strategies for troubled marriages. Gottman helped create the largest randomized clinical trial of couples to date, involving more than 10,000 couples. He has noted how the research helps actual couples:

For the past eight years I’ve been really involved, working with my amazingly talented wife, trying to put these ideas together and use our theory so that it helps couples and babies. And we now know that these interventions really make a big difference. We can turn around 75 percent of distressed couples with a two-day workshop and nine sessions of marital therapy.9

That is an example of effective communication and action.

Despite these results, communicating about analytics has not traditionally been viewed as a subject germane to the education of quantitative analysts. Most academics, particularly those with a strong analytical orientation in their own research and teaching, have traditionally been heavily focused on the analytical methods themselves, and not enough on how to communicate effectively about them. Fortunately, this situation is beginning to change. Xiao-Li Meng, the chair of the Harvard Statistics Department and recently named the dean of the Graduate School of Arts and Sciences at Harvard, has described his goal of creating “effective statistical communicators.” He wrote:

Intriguingly, the journey, guided by the philosophy that one can become a wine connoisseur without ever knowing how to make wine, apparently has led us to produce many more future winemakers than when we focused only on producing a vintage.10

Communication is an important topic whether you are an analyst or a consumer of analytics (put another way, an analytical winemaker or a consumer of wine). Analysts, of course, can make their research outputs more interesting and attention-getting so as to inspire more action. Consumers of analytics, such as a manager who has commissioned an analytical project, should insist on receiving results in interesting, comprehensible formats. If the audience for a quantitative analysis is bored or confused, it’s probably not their fault. Consumers of analytics can work with quantitative analysts to make results easier to understand and use. And of course it is generally the consumers of analytics who make decisions and take action on the results.

Communicating the basics of an analysis

The essence of analytical communication is describing the problem and the story behind it, the model, the data employed, and the relationships among the variables in the analysis. Once the relationships among variables are identified, their meaning should be interpreted, stated, and presented in terms relevant to the problem. The clearer the presentation of results, the more likely it is that the quantitative analysis will lead to decisions and actions, which are, after all, usually the point of doing the analysis in the first place.

The presentation of the results should cover the outline of the research process, the summary and implications of the results, and the recommendations for action, though probably not in that order. It’s usually best to start with the summary and recommendations, and to communicate the results either in a meeting with the relevant people or in a formal written report.

Simply presenting data in the form of black-and-white numeric tables and equations is a pretty good way to have your results ignored, even if it’s a simple reporting exercise. Basic reports can almost always be described in simple graphical terms: a bar chart, pie chart, graph, or something more visually ambitious such as an interactive display. There are some people who prefer rows and columns of numbers over more visually stimulating presentations of data, but there aren’t many of them.

Telling a story with data

Among the more effective analysts are those who can tell a story with data. Regardless of the details of the analysis method and the means of getting it across, the elements of good analytical stories are similar. They have a strong narrative, typically driven by the business problem or objective. A presentation of an analytical story on customer loyalty might begin, “As you all know, we have wanted for a long time to identify our most loyal customers and ways to make them even more loyal to us—and now we can do it.”

Good stories present findings in terms that the audience can understand. If the audience is highly quantitative and technical, then statistical or mathematical terms—even an occasional equation—can be used. Most frequently, however, the audience will not be technical, so the findings should be presented in terms of concepts that the audience can understand and identify with. In business, this often takes the form of money earned or saved, or return on investment.

Good stories conclude with actions to take and the predicted consequences of those actions. Of course, that means that analysts should consult with key stakeholders in advance to discuss various action scenarios. No one wants to be told by a quantitative analyst that “You need to do this, and you need to do that.”

Emma Coats, a former story artist at Pixar, published a list of 22 rules for storytelling on Twitter.11 Although not all 22 rules apply directly to analytics, these four seem particularly relevant:

- “You gotta keep in mind what’s interesting to you as an audience, not what’s fun to do as a writer [or quantitative analyst]. They can be very different.”

- “Come up with your ending before you figure out your middle. Seriously. Endings are hard; get yours working up front.”

- “Putting it on paper lets you start fixing it. If it stays in your head, a perfect idea, you’ll never share it with anyone.”

- “What’s the essence of your story? Most economical telling of it? If you know that, you can build out from there.”

It may also be useful to have a structure for the communications you have with your stakeholders. That can make it clear what the analyst and decision maker are supposed to do. For example, at Intuit, George Roumeliotis heads a data science group that analyzes and creates product features based on the vast amount of online data that Intuit collects. For every project in which his group engages with an internal customer, he recommends a methodology for conducting the analysis and communicating about it. Most of the steps have a strong business orientation:

- My understanding of the business problem

- How will I measure the business impact?

- What’s the available data?

- The initial solution hypothesis

- The solution

- The business impact of the solution

Data scientists using this methodology are encouraged to create a wiki so that they can post the results of each step. Clients can review and comment on the content of the wiki. Roumeliotis notes that although the wiki is an online tool used to review the results, it encourages direct communication between the data scientists and the client.
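As a rough illustration of how the six steps above might structure a shared project page, the sketch below builds a plain-text wiki skeleton with one numbered section per step and flags the steps that have no posted results yet. The page layout, the `STEPS` list's use as section titles, and the `wiki_skeleton` helper are hypothetical; the source does not describe the format of Intuit's actual wiki.

```python
STEPS = [
    "My understanding of the business problem",
    "How will I measure the business impact?",
    "What's the available data?",
    "The initial solution hypothesis",
    "The solution",
    "The business impact of the solution",
]


def wiki_skeleton(project, posted=None):
    """Return a plain-text project page with one numbered section per step.

    `posted` maps a step title to the results posted for it; steps without
    results are flagged so the client can see what is still open.
    """
    posted = posted or {}
    lines = [f"Project: {project}", ""]
    for number, step in enumerate(STEPS, start=1):
        lines.append(f"{number}. {step}")
        lines.append("   " + posted.get(step, "(not yet posted; comments welcome)"))
    return "\n".join(lines)


print(wiki_skeleton("Hypothetical pricing test",
                    {STEPS[0]: "Customers abandon checkout at the pricing page."}))
```

Keeping the business-oriented steps as fixed section titles is one way to make every project page answer the same questions in the same order.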

What not to communicate

Analytical people are comfortable with technical terms, such as the names of the statistical methods used, actual regression coefficients, and the R² level (the percentage of variance in the data that’s explained by the regression model being used), so they often assume that their audience will be too. But most audiences won’t understand a highly technical presentation or report. As one analyst at a travel firm put it, “Nobody cares about your R².”
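For readers who do want the detail, R² compares the squared errors left over after fitting the model with the total variation around the mean: R² = 1 - SS_res / SS_tot. The sketch below computes it for an ordinary least-squares line on a tiny made-up data set; the `r_squared` function is a hypothetical helper, not part of any particular statistics package. The communication point is that a result like 0.963 would usually reach a business audience better as a plain statement that the model accounts for almost all of the variation in the outcome.

```python
def r_squared(xs, ys):
    """Fit y = intercept + slope * x by ordinary least squares and return R^2,
    the share of the variance in ys explained by the fitted line."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Residual sum of squares: variation the line fails to explain.
    ss_res = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    # Total sum of squares: all variation around the mean.
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return 1 - ss_res / ss_tot


print(round(r_squared([1, 2, 3, 4], [2, 4, 5, 8]), 3))  # 0.963
```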

Analysts also are often tempted to describe their analytical results in terms of the sequence of activities they followed to create them: “First we removed the outliers from the data, then we did a logarithmic transformation; that created high autocorrelation, so we created a one-year lag variable”—you get the picture. Again, the audiences for analytical results don’t really care what process you followed; they only care about results and implications. It may be useful to make such information available in an appendix to a report or presentation, but don’t let it get in the way of telling a good story with your data—and start with what your audience really needs to know.

Modern methods of communicating results

These days there are a variety of new tools for communicating the results of analytics, and every analyst should be aware of the possibilities. Of course, the appropriate communications tool depends on the situation and your audience, and you don’t want to employ sexy visual analytics simply for the sake of their sexiness.

Visual analytics (also known as data visualization) have advanced dramatically over the last several years. Bar and pie charts only scratch the surface of what you can do with visual display. There are scatterplots, matrix plots, heat maps, line graphs, bubble charts, tree maps, and many other options. It may seem difficult to decide which kind of chart to use for what purpose, but visual analytics software is starting to make that choice for you based on the kinds of variables in your data. SAS Visual Analytics, for example, already does this for its users with a feature called “autochart.” If the data includes, for example, “one date/time category and any number of other categories or measures,” the program will automatically generate a line chart.12
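The rule quoted above can be pictured as a small rule table keyed on the kinds of variables selected. In the sketch below, only the first rule mirrors the SAS example; the other rules, the `Column` type, and the `suggest_chart` function are hypothetical illustrations, not SAS Visual Analytics' actual logic.

```python
from dataclasses import dataclass


@dataclass
class Column:
    name: str
    kind: str  # "datetime", "category", or "measure"


def suggest_chart(columns):
    """Suggest a chart type from the kinds of variables selected.

    Only the first rule mirrors the SAS example quoted in the text; the
    remaining rules are hypothetical defaults.
    """
    kinds = [c.kind for c in columns]
    if kinds.count("datetime") == 1:
        return "line chart"  # one date/time plus any other categories or measures
    if kinds.count("measure") == 2 and "category" not in kinds:
        return "scatterplot"
    if "category" in kinds and "measure" in kinds:
        return "bar chart"
    return "table"


print(suggest_chart([Column("month", "datetime"), Column("revenue", "measure")]))  # line chart
```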

Many people employ static charts, but visual analytics are increasingly becoming dynamic and interactive. Hans Rosling, a Swedish professor, popularized this approach with his frequently viewed TED Talk13 that used visual analytics to show the changing population health relationships between developed and developing nations over time. Rosling has created a website called Gapminder (www.gapminder.org) that displays many of these types of interactive visual analytics. It is likely that we will see more of these interactive analytics to show movement in data over time, but they are not appropriate or necessary for all types of data and analyses.

Sometimes you can get even more tangible with your outputs than graphics. For example, Vince Barabba, a market researcher and strategist for several large companies, thought creatively about how best to communicate market research at the firms where he worked. At one automobile company where he led the market research department, he knew that executives were familiar with assessing the potential of three-dimensional (3-D) clay models of cars. So when he had some market research results that were particularly important to get across, he developed a 3-D model of the graphic results that executives could walk through and touch. Being able to see and touch a “spike” in market demand gave the results new meaning.

At InterContinental Hotels Group (IHG), there are several analytics groups. David Schmitt heads one of them, called Performance Strategy and Planning, in the finance organization. Schmitt’s group is charged with telling reporting-oriented stories about IHG’s performance. The group is quite focused on “telling a story with data” and on using all possible tools to get attention for, and stimulate action based on, its results. It has a variety of techniques to do this, depending on the audience. One approach is to create “music videos”: five-minute, self-contained videos that get across the broad concepts behind the results using images, audio, and video. The group follows up with verbal presentations and supporting information to drive home the meaning behind the concepts.

For example, Schmitt’s group recently coordinated the creation of a video describing predictions for summer demand. Called “Summer Road Trip,” it featured a car going down the road. In the video, the car passed road signs saying “High Demand Ahead,” and billboards with market statistics along the side of the road. The goal of the video was to get the audience thinking about what would be the major drivers of performance in the coming period and how they relate to different parts of the country. As Schmitt notes, “Data isn’t the point; numbers aren’t the point—it’s about the idea.” Once the basic idea has been communicated, Schmitt will use more conventional presentation approaches to delve into the data. But he hopes that the minds of the audience members have been primed and conditioned by the preceding video.

Games are another approach to communicating analytical results and models. They can be used to communicate how variables interact in complex relationships. For example, the “Beer Game,” a simulation based on a beer company’s distribution processes, was developed at MIT in the 1960s and has been used by thousands of companies and universities to teach supply chain management models and principles such as the “bullwhip effect.” Other companies are beginning to develop their own games to communicate specific objectives. A trucking company, Schneider National, has developed a simulation-based game to communicate the importance of analytical thinking in dispatching trucks and trailers. The goal of the game is to minimize variable costs for a given amount of revenue and reduce the driver’s time at home. Decisions to accept loads or move trucks empty are made by the players, who are aided by decision support tools. Schneider uses the game to help its own personnel understand the value of analytical decision aids, to communicate the dynamics of the business, and to change the mindset of employees from “order takers” to “profit makers.” Some Schneider customers have also played the game.
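The bullwhip effect is the tendency for small changes in customer demand to turn into ever larger swings in orders as they travel up a supply chain. The sketch below does not reproduce the Beer Game's own rules; it assumes a common textbook explanation in which each of four stages (retailer, wholesaler, distributor, factory) forecasts demand with a short moving average and orders enough to restore an order-up-to stock target. The `stage_orders` function and its `lead_time` and `window` parameters are hypothetical, as is the demand series: a single step from 4 to 8 units.

```python
def stage_orders(demand, lead_time=1, window=2):
    """Orders one supply chain stage sends upstream, given the orders it receives.

    The stage keeps an order-up-to target equal to (lead_time + 1) times a
    moving-average forecast of the last `window` demands. Each period it
    orders what was just demanded plus the change in that target. Orders are
    floored at zero, a simplification a full inventory simulation would refine.
    """
    history = [demand[0]] * window  # assume the chain starts in steady state
    target = (lead_time + 1) * sum(history) / window
    orders = []
    for d in demand:
        history = history[1:] + [d]
        new_target = (lead_time + 1) * sum(history) / window
        orders.append(max(0.0, d + new_target - target))
        target = new_target
    return orders


# Customer demand is steady at 4 units, then steps up once to 8.
customer_demand = [4] * 4 + [8] * 12
chain = [customer_demand]
for _ in range(4):  # retailer, wholesaler, distributor, factory
    chain.append(stage_orders(chain[-1]))

peaks = [max(series) for series in chain]
print(peaks)  # [8, 12.0, 20.0, 36.0, 68.0]
```

Each stage reacts sensibly to what it sees, yet while customer demand merely doubles, the factory's peak order (68) is 17 times its steady-state order of 4. Letting players experience that dynamic is far more persuasive than showing them the equations.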

Companies can also use contemporary technology to allow decision makers to interact directly with data. For example, Deloitte Consulting LLP has created an iPad-based airport operations query and reporting system. It uses Google Maps to show the airports to which a particular airline flies. Airplane icons in different colors (green for good, red for bad) indicate positive or negative performance at an individual airport. Touching a particular airport’s symbol on the map brings up financial and operational data for that airport. Touching various buttons can bring up indicators of staffing, customer service levels, finances, operations, and problem areas. This “app” is only one example of what can be done with today’s interactive and user-friendly technologies.

Beyond the report

Presentations and reports are not the only possible outputs of analytical projects. It’s even better if analysts have been engaged to produce an outcome that is closer to creating value. For example, many firms are increasingly embedding analytics into automated decision environments.14 In insurance, banking, and consumer-oriented pricing environments (such as airlines and hotels), automated decision making based on analytical systems is very common. In these environments, we know the analytics will be used because there is no choice in the matter (or at least very little; humans will sometimes review exceptional cases). If you’re a quantitative analyst or the owner of an important decision process and you can define your task as developing and implementing one of these systems, doing so is far more effective than producing a report, and it bypasses the vagaries of communicating analytical findings.
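A minimal sketch of what embedding analytics into an automated decision can look like, assuming a model that outputs a predicted probability of default: clear cases are approved or declined automatically, and only borderline or unusually large cases are referred to a human, as the paragraph above describes. The cutoffs, the amount limit, the `Decision` type, and the `decide` function are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    outcome: str  # "approve", "decline", or "refer" (to a human reviewer)
    reason: str


# Hypothetical cutoffs on a model's predicted probability of default.
APPROVE_BELOW = 0.05
DECLINE_ABOVE = 0.20
MAX_AUTOMATED_AMOUNT = 50_000


def decide(p_default, requested_amount):
    """Automatically approve or decline clear cases; refer the exceptions."""
    if requested_amount > MAX_AUTOMATED_AMOUNT:
        return Decision("refer", "amount above the automated limit")
    if p_default < APPROVE_BELOW:
        return Decision("approve", "low predicted risk")
    if p_default > DECLINE_ABOVE:
        return Decision("decline", "high predicted risk")
    return Decision("refer", "borderline predicted risk")


for p, amount in [(0.01, 10_000), (0.30, 10_000), (0.10, 10_000), (0.01, 90_000)]:
    print(p, amount, decide(p, amount).outcome)
```

Writing the referral rules down explicitly also documents, for decision makers, exactly where the model acts alone and where people stay in the loop.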

In the online information industry, companies have “big data” involving many petabytes of information. New information comes in at such volume and speed that it would be difficult for humans to comprehend it all. In this environment, the “data scientists” (quantitative analysts with high levels of data management and programming skills) working in such organizations are often located in product development organizations.15 Their goal is to develop product prototypes and new product features, not reports or presentations.

For example, the Data Science group at business networking site LinkedIn is a part of the product organization and has developed a variety of new product features and functions based on the relationships between social networks and jobs. They include People You May Know, Talent Match, Jobs You May Be Interested In, InMaps visual network displays, and Groups You Might Like. Some of these features (particularly People You May Know) have had a dramatic effect on the growth and persistence of the LinkedIn customer base.
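LinkedIn's actual algorithms are not described here. As an illustration of the general idea behind a feature like People You May Know, the sketch below uses a simple textbook heuristic, ranking people by the number of mutual connections (friends of friends), on a small made-up network; the `people_you_may_know` function and the data are hypothetical.

```python
from collections import Counter


def people_you_may_know(graph, user, k=3):
    """Suggest up to k people the user is not yet connected to, ranked by the
    number of mutual connections (ties broken alphabetically)."""
    direct = graph.get(user, set())
    mutual_counts = Counter()
    for friend in direct:
        for candidate in graph.get(friend, set()):
            if candidate != user and candidate not in direct:
                mutual_counts[candidate] += 1
    ranked = sorted(mutual_counts.items(), key=lambda item: (-item[1], item[0]))
    return [name for name, _ in ranked[:k]]


# A small, made-up undirected network stored as adjacency sets.
graph = {
    "ana": {"ben", "cho"},
    "ben": {"ana", "cho", "dev"},
    "cho": {"ana", "ben", "dev", "eli"},
    "dev": {"ben", "cho"},
    "eli": {"cho"},
}
print(people_you_may_know(graph, "ana"))  # ['dev', 'eli']
```

Here the output is a product feature rather than a report: the analysis reaches users directly, with no presentation in between.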

Whether your goal is to change the approach a decision maker uses or to actually improve a product or process, communication is critical to your success. From the beginning of the analysis process, an analyst should be thinking deeply about how the results will be communicated. Leading analytical communicators don’t even wait until the end of the analysis; rather, they use the entire process as a vehicle to communicate with stakeholders.