In-memory revolution Tech Trends 2014

22 February 2014

- Mike Brown, Doug Krauss

The sweet spot for in-memory technologies is where massive amounts of data, complex operations, and business challenges demanding real-time support collide.

As in-memory technologies move from analytical to transactional systems, the potential to fundamentally reshape business processes grows. Technical upgrades of analytics and ERP engines may offer total cost of ownership improvements, but potential also lies in using in-memory technologies to solve tough business problems. CIOs can help the business identify new opportunities and provide the platform for the resulting process transformation.

An answer to big data

Data is exploding in size—with incredible volumes of varying forms and structure—and coming from inside and outside of your company’s walls. No matter what the application—on-premises or cloud, package or custom, transactional or analytical—data is at its core. Any foundational change in how data is stored, processed, and put to use is a big deal. Welcome to the in-memory revolution.

With in-memory, companies can crunch massive amounts of data, in real time, to improve relationships with their customers—to generate add-on sales and to price based on demand. And it goes beyond customers: The marketing team wants real-time modeling of changes to sales campaigns. Operations wants to adjust fulfillment and supply chain priorities on the fly. Internal audit wants real-time fraud and threat detection. Human resources wants to continuously understand employee retention risks. And there will likely be a lot more as we learn how lightning-fast data analysis can change how we operate.

An evolution of data’s backbone

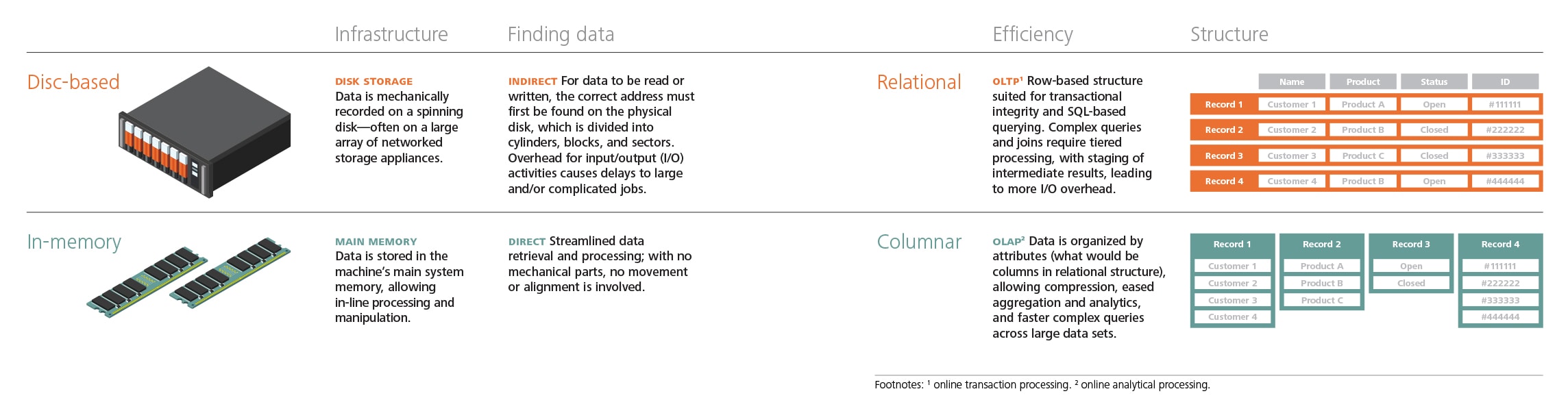

Traditionally, data has been stored on spinning discs—magnetic, optical, electronic, or other media—well suited for structured, largely homogeneous information requiring ACID1 transaction processing. In-memory technologies are changing that core architecture—replacing spinning discs with random access memory (RAM) and fueling a shift from row-based to column-based data storage. By removing the overhead of disc I/O, performance can be immediately enhanced. Vendor claims vary from thousand-fold improvement in query response times2 to transaction processing speed increases of 20,000 times.3 Beyond delivering raw speed, in-memory responses are also more predictable, able to handle large volumes and a mix of structured, semi-structured, and unstructured data. Column-based storage allows for massive amounts of data of varying structures to be promptly manipulated, preventing redundant data elements from being stored.
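The shift from row-based to column-based storage can be illustrated with a minimal sketch. It assumes a handful of hypothetical order records; the field names and the helpers `to_columns` and `dictionary_encode` are illustrative and do not come from any vendor product. Dictionary encoding is shown as one common way a column store avoids keeping redundant values, not as the method any particular engine uses.

```python
from collections import defaultdict

# Hypothetical order records, stored row by row (one dict per record).
rows = [
    {"order_id": 1, "region": "EMEA", "amount": 120.0},
    {"order_id": 2, "region": "APAC", "amount": 75.5},
    {"order_id": 3, "region": "EMEA", "amount": 210.0},
    {"order_id": 4, "region": "EMEA", "amount": 40.0},
]


def to_columns(records):
    """Pivot row-oriented records into one list per column."""
    columns = defaultdict(list)
    for record in records:
        for name, value in record.items():
            columns[name].append(value)
    return dict(columns)


def dictionary_encode(values):
    """Keep each distinct value once and represent the column as integer codes."""
    dictionary = []   # distinct values, in order of first appearance
    index = {}        # value -> position in `dictionary`
    codes = []        # one small integer per original value
    for value in values:
        if value not in index:
            index[value] = len(dictionary)
            dictionary.append(value)
        codes.append(index[value])
    return dictionary, codes


columns = to_columns(rows)
region_dictionary, region_codes = dictionary_encode(columns["region"])

# An aggregate over one column reads only that column's values.
total_amount = sum(columns["amount"])
```

Here the repeated region name is stored once and referenced by code, and the sum scans a single contiguous list instead of visiting every field of every record.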

While the concept of in-memory is decades old, the falling price of RAM and growing use cases have led to a new focus on the technology. CIOs can reduce total cost of ownership because the shift from physical to logical reduces the hardware footprint, allowing more than 40 times the data to be stored in the same finite space. That means racks and spindles can be retired, data center costs can be reduced, and energy use can be slashed by up to 80 percent.4 Operating costs can also be cut both by reducing maintenance needs and by streamlining the performance of employees using the technology.5 In addition, cloud options provide the possibility of pivoting from fixed to variable expenses. The bigger story, though, is how in-memory technology shakes up business processes.

Beyond the technology

CIOs should short-circuit market hype and determine which areas of their business can take advantage of in-memory technology. In last year’s Tech Trends report, our “Reinventing the ERP Engine”6 chapter asked a specific question: What would you do differently if you could close your books in seven seconds instead of seven days? Today, with advances in in-memory technology, that “what if” has become a reality that is driving companies to consider both the costs of ERP upgrades and the breakthrough benefits of real-time operations.

Operational reporting and data warehousing are easy targets for in-memory, especially those with large (billion-plus-record) datasets, complex joins, ad hoc querying needs, and predictive analytics. Core processes with long batch windows are also strong candidates: planning and optimization jobs for pricing and promotions, material requirements planning (MRP), and sales forecasting. The sweet spot is where massive amounts of data, complex operations, and business challenges demanding real-time support collide. Functions where the availability of instantaneous information can improve decision quality—telecommunications, network management, point-of-sale solutions—are good candidates for an in-memory solution. Over the next 24 months, some of the more important conversations you’ll have will likely be about in-memory technologies.

Not every workload will be affected equally, and the transition period will see a hearty mix of old and new technologies. We’re still in the early stages of businesses rewiring their processes to take advantage of the new engine. Analytics will continue to see the lion’s share of investment, with in-memory–fueled insights layered on top of existing legacy processes. Point technical upgrades will offer incremental benefits without the complexity of another round of process transformation. And ERP vendors are still in the midst of rewriting their applications to exploit the in-memory revolution.

And while benefits exist today, even more compelling scenarios are coming soon. The holy grail is in sight: a single data store supporting transactions and analytics. This is great news for CIOs looking to simplify the complexity of back-end integration and data management. It’s even better news for end users, with new experiences made possible by the simplified, unified landscape.
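As a rough illustration of one store serving both workloads, the sketch below uses Python's built-in `sqlite3` module with an in-memory database. SQLite is only a stand-in chosen because it ships with Python; it is not one of the products discussed here and says nothing about their scale or architecture. The `orders` table and both helper functions are assumptions made for the example.

```python
import sqlite3

# ":memory:" keeps the whole database in RAM for the life of the connection.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, region TEXT, amount REAL)"
)


def record_order(region, amount):
    """Transactional write: committed on success, rolled back on error."""
    with conn:
        conn.execute(
            "INSERT INTO orders (region, amount) VALUES (?, ?)",
            (region, amount),
        )


def revenue_by_region():
    """Analytical read against the same table the transactions write to."""
    cursor = conn.execute(
        "SELECT region, SUM(amount) FROM orders GROUP BY region ORDER BY region"
    )
    return dict(cursor.fetchall())


record_order("EMEA", 120.0)
record_order("APAC", 75.5)
record_order("EMEA", 210.0)
summary = revenue_by_region()
```

Because the report query runs against the live table, there is no separate extract or load step between recording an order and seeing it in the aggregate.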

Lessons from the front lines

Reinventing production planning

When a large aerospace and defense company sought to uncover and overcome challenges in its ability to deliver products on time, the company turned to an in-memory-based analytics platform.

By using descriptive statistics to determine the root causes of the performance issues, the company discovered that only a small number of the more than 50,000 assembly parts delivered performance within 10 percent of the company’s plan, uncovering the need for more accurate data to fuel the planning process. Additionally, performance variation among component parts was high. By splitting the bill of materials into delivery segments, more advanced statistics could be generated, allowing performance to be evaluated at the individual part level.
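The "within 10 percent of plan" measure can be sketched as a simple part-level check. The part names and lead times below are invented for illustration and are not the company's data; `within_tolerance` is a hypothetical helper, and the case study does not say how the actual measure was computed.

```python
def within_tolerance(planned_days, actual_days, tolerance=0.10):
    """True when actual lead time deviates from plan by at most `tolerance`."""
    return abs(actual_days - planned_days) <= tolerance * planned_days


# Hypothetical parts: name -> (planned lead time, actual lead time), in days.
parts = {
    "bracket": (30, 31),
    "valve": (45, 60),
    "harness": (20, 27),
    "panel": (60, 63),
    "actuator": (90, 140),
}

on_plan = sorted(
    name
    for name, (planned, actual) in parts.items()
    if within_tolerance(planned, actual)
)
share_on_plan = len(on_plan) / len(parts)
```

With these made-up figures only two of five parts fall inside the tolerance band, which is the kind of finding that prompts a closer look at individual parts.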

Predictive models were then used to determine the factors contributing to the longer lead times. Subsequently, 35 unique parts were identified as representing significant risks to meeting delivery timelines. Clustering analytics assigned these high-risk parts into 11 groups of performance improvement tactics. Finally, predictive models were again run to align performance targets and financial goals with each of the tactics identified.

As a result of the diagnostics and actionable insights generated by this analytics platform, benefits valued in excess of $100 million were achieved. In addition, reduced product lead time, reduced inventory holding costs, and a 45 percent increase in on-time delivery were attained.

Drilling for better performance

Pacific Drilling, a leading ultra-deepwater drilling contractor founded in 2008, grew aggressively in its first few years. As a result, IT was tasked with providing a state-of-the-art platform for measuring company performance. Additionally, Pacific Drilling’s ERP system—implemented early on in the company’s life—was due for an upgrade. The company selected an approach that addressed both projects, allowing it to keep pace with expansion plans and gain a strategic edge with its information system.

Pacific Drilling implemented a single in-memory data platform for both advanced analytics and its upgraded ERP system. On this platform, the company was able to more effectively run maintenance, procurement, logistics, human resources, and finance functionalities in its many remote locations. It also could perform transactional and reporting operations within one system in real time. Business leaders are gaining insight, while IT delivers reports and dashboards with reduced time-to-value cycles.

With an in-memory solution, Pacific Drilling can more effectively measure performance and processes across the enterprise, and is better positioned to expand its business and competitiveness in the industry.

Communicating at light-speed

In the telecommunications industry, the customer experience has historically been defined through a series of disconnected, transaction-based interactions with either a call center or retail store representative. Customers have likely had frustrating experiences such as service call transfers that require repeated explanations of the problem at hand; retail store interactions conducted with little understanding of their needs; and inconsistent products and offers across different channels. While some companies may see this as a challenge, T-Mobile US, Inc. recognized an opportunity to innovate its customer experience.

The challenge many companies face is a “siloed” view of customer interactions across traditional marketing, sales, and service channels, as well as across emerging channels such as mobile devices and social media. T-Mobile recognized the potential in connecting the dots across these interactions and creating a unified customer experience across its channels, both traditional and emerging. By shifting its customer engagement model from reactive to proactive, T-Mobile could understand, predict, and mitigate potential customer issues before they occur and drive offers based on an individual’s personal history (i.e., product usage, service issues, and buying potential). This was certainly a tall order, but it was a compelling vision.

T-Mobile’s approach was to create a single view of customer interactions across channels. Each time a customer interacts with T-Mobile, the company records a “touch,” and each time T-Mobile corresponds with a customer, the company also records that as a touch—creating a series of touches that, over time, resemble a conversation. The next time that T-Mobile and a customer interact, there are no awkward exchanges: The conversation starts where it left off. And the collection of touches can be used in real time to drive personalized pricing and promotions—through the web, on a mobile device, or while talking to a call center agent.
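The idea of touches accumulating into a conversation can be sketched as a small in-memory data structure. Everything here is an assumption made for illustration: the `Touch` fields, the `TouchLog` class, and the sample channels are hypothetical and do not describe T-Mobile's actual data model.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Touch:
    customer_id: str
    channel: str      # e.g. "web", "call_center", "sms"
    direction: str    # "inbound" (customer to company) or "outbound"
    summary: str
    at: datetime


class TouchLog:
    """Keeps every touch in memory, grouped by customer."""

    def __init__(self):
        self._by_customer = defaultdict(list)

    def record(self, touch):
        self._by_customer[touch.customer_id].append(touch)

    def conversation(self, customer_id):
        """All touches for one customer, oldest first, across every channel."""
        return sorted(self._by_customer.get(customer_id, []), key=lambda t: t.at)

    def last_touch(self, customer_id):
        """Where the conversation left off, or None for an unknown customer."""
        history = self.conversation(customer_id)
        return history[-1] if history else None


log = TouchLog()
log.record(Touch("c-1", "web", "inbound", "browsed upgrade options",
                 datetime(2014, 1, 5, 9, 0)))
log.record(Touch("c-1", "call_center", "inbound", "reported dropped calls",
                 datetime(2014, 1, 6, 14, 30)))
log.record(Touch("c-1", "sms", "outbound", "sent network status update",
                 datetime(2014, 1, 6, 15, 0)))
```

Calling `log.last_touch("c-1")` returns the outbound status update, so whoever handles the next interaction can see the most recent exchange regardless of channel.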

The situation T-Mobile faced when getting started: Two terabytes of customer data, created just in the last three months, stored in 15 separate systems with 50 different data types. Producing a single, consumable view of a customer’s interaction history with the company’s existing systems was likely impossible. Add the desire to perform advanced analytics, and it was clear the existing systems could not support the effort. To address these technical limitations, T-Mobile implemented an in-memory system that integrates multichannel sales, service, network, and social media data. The powerful data engine, combined with a service-oriented architecture, allows T-Mobile to capture customer interactions in a dynamic in-memory data model that accommodates the ever-changing business and customer landscape. The in-memory capabilities enable the integration of advanced customer analytics with a real-time decision engine to generate personalized experiences such as a discount for purchasing a new device the customer is researching or an account credit resulting from network issues impacting the customer.
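A real-time decision step of the kind described above might, in its simplest form, look like the sketch below. It replaces the advanced analytics with two hand-written rules that mirror the two examples in the text (a credit after network issues, a discount on a device being researched). The `kind` labels, the action names, and the rule priority are all assumptions for illustration.

```python
def next_best_action(touches):
    """Pick one action from a customer's recent touches using fixed rules.

    Assumption: service problems take priority over sales offers.
    """
    kinds = {touch["kind"] for touch in touches}
    if "network_issue" in kinds:
        return "offer_account_credit"
    if "device_research" in kinds:
        return "offer_device_discount"
    return "no_action"


# Hypothetical recent touches for two customers.
researching = [{"kind": "device_research", "channel": "web"}]
affected = [
    {"kind": "device_research", "channel": "web"},
    {"kind": "network_issue", "channel": "call_center"},
]
```

A production engine would score candidate actions with predictive models rather than fixed rules; the point of the sketch is only that the decision reads the same integrated interaction history that the channels write to.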

T-Mobile’s multichannel customer view takes the guesswork out of providing “the next best action” or “the next best offer” to customers. Data integration across traditional and emerging channels allows T-Mobile to see the full picture of a customer’s experience so its employees can proactively engage customers with the appropriate message at the appropriate time. The importance of the customer experience has never been greater, and T-Mobile is shaking up the wireless industry by eliminating customer pain points. Customers can take advantage of its no-annual-service-contract Simple Choice Plan, an industry-leading device upgrade program, as well as unlimited global data at no extra charge. By implementing an in-memory platform to better understand its customers, T-Mobile continues to extend its competitive advantage with its differentiated customer experience.

Next-generation ERP

With customers demanding reductions in time-consuming workloads and better performance of transactional and informational data, ERP providers are looking for ways to improve existing products or introduce new ones. In-memory capabilities, often used for analytics in the past, can give vendors a way to address such concerns and create core business offerings that were previously unachievable. Both SAP and Oracle provide technical upgrade paths from recent releases.

To this end, Oracle announced the release of 13 in-memory applications with a performance improvement of 10- to 20-fold.7 Seven of these applications are new, intended to make possible several business processes previously deemed too intensive. Changes to the algorithms of six legacy applications will also allow in-memory versions to be created.8 Additionally, an in-memory option, intended to double the speed of transaction processing, has been added to the core 12c database.9

Similarly, SAP has shifted its core business applications to an in-memory database and has gained the participation of more than 450 customers as of October 2013.10 For these customers, this shift could make real-time financial planning possible, including interactive analysis of vast amounts of data.11 The outcomes of immediate data-driven decision making may soon be seen as adoption of in-memory business applications continues.

My take

Jason Maynard, software and cloud computing analyst, Wells Fargo Securities

The in-memory movement is upon us and a bevy of approaches are emerging to solve new and existing business problems. So with the goal of doing things “faster, better, cheaper,” in-memory databases help to get you the “faster”—with an opportunity for “better” and “cheaper” when you use in-memory to tackle business, not technical, problems.

From my perspective, mass-market in-memory adoption is still in the early stages but poised to gain momentum. While in-memory database systems aren’t new per se, they are gaining in popularity because of lower cost DRAM and flash options. I think it has greater potential than many recognize—and more known use cases than many believe. The available products still have room to mature in their development lifecycles, and I think customers are looking for pre-packaged, purpose-built applications and platforms that show concrete benefit. I think there is opportunity, however, for early-adopting customers to lead the charge and gain competitive advantage.

In-memory databases are database management systems that use main memory for data storage instead of disk storage. This brings benefits such as faster performance, faster response time, and reduced modeling. Many industries, such as financial services, telecommunications, and retail, have used in-memory for trading systems, network operations, and pricing. But as newer systems such as SAP’s HANA, Oracle’s Exalytics, and a number of startups appear, the market should expand in size. Moving in-memory from specialized markets to mainstream adoption means that many applications may need to be rewritten to take advantage of the new capabilities. SAP’s HANA database engine supports analytic applications and transactional systems. Oracle has released a number of in-memory apps across its application product portfolio.

My advice for companies is to start small. Identify a few bounded, business-driven opportunities for which in-memory technology can be applied. Budgeting, planning, forecasting, sales operations, and spreadsheet-driven models are good places to start, in my view. Their massive data volumes and large numbers of variables can yield faster and more informed decisions and likely lead to measurable business impact. The idea here is to reduce cycles and increase planning accuracy. By allowing a deeper level of operational detail to be included within the plan, users can perform more what-if scenario modeling iterations in the same budgeting window. This means insights can be put to use without reengineering the back-end core processes and existing transactions can be run with more valuable, timely input. Among others, Anaplan has released a cloud-based planning and modeling solution that was built with an in-memory engine.

The extended ecosystem of ERP vendors, independent software vendors (ISVs), systems integrators, and niche service providers is working hard to close the gap between in-memory’s potential and the current state of the market. It will take time, and the full potential is unlikely to be realized until application stacks are rewritten to take advantage of the new data structures and computational scale that in-memory computing provides. But in-memory computing has the potential to transform business.

Where do you start?

Vendors are making strategic bets in the in-memory space. IBM and Microsoft have built in-memory into DB2 and SQL Server. A host of dedicated in-memory products have emerged, from open source platforms such as Hazelcast to Software AG’s BigMemory to VMware’s SQLFire.

But for many CIOs, the beachhead for in-memory will come from ERP providers. SAP continues to invest heavily in HANA, moving from analytics applications to its core transactional systems with Business Suite on HANA. SAP is also creating an ecosystem for developers to build adjacent applications on its technology, suggesting that SAP’s HANA stack may grow over the next few years.12

Oracle is likely making a similar bet on its latest database, 12c, which adds in-memory as an option to its traditional disc-based, relational platform.13 While there will be disruption and transition expenses, the resulting systems will likely have a lower total cost of ownership (TCO) and much higher performance than today’s technology offers.

In addition, Oracle and SAP are pressing forward to create extensive ecosystems of related and compatible technologies. From captive company-built applications to licensed solutions from third parties, the future will be full of breakout opportunities. Continuous audits in finance. Real-time supply chain performance management. Constant tracking of employee satisfaction. Advanced point-of-sale solutions in retail. Fraud and threat detection. Sales campaign effectiveness. Predictive workforce analytics. And more. Functions that can benefit from processing crazy amounts of data in real time can likely benefit from in-memory solutions.

Vendors are pitching the benefits of the technology infrastructure, with an emphasis on real-time performance and TCO. That’s a significant start, but the value can be so much more. The true advantage of an in-memory ecosystem is the new capabilities that can be created across a wide range of functions. That’s where businesses come in. Vendors are still on the front end of product development, so now is the time to make your requirements known.

- Start by understanding what you’ve already bought. In-memory is an attractive and invasive technology—a more effective way of doing things. You may already have instances where you’re using it. Assess the current benefits and determine what more you may need to spend to capitalize on its real-time benefits.

- Push the vendors. Leading ERP vendors are driving for breakthrough examples of in-memory performance—and are looking for killer applications across different industries and process areas. Talk with your sales reps. Get them thinking about—and investing in—solutions you can use.

- Ask for roadmaps. Move past sales reps to senior product development people at vendors and systems integrators. Ask them to help create detailed roadmaps you can use to guide the future.

- First stop: analytics. You’ll likely find more immediate opportunities around your information agenda—fueling advanced analytics. In-memory can be used to detect correlations and patterns in very large data sets in seconds, not days or weeks. This allows for more iterations to be run, drives “fast failing,” and leads to additional insights and refined models, increasing the quality of the analysis. These techniques used to be reserved for PhD-level statisticians—but not anymore.

- Focus on one or two high-potential capabilities. No company wants to conduct open-heart surgery on its core ERP system. Instead, pick a few priority functions for your business to gain buy-in. Your colleagues need to see the potential upside before they’ll appreciate what a big deal this is. Analytics is a good starting point because it’s fairly contained. Customer relationship management (CRM) is another good match, with its focus on pricing agility and promotion. After that, consider supply chain and manufacturing.

- Watch competitors. Experimentation will take place in many areas over the next two years. If a competitor develops new capabilities with demonstrated value in a particular area, the dam will break and the adoption curve will take off. Be ready for it.
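The analytics starting point in the list above, detecting correlations and patterns across data held in memory, can be illustrated with a small sketch. The metric names and weekly figures are hypothetical, and `strongest_pair` is an illustrative helper; a real platform would run this kind of scan over far larger data sets with its own optimized routines.

```python
from itertools import combinations
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def strongest_pair(columns):
    """Return (name_a, name_b, r) for the column pair with the largest |r|."""
    scored = (
        (a, b, pearson(columns[a], columns[b]))
        for a, b in combinations(columns, 2)
    )
    return max(scored, key=lambda item: abs(item[2]))


# Hypothetical weekly figures, held entirely in memory.
metrics = {
    "discount_pct": [0, 5, 10, 15, 20],
    "units_sold": [100, 112, 119, 133, 140],
    "returns": [7, 3, 8, 4, 6],
}
pair = strongest_pair(metrics)
```

With these invented numbers the scan singles out discount and units sold as the most strongly related pair. When each pass takes seconds, an analyst can rerun it with different columns or segments many times in one sitting, which is the "more iterations" benefit the list describes.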

Bottom line

Some technology trends explode onto the scene in a big way, rapidly disrupting business as usual and triggering an avalanche of change. Others quietly emerge from the shadows, signaling a small shift that builds over time. The in-memory revolution represents both. On the one hand, the technology enables significant gains in speed, with analytics number-crunching and large-scale transaction processing able to run concurrently. At the same time, this shift has opened the door to real-time operations, with analytics insights informing transactional decisions at the individual level in a virtuous cycle. The result? Opportunities for continuous performance improvement are emerging in many business functions.