
Autonomic platforms: Building blocks for labor-less IT

25 February 2016

Almost all traditional IT operations could be candidates for autonomic computing—which really means taking automation to the next level by basing it in machine learning.

IT may soon become a self-managing service provider without technical limitations of capacity, performance, and scale. By adopting a “build once, deploy anywhere” approach, retooled IT workforces—working with new architectures built upon virtualized assets, containers, and advanced management and monitoring tools—could seamlessly move workloads among traditional on-premises stacks, private cloud platforms, and public cloud services.


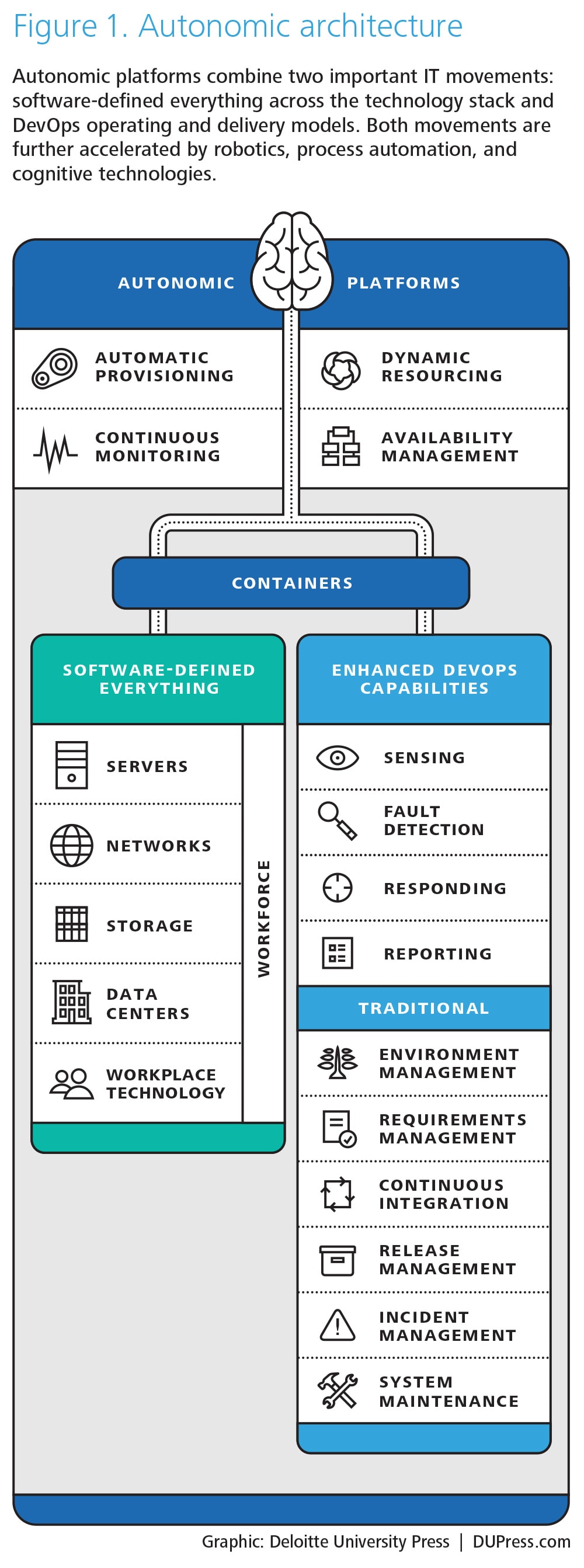

Autonomic platforms build upon and bring together two important trends in IT: software-defined everything’s1 climb up the tech stack, and the overhaul of IT operating and delivery models under the DevOps2 movement. With more and more of IT becoming expressible as code—from underlying infrastructure to IT department tasks—organizations now have a chance to apply new architecture patterns and disciplines. In doing so, they can remove dependencies between business outcomes and underlying solutions, while also redeploying IT talent from rote low-value work to the higher-order capabilities needed to deliver right-speed IT.3

To truly harness the potential of autonomic platforms, one must first explore their foundational elements.

Virtualization up

The kernel of software-defined everything (SDE) has been a rallying cry in IT for decades. The idea is straightforward: Hardware assets like servers, network switches, storage arrays, and desktop facilities are expensive, difficult to manage, and often woefully suboptimized. Typically procured and configured for single-purpose use, they are sized to withstand the most extreme estimates of potential load. Moreover, they are typically surrounded by duplicate environments (most of them idle) that were created to support new development and ongoing change. In the mainframe days, such inefficiencies were mitigated somewhat by logical partitions: segments of a computer’s hardware resources that can each be virtualized and managed independently as a separate machine. Over the years, this led to virtualization, or the creation of software-based, logical abstractions of underlying physical environments in which IT assets can be shared.

Notably, virtualization shortened the time required to provision environments; rather than waiting months while new servers were ordered, racked, and configured, organizations could set up a virtual environment in a matter of hours. Though virtualization started at the server level, it has since expanded up, down, and across the technology stack. It is now also widely adopted for network, storage, and even data center facilities. Virtualization alone now accounts for more than 50 percent of all server workloads, and is projected to account for 86 percent of workloads by the end of 2016.4

DevOps down

As virtualization was working its way up the stack, the methods and tools for managing IT assets and the broader “business of IT” were undergoing a renaissance. Over time, IT departments became saddled with manual processes and cumbersome one-size-fits-all software development lifecycle (SDLC) methodologies, or they developed “over-the-wall engineering” mind-sets in which individuals fulfill their own obligations with little understanding of or concern for the needs of downstream teams. This operational baggage has fueled tension between IT’s development group, which pushes for speed and experimentation with new features and tools, and its operations organization, which prizes stability, performance, and predictable maintenance.

To combat organizational inefficiency as well as any discord that has arisen among various parts of the IT value chain, many organizations are implementing DevOps,5 a new way of organizing and focusing various teams. DevOps utilizes tools and processes to eliminate some of the waste embedded in legacy modes for operating IT. In a way, it also extends the software-defined-everything mission into the workforce by instilling abstractions and controls across the tasks required to manage the end-to-end life cycle of IT. Scope varies by organization, but DevOps often concentrates on a combination of the following:

- Environment management: Configuration, provisioning, and deployment of (increasingly virtualized) environments

- Requirements management: Disciplines for tracking details and priorities of features and specifications—both functional and nonfunctional

- Continuous integration: Providing the means to manage code updates across multiple developers and to validate and merge edits to resolve any conflicts, issues, or redundant efforts

- Release management: Bundling and deploying new solutions or editing existing solutions through staging environments into production

- Event/incident management: Tracking, triage, and resolution of system bugs or capability gaps

- System maintenance/monitoring: Ongoing tracking of overall solution performance across business and technical attributes, including network, servers, and storage components

- Production support: Managing and monitoring application performance in real time, including monitoring run-time activities, batch jobs, and application performance

- Business command center: Real-time management/monitoring of business transactions such as payment flows, inventory bottlenecks, and existential threats
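The environment management item above is the easiest to picture once environments are expressed as code. The following is a minimal sketch, not a description of any particular tool: the `EnvSpec` type, the `plan` function, and the environment names and sizes are all hypothetical. It compares a declared (desired) set of environments with what currently exists and derives the provisioning steps needed to close the gap.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class EnvSpec:
    """Hypothetical declarative description of one environment."""
    name: str
    cpus: int
    memory_gb: int


def plan(desired: dict[str, EnvSpec], actual: dict[str, EnvSpec]) -> list[tuple[str, str]]:
    """Return (action, environment name) steps that move `actual` toward `desired`."""
    steps = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            steps.append(("create", name))   # declared but not yet provisioned
        elif actual[name] != spec:
            steps.append(("update", name))   # provisioned, but configuration drifted
    for name in sorted(actual):
        if name not in desired:
            steps.append(("delete", name))   # running but no longer declared
    return steps


# Illustrative data only; none of these environments come from the article.
desired = {
    "dev": EnvSpec("dev", cpus=2, memory_gb=4),
    "staging": EnvSpec("staging", cpus=4, memory_gb=8),
}
actual = {
    "dev": EnvSpec("dev", cpus=2, memory_gb=2),
    "legacy-test": EnvSpec("legacy-test", cpus=8, memory_gb=16),
}

steps = plan(desired, actual)
print(steps)
```

Because the plan is derived from data rather than from a runbook, the same comparison can be rerun after every change, which is one reason declarative descriptions lend themselves to automation.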

Enter autonomic platforms

With the building blocks in place, the real fun can begin: automating and coordinating the interaction of these software-defined capabilities. That’s where autonomic platforms come in, layering in the ability to dynamically manage resources while integrating and orchestrating more of the end-to-end activities required to build and run IT solutions.

When discussing the concept of autonomics, we are really talking about automation + robotics, or taking automation to the next level by basing it in machine learning. Almost all traditional IT operations are candidates for autonomics, including anything that’s workflow-driven, repetitive, or policy-based and requires reconciliation between systems. Approaches have different names: robotic process automation (RPA), cognitive automation, intelligent automation, and even cognitive agents. However, their underlying stories are similar—applying new technologies to automate tasks and help virtual workers handle increasingly complex workloads.

Virtualized assets are an essential part of the autonomics picture, as are the core DevOps capabilities listed above. Investments in these assets can be guided by a handful of potential scenarios:

- Self-configuration: Automatic provisioning and deployment of solution stacks include not only the underlying data center resources and requisite server, network, and storage requirements, but also the automated configuration of application, data, platform, user profile, security, and privacy controls, and associated supporting tools, interfaces, and connections for immediate productivity. Importantly, efforts should also be made to build services to automatically decommission environments and instances when they are no longer needed or, perhaps more interestingly, when they are no longer being used.

- Self-optimizing: Self-optimizing refers to dynamic reallocation of resources in which solutions move between internal environments and workloads are shifted to and between cloud services. The rise of containers has been essential here, creating an architecture that abstracts the underlying physical and logical infrastructure, enabling portability across environments. Note that this “build once, deploy anywhere” architecture also requires services to manage containers, cloud services, and the migration of build- and run-time assets.

- Self-healing: DevOps asked designers to embed instrumentation and controls for ongoing management into their solutions, while also investing in tools to better monitor system performance. Autonomic platforms take this a step further by adding detailed availability and service-level availability management capabilities, as well as application, database, and network performance management tools. These can be used to continuously monitor the outcome-based health of an end-to-end solution, anticipate problems, and proactively take steps to prevent an error, incident, or outage.
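The three scenarios above can be read as branches of a single policy loop. The sketch below is illustrative only: the `Instance` fields, the thresholds, and the action names are assumptions, and the "anticipation" step is a deliberately naive straight-line projection from the last two latency samples rather than the machine learning a real platform might use.

```python
from __future__ import annotations

from dataclasses import dataclass

IDLE_LIMIT_DAYS = 14      # assumed policy: unused this long means decommission
LATENCY_SLO_MS = 200.0    # assumed service-level target


@dataclass
class Instance:
    """Hypothetical snapshot of one monitored instance."""
    name: str
    healthy: bool
    idle_days: int
    latency_ms: list[float]   # recent samples, oldest first


def projected_latency(samples: list[float]) -> float:
    """Extrapolate one step ahead from the last two samples (naive trend)."""
    if not samples:
        return 0.0
    if len(samples) < 2:
        return samples[-1]
    return samples[-1] + (samples[-1] - samples[-2])


def decide(inst: Instance) -> str:
    """Pick one action per instance; order encodes priority."""
    if inst.idle_days >= IDLE_LIMIT_DAYS:
        return "decommission"   # self-configuration: retire unused resources
    if not inst.healthy:
        return "restart"        # self-healing: react to a failure
    if projected_latency(inst.latency_ms) > LATENCY_SLO_MS:
        return "scale_out"      # self-optimizing: act before the target is breached
    return "none"


# Illustrative fleet; the names and numbers are made up.
fleet = [
    Instance("batch-old", healthy=True, idle_days=30, latency_ms=[50.0, 52.0]),
    Instance("api-1", healthy=False, idle_days=0, latency_ms=[90.0, 95.0]),
    Instance("web-1", healthy=True, idle_days=0, latency_ms=[150.0, 180.0]),
    Instance("web-2", healthy=True, idle_days=1, latency_ms=[100.0, 101.0]),
]

actions = {inst.name: decide(inst) for inst in fleet}
print(actions)
```

Note that `web-1` is still within its target when the decision is made; it is flagged because the trend projects a breach, which is the "anticipate problems" behavior described in the self-healing scenario.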

The journey ahead

The promise of autonomic platforms (like those of its predecessors, SDE and DevOps) can wither in the face of circumscribed initiatives and siloed efforts. Heavy segmentation among infrastructure and delivery management tool vendors does not help either. The good news is that established players are now introducing products that target parts of emerging platforms and help retrofit legacy platforms to participate in autonomic platforms. Start-ups are aggressively attacking this space, bolstered by their ability to capture value through software enablers in segments historically protected by huge manufacturing and R&D overheads. Multivendor landscapes are the new reality—the arrival of an “ERP for IT” solution by a single vendor remains years away. Yet even without a single end-to-end solution, leading organizations are taking holistic, programmatic approaches to deploy and manage autonomic platforms. These often include taking tactical first steps to consolidate environments and solutions and to modernize platforms—steps that can help fund more ambitious enablement and automation efforts.

Furthermore, autonomic platforms can act as a Trojan horse that elevates IT’s standing throughout the enterprise. How? By boosting IT responsiveness and agility today and by providing the architectural underpinnings needed to support the next-generation solutions and operating environments of tomorrow.

Lessons from the front lines

Automating one step at a time

In support of the company’s ongoing digital transformation, American Express continually strives to enhance the way it does business to meet changing customer needs. The financial services company’s goal is to engage new customer groups wherever they are, whenever they choose, using whatever device they prefer.

To this end, tech and business leaders have challenged themselves to accelerate their existing product development cycle. The technology organization took up the challenge by looking for a way to scale its infrastructure and operations to deliver across a large and often siloed ecosystem in new and innovative ways. Cost and speed complaints surfaced, with incremental plans for modest improvements leading to one executive observing, “We don’t need a faster horse; what we really need is an automobile.” In response, the group supporting the prepaid/mobile payments platform—which serves as the backbone for the company’s digital products—rallied behind a “software-defined everything” strategy—from environments and platforms to operating and delivery models.

They first began working on reducing cost, improving time to market, and automating much of the prepaid/mobile payments platform. Then, they put tools in place that allowed developers to deploy their solutions, from development through production, without friction. Development teams are now incented differently—focused not only on speed to value, but also on building operationally manageable code. Such enhancements typically manifest in important ways, from the percentage of SCRUM scope reserved to pay down technical debt6 to creating expectations for embedded instrumentation for ongoing ops.

On the operations side, Amex’s technology team adopted ITIL processes built around controls, checkpoints, and segregation of duties. Metrics emphasized process compliance rather than what the process was trying to control or its impact on the business. To change the mind-set, infrastructure specialists were given training to help them hone their development skills and gain expertise in autonomic platforms and tools. These specialists were also charged with creating lower-level function automation and putting in place monitoring, maintenance, and self-healing capabilities focused on business process and activity monitoring, not on the performance of the underlying technology components.

Additionally, other product teams have begun transforming the systems that support other American Express products and platforms. In this environment, product development still relies upon more traditional software engineering methods, such as waterfall methodologies and manual testing and deployment. Developers have begun to tackle transformation in stages—introducing concepts from agile development such as regression testing, measuring legacy code quality, and establishing foundational hooks and automated routines for instrumentation and maintenance. In addition, they are working to help all team members become more versed in agile and DevOps processes and techniques.

Given the complexity of the existing multivendor, multiplatform IT ecosystem and the overarching financial regulatory requirements, American Express is taking a variety of approaches to automating and integrating its IT ecosystem—rolled out in phases over time. And while the existing team has taken impressive leaps, the company has also strategically inserted talent who “have seen this movie before.” At the end of the day, the challenge is about fostering a culture and mind-set change—pushing a new vision for IT and revitalizing existing technical and talent assets to drive improved value for customers and to differentiate American Express from the competition.7

Boosting productivity with DevOps

To keep up with exploding demand for cloud services in a marketplace defined by rapid-fire innovation, VMware’s development operations team had become a beehive of activity. In 2014 alone, the company ran five release trains in parallel and brought 150 projects to completion.

Yet, the pace of development was becoming unsustainable. The process was beset by too many handoffs and downtimes for code deployments. Quality assurance (QA) was slowing release momentum. According to Avon Puri, VMware’s vice president of IT, enterprise applications, and platforms, the company’s approach to development—from requirements to release—needed an overhaul. “It was taking us 22 days to get a new operating environment up and running,” he says. “Also, because of our overhead, our projects were becoming increasingly expensive.”

So the company launched a nine-project pilot to explore the possibility of transitioning from traditional development to a DevOps model with a 70-member DevOps team, with the aim of bringing down costs, completing projects more quickly, and streamlining the QA process. The pilot took a three-pronged “people-process-technology” approach, and focused on the following parameters: resource efficiency, application quality, time to market, deployment frequency, and cost savings.

The people transformation component involved organizing key talent into a self-contained DevOps team that would own projects end-to-end, from design to release. To support this team, pilot leaders took steps to break down barriers between departments, processes, and technologies, and to help developers work more effectively with business units in early planning. Notably, they also deployed a multimodal IT working model in which process models and phase gates were aligned to support both traditional and nimble IT teams. On the process front, key initiatives included creating a continuous delivery framework and implementing a new approach to QA that focused more on business assurance than quality assurance, and emphasized automation throughout product testing.

Finally, the pilot’s technology transformation effort involved automating the deployment process using VMware’s own vRealize suite, which helped address “last-mile” integration challenges. Pilot leaders deployed containers and created a software-defined stack across servers, storage, and the network to help reduce deployment complexity and guarantee portability.

Pilot results have been impressive: Resource efficiency improved by 30 percent, and app quality and time to market each improved by 25 percent. Puri says that results like these were made possible by aligning everyone (developers, admins, and others) on one team, and focusing them on a single system. “This is where we get the biggest bang for the buck: We say, ‘Here’s a feature we want, this is what it means,’ someone writes the code, and after QA, we put it into production.”8

Innovation foundry

In downtown Atlanta, a software engineer shares an idea with his fellow team members for improving a product under development. Over the course of the morning, Scrum team members quickly and nimbly work to include the idea in their current sprint to refine and test the viability of the idea, generate code, and publish it to the code branch. Within hours, the enhancement is committed into the build, validated, regression-tested against a full suite of test scenarios, dynamically merged with updates from the rest of the team, and packaged for deployment—all automatically.

This is just another day in the life of iLab, Deloitte Consulting Innovation’s software product development center, which builds commercial-grade, cloud-based, analytics-driven, software products designed to be launched as stand-alone offerings. iLab was born out of a need to industrialize the intellectual property of the core consulting practice through software creation by dedicated product development teams, says Casey Graves, a Deloitte Consulting LLP principal responsible for iLab’s creative, architecture, infrastructure, engineering, and support areas. “Today, our goal is to develop impactful products, based upon our consulting intellectual property, quickly and efficiently. That means putting the talent, tools, and agile processes in place to realize automation’s full potential.”

To support the pace of daily builds and biweekly sprints, iLab has automated process tasks wherever possible to create a nearly seamless process flow. “Because we use agile techniques, we are able to use continuous integration with our products,” Graves says. “Today we still deploy manually, but it is by choice and we see that changing in the near future.”

iLab’s commitment to autonomic platforms has upstream impacts, shaping the design and development of solutions to include hooks and patterns that facilitate automated DevOps. Downstream, it guides the build-out of underlying software-defined networks designed to optimally support product and platform ambitions. Moreover, supporting a truly nimble, agile process requires ensuring that product teams are disciplined in following the processes and have the tools, technologies, and skills they need to achieve project goals and to work effectively on an increasingly automated development platform.9

My take

James Gouin, CTO and transformation executive, AIG

AIG’s motto, “Bring on tomorrow,” speaks to our company’s bedrock belief that by delivering more value to our 90 million insurance customers around the world today, we can help them meet the challenges of the future.

It also means reinventing ourselves in a market being disrupted by new players, new business models, and changing customer expectations. Enablement of end-to-end digital services is everything today. Our customers want products and services fast, simple, and agnostic of device or channel. To deliver that value consistently, we’re working to transform our IT systems and processes. Our goal: to build the flexible infrastructure and platforms needed to deliver anytime, anywhere, on any device, around the globe—and do it quickly.

This is not just any IT modernization initiative; the disruptive forces bearing down on the financial services sector are too fundamental to address with a version upgrade or grab bag of “shiny object” add-ons. Insurance providers, banks, and investment firms currently face growing pressure to transform their business models and offerings, and to engage customers in new ways. This pressure is not coming from within our own industry. Rather, it emanates from digital start-ups innovating relentlessly and, in doing so, creating an entirely new operating model for IT organizations. I think of this as a “west coast” style of IT: building minimally viable products and attacking market niches at speeds that companies with traditional “east coast” IT models dream of.

Adapting a large organization with legacy assets and multiple mainframes in various locations around the globe to this new model is not easy, but it is a “must do” for us. Our goal is to be a mobile-first, cloud-first organization at AIG. To do that, our infrastructure must be rock solid, which is why strengthening the network was so important. We’re investing in a software-defined network to give us flexible infrastructure to deploy anytime and anywhere—region to region, country to country. These investments also introduce delivery capabilities in the cloud, which can help us enter new relationships and confidently define a roadmap for sourcing critical capabilities that are outside of AIG’s direct control.

Everything we’re doing is important for today’s business. But it is also essential in a world where the sensors and connected devices behind the Internet of Things have already begun changing our industry. Telematics is only the beginning. Data from sensors, people, and things (“smart” or otherwise) will give us unprecedented visibility into customer behavior, product performance, and risk. This information can be built into product and pricing models, and used to fuel new offerings. The steps we are taking to automate IT and create a flexible, modern infrastructure will become the building blocks of this new reality.

AIG’s transformation journey is happening against a backdrop of historic change throughout the insurance industry and in insurance IT. Not too long ago, underwriters, actuaries, and IT were part of the back office. Now, IT also operates in the middle and front offices, and has taken a seat in the boardroom. Likewise, the consumerization of technology has made everyone an IT expert; executives, customers, and everyone in between now have extremely high expectations of technology—and of those who manage it within organizations like ours. The bar is high, but at the end of the day everyone is just reinforcing why we’re here: to deliver great products and services for our customers.

Cyber implications

Risk should be a foundational consideration as organizations create the infrastructure required to move workloads seamlessly among traditional on-premises stacks, private cloud platforms, and public cloud services. Much of this infrastructure will likely be software-defined, deeply integrated across components, and potentially provisioned through the cloud. As such, traditional security controls, preventive measures, and compliance initiatives will likely be outmoded because the technology stack they sit on top of was designed as an open, insecure platform.

To create effective autonomic platforms within a software-defined technology stack, key concepts around things like access, logging and monitoring, encryption, and asset management should be reassessed, and, if necessary, enhanced. There are new layers of complexity, new degrees of volatility, and a growing dependence on assets that may not be fully within your control. The risk profile expands as critical infrastructure and sensitive information are distributed to new and different players.

Containers make it possible to apply standards at the logical abstraction layer that can be inherited by downstream solutions. Likewise, incorporating autonomic capabilities within cyber-aware stacks can help organizations respond to threats in real time. For example, the ability to automatically detect malware on your network, dynamically ring-fence it, and then detonate the malware safely (and alert firewalls and monitoring tools to identify and block this malware in the future) could potentially make your organization more secure, vigilant, and resilient.

Revamped cybersecurity components should be designed to be consistent with the broader adoption of real-time DevOps. Autonomic platforms and software-defined infrastructure are often not just about cost reduction and efficiency gains; they can set the stage for more streamlined, responsive IT capabilities, and help address some of today’s more mundane but persistent challenges in security operations.

Incorporating automated cyber services in the orchestration model can free security administrators to focus on more value-added and proactive tasks. On the flip side, incorporating cyber into orchestration can give rise to several challenges around cyber governance:

- Determining what needs to stay on-premises and what doesn’t: Every organization will have unique considerations as it identifies the infrastructure components that can be provisioned from external sources, but for many the best option will likely be a mix based on existing investments, corporate policies, and regulatory compliance. In any scenario, the security solutions should be seamless across on-premises and off-premises solutions to prevent gaps in coverage.

- Addressing localization and data management policies: As systems spread inside and outside corporate walls, IT organizations may need to create a map that illustrates the full data supply chain and potential vulnerabilities. This can help guide the development of policies that determine how all parties in the data supply chain approach data management and security.

Growing numbers of companies are exploring avenues for using virtualization to address these and other challenges while improving security control. At VMware, one approach leverages the emerging microsegmentation trend. “Microsegmentation is about using virtualization as a means to create a virtual data center where all the machines that enable a multi-tiered service can be connected together within a virtual network,” says Tom Corn, senior vice president of security products. “Now you have a construct that allows you to align your controls with what you want to protect.”

Corn adds that with microsegmentation, it is very difficult for an attacker to get from the initial point of entry to high-value assets. “If someone breaks in and has one key, that one key should not be the key to the kingdom; we need to compartmentalize the network such that a breach of one system is not a breach of everything you have.”10
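The "one key is not the key to the kingdom" idea can be sketched as a default-deny allow-list between segments. This is a toy model under stated assumptions, not VMware's implementation: the segment names, ports, and the `is_allowed` helper are hypothetical and chosen only to show the compartmentalization Corn describes.

```python
# Hypothetical allow-list of permitted flows: (source segment, destination segment, port).
ALLOWED_FLOWS = {
    ("web", "app", 8443),
    ("app", "db", 5432),
}


def is_allowed(src: str, dst: str, port: int) -> bool:
    """Default deny: a flow passes only if it is explicitly listed."""
    return (src, dst, port) in ALLOWED_FLOWS


# A compromised web tier can still talk to the app tier on its one permitted port...
web_to_app = is_allowed("web", "app", 8443)
# ...but it has no direct path to the database, and no other ports are open to it.
web_to_db = is_allowed("web", "db", 5432)
web_to_app_ssh = is_allowed("web", "app", 22)

print(web_to_app, web_to_db, web_to_app_ssh)
```

The design choice worth noticing is the direction of the default: nothing is reachable unless a rule says so, which means a breach of one segment exposes only the flows that segment was explicitly granted.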

Where do you start?

Autonomic platforms typically have humble beginnings, often emerging from efforts to modernize pieces of the operating environment or the SDLC. Replacing siloed, inefficient components with siloed, optimized components no doubt has an upside. However, companies may achieve more compelling results by shifting from one-offs to any of the following integrated, orchestrated approaches:

- Option 1: A greenfield build-out of an autonomic ecosystem featuring cloud, software-defined everything, and agile techniques. Existing workloads can be moved to the greenfield over time, while new workloads can start there.

- Option 2: Move to the new autonomic platform world using containers. Simplify the environment and sunset redundant or useless components over time.

- Option 3: Move to the new world application by application, realizing that some applications will never make the journey and will have to die on the vine.

Before we drill deeper into these and other strategies, it is important to note that ongoing budget pressures and rising business expectations don’t lend themselves to far-reaching transformation agendas—especially those targeting IT’s behind-the-scenes trappings. That said, operations continue to dominate global IT budgets, accounting for 57 percent of overall spending, according to findings from Deloitte’s 2015 global CIO survey.11 (Interestingly, only 16 percent of spending targets business innovation.) Addressing inefficiencies inherent to legacy infrastructure and IT delivery models can likely improve operations and reduce costs. That those same investments can also increase agility and business responsiveness may seem too good to be true—a self-funding vehicle to build concrete capabilities for the IT organization of the future.

The following can serve as starting points in the journey to autonomic platforms:

- Containers: Though the hype around the container movement is largely justified, we remain in the early days of adoption. IDC analyst Al Gillen estimates that fewer than one-tenth of 1 percent of enterprise applications are currently running in containers. What’s more, it could be 10 years before the technology reaches mainstream adoption and captures more than 40 percent of the market.12 Consider adopting a twofold strategy for exploration. First, look at open-source and start-up options to push emerging features and standards. Then, tap established vendors as they evolve their platforms and offerings to seamlessly operate in the coming container-driven reality.

- API economy:13 In some modern IT architectures, large systems are being broken down into more manageable pieces known as microservices. These sub-components exist to be reused in new and interesting ways over time.14 For example, organizations may be able to realize autonomic platforms’ full potential more quickly by deconstructing applications into application programming interfaces (APIs)—services that can be invoked by other internal or external systems. These modular, loosely coupled services, which take advantage of self-configuration, self-deployment, and self-healing capabilities much more easily than do behemoth systems with hard-wired dependencies, help reduce complex remediation and the amount of replatforming required for legacy systems to participate in autonomic platforms.

- Cloudy perspective: Cloud solutions will likely play a part in any organization’s autonomic platform initiatives. Increasingly, virtualized environments are being deployed in the cloud: In 2014, 20 percent of virtual machines were delivered through public infrastructure-as-a-service (IaaS) providers.15 However, don’t confuse the means with the end. Ultimately, cloud offerings represent a deployment option, which may or may not be appropriate based on the workload in question. Price per requisite performance (factoring in long-term implications for ongoing growth, maintenance, and dependencies) should drive your decision of whether to deploy a public, private, or hybrid cloud option or embrace an on-premises option based on more traditional technologies.

- Robotics: While much of the broader robotics dialogue focuses on advanced robotics (drones, autonomous transportation, and exoskeletons), progress is also being made in the realm of virtualized workforces. RPA, cognitive agents, and other autonomic solutions are advancing in both IT operations and business process outsourcing. Their promise encompasses more than efficiency gains in mundane tasks such as entering data. Indeed, the most exciting opportunities can be found in higher-order, higher-value applications, such as virtual infrastructure engineers that can proactively monitor, triage, and heal the stack, or virtual loan specialists that help customers fill out mortgage applications.
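Returning to the cloud starting point above, the "price per requisite performance" test can be made concrete with a small worked comparison. Everything in this sketch is an assumption for illustration: the `Option` fields, the cost figures, the throughput numbers, and the planning horizons are invented and do not come from the survey data cited in this article. The point is only that the answer can flip once long-term costs are factored in.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Option:
    """Hypothetical deployment option with made-up cost and capacity figures."""
    name: str
    upfront_cost: float    # one-time spend
    monthly_cost: float    # recurring spend, including maintenance
    throughput: float      # sustained units of work per second


def total_cost(option: Option, months: int) -> float:
    """Cost of ownership over the planning horizon."""
    return option.upfront_cost + option.monthly_cost * months


def cheapest_adequate(options: list[Option], required: float, months: int) -> Option | None:
    """Among options that meet the required performance, pick the lowest total cost."""
    adequate = [o for o in options if o.throughput >= required]
    if not adequate:
        return None
    return min(adequate, key=lambda o: total_cost(o, months))


options = [
    Option("public cloud", upfront_cost=0, monthly_cost=9_000, throughput=1_200),
    Option("private cloud", upfront_cost=120_000, monthly_cost=4_000, throughput=1_100),
    Option("on-premises", upfront_cost=200_000, monthly_cost=3_000, throughput=800),
]

short_term = cheapest_adequate(options, required=1_000, months=12)
long_term = cheapest_adequate(options, required=1_000, months=36)
print(short_term.name, long_term.name)
```

With these invented numbers, the on-premises option is excluded because it misses the required throughput, the public cloud wins over 12 months (108,000 versus 168,000), and the private cloud wins over 36 months (264,000 versus 324,000).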

Bottom line

IT has historically underinvested in the tools and processes it needs to practice its craft. Technology advances in underlying infrastructure now offer IT an opportunity to reinvent itself with revamped tools and approaches for managing the life cycle of IT department capabilities. By deploying autonomic platforms, IT can eliminate waste from its balance sheet while shifting its focus from low-level tasks to the strategic pillars of business performance.
