
Reimagining core systems: Modernizing the heart of the business

25 February 2016

Accelerating digital transformation and emerging technologies such as sensors, connected devices, blockchain, and cognitive computing are making it a strategic imperative to revitalize legacy core systems and unlock untapped value.

Core systems that drive back, mid, and front offices are often decades old, comprising everything from the custom systems built to run the financial services industry in the 1970s to the ERP reengineering wave of the 1990s. Today, many roads to digital innovation lead through these “heart of the business” applications. For this reason, organizations are now developing strategies for reimagining their core systems that involve re-platforming, modernizing, and revitalizing them. Transforming the bedrock of the IT footprint to be agile, intuitive, and responsive can help meet business needs today, while laying the foundation for tomorrow.


IT’s legacy is intertwined with the core systems that often bear the same name. In a way, the modern IT department’s raison d’être can be traced to the origins of what is now dubbed “legacy”—those heart-of-the-business, foundation-of-the-mission systems that run back-, mid-, and front-office processes. Some include large-scale custom solutions whose reach and complexity have sprawled over the decades. Others have undergone ERP transformation programs designed to customize and extend their capabilities to meet specific business needs. Unfortunately, the net result of such efforts is often a tangle of complexity and dependency that is daunting to try to comprehend, much less unwind.

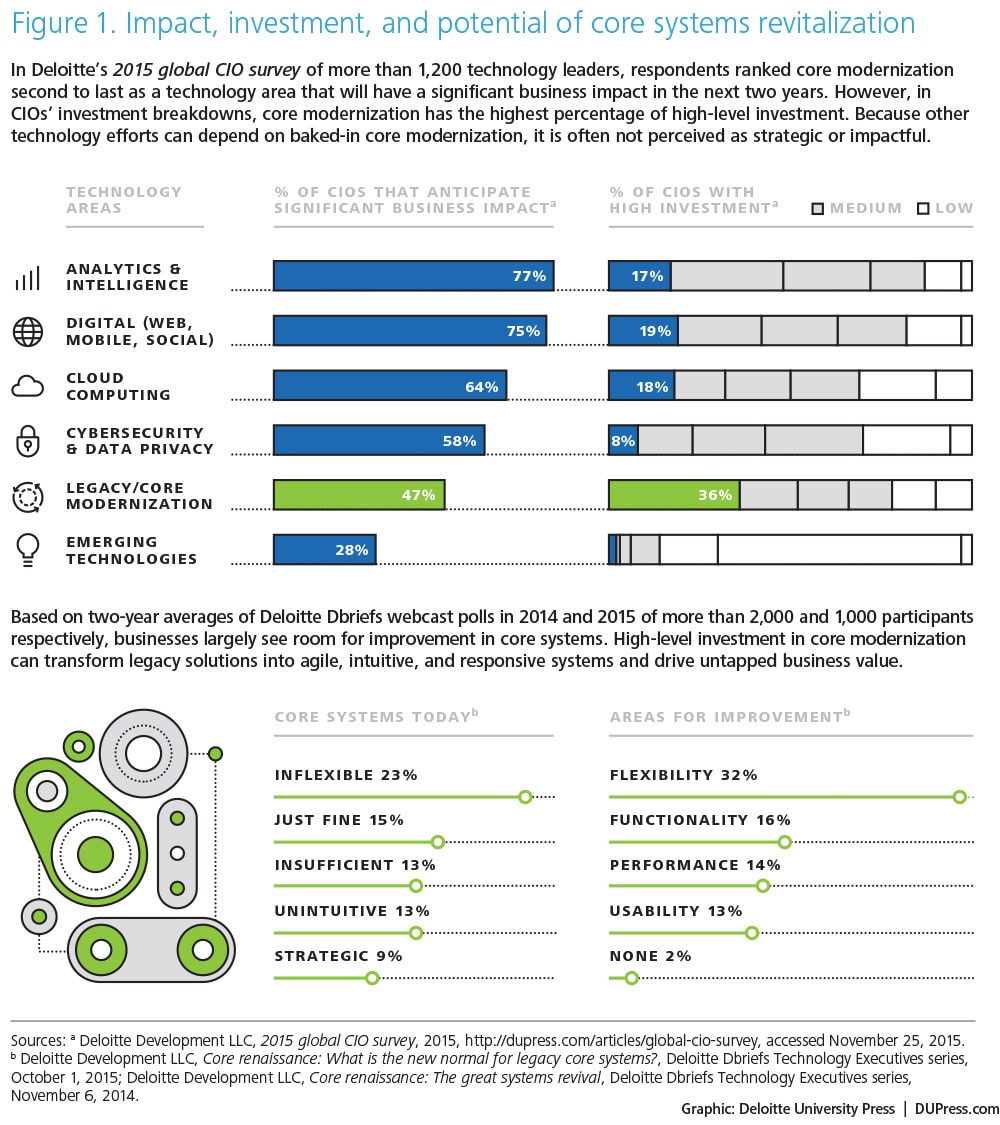

Meanwhile, core upkeep and legacy modernization lay claim to inordinate amounts of IT budget. Deloitte’s 2015 global CIO survey1 found that core-related expenditures are the single biggest line item in IT investment dollars. This leads to an internal PR problem for the core: High cost is seen as low value. When asked to rank the technology investments they think will have significant impacts on their business, survey respondents cited analytics, digital, and cloud.

Yet it is easy to overlook the fact that these and other emerging technologies depend heavily on the underlying data and processes the core enables.

Broad systems transformation involves bringing together sales, operations, supply chain, and service information to redefine underlying processes and inform new products, services, and offerings. As such, efforts to reimagine core systems can form the foundation upon which higher-order, “shinier” initiatives are built. Reimagining the core can also help companies establish a modern baseline for improved efficiency, efficacy, and results. With this in mind, leading organizations are evolving their systems roadmaps to approach the core not as an anchor, but as a set of customer-focused, outcome-driven building blocks that can support the business in the digital age and beyond.

The time is now

Technology change and innovation continue to accelerate at an exponential pace, offering ripe opportunities to rewire the way we work and rewrite the rules of engagement. The rate at which data is produced by devices, sensors, and people is accelerating—and that data is being harnessed in real time by advanced predictive and prescriptive analytics to expertly guide business decisions once ruled by instinct and intuition. Digital has unlocked new ways of engaging customers and empowering employees—not just making it possible for old jobs to be done differently, but also creating fundamentally new and different patterns of interaction. Mobile and tablet adoption served as a harbinger of wearables, the Internet of Things, and now augmented and virtual reality. Competitive dynamics are also driving change. Start-ups and companies without strong ties to legacy systems will find themselves increasingly able to innovate in service delivery, unhindered by decades of technical debt and legacy decisions.

At the same time, significant external forces are redrawing core roadmaps. ERP vendors have invested heavily in next-generation offerings and are aggressively positioning their modernized platforms as the answer to many enterprise system challenges. Cloud players are offering a rapidly growing catalog of back- and mid-office solutions. Meanwhile, expertise in COBOL and other mainframe programming languages is growing scarce as aging programmers retire—a situation that weighs heavily in more and more replatforming decisions.2 Against this backdrop, a steady drumbeat of hype touting emerging technologies casts legacy as a four-letter word in the minds of C-suite executives and line-of-business leaders.

All of these factors make it more important than ever for CIOs to define a deliberate core strategy based on the needs and goals of the business. Start with existing business processes. Does the core IT stack help or hinder users with their daily tasks across departments, processes, and workloads? How is the strategy of each function evolving, and what impact will those strategies have on the core? How is the broader business positioning itself for growth? What are the key constraints and enablers for business growth today? Implications for the core will no doubt follow, but specific needs will differ depending on plans for organic growth in existing markets, business model innovation, mergers, acquisitions, and divestitures, among others.

Next, examine technology-driven factors by putting their potential impacts in a business context. For example, translate abstract technical debt concerns into measurable business risks. Express scalability limits as inhibitors to growth, such as caps on the number of customers, orders, or payments that can be transacted. Translate reliability concerns into lost revenue or penalties for missing SLAs due to outages or service disruptions. Immature integration and data disciplines can have concrete impacts in terms of project delays—and the extent to which they may hobble digital, analytics, and cloud efforts.
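To make this translation concrete, the following is a minimal sketch of the arithmetic involved. All inputs (outage hours, revenue per hour, SLA allowance, penalty rate, transaction capacity, and growth rate) are hypothetical placeholders rather than figures from any real system, and the model deliberately ignores second-order effects such as customer churn or reputational damage.

```python
import math
from dataclasses import dataclass


@dataclass
class ReliabilityInputs:
    """Hypothetical annual figures for one core system."""
    outage_hours: float             # unplanned downtime per year
    revenue_per_hour: float         # revenue that flows through the system each hour
    sla_allowed_hours: float        # downtime permitted before SLA penalties start
    penalty_per_excess_hour: float  # penalty owed for each hour beyond the allowance


def reliability_cost(r: ReliabilityInputs) -> dict:
    """Express outage risk as lost revenue plus SLA penalties."""
    lost_revenue = r.outage_hours * r.revenue_per_hour
    excess_hours = max(0.0, r.outage_hours - r.sla_allowed_hours)
    penalties = excess_hours * r.penalty_per_excess_hour
    return {
        "lost_revenue": lost_revenue,
        "sla_penalties": penalties,
        "total": lost_revenue + penalties,
    }


def years_until_capacity_limit(current_volume: float, max_volume: float,
                               annual_growth: float) -> float:
    """Express a scalability ceiling as years of growth remaining.

    Assumes transaction volume compounds at a constant annual growth rate.
    """
    if current_volume >= max_volume:
        return 0.0  # already at or beyond the ceiling
    if annual_growth <= 0:
        return math.inf  # volume never reaches the ceiling
    return math.log(max_volume / current_volume) / math.log(1 + annual_growth)


if __name__ == "__main__":
    cost = reliability_cost(ReliabilityInputs(
        outage_hours=20, revenue_per_hour=50_000,
        sla_allowed_hours=8, penalty_per_excess_hour=10_000))
    print(cost)
    print(round(years_until_capacity_limit(1_000_000, 2_000_000, 0.10), 1))
```

With these illustrative inputs, 20 hours of downtime against an 8-hour allowance works out to 1.12 million in combined lost revenue and penalties, and a system running at half its transaction ceiling with 10 percent annual growth reaches that ceiling in a little over seven years. Figures like these are easier to weigh against modernization costs than abstract statements about technical debt.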

Go big, go bold

Approach reimagining the core with a transformational lens—it is, after all, a chance to modernize much more than the underlying technology. Challenge the business to imagine how functions and processes should and could operate based on today’s realities, not yesterday’s constraints. How is digital eliminating physical location constraints in your business? What if complex analysis could be deployed across all of your organization’s data in an instant? Where are business ecosystems blurring or obliterating lines between competitors, partners, and customers? How is cloud offering a different way of procuring, building, integrating, and assembling services into systems? Core modernization can provide a path toward much more than reengineering; it may ultimately help the business reinvent itself.

Reimagining the core could also involve more modest ambitions. For example, for some core challenges, rote refactoring without enhancing capabilities, or making technical upgrades to underlying platforms without improving process scope or performance, may be the appropriate response. Tactical execution can be an option, but only after thoughtful consideration; it should not be undertaken simply because it represents the path of least resistance. Regardless of direction, define an explicit strategy, create a roadmap based on a series of manageable intermediate investments, and build a new IT legacy by reimagining core systems.

The five Rs

Roadmaps for reimagining the core should reflect business imperatives and technical realities, balancing business priorities, opportunities, and implementation complexity. Approaches may range from wholesale transformational efforts to incremental improvements tacked on to traditional budgets and projects. But they will likely involve one or more of the following categories of activities:

- Replatform: Modernize platforms through technical upgrades, updates to the latest software releases, migration to modern operating environments (virtualized environments, cloud platforms, appliance/engineered systems, in-memory databases, or others), or porting of code bases. Unfortunately, these efforts are rarely “lift and shift” and require analysis and tailored handling of each specific workload.

- Revitalize: Layer on new capabilities to enhance stable underlying core processes and data. Efforts typically center on usability—either new digital front-ends to improve customer and employee engagement, or visualization suites to fuel data discovery and analysis. Analytics is another common area of focus—introducing data lakes or cognitive techniques to better meet descriptive reporting needs and to add predictive and prescriptive capabilities.

- Remediate: Address internal complexities of existing core implementations. This could involve instance consolidation and master data reconciliation to simplify business processes and introduce single views of data on customers, products, the chart of accounts, or other critical domains. Another likely candidate is integration, which aims to make transaction and other business data available as APIs to systems outside of the core and potentially to partners, independent software vendors, or customers for usage outside of the organization. These new interfaces can drive digital solutions, improve the reach of cloud investments, and simplify the ongoing care and maintenance of core systems. Finally, remediation may mean rationalizing custom extensions to packages or simplifying the underlying code of bespoke solutions.

- Replace: Introduce new solutions for parts of the core. This may mean adopting new products from existing vendor partners. In some industries, it may involve revisiting “build” versus “buy” decisions, as new entrants may have introduced packages or cloud services performing core processes that previously required large-scale custom-built solutions. Ideally, organizations will use these pivots to revisit the business’s needs, building new capabilities that reflect how work should get done, not simply replicating how work used to get done on the old systems.

- Retrench: Do nothing—which can be strategic as long as it is an intentional choice. “Good enough” may be more than enough, especially for non-differentiated parts of the business. Weigh the risks, understand the repercussions, inform stakeholders, and focus on higher-impact priorities.
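The integration idea under Remediate, making core transaction data available to systems outside the core, often starts with a thin translation layer that reads legacy records and returns a modern payload. The sketch below assumes a hypothetical fixed-width record layout of the kind a COBOL copybook might describe; the field names, offsets, and status codes are invented for illustration, and a production service would also need authentication, error handling, and an HTTP or messaging front end.

```python
import json
from decimal import Decimal
from typing import List, Optional

# Hypothetical fixed-width layout: (field, start offset, length).
LAYOUT = [
    ("account_id", 0, 8),
    ("customer_name", 8, 20),
    ("balance_cents", 28, 10),
    ("status_code", 38, 1),
]
RECORD_LENGTH = 39

# Hypothetical single-character status codes used by the legacy system.
STATUS = {"A": "active", "C": "closed", "S": "suspended"}


def parse_record(line: str) -> dict:
    """Translate one fixed-width legacy record into an API-friendly dict."""
    if len(line) != RECORD_LENGTH:
        raise ValueError(f"expected {RECORD_LENGTH} characters, got {len(line)}")
    raw = {name: line[start:start + length] for name, start, length in LAYOUT}
    return {
        "accountId": raw["account_id"].strip(),
        "customerName": raw["customer_name"].strip(),
        # Balance is stored as whole cents; shift two decimal places exactly.
        "balance": str(Decimal(int(raw["balance_cents"])).scaleb(-2)),
        "status": STATUS.get(raw["status_code"], "unknown"),
    }


def get_account_json(account_id: str, legacy_lines: List[str]) -> Optional[str]:
    """Look up one account in a legacy extract and return it as JSON, or None."""
    for line in legacy_lines:
        if line[0:8].strip() == account_id:
            return json.dumps(parse_record(line))
    return None


if __name__ == "__main__":
    sample = "ACCT0001" + "JANE DOE".ljust(20) + "0000012345" + "A"
    print(get_account_json("ACCT0001", [sample]))
```

Keeping the layout in a single table lets the translation be reviewed line by line against the original record definition, and using `Decimal` rather than floating point avoids rounding errors on monetary values.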

Lessons from the front lines

Driving core transformation

The Texas Department of Motor Vehicles (TxDMV) had a problem. The legacy mainframe system it used to register almost 24 million vehicles annually3 was becoming, as they say in Texas, a bit long in the tooth. The registration and title component, built on an ADABAS/Natural database, had been online since 1994. Its COBOL-based vehicle identification system had been active for almost as long. Other components were, at best, vintage.

After years of heavy use, these systems were becoming costly and challenging to maintain. Their lack of agility impeded significant business process improvements. On the data front, users struggled to generate ad hoc reports. The kind of advanced analytics capabilities the agency needed to guide its strategy and operations were nonexistent. What’s more, the entire TxDMV system had become vulnerable to maintenance disruption: If IT talent with the increasingly rare skills needed to maintain these legacy technologies left the organization, it would be difficult to replace them.

“It was as if our system was driving our processes rather than the other way around,” says Whitney Brewster, TxDMV’s executive director. “We realized that, in order to make the changes needed to serve our customers better, we were going to need a more agile system.”

In 2014, TxDMV launched a core modernization initiative designed to update critical systems, address technical debt, and help extract more value from long-standing IT investments by making them more agile. Because the agency didn’t have the budget to “rip and replace” existing infrastructure, its IT leaders took a different approach. Using a set of specialized tools, they were able to automatically refactor the code base to a new Java-based platform that featured revamped relational data models.

This approach offered a number of advantages. It allowed TxDMV to transition its legacy ADABAS, Natural, COBOL, and VSAM applications to a more modern application platform more quickly, with higher quality, and at lower risk than it would have been able to do with traditional reengineering. End-user impacts were minimized because the refactored system retained the functional behavior of the legacy app while running on a stable, modern technology platform. According to TxDMV CIO Eric Obermier, relatively little code remediation was required. “We had to rewrite a couple of particularly complex modules so the refactoring engine could handle them, but for the most part, our efforts were focused on creating test cases and validating interfaces.”

Thus far, TxDMV’s efforts to reimagine its core systems have borne welcome fruit. End users can now develop and filter structured reports in a modern format. Moreover, the platform provides a stable base for adding more functionality in the future. “Our next step is to modernize our business processes and increase end-user engagement to help drive the future of the registration and titling system. Given how much we’ve learned already, those, along with future projects, will go much more smoothly,” says Brewster. “This has been a real confidence-builder for our entire team.”4

The art of self-disruption

In the highly competitive digital payments industry, warp-speed innovation and rapidly evolving customer expectations can quickly render leading-edge solutions and the core systems that support them obsolete.

To maintain a competitive edge in this environment, PayPal, which in 2014 processed 4 billion digital payments,5 has committed to a strategy of continuous self-disruption. Over the last few years, the company has undertaken several major initiatives in its development processes, infrastructure, and core systems and operations.

One of the company’s more significant undertakings involved transitioning from a waterfall to an agile delivery methodology, an effort that required retraining 510 development teams spread across multiple locations in a matter of months.6 The revamped approach applied both to new digital platforms and to legacy system enhancements. Though embracing agile was a needed change, PayPal knew the impact might be diminished if existing infrastructure could not become more nimble as well. So the company decided to re-architect its back-end systems, including transitioning its existing data center infrastructure into a private cloud using OpenStack cloud management software. The net result of deploying agile delivery and operating with a revitalized infrastructure was accelerated product cycles that provide scalable applications featuring up to 40 percent fewer lines of code.7

Additionally, over the last few years, PayPal has acquired several mobile payment technology vendors whose innovations not only fill gaps in PayPal’s portfolio of services, but also drive beneficial disruption within its core systems and operations. In 2013, PayPal acquired Chicago-based Braintree, whose Venmo shared payments products could potentially boost PayPal’s presence in the mushrooming peer-to-peer (P2P) transaction space.8 In July 2015, the company announced the acquisition of Xoom, a digital money transfer provider, in order to grow its presence in the international transfers and remittances market.9

With a multifaceted approach to modernizing its systems and processes, PayPal has revitalized its existing platform, added new service capabilities, and transformed itself into a broad-reaching “payment OS for all transactions.”10 This approach will certainly be tested as new firms enter the financial services technology sector, each aiming to disrupt even the most innovative incumbents.

All aboard! Amtrak reimagines its core reservation system

What began in 2011 as a channel-by-channel effort to modernize Amtrak’s legacy reservation system has evolved into what is now a major customer experience transformation initiative grounded in core revitalization.

Dubbed “EPIC,” this initiative is creating an enterprise platform for new capabilities that can deliver a seamless and personalized customer experience across Amtrak.com, mobile, call centers, in-station kiosks, and third-party touchpoints. From a business perspective, the EPIC platform will enable Amtrak to respond to changing market conditions and customer needs more quickly, offer customers a fully integrated travel service rather than just a train ticket, and support distribution partners in ways that currently overload its systems.

The EPIC transformation is built on top of ARROW, the system at the heart of Amtrak’s pricing, scheduling, and ticketing. Built 40 years ago, ARROW provides the foundation for processing transactions according to Amtrak’s complex business rules. Instead of ripping out legacy core systems, Amtrak is modernizing them to enable new digital capabilities, which makes it possible for Amtrak to maintain operational stability throughout the transformation effort.

To achieve EPIC’s full potential, Amtrak must first address a host of system challenges within ARROW. The ARROW system maintains reservation inventory and provides data to other Amtrak systems for accounting, billing, and analysis. Over the years, Amtrak IT has added useful custom capabilities, including automatic pricing, low-fare finders, and real-time verification of credit and debit cards.11 But the customization didn’t stop there, says Debbi Stone-Wulf, Amtrak’s vice president of sales, distribution, and customer service. “There are a lot of good things about ARROW, but there are items we need to address. We’ve hard-coded business rules that don’t belong there and added many point-to-point interfaces over the years as well. Changes take time. Our plan is to keep the good and get rid of the bad.”

In anticipation of a 2017 rollout, IT and development staff are working to design EPIC’s architecture and interfaces, and map out a training plan for business and front-end users. The solution includes a new services layer that exposes ARROW’s core capabilities and integrations to both local systems and cloud platforms. Amtrak is also looking for opportunities to leverage the EPIC platform in other parts of the organization, including, for example, bag tracking, which is currently a largely manual process.

“This project has helped us look at modern technology for our enterprise platform and implement it more seamlessly across legacy and new systems,” says Stone-Wulf. “It has also helped us renew our focus on customers and on what Amtrak is delivering. In the past we would ask, ‘How does it help Amtrak?’ Now we ask, ‘How does it help our customers?’”12

My take

Karenann Terrell, executive vice president and CIO, Wal-Mart Stores Inc.

As CIO of Wal-Mart Stores Inc., my top priority is building and maintaining the technology backbone required to meet Wal-Mart’s commitment to its customers, both today and in the future. Given our company’s sheer size—we operate 11,500 stores in 28 countries—scale is a critical use case for every technology decision we make. And this burgeoning need for scale shows no sign of abating: In 2015, our net sales topped $482 billion; walmart.com receives upward of 45 million visits monthly. As for our systems, there are probably more MIPS (million instructions per second) on our floor than any place on Earth. We’ve built a core landscape that combines custom-built solutions, packaged solutions we’ve bought and adapted, and services we subscribe to and use.

All of this makes the IT transformation effort we have undertaken truly historic in both complexity and reach. Over the last few years, technological innovation has disrupted both our customers’ expectations and those of our partners and employees. We determined that to meet this challenge, we would modernize our systems to better serve Wal-Mart’s core business capabilities: supply chain, merchandising, store systems, point-of-sale, e-commerce, finance, and HR. As such, in all of these areas, we are working to create greater efficiencies and achieve higher levels of speed and adaptability.

From the beginning, three foundational principles have guided our efforts. First, we have grounded this project in the basic assumption that every capability we develop should drive an evergreen process. As technological innovation disrupts business models and drives customer expectations, modernization should be a new way of working, not an all-consuming, point-in-time event. Our goal has been to embed scope into the roadmaps of each of our domain areas as quickly as possible to accommodate immediate and future needs. If we’re successful, we will never have to take on another focused modernization program in my lifetime.

Second, in building our case for taking on such a far-reaching project now, we knew our ideas would gain traction more quickly if we grounded them in the business’s own culture of prioritization. By focusing on enhanced functional capabilities, our efforts to reimagine the underlying technology became naturally aligned with the needs of the business. Within the larger conversation about meeting growing needs in our function areas, we deliberately avoided distinguishing or calling out the scope of modernization efforts. This approach has helped us build support across the enterprise and make sure modernization isn’t viewed as something easily or quickly achieved.

Finally, we created strong architectural guidelines for our modernization journey, informed by the following tenets:

- Get started on no-brainer improvements—areas in which we have no regrets, with an immediate mandate to take action and do it! For example, we had applications with kernels built around unsupported operating systems. We just had to get rid of them.

- Build out services-based platforms, aligned around customer, supply chain, and our other functional domains. Our “classic” footprint (a term I prefer to “legacy,” which seems to put a negative emphasis on our most strategic systems) required decisions around modernization versus transition. We ended up with several platforms because of our scale and complexity—unfortunately, one single platform just isn’t technologically possible. But we are building micro-services, then macro services, and, eventually, entire service catalogs.

- Create a single data fabric to connect the discrete platforms. The information generated across all touchpoints is Wal-Mart’s oil—it provides a single, powerful view of customers, products, suppliers, and other operations, informing decision-making and operational efficiency while serving as the building block for new analytics products and offerings.

Is there a hard-and-fast calculus for determining which approach to take for reimagining core systems? Only to fiercely keep an eye on value. Our IT strategy is not (and will never be) about reacting to the technology trend of the moment. I’m sometimes asked, “Why don’t you move everything to the cloud?” My answer is because there’s nothing wrong with mainframes; they will likely always play a part in our solution stack. Moreover, there’s not enough dark fiber in the world to meet our transaction volumes and performance needs.

Business needs drive our embrace of big data, advanced analytics, mobile, and other emerging domains; we never put technology first in this equation. With that in mind, we’re positioning our technology assets to be the foundation for innovation and growth, without compromising our existing core business, and without sacrificing our core assets. I’m confident we’re on the right path.

Cyber implications

Revitalizing core IT assets can introduce new cyber risks by exposing existing vulnerabilities or adding new weaknesses that could be exploited. Technical debt in nonstandard or aging assets that have not been properly maintained, along with legacy platforms that are granted exceptions or allowed to persist without appropriate protections, lets threats that could otherwise be reasonably mitigated linger and expands risk.

Accordingly, efforts to reimagine the core can introduce both risk and opportunity. On the risk front, remediation efforts may add new points of attack with interfaces that inadvertently introduce issues or raise the exposure of long-standing weaknesses. Similarly, repurposing existing services can also create vulnerabilities when new usage scenarios extend beyond historical trust zones.

Yet, reimagining the core also presents opportunities to take stock of existing vulnerabilities and craft new cyber strategies that address the security, control, and privacy challenges in revitalized hybrid—or even cloud-only—environments. Companies can use core-focused initiatives as opportunities to shore up cyber vulnerabilities; insert forward-looking hooks around identity, access, asset, and entitlement management; and create reusable solutions for regulatory and compliance reporting.

We’re moving beyond misperceptions about cloud vulnerabilities and the supposedly universal advantages of direct ownership in managing risk. Some companies operating legacy IT environments have mistakenly assumed that on-premises systems are inherently more secure than cloud-based or hybrid systems. Others have, in effect, decoupled security from major core systems or relied upon basic defensive capabilities that come with software packages. Core revitalization initiatives offer companies an opportunity to design security as a fundamental part of their new technology environments. This can mean major surgery, but in the current risk and regulatory environments, many organizations may have no choice but to proceed. Doing so as part of a larger core renaissance initiative can help make the surgery less painful.

Moreover, deploying network, data, and app configuration analysis tools during core renaissance discovery, analysis, and migration may also provide CIOs with greater insight into the architectural and systemic sprawl of legacy stacks. This, in turn, can help them fine-tune their core renaissance initiatives and their cyber risk management strategies.

Revitalizing core systems; deploying new analytics tools; performing open-heart surgery—where will CIOs find the budget for efforts that many agree are now critical? Core renaissance can help on this front as well. Revitalizing and streamlining the top and bottom layers of legacy stacks may provide cost savings as efficiencies increase and “care and feeding” costs are reduced. Budget and talent resources can then be directed toward transforming security systems and processes. IT solutions increasingly focus either on built-in security and privacy features or on providing easy integration with third-party services that can address security and privacy needs. It is imperative that risk be integrated “by design” rather than bolted on as an afterthought.

Approaches for addressing vulnerabilities will vary by company and industry, but many are crafted around the fundamental strategy of identifying cyber “beacons” that are likely to attract threats, and then applying the resources needed to protect those assets that are more valuable or that could cause significant damage if compromised. As last year’s cyber-attacks have shown, customer, financial, and employee data fit these risk categories.13 In addition to securing data and other assets, companies should also implement the tools and systems needed to monitor emerging threats associated with cyber beacons and move promptly to make necessary changes.
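One common way to operationalize this kind of prioritization is a simple likelihood-times-impact score, borrowed from conventional risk matrices rather than prescribed by the approach described here. In the sketch below, the asset names, the 1-to-5 scales, and the cutoff of 12 are all hypothetical choices; real programs would calibrate them against threat intelligence and business impact analysis.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Asset:
    name: str
    attractiveness: int  # hypothetical 1-5 rating: how likely it is to attract threats
    impact: int          # hypothetical 1-5 rating: damage if compromised

    @property
    def risk_score(self) -> int:
        # Conventional risk-matrix score: likelihood multiplied by impact.
        return self.attractiveness * self.impact


def prioritize(assets: Iterable[Asset], threshold: int = 12) -> List[Asset]:
    """Return assets at or above the threshold, highest risk first."""
    assets = list(assets)
    for a in assets:
        if not (1 <= a.attractiveness <= 5 and 1 <= a.impact <= 5):
            raise ValueError(f"ratings for {a.name!r} must be between 1 and 5")
    ranked = sorted(assets, key=lambda a: a.risk_score, reverse=True)
    return [a for a in ranked if a.risk_score >= threshold]


if __name__ == "__main__":
    inventory = [
        Asset("customer records", 5, 5),
        Asset("cafeteria menu", 1, 1),
        Asset("payroll data", 4, 5),
        Asset("general ledger", 4, 4),
    ]
    for asset in prioritize(inventory):
        print(asset.name, asset.risk_score)
```

The output is only a starting list of likely beacons; the monitoring and prompt follow-up the paragraph above calls for still depend on revisiting these ratings as threats change.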

In the world of security and privacy there are no impregnable defenses. There are, however, strategies, tactics, and supporting tools that companies can utilize to become more secure, vigilant, and resilient in managing cyber risk. Efforts to reimagine the core are an opportunity to begin putting those ramparts in place.

Where do you start?

Core modernization is already on many IT leaders’ radar. In a recent Forrester survey of software decision makers, 74 percent listed updating/modernizing key legacy applications as critical or high priority.14 The challenge many of these leaders face will be to move from an acknowledged need to an actionable plan with a supporting business case and roadmap. Reimagining the core could involve gearing up for a sustained campaign of massive scope and possible risk, potentially one that business leaders might not be able to understand or be willing to fully support.

Yet, the fact is that CIOs no longer have a choice. In the same way that the core drives the business, it also drives the IT agenda. Consider this: What if that same legacy footprint could become the foundation for innovation and growth and help fuel broader transformation efforts?

The following considerations may help bring this vision more clearly into focus:

- It’s a business decision: There should be a clear business case for modernizing core systems. Historically, business case analysis has tended to focus primarily on cost avoidance, thus rendering many proposed initiatives uneconomical. It’s hard to justify rewriting a poorly understood, complex core legacy application based only on the prospect of avoiding costs. However, when companies frame the business case in terms of lost business opportunities and lack of agility, the real costs of technical debt become more apparent. Even then, however, it is important to be realistic when projecting the extent of hidden complexity, and how much work and budget will be required to meet the challenges that surround this complexity.

- Tools for the trade: Until recently, the process of moving off ERP customizations and rewriting millions of lines of custom logic has been resource-intensive and issue-prone to the point of being cost-prohibitive. Why migrate that old COBOL application when it’s cheaper to train a few folks to maintain it? Increasingly, however, new technologies are making core modernization much more affordable. For example, conversion technologies can achieve close to 100 percent automated conversion of old mainframe programs to Java and modern scripting languages. New tools are being developed to automate the scanning and analysis of ERP customizations, which allows engineers and others to focus their efforts on value-added tasks such as reinventing business processes and user interactions.

- Shoes for the cobbler’s children: Leading CIOs caution against approaching core modernization as a project with a beginning and an end. Instead, consider anchoring efforts in a broader programmatic agenda.15 Keep in mind that core modernization efforts shouldn’t be limited to data and applications—they should also revisit underlying infrastructure. As IT workloads migrate to higher levels of abstraction, the infrastructure will require deep analysis. Shifting simultaneously to software-defined environments and autonomic platforms16 can amplify application and data efforts. Similarly, higher-level IT organization, delivery, and interaction models will need to evolve along with the refreshed core. Consider undertaking parts of the right-speed IT model17 in conjunction with core modernization efforts.

- Honor thy legacy: Reimagining the core has everything to do with legacy. That legacy is entangled in a history of investment decisions, long hours, and careers across the organization. A portion of your workforce’s job history (not to mention job security) is embedded in the existing footprint. As such, decisions concerning the core can be fraught with emotional and political baggage. As you reimagine core systems, respect your company’s technology heritage without becoming beholden to it. Sidestep subjective debates by focusing on fact-based, data-driven discussions about pressing business needs.

Bottom line

Legacy core systems lie at the heart of the business. In an age when every company is a technology company, these venerable assets can continue to provide a strong foundation for the critical systems and processes upon which growth agendas are increasingly built. But to help core systems fulfill this latest mission, organizations should take steps to modernize the underlying technology stack, making needed investments grounded in outcomes and business strategy. By reimagining the core in this way, companies can extract more value from long-term assets while reinventing the business for the digital age.

Deloitte Consulting LLP’s Technology Consulting practice is dedicated to helping our clients build tomorrow by solving today’s complex business problems involving strategy, procurement, design, delivery, and assurance of technology solutions. Our service areas include analytics and information management, delivery, cyber risk services, and technical strategy and architecture, as well as the spectrum of digital strategy, design, and development services offered by Deloitte Digital. Learn more about our Technology Consulting practice on www.deloitte.com.