Military readiness through AI: How technology advances help speed up our defense readiness


24 April 2019

Today's military forces need more than ammunition to win battles. When evaluating resources, leaders need to leverage the latest technology tools—artificial intelligence and digital information—to make the best decisions first.

In July 1950, a small group of American soldiers called Task Force Smith was all that stood in the way of an advance of North Korean armor. The soldiers’ only anti-armor weapons were bazookas left over from World War II. The soldiers of Task Force Smith quickly found themselves firing round after round of bazooka ammunition into advancing North Korean T-34s, only to see the rounds explode harmlessly on the heavily armored tanks. Within seven hours, 40 percent of Task Force Smith were killed or wounded, and the North Korean advance rolled on.1

The shortcomings of the bazooka were no surprise. However, budget cutbacks after World War II scuttled adoption of an improved design. So when, in 1950, waves of North Korean troops pushed down the Korean Peninsula, US troops were armed only with the older, less effective WWII-vintage bazooka, a weapon they knew could not compete on the battlefield.

The lesson is that overlooking peacetime innovation often comes at the cost of wartime casualties. While today the United States may have an edge in bullets and rockets, any advantage in critical areas such as artificial intelligence (AI) and processing of digital information may be quickly eroding. With wars won and lost based on making the right decisions first, AI and information processing tools may be just as critical to victory as ammunition. In fact, some senior military leaders think that AI will be more important to great power competition than military power itself.2 The military needs a strong plan now if it does not want to find itself shooting useless algorithms at its most challenging problems tomorrow.

Readiness—a keystone challenge for AI

Assessing readiness informs or draws upon nearly every aspect of military decision-making, from tactical operations to force structure to budgeting. Making readiness assessments and decisions effectively also requires huge volumes of diverse data from many different sources. Large data volumes, diverse sources of information, complex interactions, and the need for speed and accuracy make readiness a problem tailor-made for AI to tackle. And if AI can help tackle readiness, it can help the military tackle just about anything.

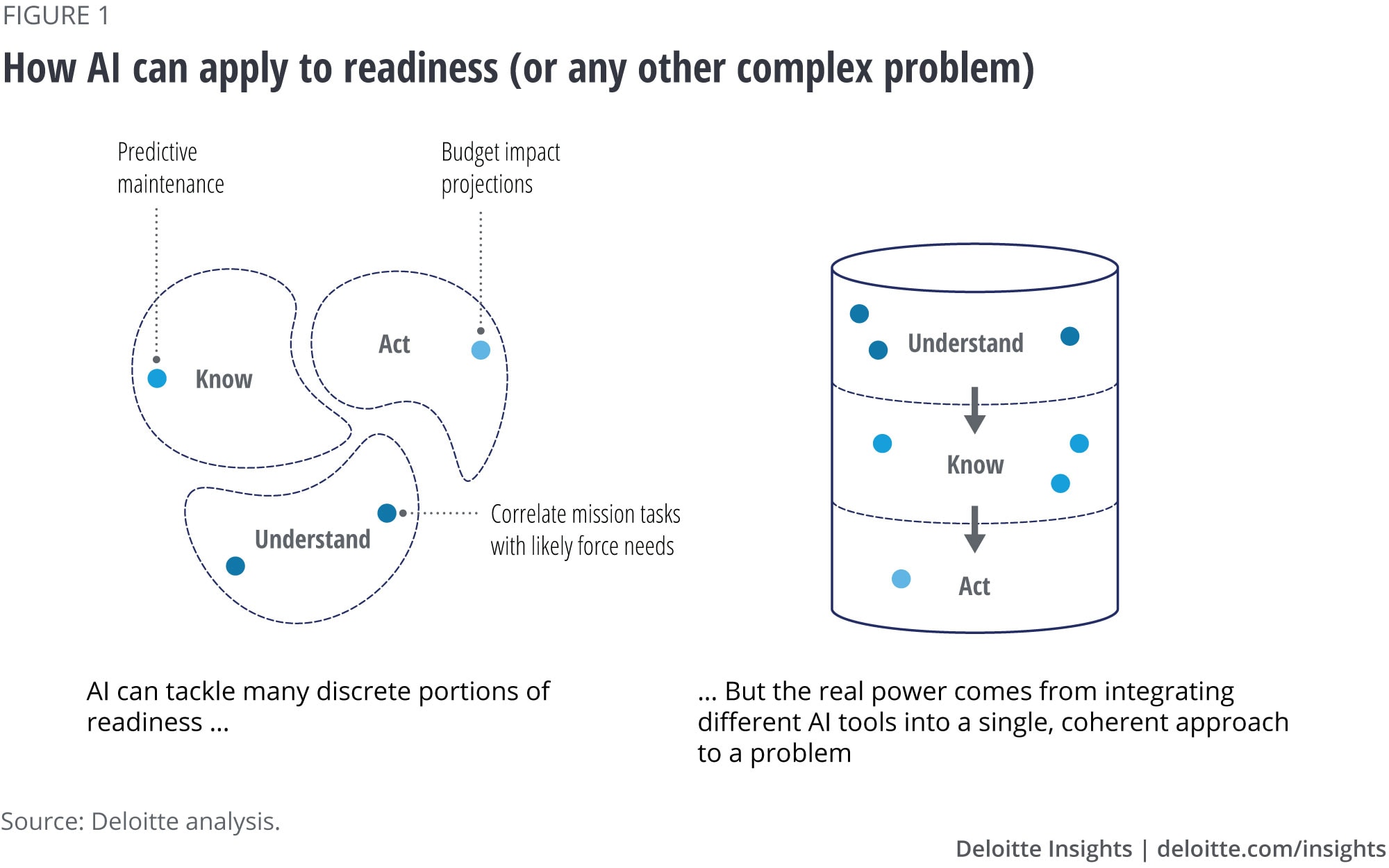

In previous research, we have described how redefining readiness can help bring new tools and technologies to bear and provide greater insight than ever before.3 At its core, this redefining breaks readiness assessments into three smaller tasks: You have to understand what capabilities are required, to know the current status of those capabilities, and to act to improve those capabilities where needed. Each of these readiness tasks involves sifting through mountains of information, teasing apart complex interactions, and then trying to understand the effects of any decision. That makes them incredibly difficult for human planners to tackle, but perfect for AI.

AI tools can tackle many different aspects of readiness, everything from understanding force requirements to increasing aircraft up-time with predictive maintenance (figure 1). However, the real power of AI in readiness does not come from discrete point solutions, but from linking many different AI-powered tools together. Then, the smart output of one tool can become the smart input to another.

Putting the AI pieces in play

The term AI may be misleading in one respect. It may lead us to believe that there is just one type of “intelligence” that all AI tools aspire toward. Nothing could be further from the truth. Different AI tools have different purposes, different strengths, and different weaknesses. (See the sidebar, “What do we mean by AI?” for examples of these different tools.)

The important insight here is that AI is not a magic bullet for all problems. Until future research breakthroughs create a general-purpose and context-aware AI, users must make informed choices about the trade-offs inherent in different AI tools.4 Perhaps the most basic trade-off is between depth of insight and model complexity, which is at the heart of any discussion of assessing military readiness. Some of the information requirements inherent in assessing readiness are simpler and can be aided by simpler AI tools.

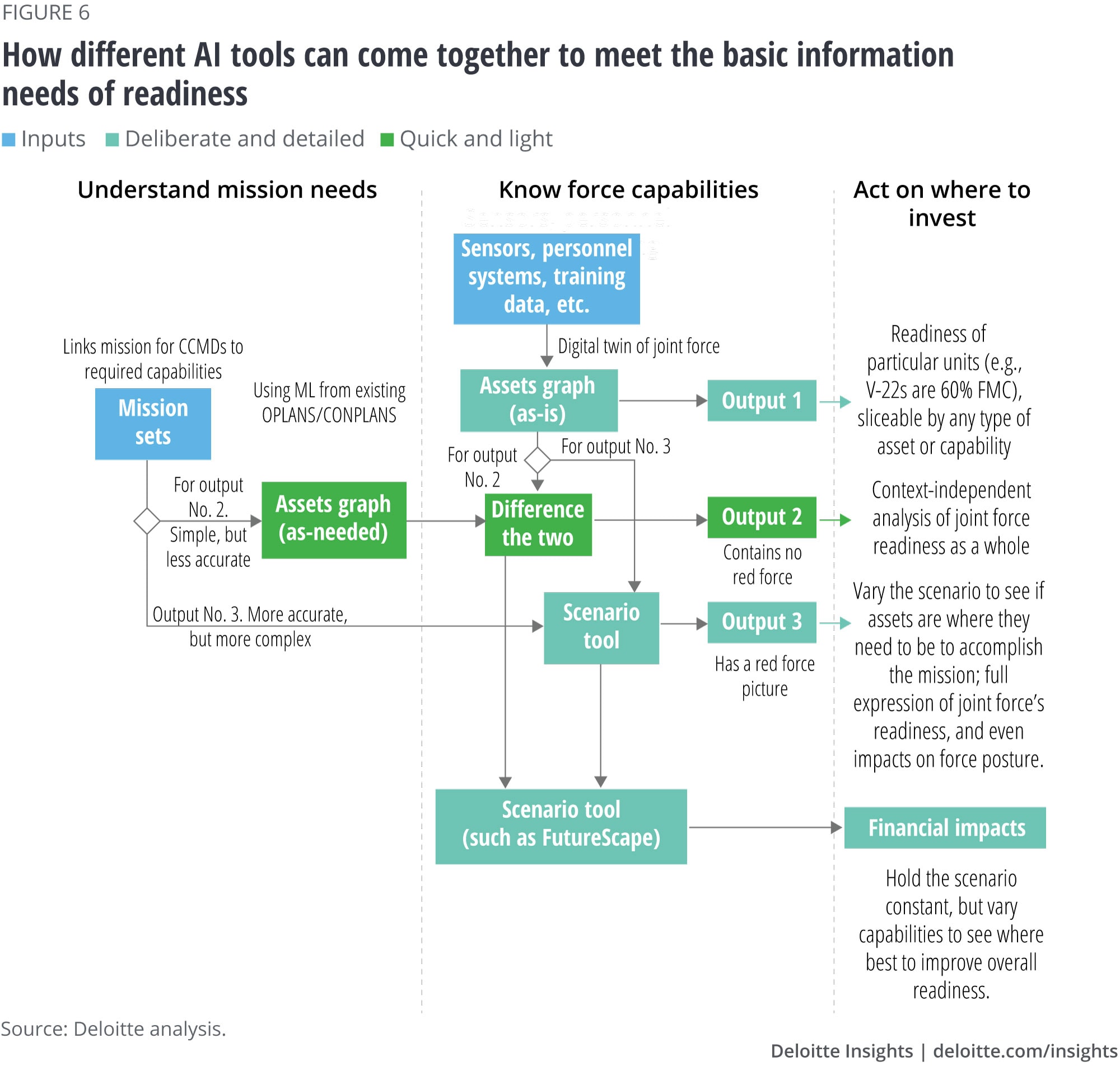

For example, today the assets required for an assigned mission are often determined from static planning documents. These are assembled at a strategic or operational level and change infrequently. However, even relatively simple AI can yield more dynamic and potentially more accurate predictions by making use of historical mission data. Historical mission examples and existing plans, such as operation plans (OPLANS) and concept plans (CONPLANS), provide a wealth of information on which assets (people, equipment, and infrastructure) have been deployed around the world. Each historical mission also has unique factors, from terrain to adversary capabilities to timeline. Pairing these two types of data in an AI tool such as a neural network can allow users to make predictions about which assets are vital for success of their particular mission set.

However, other aspects of readiness require deeper insights that can only be provided by more complex models. The resources and time required to build and run these complex models mean that they are not well-suited to every situation. As a result, defense leaders seeking to know current force capabilities or how to act to best improve those capabilities face a choice. They can either have faster, lighter, but less reliable answers to those questions or more reliable answers but at the cost of time and resources. It’s important to understand that these are the general trade-offs military leaders face in their adoption of AI.

What do we mean by AI?

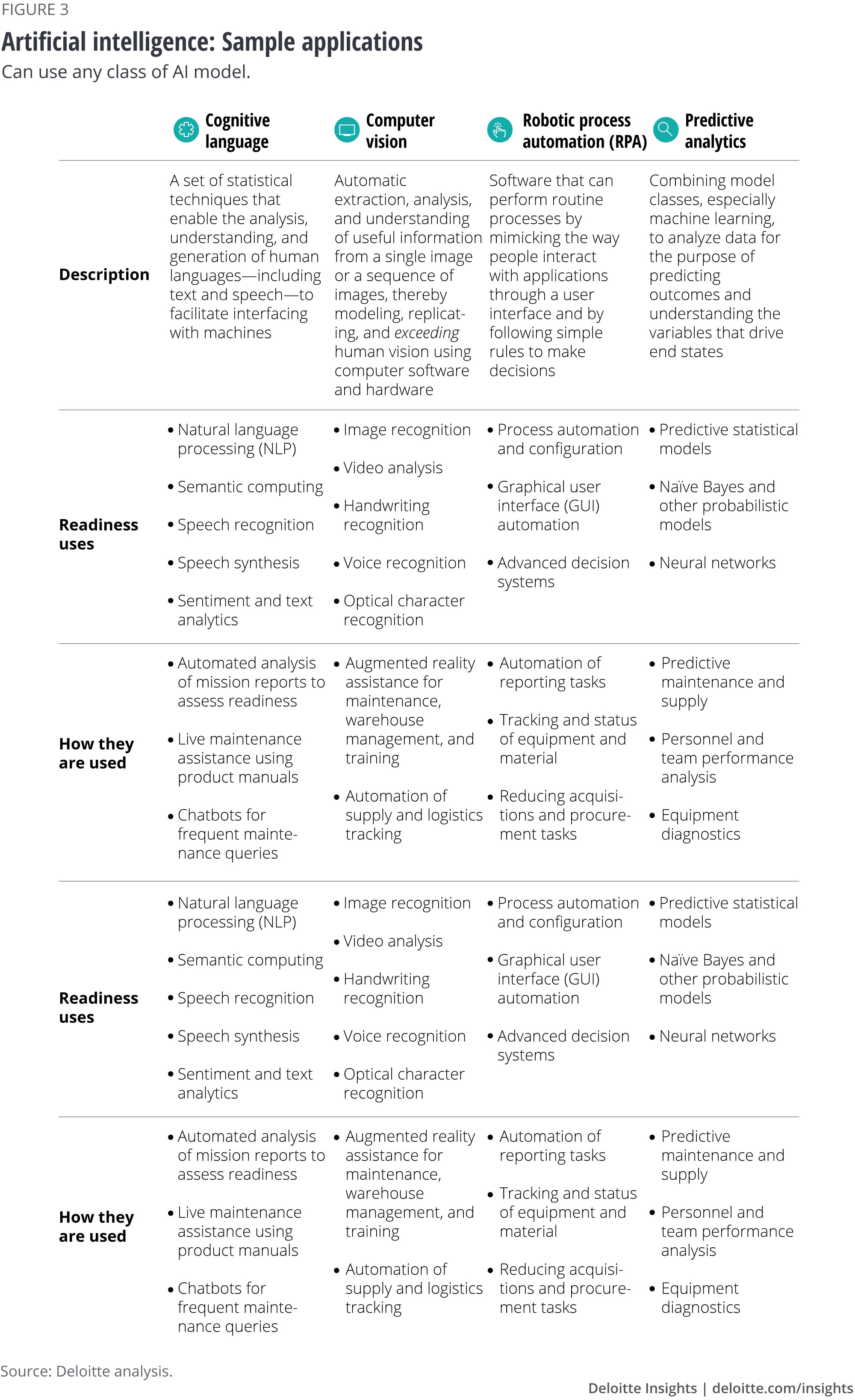

The term “artificial intelligence” can mean a huge variety of things depending on the context. To help leaders understand such a wide landscape, it is helpful to distinguish between the model classes of AI and the applications of AI. The first is a classification based on how AI works; the second is based on what tasks AI is set to do.

Quick and light

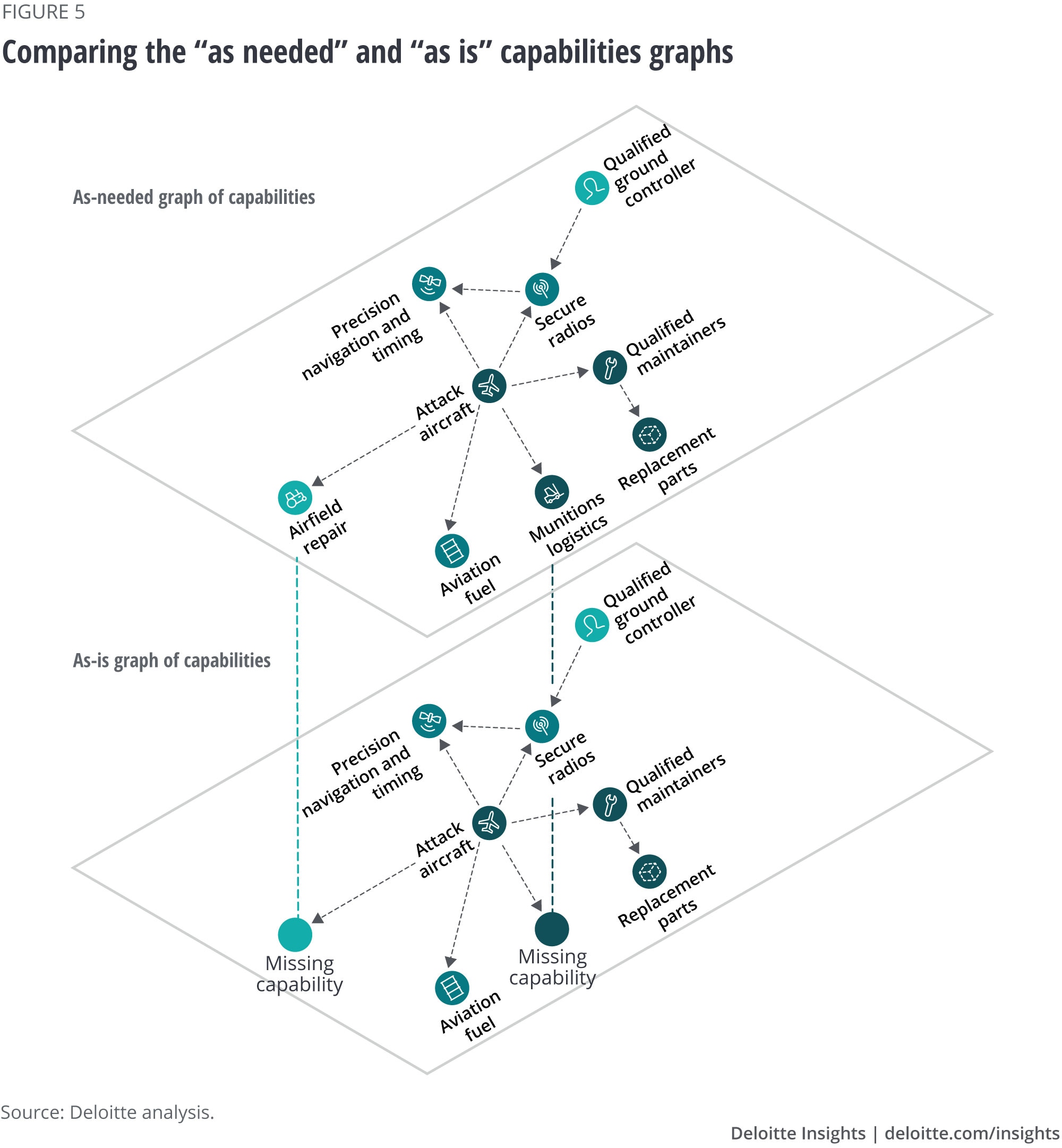

The faster, if less accurate, approach to AI begins with the list of all required assets described above. This list can be turned into a dependency graph describing how different assets rely on each other to form needed capabilities (figure 4). In other words, the capability of close air support is not just about having the right attack aircraft, but also the right munitions, pilots, radios, and trained controllers on the ground or in the air. So having a graph of needed assets can offer leaders a more complete, accurate, and flexible picture of what a military can bring to bear than a simple list.

The “as-needed” graph of capabilities can then be compared to an “as-is” graph (figure 5) created by compiling the current status of all equipment, personnel, and infrastructure from real-time data. Comparing these two graphs can help to highlight where existing assets cannot meet mission need. The resultant differences between “as-needed” and “as-is” point to gaps in the existing force structure, which can then be addressed through proactive investment for the most important mission sets. This approach answers the fundamental readiness questions of “Is the force ready for a given mission set?” and “How can that readiness be improved?”
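The comparison of the two graphs can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the capability names, asset names, and quantities are hypothetical, and a plain dictionary stands in for whatever graph representation a real system would use.

```python
# Hypothetical "as-needed" graph: each capability maps to the assets it
# depends on and the quantity required. All names and numbers are illustrative.
AS_NEEDED = {
    "close_air_support": {
        "attack_aircraft": 4,
        "munitions": 40,
        "pilots": 6,
        "radios": 8,
        "ground_controllers": 2,
    },
    "strategic_airlift": {"c5_squadrons": 2, "pilots": 12},
}

# Hypothetical "as-is" picture: mission-capable quantity of each asset today.
AS_IS = {
    "attack_aircraft": 4,
    "munitions": 25,
    "pilots": 20,
    "radios": 8,
    "ground_controllers": 1,
    "c5_squadrons": 2,
}


def find_gaps(as_needed, as_is):
    """Return {capability: {asset: shortfall}} for every asset that falls short."""
    gaps = {}
    for capability, requirements in as_needed.items():
        shortfalls = {
            asset: needed - as_is.get(asset, 0)
            for asset, needed in requirements.items()
            if as_is.get(asset, 0) < needed
        }
        if shortfalls:
            gaps[capability] = shortfalls
    return gaps


gaps = find_gaps(AS_NEEDED, AS_IS)
print(gaps)
```

With these made-up figures, the only gaps reported are 15 munitions and 1 ground controller under close air support. Note that each capability is checked in isolation against the same inventory, so this sketch does not detect two capabilities competing for the same pilots.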

While this approach allows for faster mission analysis, requires less data and computation, and demands less human judgment in the modeling choices, it also has some serious shortcomings. Because it remains divorced from a broader scenario planning context, an approach built on graphs may fail to include time/distance factors or competing demands for capabilities. So, for example, if a mission set requires two C-5 squadrons for mobility, as long as there are two mission-capable squadrons anywhere in the joint force, it will show as ready. It will not take into account if those squadrons can actually make it to where they are needed in time or if they are tied up on other missions. Another key shortcoming of this approach is the limited influence of the adversary. Enemy capabilities are accounted for in historical mission data, so this method predicts future demands based on past performance, and therefore does not include an agent-based or adaptive red force of the future.

Deliberate and detailed

Another approach to the basic questions of readiness takes these limitations into account, albeit at the cost of greater time and resources. This second method creates a fuller, more complex picture, by inputting the “as-is” picture of the current status of all assets into a scenario analysis tool that can model the full set of assigned missions. Running the scenario tool then allows for variation and testing of how the current force could execute those missions under different conditions. Rather than relying on historical analysis, varying the scenario can determine if a given set of assets truly can do what the mission asks of them, or if other capability mixes can. This approach can answer questions like, “Can the C-5s reach the airfield in time?” or “Can the helicopters assigned to the mission fit the raid force’s M327 120mm mortars?” It also allows for multiple scenarios to run against the “as-is” picture of the force concurrently. If a mission in the Asia-Pacific and a mission in the Middle East overburden the same resources, then they cannot be effectively executed simultaneously, and these would be areas for potential investment.
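To make the contrast with a simple asset count concrete, here is a small sketch of the kind of check a scenario tool adds: travel time against a deadline, and assets that cannot serve two missions at once. The squadron names, distances, speeds, and deadlines are all hypothetical, and the greedy assignment is a simplification chosen for brevity, not a description of how any real scenario analysis tool works.

```python
from dataclasses import dataclass


@dataclass
class Squadron:
    name: str
    cruise_kmh: float   # assumed average speed
    distance_km: dict   # mission name -> distance from current base


@dataclass
class Mission:
    name: str
    squadrons_needed: int
    deadline_hours: float


def assess(missions, squadrons):
    """Return {mission name: squadron shortfall} when all missions run at once.

    Missions are served in list order (a greedy simplification); a squadron
    can be assigned to only one mission and must arrive before the deadline.
    """
    free = list(squadrons)
    shortfalls = {}
    for mission in missions:
        reachable = [
            s for s in free
            if s.distance_km[mission.name] / s.cruise_kmh <= mission.deadline_hours
        ]
        assigned = reachable[: mission.squadrons_needed]
        for s in assigned:
            free.remove(s)
        shortfalls[mission.name] = mission.squadrons_needed - len(assigned)
    return shortfalls


# Illustrative data only.
SQUADRONS = [
    Squadron("alpha", 800.0, {"asia_pacific": 8000.0, "middle_east": 6000.0}),
    Squadron("bravo", 800.0, {"asia_pacific": 12000.0, "middle_east": 4000.0}),
]
MISSIONS = [
    Mission("asia_pacific", squadrons_needed=2, deadline_hours=12.0),
    Mission("middle_east", squadrons_needed=1, deadline_hours=10.0),
]

print(assess(MISSIONS, SQUADRONS))
```

A pure count would show the Asia-Pacific mission as ready, since two squadrons exist. Here it comes up one squadron short, because the second squadron would need 15 hours to arrive against a 12-hour deadline. Because the assignment is greedy, the result depends on the order in which missions are listed.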

Even more importantly, this method allows for agent-based simulation to be combined with the breadth of data and variation that AI can provide, creating the most realistic depiction possible of adversary capabilities and courses of action (figure 6). Here, the enemy is not simply a static list of capabilities or doctrinal templates; it can react appropriately to the strategy and tactics being used in the simulation. This aspect of scenario-based tools helps military planners to take into account new tactics or new adversaries on which there may not be much historical data. For example, how could the Navy possibly know how to counter emerging technologies like hypersonic missiles, or how should the Army respond if it meets a new terrorist group? The answer is to fight against them hundreds if not thousands of times digitally before ever meeting them on the battlefield.
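A toy sketch can show why an adaptive adversary matters. It is a deliberately tiny stand-in for agent-based simulation, and every element is an assumption: two hypothetical routes, a blue force that favors one of them 80 percent of the time, and a red force that either follows a fixed template or learns from what blue has done so far.

```python
import random
from collections import Counter

ROUTES = ("north", "south")  # hypothetical avenues of approach


def static_red(history, rng):
    """Doctrinal template: defend either route with equal probability."""
    return rng.choice(ROUTES)


def adaptive_red(history, rng):
    """Defend whichever route blue has used most often so far."""
    if not history:
        return rng.choice(ROUTES)
    return history.most_common(1)[0][0]


def blue_win_rate(red_policy, runs=2000, seed=0):
    """Fraction of simulated engagements in which blue avoids red's defense."""
    rng = random.Random(seed)
    history = Counter()  # how often blue has used each route
    wins = 0
    for _ in range(runs):
        blue = "north" if rng.random() < 0.8 else "south"  # blue's habitual bias
        red = red_policy(history, rng)
        wins += blue != red  # blue succeeds only on the undefended route
        history[blue] += 1
    return wins / runs


print("static red:  ", blue_win_rate(static_red))
print("adaptive red:", blue_win_rate(adaptive_red))
```

In expectation, blue succeeds about half the time against the static template, but its success rate falls toward 20 percent once red adapts to blue's habits. A plan that looks sound against a fixed list of enemy behaviors can look much worse against an opponent that reacts.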

Scenario analysis tools form a large part of meeting the National Defense Strategy Commission’s recommendation that the Department of Defense “must use analytic tools that can measure readiness across this broad range of missions, from low-intensity, gray-zone conflicts to protracted, high-intensity fights.”5 In short, this more detailed approach can not only help the military be ready for the fight today but also set appropriate force posture to be ready for future fights.

However, this method is also computationally intensive. The greater the accuracy desired from a model, the more data, and the more varied types of data, must go into that model. A full-scale scenario model, for example, would require a near real-time picture of the force, meaning actively sensing and sending data on every operating asset. That is an incredible amount of data to ingest and manage in one system. Beyond those technical challenges, there are philosophical challenges to overcome as well. Even the detailed and accurate model is still a model. As such, it is subject to the flaws and biases in human decision-making, as users make determinations around which models, scenarios, and parameters are most likely. As General Paul K. Van Riper showed in the famous Millennium Challenge 2002 war games, the final capabilities of friendly and enemy forces are not determined merely by the number of assets, but by how those assets are put to use.6

Overcoming the challenges

Military decision-makers will face many challenges when implementing AI into their readiness strategies. These challenges can include but are not limited to: who owns the data; how to validate the data; where and how it is stored; the dependence of high-level simulations on lower-level simulations; the classification of data and outputs; and on what network everything should reside. A combination of general AI practices and custom considerations can help military leaders navigate this tangle of choices and chart a path to a fundamentally new readiness system.

The first common challenge to any AI implementation is the most basic and yet the most challenging.

Asking the right questions

AI is not magic. As we have seen, different types of AI have different strengths and do different things well, but with corresponding limitations. But real-world problems are rarely encapsulated in discrete, neatly defined questions. They are complex topics with many messy, interrelated issues. Therefore, the first challenge is to discover ways to render a general readiness problem into specific questions suitable for AI without losing fidelity or applicability to the real-world problem at hand. This is more of an intellectual and philosophical challenge than a technical one. But unless that hard thinking is done up front, any solution generated by AI could be largely irrelevant to the mission problems faced in the real world.7

The who, what, and where of data

The best starting point when dealing with such significant volumes of data is often going to be the cloud, which allows for a single, extensible repository. Increasingly, cloud providers are also integrating additional AI-enabled services that can speed data validation and other tasks. In fact, our estimates suggest that by 2020, 87 percent of AI users will get at least some of their AI capabilities from cloud-based enterprise software.8

The support available from cloud providers underlines the importance of getting all of the data in the first place. Gathering real-time statuses for every piece of equipment, infrastructure, and service member in the joint force may seem like an impossible task. However, the military may already have much of the data it needs without even knowing it. For example, the Air Force only recently began running predictive maintenance programs on C-5, B-1, and C-130J airframes, which for years had been producing detailed aircraft status data that went uncollected. Identifying and tapping into such existing data sources can jump-start AI-enabled readiness assessments without the need for costly new systems. Previous research has shown that even adoption of transformational technology can often be accomplished by focusing on the existing data that an organization has without the need for new capital investments.9

Model accuracy

Another common problem for AI adoption is ensuring the accuracy of tools. Even the most advanced AI tools are still tools constructed by humans and, as such, can often mirror the judgments and biases of humans.10 For example, an AI-based system for assessing a prisoner’s risk of recidivism to help aid in setting bail and sentencing turned out to have a significant built-in racial bias. Prisoners wrongly labeled as high-risk were twice as likely to be black while those wrongly labeled as low-risk were more likely to be white.11

Since they often reflect quirks of human judgment or issues with training data, these types of biases can be hard to uncover and eliminate. One way to help ensure the desired accuracy of an AI system is to use participatory design, a process that includes a wide array of stakeholders, not just programmers and end-users, in the design process.12 This can help ensure a variety of perspectives are included in a simulation and that the right performance parameters are selected. In military applications, this can be even more important, because every military decision carries with it an implicit understanding of our own tactics and doctrine. Since the enemy does not play by the same rules, to avoid AI tools that are unintentionally biased toward our own strategies, and therefore predict overly rosy outcomes, it is crucial to include a “red team” dedicated to playing devil’s advocate in the design process.

Military-specific challenges

Design and data challenges are common to any organization pursuing a large-scale AI project. However, there will also be some challenges unique to the military that will need to be overcome.

Model dependencies

A complex scenario analysis tool is composed of several different models at different levels of detail. Higher-level models are dependent on lower-level models for their accuracy. For example, a force-flow model of fighter jets depends upon lower-level, more detailed models about engine performance and fuel consumption at various altitudes. If those lower-level models are wrong, they can result in serious inaccuracies in a simulation, with aircraft flying faster than possible or never running out of fuel, or ground units walking for hundreds of miles without getting tired. In short, higher-level models cannot be accurate without getting the details of lower-level models right first.
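The dependency between model levels can be sketched in a few lines. The fuel-burn formula, fuel load, and distances below are invented for illustration and do not describe any real aircraft; the point is only that the higher-level answer flips when the lower-level model is wrong.

```python
def fuel_burn_kg_per_km(altitude_m):
    """Lower-level model: hypothetical fuel burn that falls with altitude."""
    return 6.0 - 0.0002 * altitude_m  # roughly 4 kg/km at 10,000 m


def optimistic_burn(altitude_m):
    """A flawed lower-level model that understates fuel burn by half."""
    return 0.5 * fuel_burn_kg_per_km(altitude_m)


def max_range_km(fuel_kg, altitude_m, burn_model):
    """Mid-level model: range implied by whichever fuel-burn model is supplied."""
    return fuel_kg / burn_model(altitude_m)


def can_reach(distance_km, fuel_kg, altitude_m, burn_model=fuel_burn_kg_per_km):
    """Higher-level force-flow question: does the aircraft arrive without refueling?"""
    return max_range_km(fuel_kg, altitude_m, burn_model) >= distance_km


# Same aircraft, same mission, two different lower-level models.
print(can_reach(2500, 8000, 10000))                              # baseline model
print(can_reach(2500, 8000, 10000, burn_model=optimistic_burn))  # flawed model
```

With the baseline model the aircraft has a range of about 2,000 km and cannot cover the 2,500 km leg; with the flawed model it appears to reach the destination with fuel to spare. Passing the lower-level model in as a parameter is one simple way to make such dependencies explicit and testable.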

Obtaining the most accurate baseline models possible may require gathering the technical baseline data on key weapons systems. Readiness personnel should work with their acquisition counterparts to gather or gain access to that information for current systems and ensure that future contracts provide access to that information for future systems.

Classification management

Perhaps the most closely held military secrets are what a military can and cannot do. So when assessing the readiness of a force against real-world mission sets, naturally, the results are expected to be classified. However, many of the lower-level models may use publicly available data. It is only through aggregating many of these different data points that details of military capabilities and weaknesses are revealed. As a result, classification of such an AI-enabled system needs to be carefully managed to ensure that key vulnerabilities are not accidentally revealed.

This challenge is compounded when considering that the classification of information will determine which communications network the tools must reside on. The higher the classification, the more difficult it will be to get tools certified to operate on that network. As a result, it is likely that an AI-enabled readiness system would exist on multiple networks, from unclassified to different levels of classification. The system will need procedures and tools for moving data from low-to-high and possibly for releasing appropriately classified data from high-to-low without revealing any important information or introducing vulnerabilities to the higher-classification networks.

Tomorrow’s solutions, today

AI and cognitive tools may not have the history of the tank or the cachet of the aircraft carrier, but they are undoubtedly important parts of future militaries. Understanding the benefits and common challenges of applying AI to military problems such as readiness can not only improve readiness assessments, but can also position the military to use other forms of AI more effectively.

Navigating the general and military-specific challenges is just the first step to AI adoption. Creating a structured campaign plan for AI can help deploy the right AI for the right problems and avoid the analog/digital equivalent of ineffective bazooka rounds against T-34s. The adoption of AI is not just like adding another team member: It can fundamentally change how humans and technology work together. Military leaders can use the following staged approach to help harness the power of AI, while mitigating some of the new challenges:

- Resolve. Determine the key readiness problem sets for AI to address. Identify the data you have related to those problem sets and resolve the issues with it; that is, organize and prepare your data to yield insights.

- Remodel. Change how you structure your data and your organization to make best use of the insights produced by AI. Make sure you have sufficient infrastructure and talent to manage the data and its use within the organization. Remember that outputs from AI systems may need some expert interpretation before decision makers can use them.

- Reimagine. Finally, pilot entirely new services and tools that apply AI to even more complex or pressing problem sets. For example, AI is already aiding in real-world scenario planning, helping airports respond to weather events and Formula One race teams anticipate their competitors’ every move.13

AI is not magic; adopting AI tools is no guarantee of success. But given the role AI is likely to play in future conflict, not adopting AI will likely guarantee failure. Therefore, learning how to effectively use AI today—with all of its strengths and weaknesses—will be critical to success on future battlefields.
