From bytes to barrels: The digital transformation in upstream oil and gas

15 September 2017

Despite a deluge of digital advancements, upstream oil and gas companies have been slow to seize the opportunity. The prize of going digital is clear, but for most companies, getting there is not easy. A coherent road map could help make sense of the digital muddle and drive more value.

The case for becoming digital

Advancement in technologies, the falling cost of digitalization, and the ever-widening connectivity of devices provide a real competition-beating opportunity to upstream oil and gas (O&G) companies who play the digital revolution right. The lower-for-longer downturn and moderating operational gains have provided an extra incentive—or turned the opportunity into a need—for companies to save millions from their operating costs and, most importantly, make their $3.4 trillion asset base smarter and more efficient.1

What is holding them back from realizing this opportunity? More than the technicalities, it is often the digital muddle that’s deterring companies from achieving digital maturity. Companies can benefit from a strategic road map that helps them assess the digital standing of every operation and identify digital leaps for achieving specific business objectives. More importantly, it could push them to embrace a long-term goal of transforming their core assets and, finally, adopting new operating models (the journey from bytes to barrels).

This paper, the first in a series on digital transformation in oil and gas, presents Deloitte’s Digital Operations Transformation (DOT) model, a framework that explains the digital journey through 10 stages of evolution with cybersecurity and digital culture at the core, and uses it to ascertain the prospective value for the seismic exploration, development drilling, and production segments.

Although some segments are ahead of others in data-driven analytics—seismic exploration is ahead of development drilling in analyzing and visualizing information while the production segment is still grappling with sensorizing its decade-old wells or making sense of the stored production data—the industry in general can draw lessons from digitally leading capital-intensive industries that are influencing a big change in their physical assets and capital models. The prize is sizeable for the O&G industry—even a 1 percent gain in capital productivity could help offset the cumulative net loss of $35 billion reported by listed upstream, oilfield services, and integrated companies worldwide in 2016.2

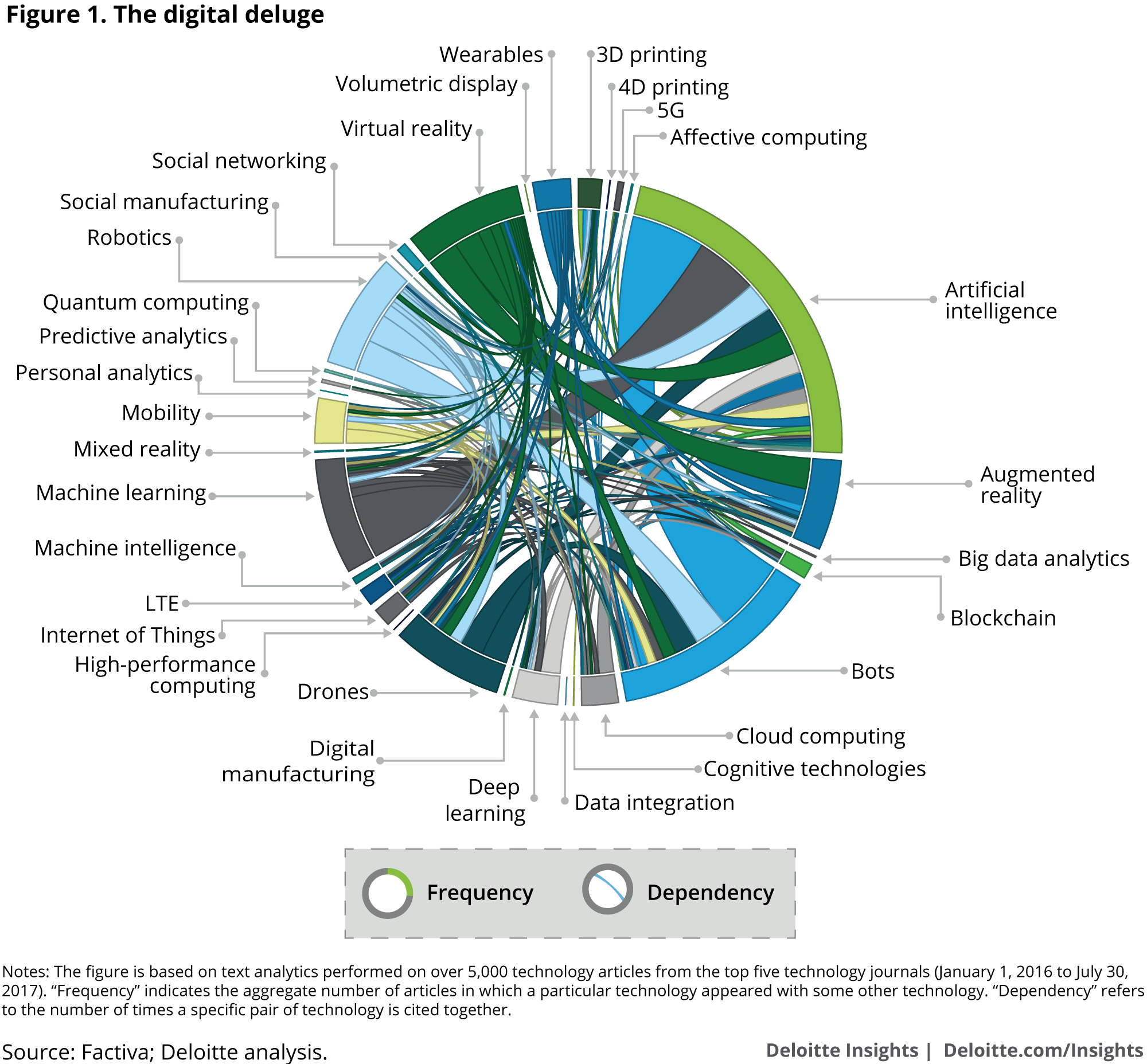

The digital deluge

Digital technologies are helping almost every industry rewrite its operating landscape, and the O&G industry can no longer remain behind. The potential benefits of going digital are clear: increased productivity, safer operations, and cost savings. Further, for O&G players, who are already grappling with weak oil prices and moderating operational gains, one of the biggest advantages of adopting digital technology could be the resilience these technologies offer to weather the downturns that the industry is prone to.3

However, rapid changes in the digital world, a complex web of interdependencies between technologies, and even many names for the same technology often make it difficult for the industry to enable its digital transformation. For instance, out of the more than 200 technologies ever listed on Gartner’s Hype Cycle from 2000 to 2016, over 50 individual technologies appeared for just a single year, while many took years longer to register mainstream success.4

Our text analytics on 5,000-odd articles from the top five technology journals reiterate this digital deluge (figure 1). Prominent technologies or buzzwords such as artificial intelligence and machine learning, and augmented reality and virtual reality are almost indistinguishable in terms of their benefits and highly dependent on each other. The result: high sunk and switching costs associated with technologies, siloed or marginal benefits, and non-scalable digital investments.

Although rapid prototyping (“fail early, fail fast, learn faster”) of new digital technologies is acceptable in many sectors, capital-intensive businesses such as O&G can’t solely rely on a trial-and-error approach or take a multi-technology route to address a problem. “Consumers and IT-based firms know the early bird gets the worm, but oil and gas players would rather be the second mouse that gets the cheese. This is because it’s more costly to be the first to adopt new oil and gas innovations,” says an upstream executive with a supermajor.5

Further, current digital narratives in the O&G marketplace are often narrow and follow a bottom-up technology language. What could help the industry is a structured, top-down approach that not only overcomes this digital deluge but also helps O&G executives to draw a comprehensive road map for company-wide digital transformation. Put simply, the approach should answer three strategic questions on digital—“How digital are you today?,” “How digital should you become?,” and “How do you become more digital?”

Decoding the digital deluge: The DOT model

Given the diverse starting points and an array of choices, O&G companies could benefit from a coherent framework that helps them achieve their near-term business objectives, measures their digital progression through stages of evolution and, above all, gives them a pathway to ultimately transform the core of their operations, the real assets and the business model itself.

The Digital Operations Transformation (DOT) model is such a road map—a digital journey of 10 milestones, where the leap from one stage to another marks the achievement of specific business objectives, and puts cybersecurity and an organization’s digital traits at the core. Although the journey technically completes at stage 10 for a specific asset or operation, it should be broadened and extended into a never-ending loop to include a wider set of assets or business segments, the entire organization and, ultimately, the ecosystem of a company, including supply chain and external stakeholders (figure 2; also refer to table 2 in the appendix).

First up in the journey, we have companies mechanizing the process using hydraulic, pneumatic, or electrical control systems. This can allow players to anticipate and prepare for failures and unusual conditions. The journey then progresses to capturing information from the physical world (the physical to digital realm) by sensorizing equipment and transmitting data generated in the field using IT networks.6 By doing this, an O&G company may be able to respond to field conditions and monitor operations remotely.

The next set of milestones can be achieved when a company breaks operational silos between disciplines, realizes hidden productivity gains, improves the usability of data, and identifies new areas of value creation. For this, the transformation should progress from integrating diverse data (using cloud-solvers, servers, data standards, etc.), analyzing and visualizing data using new-age computers and platforms (for example, big data analytics, wearables, and interactive workstations) to augmented decision-making (for example, self-learning machines).

Typically, in the O&G domain, the digital thinking and narratives stop at data-driven insights. But to become a digital leader, a company should consider making a change in its physical world by modernizing its core assets (in this case, rigs, equipment, platforms, and facilities). In other words, it should complete the last three legs of the journey from bytes to barrels by closing out the physical-digital-physical loop.

This phase starts with robotizing facilities and progresses to the crafting of new products to improve precision, reliability, and design aspects of physical assets. Ultimately, the phase ends with virtualizing the entire asset base by creating digital twins and the digital thread, to not only extend the life of assets but also to adopt new business and asset models in the long term.7 A digital twin/thread vision would inevitably trigger the thinking and necessity to enable cross-organizational and cross-vendor workflows, the biggest bottleneck for many digital transformations.

As mentioned earlier, once this physical-digital-physical loop has covered an asset, the loop can be restarted and widened to include a system of assets in a particular business line or geography, then the organization and, ultimately, the entire supply chain and external stakeholders of a company. A comprehensive cyber risk management program that is secure, vigilant, and resilient, and an organizational culture—or digital DNA—that would enable this transformation remain at the core of the model.8 (For more on safeguarding upstream operations, read our recently published paper, Protecting the connected barrels.)
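The 10 stages and the widening loop described above can be sketched as a small data structure. This is only an illustrative reading of the prose: the stage names are taken from the verbs used in the text (figure 2 is not reproduced here), and the `next_step` helper and the `SCOPES` list are hypothetical names introduced for this sketch, not part of the DOT model itself.

```python
from enum import IntEnum


class DotStage(IntEnum):
    """The 10 DOT milestones, named after the verbs used in the text."""
    MECHANIZE = 1
    SENSORIZE = 2
    TRANSMIT = 3
    INTEGRATE = 4
    ANALYZE = 5
    VISUALIZE = 6
    AUGMENT = 7
    ROBOTIZE = 8
    CRAFT = 9
    VIRTUALIZE = 10


# Scopes the loop widens through, as listed in the text (assumed ordering).
SCOPES = ["asset", "system of assets", "organization", "ecosystem"]


def next_step(stage, scope_index):
    """Return the (stage, scope_index) that follows the given position.

    Within a loop the journey advances one stage at a time. After stage 10
    the loop restarts at stage 1 with the next wider scope; at the widest
    scope it simply keeps looping.
    """
    if stage < DotStage.VIRTUALIZE:
        return DotStage(stage + 1), scope_index
    return DotStage.MECHANIZE, min(scope_index + 1, len(SCOPES) - 1)


# Example: an asset that has just completed the virtualize stage.
stage, scope_index = next_step(DotStage.VIRTUALIZE, 0)
print(stage.name, "at scope:", SCOPES[scope_index])
```

Modeling the stages as an ordered enumeration makes the idea of a "digital leap" concrete: it is a move from one numbered stage to a later one, within a given scope.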

Using this model, the following section maps the current digital standing of the upstream industry, identifies near-term digital leaps that upstream companies can take to meet both their near- and long-term objectives, and offers solutions for major operations in the exploration, development, and production segments.

Assessing digital transformation pathways for upstream operations

Although digital maturity varies from company to company, the exploration segment of the industry in general is digitally ahead of development and production. While decades of earth science understanding and advanced imaging technology have helped exploration, a complex ecosystem and a legacy asset base have constrained the digital evolution of the drilling and production segments, respectively.

However, not all subsegments within the exploration segment are ahead; similarly, there are a few subsegments within drilling and production that are adapting and getting ready for their digital leaps. Rather than detailing each subsegment, the following section talks about a prime subsegment within each segment—seismic imaging, development drilling, and production operations—where either the digital transformation is most needed or has the highest value creation potential (figure 3).

Seismic imaging

Seismic imaging—a process the industry has been using for over 80 years in evaluating and imaging new and complex subsurface formations—is largely at an advanced stage of data analysis and visualization in the DOT framework. Standardization of geological data and formats, investment in advanced algorithms by companies, and the evolution toward high-performance computers that can analyze the geoscience data of thousands of wells in a few seconds explain the analytical lead of this segment. ExxonMobil, for instance, is using seismic imaging to even predict the distribution of fractures in tight reservoirs, helping it to enhance flow and optimize well placement.9

Similarly, on the visualization front, the industry has made solid progress in developing 3D interpretation systems capable of geological and velocity modeling, structural and stratigraphic interpretation, and depth imaging. In fact, some companies have started to use time-lapse, 4D seismic models that integrate production data to track changes in O&G reservoirs.10 And, a few are thinking ahead by adding the elements of virtual reality to seismic imaging for improving the spatial perception of 3D objects. For example, a team of researchers at the University of Calgary is using virtual reality, augmented reality, and advanced visualization techniques to help Canadian producers using steam-assisted gravity drainage (SAGD) better manage their complex reservoirs by interacting with simulations in a real 3D world.11

Should players stop here? The recent oil price downturn has, at least for now, impacted business objectives. More than eyeing new and complex reservoirs in frontier locations, the near-term objective of the seismic imaging unit of O&G companies has shifted toward rightsizing their existing resource portfolio, including the identification of sub-commercial, marginal resources that are reducing profitability and locking up significant capital. “With a more focused asset base and improved balance sheet we are very willing to further high-grade our portfolio and deploy additional investment toward the best projects in our portfolio,” says Dave Hager, CEO of Devon Energy.12

What’s at stake? In the United States alone, about 65 percent of producing oil wells are marginal oil wells, producing less than 10 barrels per day (bpd) of oil.13 Similarly, the industry’s 2P reserves that have extraction certainty of 50 percent account for about half of its 1P reserves.14 This divide of “good” and “bad” resources contributes to the valuation mismatch between buyers and sellers of upstream assets. As of July 2017, more than 1,250 upstream assets are up for sale globally, with 100-odd assets looking for buyers for more than three years.15

To bridge this gap or identify new areas of value creation, players should consider moving toward the augment stage, where machines reveal the geology through iterative learning and independently adapt using several factors, patterns, relationships, and scenarios. For example, a geological interpretation firm is incorporating a cognitive workflow where principal component analysis and self-organizing maps analyze combinations of seismic attributes corresponding to relevant hydrocarbon indicators.16

While framing their digital leap strategies, however, companies should consider if the augmentation solution creates an optimal balance between the data-driven and expert-guided aspects in seismic imaging. Interpretation of seismic data is fundamental and it continues to depend on the visual cognition of geoscientists. In Equatorial Guinea, for example, a service provider combined its cognitive workflows with both a color space optimized for human vision and an interface that maximizes human cognitive capabilities. The result was enhanced interpretation and early identification of prospects, allowing the operator to acquire interest in the block from new partners.17

Food for thought: The benefits of cognitive techniques can multiply when cross-discipline data across the life cycle of a reservoir is used for seismic modeling and interpretation. This is especially relevant for the highly competitive US shale market, which has an abundance of drilled and producing well data for geoscientists to tap into.18

Development drilling

Development drilling is at the embryonic stage of data integration in the DOT framework as many sought-after analytical platforms are still incapable of aggregating and standardizing cross-vendor data.19 Distinct objectives of over 15 services required in drilling; hundreds of proprietary tools, software, and technologies of over 300 oilfield service firms; and the lack of standardized data formats explain the data integration issue.20 The result: petabytes of data not put to best use. “If we can’t get data to move smoothly across all of these areas, from the rig control system to the electronic digital records to third-party supplies that come on site with a logging system or cement units, it fails right away,” says Michael Behounek, senior drilling adviser at Apache Corp.21

Breaking data silos is key for the industry to continue to report efficiency gains and cost savings. Over the past five years or so, advancements in operational technologies, such as multi-well pad drilling, have helped the industry lower average drilling time in shales from 35 days in 2012 to about 15 days at present.22 But these operational gains are showing signs of moderation (indicated by flatness in drill days and new-well production per rig in US shales), calling for digital technologies to take up the baton now.23

Similar to the way upstream and oilfield service companies worked together to achieve drilling gains through operational technologies in this lower-for-longer environment, they should consider joining forces on the digital front as well. Both have to find an acceptable return on investment in digital solutions, without which hackers may exploit the disintegrated workflows, margins will likely keep migrating between the two, and the industry’s pace of innovation can suffer a setback. Justin Rounce, senior vice president of Schlumberger, puts it rightly when he says, “It’s hard to continue to invest in technology when you’re not getting the value associated with that investment.”24

Attaining this balance likely requires industry participants to collaborate and develop common data standards. However, knowing the complexity of the task at hand and the long lead time associated with standardizing all data formats—it took nearly four years for the Standards Leadership Council to complete the pilot project on integrating PPDM (Professional Petroleum Data Management) and WITSML (wellsite information transfer standard markup language) data models—the near-term aggregation strategy of an upstream company must be tied around third-party solutions that can securely layer integration frameworks on diverse drilling data.25 Apache Corp., for example, is deploying data integration boxes on 21 North American rig sites that allow monitoring and linear analysis on assimilated data from drilling control systems, logging while drilling systems, cement units, etc.26

Once this bottleneck is cleared, the digital leap toward advanced analytics could be much faster. When achieved, this leap could potentially deliver annualized well cost savings of about $30 billion27 to upstream players, while oilfield service players can potentially create multibillion-dollar high-margin revenue streams.28 Knowing there are several areas of value creation in drilling—optimization of trajectory, rate of penetration, frictional drag, drill string vibrations, equipment performance, etc.—traditional companies could prioritize and pilot a few things, while digital leaders can focus on maximum value realization through integrated advanced analytics at a company level.29

Noble and Baker Hughes, a GE company (BHGE), for instance, are targeting a 20 percent reduction in offshore drilling cost by jointly developing an advanced data analytics system. The companies plan to optimize the drilling process through performance analytics, which includes establishing new key performance indicators, analyzing high-frequency signatures on drive systems, and assessing usage intensity on key assets. These capabilities will be housed on the rig site, while the data would be sent to onshore centers where predictive algorithms would identify potential vibration issues, temperature issues, etc., weeks before they are identified by conventional automation systems.30

Although there is a significant buzz in the market to augment drilling operations or move toward autonomous drilling by developing linear and nonlinear solutions such as automated weight on bit adjustments, the industry should consider focusing on setting things right at the integrate and analyze stages. Clearing these bottlenecks is necessary for building automated rigs in the future. Schlumberger’s Rig of the Future program, for instance, primarily rests on integrating diverse drilling systems by working with third-party contractors using open source architecture.31

Food for thought: Pushing the integrated and advanced drilling analytics closer to operations could significantly reduce cost and time overruns in complex projects that have high wrench-time and require on-field customization—and open up opportunities to combine engineering design and operational expertise in sensor systems, thus allowing complex data analytics to be performed within the sensor array.32

Production operations

Unlike exploration and development, the production segment of a company mostly consists of brownfield wells, platforms, instruments, and control systems. About 40 percent of global crude oil and natural gas production comes from fields that have been in operation for more than 25 years—in fact, there are about 175 fields that have been producing for more than 100 years.33 Considering that the continuity of production, and thus the cash flows, is key, the industry always finds itself in a never-ending cycle of upgrading and retrofitting. Put simply, the industry always has a large portfolio of producing assets that are less sensorized, digitally behind, and even prone to cyberattacks.34

Joint venture structures in many fields, the dispersion of wells and assets along the life cycle, and the cost associated with upgrading the entire infrastructure can complicate and delay the modernization of legacy assets further. “Monitoring production data is nothing new for operators, but it's only been something the supermajors and major companies could afford to do . . . and even then, they had to pick and choose, only able to monitor maybe 60% to 70% of their wells,” says an executive with WellAware.35

Addressing this problem has never been more important than it is today. Weak cash flows and uncertain cost inflation in new projects have led to a change in the business objective of many producers—from chasing growth in greenfield projects to optimizing production from existing fields without spending much. ConocoPhillips CEO Ryan M. Lance rightly framed the current situation when he said, “Low capital intensity is a CFO’s best friend.”36

But what should be the digital strategy of a company for its legacy assets? A blanket digital investment for all or a digital prototyping on just a few producing wells? Neither, as the former is impractical and the latter would only yield marginal gains. Then? Just as every operational solution (for example, enhanced oil recovery) and business strategy (for example, acquisition of a nearby field to leverage existing infrastructure) is specific to a field, a digital investment should be prioritized and customized for each field or well.

A high-potential field, for instance, might merit installation of advanced distributed sensors and “smart” OEM equipment to provide new insights on the operating conditions of a well both above and below the surface. A field with moderate potential could benefit from pervasive sensors (to monitor temperature, vibration, rotation, etc.) on pumps, valves, and equipment so as to develop a condition-based maintenance schedule. A field with low potential, on the other hand, may require standard automation and monitoring solutions to keep the well running at optimal levels. Such digital segmentation would likely cover the entire asset base and optimize the overall production portfolio without taking up much capital.
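The segmentation described above amounts to a simple lookup from a field's potential to a class of digital investment. A minimal sketch follows, assuming each field has already been assigned one of three tiers; the field names, the tier labels, and the `plan_portfolio` helper are hypothetical, and how a company would actually score a field's potential is outside the scope of the text.

```python
from collections import Counter

# Tier -> digital investment, paraphrasing the three cases in the text.
INVESTMENT_BY_POTENTIAL = {
    "high": "advanced distributed sensors and smart OEM equipment",
    "moderate": "pervasive sensors for condition-based maintenance",
    "low": "standard automation and monitoring",
}


def plan_portfolio(fields):
    """Map each field name to the investment suggested for its tier."""
    return {name: INVESTMENT_BY_POTENTIAL[tier] for name, tier in fields.items()}


# Hypothetical portfolio; names and tiers are made up for illustration.
fields = {
    "Field A": "high",
    "Field B": "low",
    "Field C": "moderate",
    "Field D": "low",
}

plan = plan_portfolio(fields)
tier_counts = Counter(fields.values())

for name, investment in plan.items():
    print(f"{name}: {investment}")
print("Fields per tier:", dict(tier_counts))
```

Every field in the portfolio receives some level of investment, which is the point of the layered approach: coverage of the whole asset base, with the spend scaled to each field's potential.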

Once this layered strategy of sensorizing equipment is in place, a digital leap toward advanced analytics could start creating new value on the optimization and maintenance fronts. Prominent legacy field issues, such as gas interference, equipment choking, fluid pound damage due to overpumping, and inefficient recovery due to underpumping, could be addressed by integrating automation protocols with cloud-based analytical platforms in a secure environment.37 Although the benefits would vary from field to field, as per some estimates, optimizing production in a 100-well project can generate annualized cash flows of $20 million (approximately $20 billion at an industry level), leaving aside the cost avoidance for equipment failure and repair.38
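To see how the project-level and industry-level estimates relate, the arithmetic can be worked through directly. The sketch below uses only the two figures quoted in the text and assumes the benefit scales linearly per well; the implied well count is a back-calculation under that assumption, not a number given in the source.

```python
# Figures quoted in the text (annualized cash flows, in US dollars).
PROJECT_WELLS = 100
PROJECT_CASH_FLOW_USD = 20_000_000        # $20 million for a 100-well project
INDUSTRY_CASH_FLOW_USD = 20_000_000_000   # approximately $20 billion industry-wide

# Per-well benefit implied by the project estimate.
per_well_usd = PROJECT_CASH_FLOW_USD // PROJECT_WELLS

# Number of wells the industry figure would imply IF the per-well benefit
# scaled linearly (an assumption made here for illustration only).
implied_wells = INDUSTRY_CASH_FLOW_USD // per_well_usd

print(f"Per-well benefit: ${per_well_usd:,} per year")
print(f"Implied wells at industry level: {implied_wells:,}")
```

Under these assumptions the project estimate works out to $200,000 per well per year, and the industry figure corresponds to roughly 100,000 wells benefiting at that rate.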

The rate of value creation would likely increase exponentially when the analysis extends to a reservoir level. An operator in Kazakhstan, for example, was facing poor pump pressure and production deferment in several mature gas condensate wells. Apart from installing new electrical submersible pumps (ESPs), the operator used real-time analytics to make proactive adjustments to ESP trips and modifications to the motor amps to better suit the changing reservoir condition encountered for each well. This reservoir-level intelligence reduced downtime by an additional 27 percent, over and above the benefits from new ESPs.39

Scaling these solutions and sustaining benefits across the fields would likely require modeling and assimilation of the entire well life cycle data to develop a dynamic tolerance- and volatility-based predictive analytics approach. A Middle Eastern operator is applying this predictive approach across its 1,000-odd wells, without having to invest in new modeling licenses and engineering analysis, and is expecting to save millions of dollars in technology and time costs.40

Food for thought: The greatest potential in sensorizing and analyzing producing wells exists in the Middle East as 35 percent of its O&G production comes from legacy fields that have been producing for more than five decades, followed by North Africa.41

Keep the digital wheel turning: A long-term goal for companies

The majority of digital solutions currently in the market are aimed at reducing the industry’s operating costs, which were about $2.3 trillion in 2016. Undeniably, digital has lowered, and will continue to lower, the industry’s operating costs, but there is a much bigger category of $3.4 trillion in net property, plant, and equipment (the productive capital), which is nearly untouched by existing digital solutions.42 Remember, there is an annual addition of about $500 billion in capex to this already sizeable figure.43

Even a 1 percent gain in capital productivity from this figure—by reducing future opportunity cost through intelligent robots that also ensure safety of assets and people, lowering the replacement cost for worn-out producing assets through on-demand rapid printing, or extending the economic life of offshore assets by regularly tracking their structural integrity—could mean savings of about $40 billion.44 (To put this saving into perspective, listed pure-play upstream, integrated, and oilfield services companies worldwide reported a cumulative net loss of about $35 billion in 2016.45) And when digital efforts start optimizing both operating and capital costs, O&G companies could reverse the falling trend of their return on capital employed (ROCE) even in a lower-for-longer oil price environment.
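The savings estimate can be checked with a quick calculation. The sketch below assumes that "this figure" means net property, plant, and equipment plus one year of capex; the source does not show its own working, so that reading is an assumption, and the variable names are illustrative.

```python
# Figures quoted in the text, in billions of US dollars.
NET_PPE_BN = 3400            # $3.4 trillion net property, plant, and equipment
ANNUAL_CAPEX_BN = 500        # about $500 billion added each year
NET_LOSS_2016_BN = 35        # cumulative net loss reported for 2016
GAIN_PERCENT = 1             # 1 percent gain in capital productivity

# Assumed capital base: existing productive capital plus one year of capex.
capital_base_bn = NET_PPE_BN + ANNUAL_CAPEX_BN

savings_bn = capital_base_bn * GAIN_PERCENT / 100
savings_ppe_only_bn = NET_PPE_BN * GAIN_PERCENT / 100

print(f"1% of PP&E plus capex: ${savings_bn:.0f} billion")
print(f"1% of PP&E alone:      ${savings_ppe_only_bn:.0f} billion")
print(f"Covers the 2016 net loss: {savings_bn >= NET_LOSS_2016_BN}")
```

On this reading, a 1 percent gain yields about $39 billion, which is in line with the "about $40 billion" quoted and slightly more than the $35 billion net loss; applied to net property, plant, and equipment alone, the same gain would be about $34 billion.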

An O&G company could optimize its physical operating environment and adopt new capital models in three stages—from having augmented robots that go beyond their typical use of surveillance and inspection; to crafting and manufacturing specific parts that have a longer time-to-market cycle and are limited by their custom design; to creating a living model of the physical asset, or a virtual clone (technically referred to as a digital twin) that combines physics-based models and data-driven analytics.

Although some use cases have started to flow in, the industry has only scratched the surface in influencing a big change in its physical assets. Table 1 highlights some aspiring use cases for the industry to build upon to scale up its digital solutions across the three stages. Details on specific areas or operations where we foresee significant capital efficiency potential are also identified.

As apparent in these use cases, this is only the start of a journey. How can the industry move quickly from piloting proof-of-concepts to scaling digital solutions at a company level, especially when it has a large asset base of legacy assets and its operations are dependent on multi-vendor control systems? Rather than limiting itself to finding solutions within, the industry can study digitally leading capital-intensive industries and draw lessons from their journeys and breakthroughs.

Renewables, especially wind energy, offer a lesson on scaling a digital solution. From following a typical asset-based approach of building, optimizing, and maintaining a perfect turbine, GE has progressed toward building a predictive simulation (that is, a digital twin) for every turbine in the wind farm—without discounting the unique requirements of each—and ensuring that each turbine in the farm operates at its peak performance level.51

Manufacturing, including automotive, has many simple, yet telling, examples of bringing smart technology to old, but still viable, assets. For instance, in 2010, Harley-Davidson retrofitted hundreds of working machines with sensors, used wireless communication to collect data, and deployed a software system to successfully look for early signs of mechanical problems. Contrary to other stand-alone solutions, sensorization of complete plants or operations is already happening due to the falling cost of sensors. The key, however, is deciding which sensors to use and for what.52

Aerospace and defense continues to offer leading practices in the areas of integrating people, processes, tools, materials, environments, and data with digital twins. Lockheed Martin is combining the idea of a digitally connected product and manufacturing processes and information with the notion of digital twins. Using next-gen technology enablers, the company is creating a digital twin of every product by integrating all the four ecosystem hubs—engineering, manufacturing, test and check-out, and sustainment—through a common data language and open system architecture.53 (Figure 5 provides key digital solutions that could help an offshore-focused company to transform its core operations.)

Embracing digital for good

Once the first loop of the DOT framework is completed at an asset or operation level, companies should consider mechanizing and augmenting more and more brownfield assets and expanding their digital coverage to the company level and, ultimately, the ecosystem level. Every completed loop could throw open new operating, capital, and business models for a company.

What would keep this digital wheel moving at the right pace and in the right direction? Adopting digital technologies alone is not enough. To be digital and lead the digital disruption, an upstream company should also consider exhibiting and embracing the following digital behaviors:

- Promoting cross-discipline, cross-company workflows: Democratizing information across the organization by investing in secure integrated platforms and morphing new team structures of geo and data scientists

- Endorsing standardization while maintaining a competitive edge: Bringing together suppliers, partners, and technology providers to develop open platform solutions in areas with high value creation potential and minimal competitive edge

- Implementing systemic changes in workforces and cultivating a digital culture: Rebranding the industry by placing a premium on attracting digital talent and bringing a fundamental shift in the corporate and leadership mind-set to spur a forward-looking digital culture54

- Maintaining velocity between digital and legacy: Avoiding the suboptimization of digital investments by not holding on to or delaying the modernization of legacy assets, structures, and decision-making

- Generating momentum from experiments to drive scale: Studying digitally advanced industries and learning how they have scaled solutions, integrated systems, and changed their business models

- Taking a longer view on digital strategy: Securing the board’s commitment by clearly articulating the long-term vision and benefits of being digital, which should not be limited to reducing operational costs—about 30 percent of digitally mature organizations have a planning horizon of five years or more.55

It’s time for action. While going digital has mostly become the norm now, it perhaps makes more sense for O&G companies to seize the opportunity and scale up the impact, especially in today’s lower-for-longer environment that requires new operating and capital cost models. And with a comprehensive road map in hand, the journey may not be so cumbersome after all.

Appendix

The mapping of each upstream operation on our Digital Operations Transformation (DOT) model is based on extensive secondary research on the process flows and study of latest solutions and technologies provided by major oilfield services, automation, and software firms.

Further, the near-term digital leap is defined on the basis of objectives that most of the companies are trying to achieve from their respective operations. These business objectives were established by the detailed examination of recent SEC filings and corporate presentations of various US and global firms. Further, they were aligned with the stages in the DOT model (see table 2) by developing and analyzing an inventory of research on new digital solutions that are being implemented or planned for various upstream operations.
