AWS re:Invent 2020 – Machine Learning Keynote – Deloitte On Cloud Blog

A blog post by Chida Sadayappan, lead specialist, Cloud, Data, & Machine Learning; and co-authored by Sudi Bhattacharya, managing director, Cloud Machine Learning; Samir Sirohi, senior consultant; and Ishita Vyas, Cloud Strategy consultant. All professionals are from Deloitte Consulting LLP.

Swami Sivasubramanian opened this year’s Machine Learning keynote by highlighting how quickly customers are adopting ML, with over 100,000 customers using AWS’s Artificial Intelligence/Machine Learning (AI/ML) services. He described how ML has moved from a niche area to the core of many businesses, citing success stories from AWS customers: Domino’s using ML to meet its goal of pizza delivery in 10 minutes or less, Roche accelerating medical experiences, BMW processing 7PB of data with SageMaker, Nike using ML for product recommendations, and Formula 1 analyzing over 550M data points on car design and simulations to speed up design and development.

The keynote stressed AWS’s broad and deep portfolio of AI/ML offerings, which AWS continues to expand. For example, AWS launched 250+ features in the past year to broaden its AI/ML offerings.

Five key tenets of AI/ML were mentioned in the keynote:

- Freedom to invent

- Provide the shortest path to success

- Good ideas can come from anywhere in the organization

- Solve real business problems, end-to-end

- Learn continuously

As we’ll see in the following sections, AWS has developed its AI/ML services and features in alignment with these five tenets.

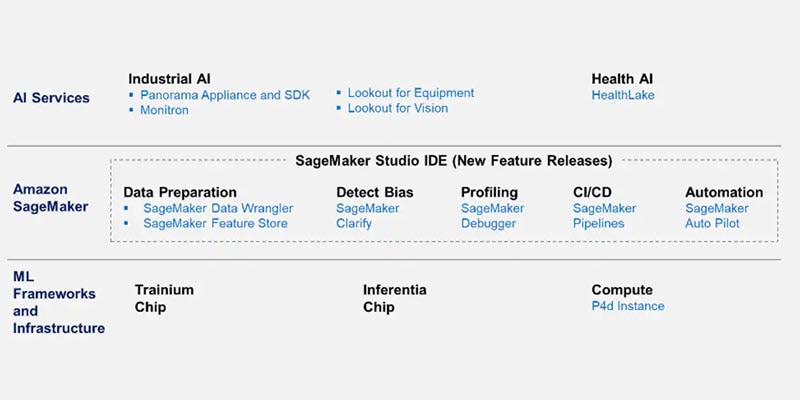

A quick snapshot of the newly announced services across the three layers of AI/ML:

ML frameworks and infrastructure

To give builders the “freedom to invent,” optimized frameworks and powerful, specialized infrastructure are essential: together they speed up model training and the deployment of ML workloads.

There is no single standardized framework for ML, but the three main ones in use are TensorFlow, PyTorch, and MXNet. AWS supports all the major deep learning frameworks and optimizes them to run on AWS, giving customers the choice of their best-fit framework. Referencing a Nucleus Research study, Swami claimed that 92% of cloud-based TensorFlow and 91% of cloud-based PyTorch workloads run on AWS!

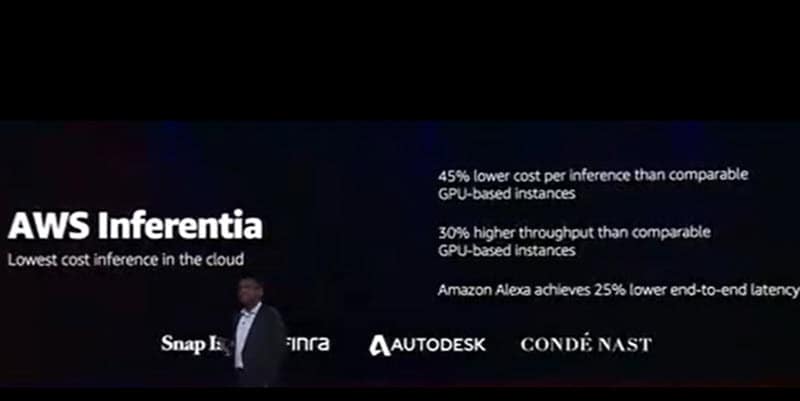

In addition to optimized frameworks, the second key part of firm foundations is giving builders specialized, powerful infrastructure to train and run their ML workloads. Swami discussed the different types of optimized instances available on AWS to meet the need for high compute power, dedicated GPUs, and network bandwidth during training and inference. AWS has launched the new P4d instance type, which provides the highest performance for ML training in the cloud.

Referring to Andy Jassy’s keynote, Swami also mentioned Inferentia, a chip designed specifically for deep learning inference workloads, and Trainium, a new chip designed specifically for training machine learning models.

Advancements in Amazon SageMaker

Amazon SageMaker, introduced in 2017, is Amazon’s flagship product for AI/ML. By continuously reinventing its services, AWS has built a portfolio that caters to every step in the ML life cycle.

Swami announced the following new services and features under the Amazon SageMaker umbrella:

Amazon SageMaker now supports a new data parallelism library that makes it easier and 40% faster to train models on data sets that may be as large as hundreds or thousands of gigabytes.
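To make the idea of data parallelism concrete, here is a minimal pure-Python sketch. It is not the SageMaker library or its API; the function names, the toy model `y = w * x`, and the learning rate are all assumptions chosen for illustration. Each simulated worker computes a gradient on its own shard of the data, and the gradients are averaged before a single shared weight update.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair


def gradient(w: float, shard: List[Sample]) -> float:
    """Mean-squared-error gradient for the toy model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)


def data_parallel_step(w: float, shards: List[List[Sample]], lr: float) -> float:
    """One training step: every worker computes a local gradient, then they are averaged."""
    # In a real cluster each shard lives on a different device; here we loop.
    local_grads = [gradient(w, shard) for shard in shards]
    # Stand-in for an all-reduce: with equal-sized shards the average of the
    # local gradients equals the gradient over the full batch.
    avg_grad = sum(local_grads) / len(local_grads)
    return w - lr * avg_grad


def train(data: List[Sample], num_workers: int = 4, lr: float = 0.01, steps: int = 200) -> float:
    """Split the data across workers and run synchronous data-parallel SGD."""
    shards = [data[i::num_workers] for i in range(num_workers)]
    w = 0.0
    for _ in range(steps):
        w = data_parallel_step(w, shards, lr)
    return w


# Toy data set generated from y = 3x (hypothetical values).
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w = train(data)
```

Because the shards are equal in size, the sharded update matches what a single machine would compute on the whole batch; the gain in a real system comes from the workers running at the same time.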

NFL case study

Jennifer Langton from the NFL discussed the innovations that the league has brought to the game for fans and players using machine learning. ML is used to not only generate real-time, on-screen game insights for viewers, but behind the scenes, there’s a huge amount of additional analysis going on around player safety. The NFL and AWS conducted a biomechanical analysis to review gameplay data to improve helmet safety and reduce concussions. Now, the NFL is using ML to predict and prevent injuries for NFL players.

Amazon SageMaker Data Wrangler is a new visual interface for Amazon SageMaker that helps prepare data for machine learning applications. It simplifies data preparation and feature engineering, and builders can complete each step of the data preparation workflow, including data selection, cleansing, exploration, and visualization, from a single visual interface.

Amazon SageMaker Feature Store: Features are the attributes or properties that models use during training and inference to make predictions. Amazon SageMaker Feature Store is a fully managed, purpose-built repository to store, update, retrieve, and share ML features.
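The core idea of a feature repository can be sketched in a few lines of Python. This is a toy in-memory stand-in, not the SageMaker Feature Store API; the class name, method names, and the "latest event time wins" rule are assumptions made for illustration.

```python
from typing import Dict, Optional


class InMemoryFeatureGroup:
    """Toy feature repository keyed by entity ID (hypothetical, for illustration only)."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._records: Dict[str, Dict] = {}

    def put_record(self, entity_id: str, features: Dict[str, float], event_time: float) -> None:
        """Store features for an entity, keeping only the most recent event."""
        current = self._records.get(entity_id)
        if current is None or event_time >= current["event_time"]:
            self._records[entity_id] = {"event_time": event_time, "features": dict(features)}

    def get_record(self, entity_id: str) -> Optional[Dict[str, float]]:
        """Return the latest features for an entity, or None if it is unknown."""
        record = self._records.get(entity_id)
        return dict(record["features"]) if record else None


# Training and inference code read the same values from one shared place.
customers = InMemoryFeatureGroup("customers")
customers.put_record("c-1", {"orders_30d": 4.0, "avg_basket": 27.5}, event_time=100.0)
customers.put_record("c-1", {"orders_30d": 5.0, "avg_basket": 26.0}, event_time=200.0)
customers.put_record("c-1", {"orders_30d": 1.0, "avg_basket": 9.0}, event_time=50.0)  # stale, ignored
latest = customers.get_record("c-1")
```

Sharing one definition of each feature between training and inference is the point of such a store: it avoids the two code paths drifting apart.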

Amazon SageMaker Clarify can be used during the training and validation phases of the machine learning life cycle. It helps detect bias in ML models across the end-to-end machine learning workflow and increases transparency by helping explain model behavior to stakeholders and customers. It also monitors models continuously once they are in production, detects model drift, and notifies stakeholders when real-time data introduces bias so that models can be retrained on new data sets.
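As a rough illustration of what detecting bias in training data can mean, the sketch below computes the gap in positive-label rates between two groups. It is one simple metric of the kind bias-detection tools report, not Clarify’s implementation; the function names and the sample data are hypothetical.

```python
from typing import List, Sequence


def positive_rate(labels: Sequence[int]) -> float:
    """Fraction of labels equal to 1."""
    return sum(labels) / len(labels)


def label_proportion_gap(labels: List[int], groups: List[str], group_a: str, group_b: str) -> float:
    """Positive-label rate of group_a minus that of group_b.

    A value near 0 suggests the two groups receive positive labels at similar
    rates; a large absolute value flags a possible imbalance worth reviewing.
    """
    labels_a = [y for y, g in zip(labels, groups) if g == group_a]
    labels_b = [y for y, g in zip(labels, groups) if g == group_b]
    return positive_rate(labels_a) - positive_rate(labels_b)


# Hypothetical loan-approval labels (1 = approved) for two groups.
labels = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = label_proportion_gap(labels, groups, "a", "b")
```

Here group "a" is approved 75% of the time and group "b" 25% of the time, so the gap is 0.5. A metric like this does not prove unfairness on its own, but it tells a team where to look.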

Deep Profiling for SageMaker Debugger profiles and debugs training jobs in real time to improve the performance of ML models on compute resource utilization and model predictions.

Amazon SageMaker Pipelines can be used to apply DevOps practices to ML projects. SageMaker Pipelines helps create, automate, and manage end-to-end ML workflows at scale.

SageMaker Pipelines helps to automate different steps of the ML workflow, including data loading, data transformation, training and tuning, and deployment. With SageMaker Pipelines, we can build dozens of ML models a week and manage massive volumes of data, thousands of training experiments, and hundreds of different model versions. Workflows can be shared and reused to recreate or optimize models, helping the team scale ML throughout the organization.
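The notion of an ML workflow as a chain of named, reusable steps can be sketched as follows. This is a toy runner, not the SageMaker Pipelines SDK; the step names, the `run_pipeline` helper, and the trivial "model" (a mean of scaled values) are assumptions for illustration.

```python
from typing import Callable, Dict, List, Tuple

Step = Tuple[str, Callable[[Dict], Dict]]


def run_pipeline(steps: List[Step], context: Dict) -> Dict:
    """Run each step in order, passing the output of one as input to the next."""
    for name, fn in steps:
        context = fn(context)
        # Record which steps ran so a run can be audited or reproduced.
        context.setdefault("completed", []).append(name)
    return context


def load(ctx: Dict) -> Dict:
    """Data loading step (hard-coded sample values)."""
    return {**ctx, "raw": [1.0, 2.0, 3.0, 4.0]}


def transform(ctx: Dict) -> Dict:
    """Data transformation step: scale values into the range (0, 1]."""
    peak = max(ctx["raw"])
    return {**ctx, "scaled": [v / peak for v in ctx["raw"]]}


def train(ctx: Dict) -> Dict:
    """Training step: the 'model' is simply the mean of the scaled data."""
    return {**ctx, "model_mean": sum(ctx["scaled"]) / len(ctx["scaled"])}


steps: List[Step] = [("load", load), ("transform", transform), ("train", train)]
result = run_pipeline(steps, {})
```

Because the workflow is just a list of steps, it can be shared, rerun on new data, or extended with tuning and deployment steps without rewriting the earlier ones.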

Amazon SageMaker Edge Manager enables developers to optimize, secure, monitor, and maintain machine learning models on fleets of edge devices.

SageMaker Edge Manager compiles a trained model into an executable, discovering and applying performance optimizations that can make ML models run up to 25x faster on the target hardware. It lets users optimize and package trained models from frameworks such as DarkNet, Keras, MXNet, PyTorch, TensorFlow, TensorFlow-Lite, ONNX, and XGBoost for inference on Android, iOS, Linux, and Windows-based machines.

Bringing ML to everyone

Traditionally, there are two main methods of ML model development:

- Manually built models–These are models built from scratch, with each step executed manually, and require deep expertise.

- AutoML-created models–In this method, the builder has little to no idea of how the model was created or why the model behaves the way it does and has little control over the process.

Aligned with AWS’s third tenet, “Good ideas can come from anywhere in the organization,” AWS aims to bring ML to other users in the organization, in addition to data scientists. As part of this effort to bring ML to non-data scientists while also giving greater control over their ML models, AWS launched a new service, Amazon SageMaker Autopilot. It automates various time-consuming and complex manual steps involved in model training, evaluation, and deployment to production.

The next set of users that AWS wants to bring ML services to is database developers and analysts. To enable this, AWS is integrating ML with databases, data warehouses, data lakes, and business intelligence (BI) tools. AWS announced the following services:

- Amazon Aurora ML–For Aurora-powered apps, the ML service can be invoked through a simple SQL query. Aurora then passes the relevant data to pretrained models, and the results can be used in applications.

- Amazon Athena ML–Lets database developers and analysts use Athena to write SQL statements that run ML inference using Amazon SageMaker.

- Amazon Redshift ML–This enables ML use cases for data warehousing. Developers can use SQL to make machine learning predictions from their data warehouse.

- Amazon Neptune ML–Enables easy, fast, and accurate predictions using ML for graph applications. AWS also claims that it improves accuracy by more than 50% over traditional ML techniques by integrating with Amazon SageMaker and the Deep Graph Library.
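The pattern these services share, calling a model from inside a SQL query, can be illustrated locally with Python’s built-in `sqlite3` module. This is only an analogy: SQLite stands in for the database, and `predict_churn` is a hypothetical hand-written scoring function rather than a SageMaker model or any AWS SQL syntax.

```python
import sqlite3


def predict_churn(monthly_spend: float, support_tickets: int) -> float:
    """Hypothetical stand-in for a trained model: returns a churn score in [0, 1]."""
    score = 0.1 + 0.15 * support_tickets - 0.002 * monthly_spend
    return max(0.0, min(1.0, score))


conn = sqlite3.connect(":memory:")
# Register the Python function so SQL statements can call it by name.
conn.create_function("predict_churn", 2, predict_churn)

conn.execute(
    "CREATE TABLE customers (id INTEGER, monthly_spend REAL, support_tickets INTEGER)"
)
conn.executemany(
    "INSERT INTO customers VALUES (?, ?, ?)",
    [(1, 50.0, 0), (2, 20.0, 5)],
)

# The analyst writes plain SQL; the prediction appears as one more column.
rows = conn.execute(
    "SELECT id, predict_churn(monthly_spend, support_tickets) AS churn_score "
    "FROM customers ORDER BY id"
).fetchall()
conn.close()
```

The analyst never leaves SQL: scoring happens row by row as part of the query, which is the experience the database integrations above aim to provide with real models.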

The next set of users that AWS is bringing ML services to is business users. AWS announced the launch of a new service, Amazon QuickSight Q, a machine learning–powered service that lets business users ask questions about their data in natural language and get answers in seconds. It uses natural language query (NLQ) to understand users’ questions and runs ML services in the background to answer them.

For example, in response to questions such as “What is my year-to-date, year-over-year sales growth?” or “What are the best-selling categories in California this year?” (picture shown below), QuickSight Q automatically parses the questions to understand the intent, retrieves the corresponding data, and returns the answer in the form of a number, chart, or table in QuickSight.

ML for anomaly detection

Swami started by mentioning the existing AWS ML services for business problems: Contact Lens for Amazon Connect for contact centers, Amazon Kendra for intelligent search, and Amazon CodeGuru and Amazon DevOps Guru for code and DevOps. These services can be deployed quickly to solve real-life business problems and don’t need a lot of ML expertise to deploy.

He argued that problems that are a good fit for ML have three common characteristics: rich data is available, the business impact is high, and the problem has been difficult to solve to date. This makes anomaly detection a very good use case for ML. Some of the current challenges around anomaly detection are:

- High latency of detection

- Failed detections

- High rate of false positives

- Lack of actionable results

- Adaptability to change in data streams

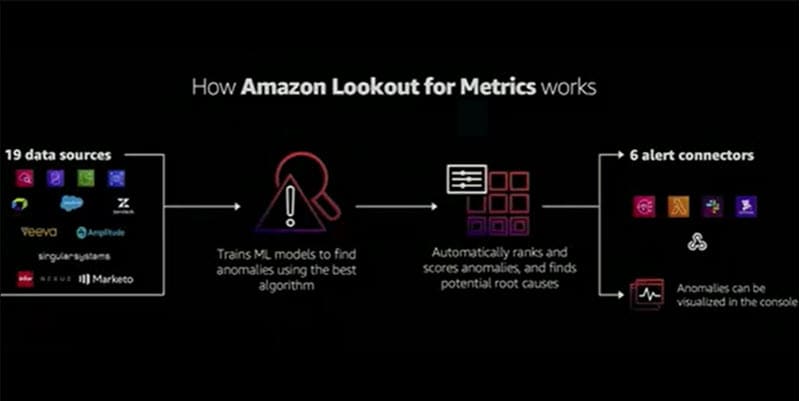

Aligned with tenet 4, “Solve real business problems, end-to-end,” AWS is launching a new service, Amazon Lookout for Metrics, an anomaly detection service for real-life metrics with root cause analysis. The service can integrate with 19 data sources (both AWS and external) for time series data, which is then processed through ML models to find anomalies, rank them, and identify potential root causes.

It can also generate notifications whenever anomalies are detected, and customers can set up AWS Lambda functions to take automated, predefined actions in response. For example, if sales of a certain product suddenly spike because of an incorrect listing price, Amazon Lookout for Metrics can detect the anomaly, and a Lambda function can automatically suspend the listing temporarily while teams review the problem.
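The detect-then-act flow described above can be sketched with a deliberately simple detector. The rolling z-score used here is a swapped-in textbook technique, not the models behind Amazon Lookout for Metrics, and the `suspend_listing` callback is a hypothetical stand-in for a Lambda function; the window size, threshold, and sales figures are assumptions.

```python
from statistics import mean, stdev
from typing import Callable, List, Optional


def detect_anomalies(
    values: List[float],
    window: int = 5,
    threshold: float = 3.0,
    on_anomaly: Optional[Callable[[int, float], None]] = None,
) -> List[int]:
    """Flag points that deviate from the preceding window by more than `threshold` standard deviations."""
    anomalies = []
    for i in range(window, len(values)):
        history = values[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            continue  # flat history: z-score is undefined, so skip this point
        z = abs(values[i] - mu) / sigma
        if z > threshold:
            anomalies.append(i)
            if on_anomaly is not None:
                on_anomaly(i, values[i])  # automated, predefined action
    return anomalies


suspended: List[int] = []


def suspend_listing(index: int, value: float) -> None:
    """Hypothetical action: record that the listing was suspended for review."""
    suspended.append(index)


# Hourly unit sales with one sudden spike (made-up numbers).
sales = [100.0, 102.0, 98.0, 101.0, 99.0, 100.0, 400.0, 101.0]
anomalies = detect_anomalies(sales, on_anomaly=suspend_listing)
```

Only the spike at index 6 is flagged. A detector this simple also shows why the challenges listed earlier are hard: the spike inflates the window’s standard deviation afterward, so nearby anomalies could be missed.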

Improving industrial processes with Machine Learning

Dr. Matt Wood then spoke about applications of ML for solving industrial problems. Industrial problems are often monolithic, with a lot of different systems and components interconnected. He then talked about Amazon Monitron, an end-to-end solution for industrial equipment maintenance.

Amazon Monitron helps industries conduct preventive maintenance of equipment and requires no ML expertise to implement. It includes Monitron sensors that detect and report temperature and vibrations, a network gateway device, and a mobile app. The sensors capture vibration and temperature data and push it to the AWS Cloud, where it is analyzed using ML, and the Monitron app then alerts technicians via push notifications.

Dr. Matt Wood also covered Amazon Lookout for Equipment, an anomaly detection service for industrial machinery. It can integrate with up to 300 sensors per industrial machine, integrates with existing monitoring software, and enables automatic actions when anomalies are detected.

Another key service announced for solving industrial problems was Amazon Lookout for Vision, a computer vision service to detect product defects, automating quality inspection on production lines. It can help manufacturing organizations improve product quality and reduce associated costs.

All of these services do their ML processing in the cloud, but what about computer vision at the edge, when the required latency is very low or the systems are not all connected to the internet? To address this, AWS launched the AWS Panorama Appliance, which adds computer vision capabilities to existing smart cameras. It can integrate with 20 concurrent camera streams, comes with preconfigured industry-specific models, and does all ML model processing at the edge. Customers can also build their own models in addition to the preconfigured models that come with the device.

To extend “ML at the edge” capabilities, AWS also launched the AWS Panorama SDK for camera manufacturers, which provides an SDK and APIs for building cameras that run computer vision models at the edge.

Dr. Matt Wood then explained how all of these AWS services work together in an industrial setting, using the example of a pencil manufacturing setup. These services can help organizations achieve a high level of automation and control for their manufacturing processes.

Reinventing health care and life sciences with Machine Learning

Dr. Matt Wood started with a brief overview of how many health care and life sciences organizations are using AWS services to modernize and reinvent the core of their business. He talked about how Moderna used the AWS platform to speed up the development of its COVID-19 vaccine candidate.

A major problem in the health care and life sciences industries is that clinical data is incredibly complex. It’s often spread across various systems, the majority of the information is in unstructured data (medical records), and the processes for extracting key information are manual, so the process of gathering and preparing this data for any kind of analysis can take weeks or even months.

AWS announced the launch of Amazon HealthLake, which can help health care and life sciences organizations store, transform, and analyze health and life sciences data at a large scale in a very short time.

The service can integrate with hundreds of different data sources, use ML services to extract meaningful information from the data, and have all the information in one place, which will help health care and life sciences professionals understand the complete picture. This would enable quicker diagnosis and provide better care for patients.

A real-life example of how Philips is using SageMaker and other AWS services to power the Philips HealthSuite platform to improve diagnostic systems with the help of AI and ML was also discussed.

Machine Learning University

The last announcement of the keynote aligned with AWS’s fifth tenet, “Learn continuously.” To educate the next generation of machine learning developers, AWS has a free Machine Learning University, where developers can learn and be certified. In addition, AWS provides a lot of content on MOOC platforms and runs ML competitions such as AWS DeepRacer.

This blog was originally published on LinkedIn.
