Future of tech: Artificial intelligence (AI)

Board Practices Quarterly, August 2023

By Natalie Cooper, Bob Lamm, and Randi Val Morrison

Artificial intelligence (AI), the use of technology to execute or simulate processes that would otherwise require human intelligence, is not new. But rapidly expanding technologies and evolving consumer digital preferences and expectations have generated intense interest in leveraging AI to help achieve efficiencies, increase competitive advantage, and enhance engagement with customers/clients and other stakeholders.

As companies continue to explore and invest in AI, they also are tasked with considering numerous business implications, such as ethics, compliance, and regulatory processes; risks (e.g., operational and reputational) and risk appetite; equity; governance; and the role of the board. This Board Practices Quarterly presents findings from a survey of members of the Society for Corporate Governance that focused on aspects of AI, including where in the organization AI resides, use policies/framework, risk mitigation measures, education and training, and board oversight.

Select findings

Respondents, primarily corporate secretaries, in-house counsel, and other in-house governance professionals, represent 97 public companies of varying sizes and industries.1 The findings pertain to these companies, and, where applicable, commentary has been included to highlight differences among respondent demographics. The actual number of responses for each question is provided.

Where does primary oversight for AI lie within your company’s board? (75 responses)

The most frequently cited response, that neither the board nor a board committee has express responsibility for AI, was reported by 25% of large-caps and 38% of mid-caps. The next most common response, that AI is not yet being discussed at the board level, was reported by 19% of large-caps and 22% of mid-caps.

For companies that have assigned oversight of AI to the full board or a committee, responses by market cap were:

- Large-caps—19% audit committee, 13% technology committee, and 13% full board

- Mid-caps—11% audit committee, 5% risk committee, and 5% full board

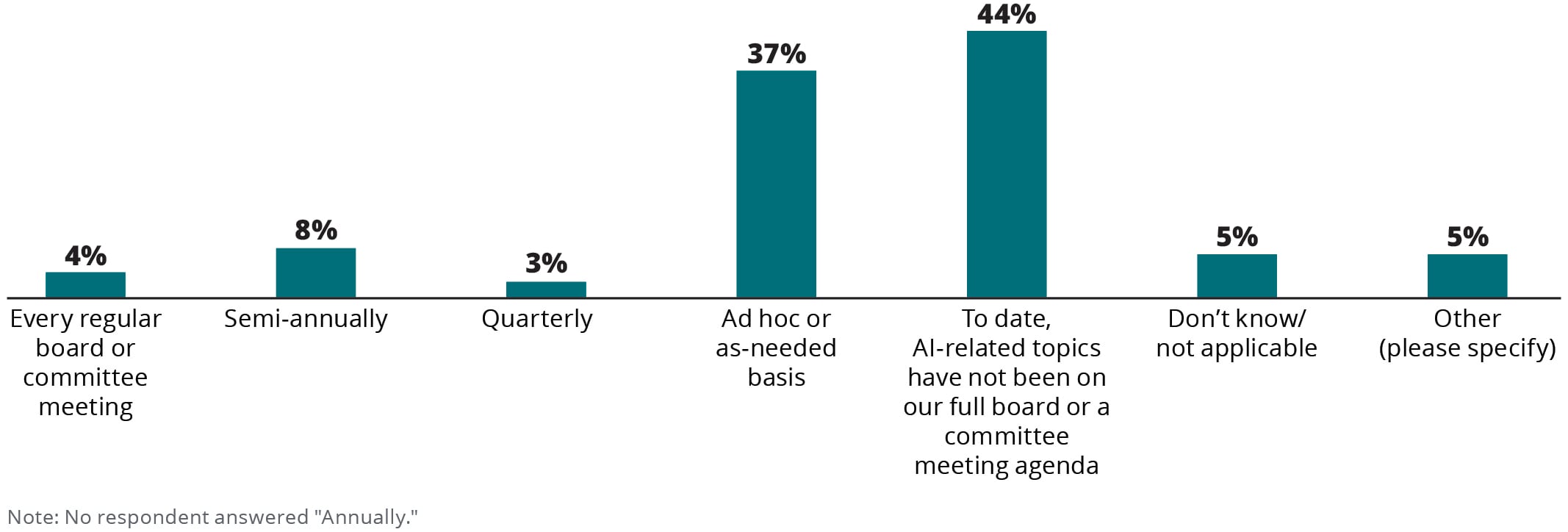

Describe the frequency of AI-related topics on the meeting agendas for the full board or board committee(s) with oversight responsibility. [Select all that apply] (73 responses)

Most common responses:

- Ad hoc or as-needed basis, reported by 52% of large-caps and 31% of mid-caps.

- To date, AI-related topics have not been on our full board or a committee meeting agenda, reported by 35% of large-caps and 53% of mid-caps.

Supplemental comments provided by some respondents were:

- "AI is discussed in connection with particular applications (e.g., in engaging with consumers)."

- "Frequency is ad hoc but is increasing in interest and relating to multiple presentations/discussions."

- "AI has been discussed as part of larger cybersecurity discussions."

- "AI was covered by the Technology Committee in Q1 2023."

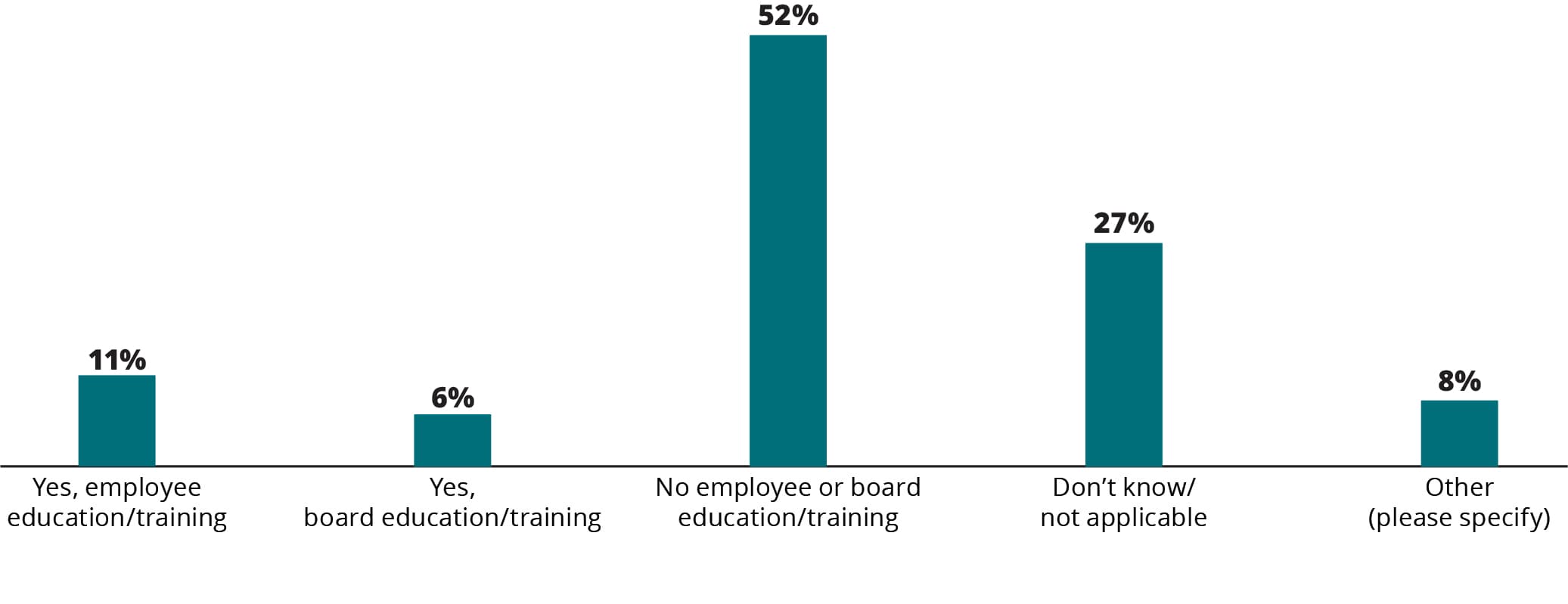

Does your company provide training/education on AI? [Select all that apply] (63 responses)

Some respondents provided supplemental comments about their company’s training/education on AI:

- "It is under consideration."

- "Presentations are given but no formal training."

- "Just recently launched an external AI service."

- "Not yet, but about to be launched."

- "None yet, but in development."

Endnotes

1 Public company respondent market capitalization as of December 2022: 44% large-cap (which includes mega- and large-cap) (> $10 billion); 47% mid-cap ($700 million to $10 billion); and 8% small-cap (which includes small-, micro-, and nano-cap) (< $700 million). Public company respondent industry breakdown: 33% consumer; 25% financial services; 24% energy, resources, and industrials; 12% technology, media, and telecommunications; and 6% life sciences and health care.

Small-cap and private company findings have been omitted from this report and the accompanying demographics report due to limited respondent population.

Throughout this report, percentages may not total 100 due to rounding and/or a question that allowed respondents to select multiple choices.
